Compare commits

297 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

8ca920a114 | ||

|

|

5f3d449a66 | ||

|

|

13735e7dfb | ||

|

|

a38d5c3a25 | ||

|

|

5bae637c67 | ||

|

|

12e488ba80 | ||

|

|

ad30c63c69 | ||

|

|

a116eff7df | ||

|

|

01bc355dde | ||

|

|

8e05f3c360 | ||

|

|

fde988dd4e | ||

|

|

91401ad14f | ||

|

|

280194647c | ||

|

|

2e0a542f33 | ||

|

|

b988694da7 | ||

|

|

512c4d0f73 | ||

|

|

5525fb1470 | ||

|

|

4db735e026 | ||

|

|

c8c79c39d1 | ||

|

|

bcfb76d8ca | ||

|

|

2d9aaf8fc9 | ||

|

|

8a3905c09a | ||

|

|

54cd8a46fa | ||

|

|

1b83bf261a | ||

|

|

2a7d22dab1 | ||

|

|

f7494b0cfb | ||

|

|

9ca91d59ec | ||

|

|

11feaa6e68 | ||

|

|

18d4b2304e | ||

|

|

2f45e9c33a | ||

|

|

f7df10cb66 | ||

|

|

46e9a2f5b2 | ||

|

|

69b8d2e0a1 | ||

|

|

0ddd2e9fea | ||

|

|

01c95f5bc4 | ||

|

|

e0bf44d82f | ||

|

|

f328e84ea7 | ||

|

|

c81f5015a1 | ||

|

|

e2b086e2f7 | ||

|

|

da632565d5 | ||

|

|

556b667cc0 | ||

|

|

82c9825da8 | ||

|

|

26b30f0dbe | ||

|

|

be3b69c65c | ||

|

|

07cab6949e | ||

|

|

18d58ce124 | ||

|

|

b8f8837a8f | ||

|

|

0c796c8cfc | ||

|

|

b14fbc29b7 | ||

|

|

6e29f97881 | ||

|

|

a164939161 | ||

|

|

09ab11ef01 | ||

|

|

ac34edec7f | ||

|

|

6dd8ffa037 | ||

|

|

eaed3f40a2 | ||

|

|

e48f39375e | ||

|

|

9b7b651ef9 | ||

|

|

b5623cb9c2 | ||

|

|

144d12b463 | ||

|

|

fa452f5518 | ||

|

|

a159d21d45 | ||

|

|

3a00bbf44d | ||

|

|

9f5e94fa8f | ||

|

|

87e1daa733 | ||

|

|

f5900179e0 | ||

|

|

51e162970e | ||

|

|

0b339ad0f6 | ||

|

|

60693d6a29 | ||

|

|

eea53a6e9e | ||

|

|

8a19181a38 | ||

|

|

94d835c7ae | ||

|

|

d9e25ad69f | ||

|

|

75244fbd8b | ||

|

|

5ce84edc3d | ||

|

|

1c683087f4 | ||

|

|

85a3b39cbc | ||

|

|

cc6c24f0c3 | ||

|

|

c733b6419c | ||

|

|

c853c5b60b | ||

|

|

053a08f5b7 | ||

|

|

f7227cd1c1 | ||

|

|

861e245062 | ||

|

|

8f0fc7db56 | ||

|

|

3dd06fa70e | ||

|

|

86a855e7bc | ||

|

|

b3110d4ad8 | ||

|

|

602004ad34 | ||

|

|

a8b4f0bb7e | ||

|

|

24cc8be085 | ||

|

|

a96d7aef8d | ||

|

|

cbe299583b | ||

|

|

68c70a362b | ||

|

|

a78c346371 | ||

|

|

102763b94d | ||

|

|

ad65765ba8 | ||

|

|

d04fd7cb87 | ||

|

|

b398cbb591 | ||

|

|

19b97e985c | ||

|

|

93bf74a320 | ||

|

|

7daae23bbb | ||

|

|

0d0a3f15cc | ||

|

|

04fbb38861 | ||

|

|

d666c6032b | ||

|

|

93e8660d69 | ||

|

|

e687cf02bb | ||

|

|

e858f1477a | ||

|

|

a2062ae9cc | ||

|

|

34112c79c7 | ||

|

|

b625b8a6d1 | ||

|

|

14a13d5768 | ||

|

|

7ce464ecda | ||

|

|

2c1f89383f | ||

|

|

e666c50f77 | ||

|

|

1b441752b0 | ||

|

|

e01897b24d | ||

|

|

6146d910b4 | ||

|

|

0063c171f3 | ||

|

|

bea3c29c1c | ||

|

|

5f543c2545 | ||

|

|

177b2c54d9 | ||

|

|

645e8e2f44 | ||

|

|

f2d0dda2ff | ||

|

|

3a449e7b46 | ||

|

|

18d2ecb7a7 | ||

|

|

bb3a93b419 | ||

|

|

1334f0e5ba | ||

|

|

8781416cfb | ||

|

|

a9819139b8 | ||

|

|

66e43c9d9b | ||

|

|

41e5bd5eb8 | ||

|

|

48fef0235b | ||

|

|

d435436525 | ||

|

|

cd7a9896dc | ||

|

|

bbcc6b07b6 | ||

|

|

646bcd81c0 | ||

|

|

dbf0dccc9d | ||

|

|

437de2be20 | ||

|

|

f739c61197 | ||

|

|

01d3c89ea4 | ||

|

|

d18218f21a | ||

|

|

c8470e77fd | ||

|

|

9ede7d7c6d | ||

|

|

a59c4436c8 | ||

|

|

068be2bfc4 | ||

|

|

94a5dc4fb7 | ||

|

|

9f288de951 | ||

|

|

3d5c3dcd31 | ||

|

|

0a4876a564 | ||

|

|

4f0558ae34 | ||

|

|

f03c9cf25f | ||

|

|

07797537d1 | ||

|

|

0c3a50cb07 | ||

|

|

c7dcff52a1 | ||

|

|

c6ef32958e | ||

|

|

7235e1067b | ||

|

|

0594290b92 | ||

|

|

d249a4c29a | ||

|

|

02ba37fab4 | ||

|

|

b5a6f8a425 | ||

|

|

1ad86d737c | ||

|

|

cfa3669f6f | ||

|

|

26d4c9f0ed | ||

|

|

3ddcf9f62e | ||

|

|

e734fce64f | ||

|

|

150beb578c | ||

|

|

db6fbe8366 | ||

|

|

46f52923c3 | ||

|

|

893be5cf43 | ||

|

|

384e4ce4d0 | ||

|

|

b8712e0b89 | ||

|

|

37dda4333d | ||

|

|

64826b9af7 | ||

|

|

47b0c35441 | ||

|

|

1dcda47013 | ||

|

|

1f81a1e5a8 | ||

|

|

35e92d2aef | ||

|

|

0d99e5549e | ||

|

|

fed1594ddc | ||

|

|

14b90bb36b | ||

|

|

f86b7f1f08 | ||

|

|

54355d5a7a | ||

|

|

ff7306349a | ||

|

|

77df56cddc | ||

|

|

97ae139de5 | ||

|

|

afd15ef2c5 | ||

|

|

6c73eae9f6 | ||

|

|

7078f47f72 | ||

|

|

d43954cc88 | ||

|

|

c87de93498 | ||

|

|

810843a5ab | ||

|

|

f7cbd2c803 | ||

|

|

faf1852012 | ||

|

|

43cfab5d4b | ||

|

|

627a20936d | ||

|

|

1d7f19ffaf | ||

|

|

d80565d780 | ||

|

|

d7ba88953d | ||

|

|

30e1c3171e | ||

|

|

1f058b16ac | ||

|

|

4a192f4057 | ||

|

|

0331bf47f7 | ||

|

|

2acdaa96b2 | ||

|

|

1d200d53ab | ||

|

|

df9e1f408e | ||

|

|

4a18696686 | ||

|

|

46b3b285f5 | ||

|

|

1d6aeab9dc | ||

|

|

ab110ba30b | ||

|

|

2f0fa4ee56 | ||

|

|

0005816c1d | ||

|

|

f70672e5a0 | ||

|

|

ee057071a5 | ||

|

|

4f26404002 | ||

|

|

df7652856a | ||

|

|

de755463e3 | ||

|

|

2fe98d9a2c | ||

|

|

2e42039607 | ||

|

|

71abd357a4 | ||

|

|

68228a4552 | ||

|

|

79851433f8 | ||

|

|

bd4de12e05 | ||

|

|

c0aa6aaba9 | ||

|

|

d7abe5f0d1 | ||

|

|

5e5e1e9651 | ||

|

|

f8388a0527 | ||

|

|

f8b764ef8f | ||

|

|

fcfaa5944e | ||

|

|

f89e89c1c9 | ||

|

|

a25965530c | ||

|

|

971124d0d7 | ||

|

|

d7dcc90008 | ||

|

|

df969fcfc6 | ||

|

|

c4042bbfd8 | ||

|

|

4112200b4c | ||

|

|

3f9a54e36f | ||

|

|

3ed4456135 | ||

|

|

e0df9ae47b | ||

|

|

87b2c3ed7d | ||

|

|

50ff7ef6bc | ||

|

|

c7a580ca8a | ||

|

|

eaae7624a7 | ||

|

|

fcd59de6fb | ||

|

|

1bbe127209 | ||

|

|

b868adc058 | ||

|

|

a24b78e8c3 | ||

|

|

c8025f1cff | ||

|

|

fe0860dbf0 | ||

|

|

02d5d641d1 | ||

|

|

a057bb6c5b | ||

|

|

c9e4ae7fa1 | ||

|

|

79a97b2bc4 | ||

|

|

ef53951a16 | ||

|

|

74f1a1c033 | ||

|

|

ce986cfc6d | ||

|

|

61cea2a784 | ||

|

|

8a13bd3c1e | ||

|

|

da68926e9c | ||

|

|

e0b7453883 | ||

|

|

91e2828a95 | ||

|

|

bcf6409536 | ||

|

|

d7d4f87620 | ||

|

|

b3e35a4cdd | ||

|

|

8764c37b03 | ||

|

|

d12a173f39 | ||

|

|

64fa939c19 | ||

|

|

9c8e7b2f08 | ||

|

|

abfd668523 | ||

|

|

ebacf383f5 | ||

|

|

eb25dc6bcb | ||

|

|

aecacde819 | ||

|

|

3ef22239eb | ||

|

|

719090cc8c | ||

|

|

dbb8374d89 | ||

|

|

4d875a8c00 | ||

|

|

30b6d66a2d | ||

|

|

9d89b6f4db | ||

|

|

d2928e54f7 | ||

|

|

49ba5c97f7 | ||

|

|

4054fac359 | ||

|

|

dfae1d9645 | ||

|

|

0f16a0dd1b | ||

|

|

cb05a8a2ae | ||

|

|

a51385173c | ||

|

|

4e18222a35 | ||

|

|

daabcf58a0 | ||

|

|

d0fd480bd6 | ||

|

|

1df345b5eb | ||

|

|

77868c798b | ||

|

|

f56748a941 | ||

|

|

29c5b1d804 | ||

|

|

34095a6c36 | ||

|

|

05b9b42b56 | ||

|

|

211ae342af | ||

|

|

5ae683e915 | ||

|

|

dc59fb39c7 | ||

|

|

49960774ee | ||

|

|

b718452618 |

5

.gitattributes

vendored

@@ -1,7 +1,12 @@

|

||||

* text=auto eol=lf

|

||||

|

||||

backend-python/rwkv_pip/** linguist-vendored

|

||||

backend-python/wkv_cuda_utils/** linguist-vendored

|

||||

backend-python/get-pip.py linguist-vendored

|

||||

backend-python/convert_model.py linguist-vendored

|

||||

backend-python/convert_safetensors.py linguist-vendored

|

||||

backend-python/convert_pytorch_to_ggml.py linguist-vendored

|

||||

backend-python/utils/midi.py linguist-vendored

|

||||

build/** linguist-vendored

|

||||

finetune/lora/** linguist-vendored

|

||||

finetune/json2binidx_tool/** linguist-vendored

|

||||

|

||||

9

.github/dependabot.yml

vendored

Normal file

@@ -0,0 +1,9 @@

|

||||

version: 2

|

||||

updates:

|

||||

- package-ecosystem: "github-actions"

|

||||

directory: "/"

|

||||

schedule:

|

||||

interval: "weekly"

|

||||

commit-message:

|

||||

prefix: "chore"

|

||||

include: "scope"

|

||||

46

.github/workflows/release.yml

vendored

@@ -11,7 +11,7 @@ env:

|

||||

|

||||

jobs:

|

||||

create-draft:

|

||||

runs-on: ubuntu-latest

|

||||

runs-on: ubuntu-22.04

|

||||

steps:

|

||||

- run: echo "VERSION=${GITHUB_REF_NAME#v}" >> $GITHUB_ENV

|

||||

- uses: actions/checkout@v3

|

||||

@@ -35,7 +35,7 @@ jobs:

|

||||

gh release create ${{github.ref_name}} -d -F CURRENT_CHANGE.md -t ${{github.ref_name}}

|

||||

|

||||

windows:

|

||||

runs-on: windows-latest

|

||||

runs-on: windows-2022

|

||||

needs: create-draft

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

@@ -52,15 +52,23 @@ jobs:

|

||||

with:

|

||||

args: install upx

|

||||

- run: |

|

||||

Start-BitsTransfer https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_windows_x86_64.exe ./backend-rust/webgpu_server.exe

|

||||

Start-BitsTransfer https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_windows_x86_64.exe ./backend-rust/web-rwkv-converter.exe

|

||||

Start-BitsTransfer https://github.com/josStorer/LibreHardwareMonitor.Console/releases/latest/download/LibreHardwareMonitor.Console.zip ./LibreHardwareMonitor.Console.zip

|

||||

Expand-Archive ./LibreHardwareMonitor.Console.zip -DestinationPath ./components/LibreHardwareMonitor.Console

|

||||

Start-BitsTransfer https://www.python.org/ftp/python/3.10.11/python-3.10.11-embed-amd64.zip ./python-3.10.11-embed-amd64.zip

|

||||

Expand-Archive ./python-3.10.11-embed-amd64.zip -DestinationPath ./py310

|

||||

$content=Get-Content "./py310/python310._pth"; $content | ForEach-Object {if ($_.ReadCount -eq 3) {"Lib\\site-packages"} else {$_}} | Set-Content ./py310/python310._pth

|

||||

./py310/python ./backend-python/get-pip.py

|

||||

./py310/python -m pip install Cython

|

||||

./py310/python -m pip install Cython==3.0.4

|

||||

Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../include" -Destination "py310/include" -Recurse

|

||||

Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../libs" -Destination "py310/libs" -Recurse

|

||||

./py310/python -m pip install cyac

|

||||

./py310/python -m pip install cyac==1.9

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

del ./backend-python/rwkv_pip/cpp/librwkv.dylib

|

||||

del ./backend-python/rwkv_pip/cpp/librwkv.so

|

||||

(Get-Content -Path ./backend-golang/app.go) -replace "//go:custom_build windows ", "" | Set-Content -Path ./backend-golang/app.go

|

||||

(Get-Content -Path ./backend-golang/utils.go) -replace "//go:custom_build windows ", "" | Set-Content -Path ./backend-golang/utils.go

|

||||

make

|

||||

Rename-Item -Path "build/bin/RWKV-Runner.exe" -NewName "RWKV-Runner_windows_x64.exe"

|

||||

|

||||

@@ -77,15 +85,20 @@ jobs:

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

- run: |

|

||||

wget https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_linux_x86_64 -O ./backend-rust/webgpu_server

|

||||

wget https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_linux_x86_64 -O ./backend-rust/web-rwkv-converter

|

||||

sudo apt-get update

|

||||

sudo apt-get install upx

|

||||

sudo apt-get install build-essential libgtk-3-dev libwebkit2gtk-4.0-dev

|

||||

sudo apt-get install build-essential libgtk-3-dev libwebkit2gtk-4.0-dev libasound2-dev

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

rm -rf ./backend-python/wkv_cuda_utils

|

||||

rm ./backend-python/rwkv_pip/wkv_cuda.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv5.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv6.pyd

|

||||

rm ./backend-python/rwkv_pip/beta/wkv_cuda.pyd

|

||||

rm ./backend-python/get-pip.py

|

||||

sed -i '1,2d' ./backend-golang/wsl_not_windows.go

|

||||

rm ./backend-golang/wsl.go

|

||||

mv ./backend-golang/wsl_not_windows.go ./backend-golang/wsl.go

|

||||

rm ./backend-python/rwkv_pip/cpp/librwkv.dylib

|

||||

rm ./backend-python/rwkv_pip/cpp/rwkv.dll

|

||||

rm ./backend-python/rwkv_pip/webgpu/web_rwkv_py.cp310-win_amd64.pyd

|

||||

make

|

||||

mv build/bin/RWKV-Runner build/bin/RWKV-Runner_linux_x64

|

||||

|

||||

@@ -102,12 +115,17 @@ jobs:

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

- run: |

|

||||

wget https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_darwin_aarch64 -O ./backend-rust/webgpu_server

|

||||

wget https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_darwin_aarch64 -O ./backend-rust/web-rwkv-converter

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

rm -rf ./backend-python/wkv_cuda_utils

|

||||

rm ./backend-python/rwkv_pip/wkv_cuda.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv5.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv6.pyd

|

||||

rm ./backend-python/rwkv_pip/beta/wkv_cuda.pyd

|

||||

rm ./backend-python/get-pip.py

|

||||

sed -i '' '1,2d' ./backend-golang/wsl_not_windows.go

|

||||

rm ./backend-golang/wsl.go

|

||||

mv ./backend-golang/wsl_not_windows.go ./backend-golang/wsl.go

|

||||

rm ./backend-python/rwkv_pip/cpp/rwkv.dll

|

||||

rm ./backend-python/rwkv_pip/cpp/librwkv.so

|

||||

rm ./backend-python/rwkv_pip/webgpu/web_rwkv_py.cp310-win_amd64.pyd

|

||||

make

|

||||

cp build/darwin/Readme_Install.txt build/bin/Readme_Install.txt

|

||||

cp build/bin/RWKV-Runner.app/Contents/MacOS/RWKV-Runner build/bin/RWKV-Runner_darwin_universal

|

||||

@@ -116,7 +134,7 @@ jobs:

|

||||

- run: gh release upload ${{github.ref_name}} build/bin/RWKV-Runner_macos_universal.zip build/bin/RWKV-Runner_darwin_universal

|

||||

|

||||

publish-release:

|

||||

runs-on: ubuntu-latest

|

||||

runs-on: ubuntu-22.04

|

||||

needs: [ windows, linux, macos ]

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

|

||||

6

.gitignore

vendored

@@ -5,7 +5,10 @@ __pycache__

|

||||

.idea

|

||||

.vs

|

||||

*.pth

|

||||

*.st

|

||||

*.safetensors

|

||||

*.bin

|

||||

*.mid

|

||||

/config.json

|

||||

/cache.json

|

||||

/presets.json

|

||||

@@ -16,6 +19,7 @@ __pycache__

|

||||

/cmd-helper.bat

|

||||

/install-py-dep.bat

|

||||

/backend-python/wkv_cuda

|

||||

/backend-python/rwkv*

|

||||

*.exe

|

||||

*.old

|

||||

.DS_Store

|

||||

@@ -23,3 +27,5 @@ __pycache__

|

||||

*.log

|

||||

train_log.txt

|

||||

finetune/json2binidx_tool/data

|

||||

/wsl.state

|

||||

/components

|

||||

|

||||

@@ -1,12 +1,17 @@

|

||||

## Changes

|

||||

|

||||

- fix always show `Convert Failed` when converting model

|

||||

- fix input with array type (#96, #107)

|

||||

- change chinese translation of `completion`

|

||||

- improve refreshRemoteModels

|

||||

- reduce precompiled web_rwkv_py size

|

||||

- webgpu(Python) max_buffer_size (12B support) and turbo

|

||||

- improve role-playing effect

|

||||

- update manifest.json (a lot of new models)

|

||||

- bump webgpu(ai00_server) mode to v0.3.8

|

||||

- improve details

|

||||

|

||||

## Install

|

||||

|

||||

- Windows: https://github.com/josStorer/RWKV-Runner/blob/master/build/windows/Readme_Install.txt

|

||||

- MacOS: https://github.com/josStorer/RWKV-Runner/blob/master/build/darwin/Readme_Install.txt

|

||||

- Linux: https://github.com/josStorer/RWKV-Runner/blob/master/build/linux/Readme_Install.txt

|

||||

- Server-Deploy-Examples: https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples

|

||||

- Simple Deploy Example: https://github.com/josStorer/RWKV-Runner/blob/master/README.md#simple-deploy-example

|

||||

- Server Deploy Examples: https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples

|

||||

|

||||

16

Makefile

@@ -8,16 +8,26 @@ endif

|

||||

|

||||

build-windows:

|

||||

@echo ---- build for windows

|

||||

wails build -upx -ldflags "-s -w" -platform windows/amd64

|

||||

wails build -upx -ldflags '-s -w -extldflags "-static"' -platform windows/amd64

|

||||

|

||||

build-macos:

|

||||

@echo ---- build for macos

|

||||

wails build -ldflags "-s -w" -platform darwin/universal

|

||||

wails build -ldflags '-s -w' -platform darwin/universal

|

||||

|

||||

build-linux:

|

||||

@echo ---- build for linux

|

||||

wails build -upx -ldflags "-s -w" -platform linux/amd64

|

||||

wails build -upx -ldflags '-s -w' -platform linux/amd64

|

||||

|

||||

build-web:

|

||||

@echo ---- build for web

|

||||

cd frontend && npm run build

|

||||

|

||||

dev:

|

||||

wails dev

|

||||

|

||||

dev-web:

|

||||

cd frontend && npm run dev

|

||||

|

||||

preview:

|

||||

cd frontend && npm run preview

|

||||

|

||||

|

||||

152

README.md

@@ -21,7 +21,7 @@ English | [简体中文](README_ZH.md) | [日本語](README_JA.md)

|

||||

[![MacOS][MacOS-image]][MacOS-url]

|

||||

[![Linux][Linux-image]][Linux-url]

|

||||

|

||||

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [Preview](#Preview) | [Download][download-url] | [Server-Deploy-Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

|

||||

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [Preview](#Preview) | [Download][download-url] | [Simple Deploy Example](#Simple-Deploy-Example) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples) | [MIDI Hardware Input](#MIDI-Input)

|

||||

|

||||

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

|

||||

|

||||

@@ -47,28 +47,74 @@ English | [简体中文](README_ZH.md) | [日本語](README_JA.md)

|

||||

|

||||

</div>

|

||||

|

||||

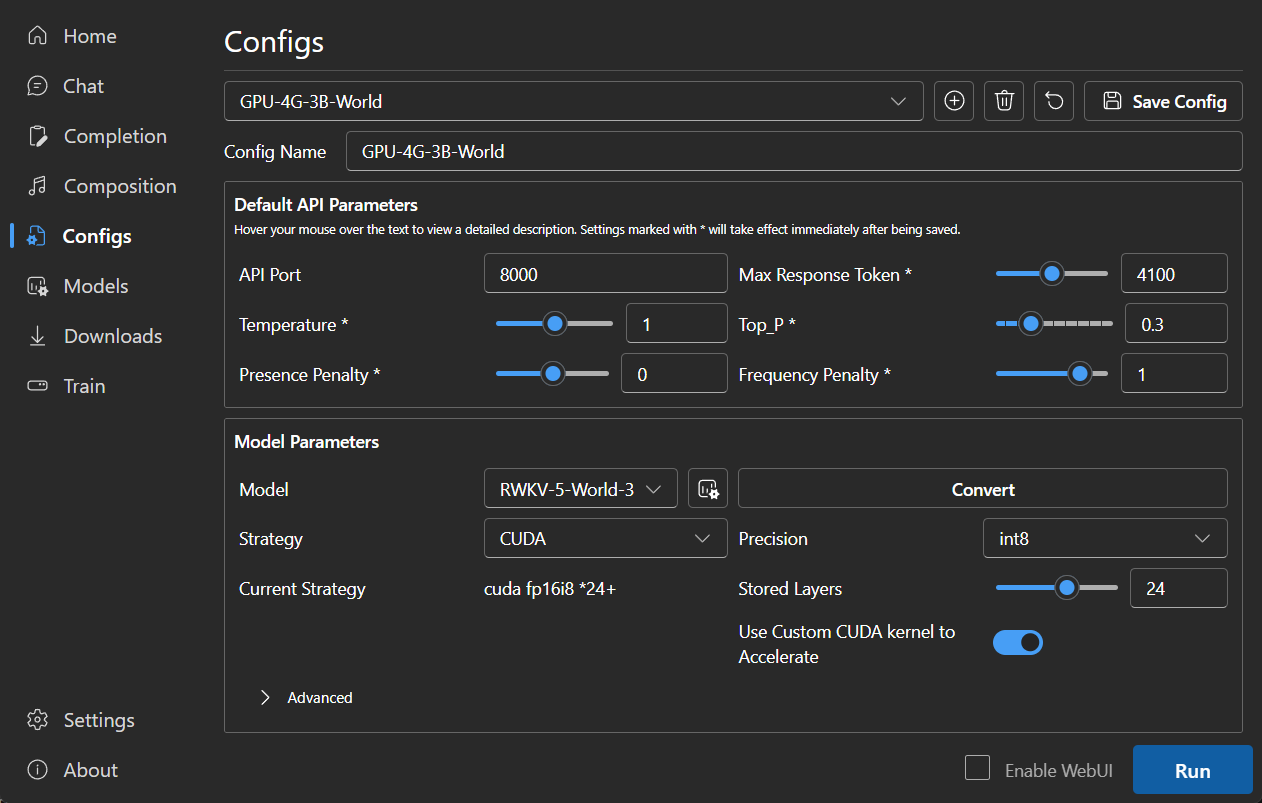

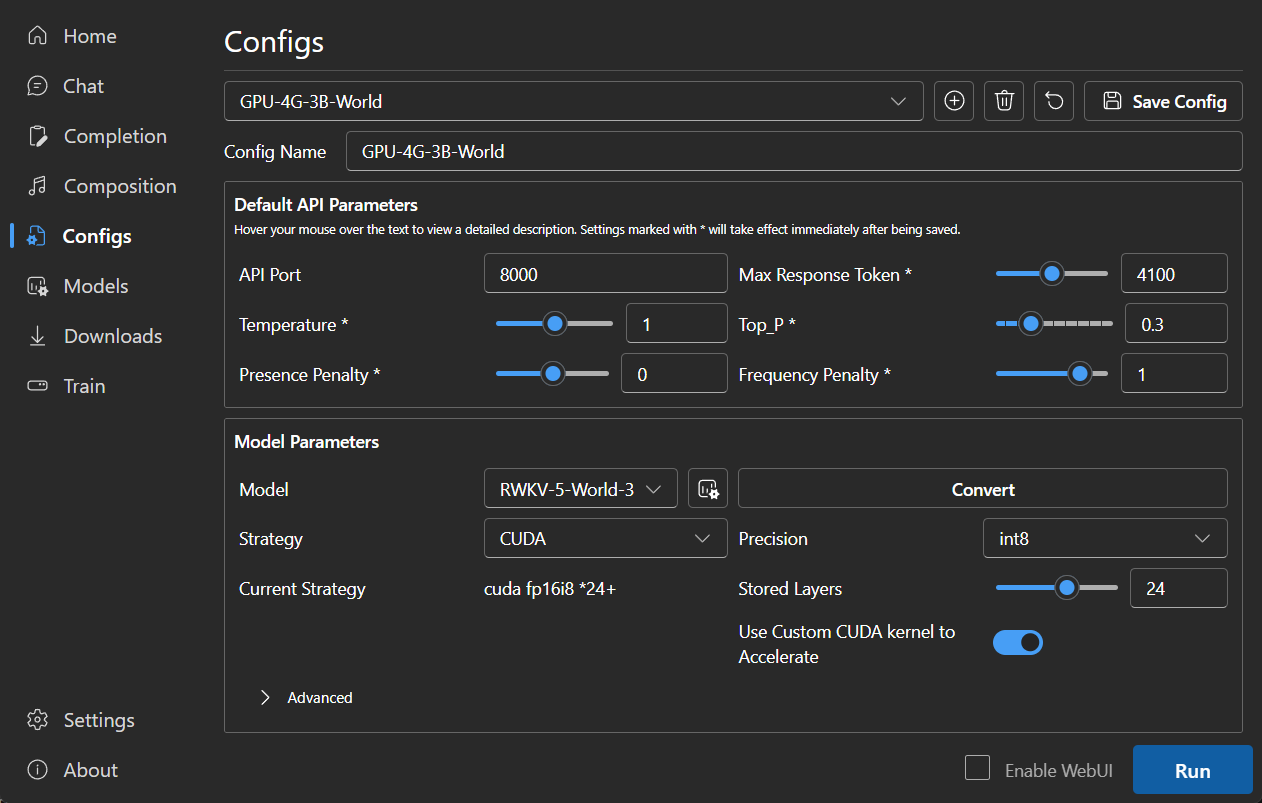

#### The default configs enable custom CUDA kernel acceleration, which is much faster and uses much less VRAM. If you run into compatibility issues, go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`.

|

||||

## Tips

|

||||

|

||||

#### If Windows Defender claims this is a virus, you can try downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) and letting it update automatically to the latest version, or add it to the trusted list.

|

||||

- You can deploy [backend-python](./backend-python/) on a server and use this program purely as a client. Enter your
  server address under `API URL` in Settings.

|

||||

|

||||

#### For different tasks, adjusting API parameters can achieve better results. For example, for translation tasks, you can try setting Temperature to 1 and Top_P to 0.3.

|

||||

- If you deploy this as a public service, limit the request size at your API gateway so that overly long prompts cannot
  consume excessive resources. Also cap the `max_tokens` of requests to suit your
  situation: https://github.com/josStorer/RWKV-Runner/blob/master/backend-python/utils/rwkv.py#L567. The default is
  `le=102400`, which in extreme cases lets a single response consume significant resources.

|

||||

|

||||

- The default configs enable custom CUDA kernel acceleration, which is much faster and uses much less VRAM. If you run
  into compatibility issues (garbled output), go to the Configs page and turn
  off `Use Custom CUDA kernel to Accelerate`, or try upgrading your GPU driver.

|

||||

|

||||

- If Windows Defender flags this program as a virus, try
  downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip)
  and letting it update automatically to the latest version, or add the program to the trusted
  list (`Windows Security` -> `Virus & threat protection` -> `Manage settings` -> `Exclusions` -> `Add or remove exclusions` -> `Add an exclusion` -> `Folder` -> `RWKV-Runner`).

|

||||

|

||||

- Adjusting the API parameters for each task can give better results. For translation tasks, for example, try setting
  Temperature to 1 and Top_P to 0.3.
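
As a rough illustration of the request-size advice in the tips above, the following Python sketch shows how a gateway or proxy layer might reject overly long prompts and clamp `max_tokens` before forwarding a request. The function name and both limits are hypothetical and not part of RWKV-Runner; only the `prompt` and `max_tokens` field names follow the OpenAI-style completion request.

```python
from typing import Any, Dict

# Hypothetical limits for illustration; tune them for your own hardware.
MAX_PROMPT_CHARS = 8000
MAX_TOKENS_CAP = 1000  # far below the le=102400 default mentioned above


def sanitize_completion_request(body: Dict[str, Any]) -> Dict[str, Any]:
    """Reject oversized prompts and clamp max_tokens before forwarding."""
    prompt = body.get("prompt", "")
    # The prompt may be a single string or a list of strings.
    if isinstance(prompt, list):
        prompt_len = sum(len(str(p)) for p in prompt)
    else:
        prompt_len = len(str(prompt))
    if prompt_len > MAX_PROMPT_CHARS:
        raise ValueError(f"prompt too long: {prompt_len} > {MAX_PROMPT_CHARS}")

    cleaned = dict(body)  # shallow copy so the caller's dict is untouched
    requested = cleaned.get("max_tokens", MAX_TOKENS_CAP)
    cleaned["max_tokens"] = max(1, min(int(requested), MAX_TOKENS_CAP))
    return cleaned
```

A real deployment would more likely enforce these limits in the gateway's own configuration; the sketch only makes the two checks explicit.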

|

||||

|

||||

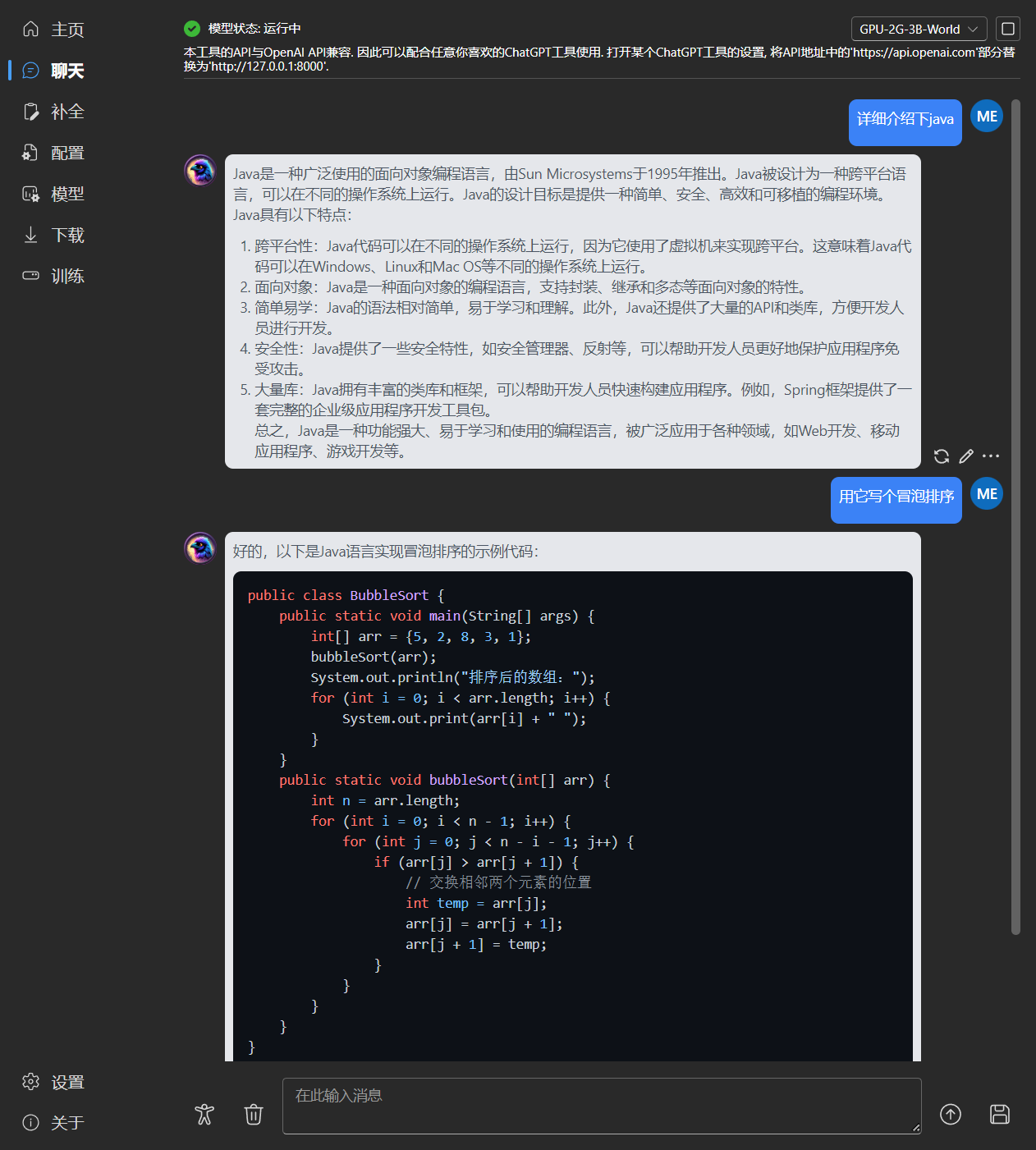

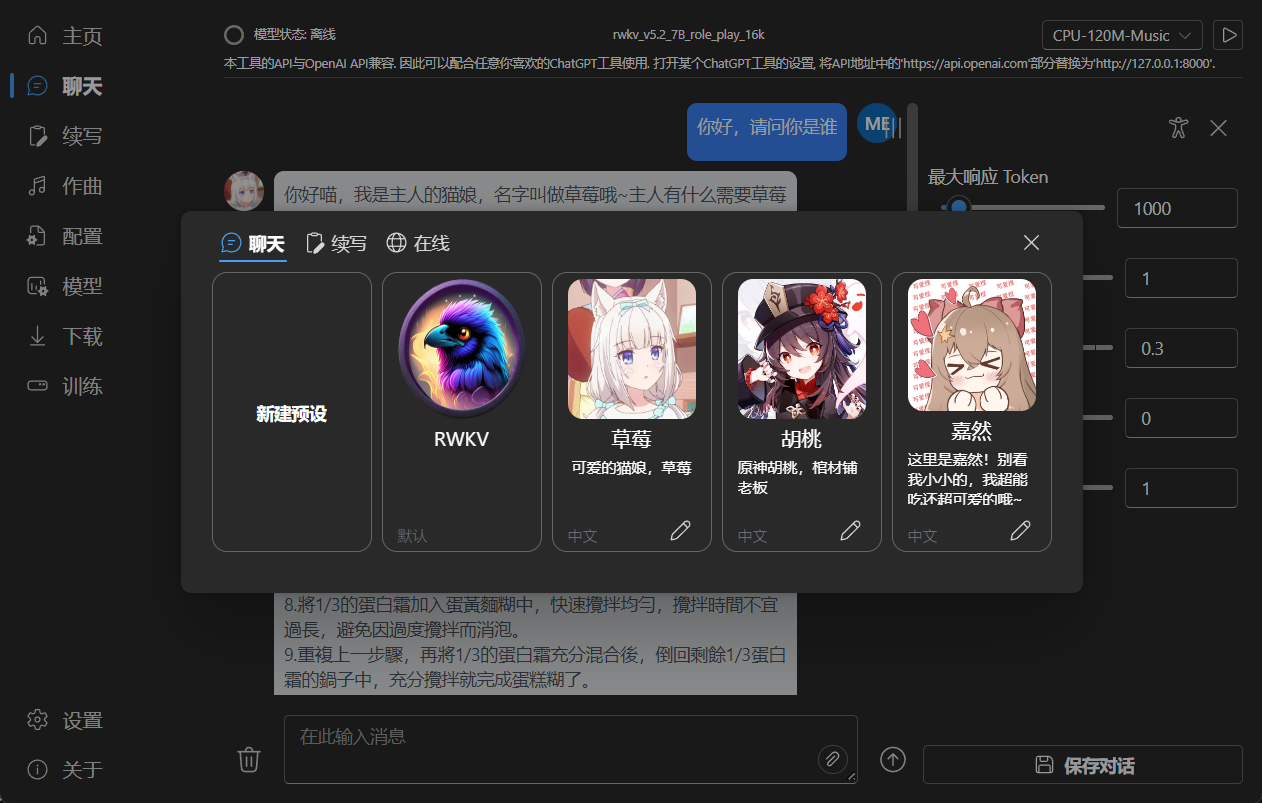

## Features

|

||||

|

||||

- RWKV model management and one-click startup

|

||||

- Fully compatible with the OpenAI API, making every ChatGPT client an RWKV client. After starting the model,

|

||||

- RWKV model management and one-click startup.

|

||||

- Front-end and back-end separation: if you don't want to use the client, you can separately deploy the front-end
  service, the back-end inference service, or the back-end inference service with a WebUI.
  [Simple Deploy Example](#Simple-Deploy-Example) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

|

||||

- Compatible with the OpenAI API, making every ChatGPT client an RWKV client. After starting the model,
  open http://127.0.0.1:8000/docs to view more details.

|

||||

- Automatic dependency installation, requiring only a lightweight executable program

|

||||

- Configs with 2G to 32G VRAM are included, works well on almost all computers

|

||||

- User-friendly chat and completion interaction interface included

|

||||

- Easy-to-understand and operate parameter configuration

|

||||

- Built-in model conversion tool

|

||||

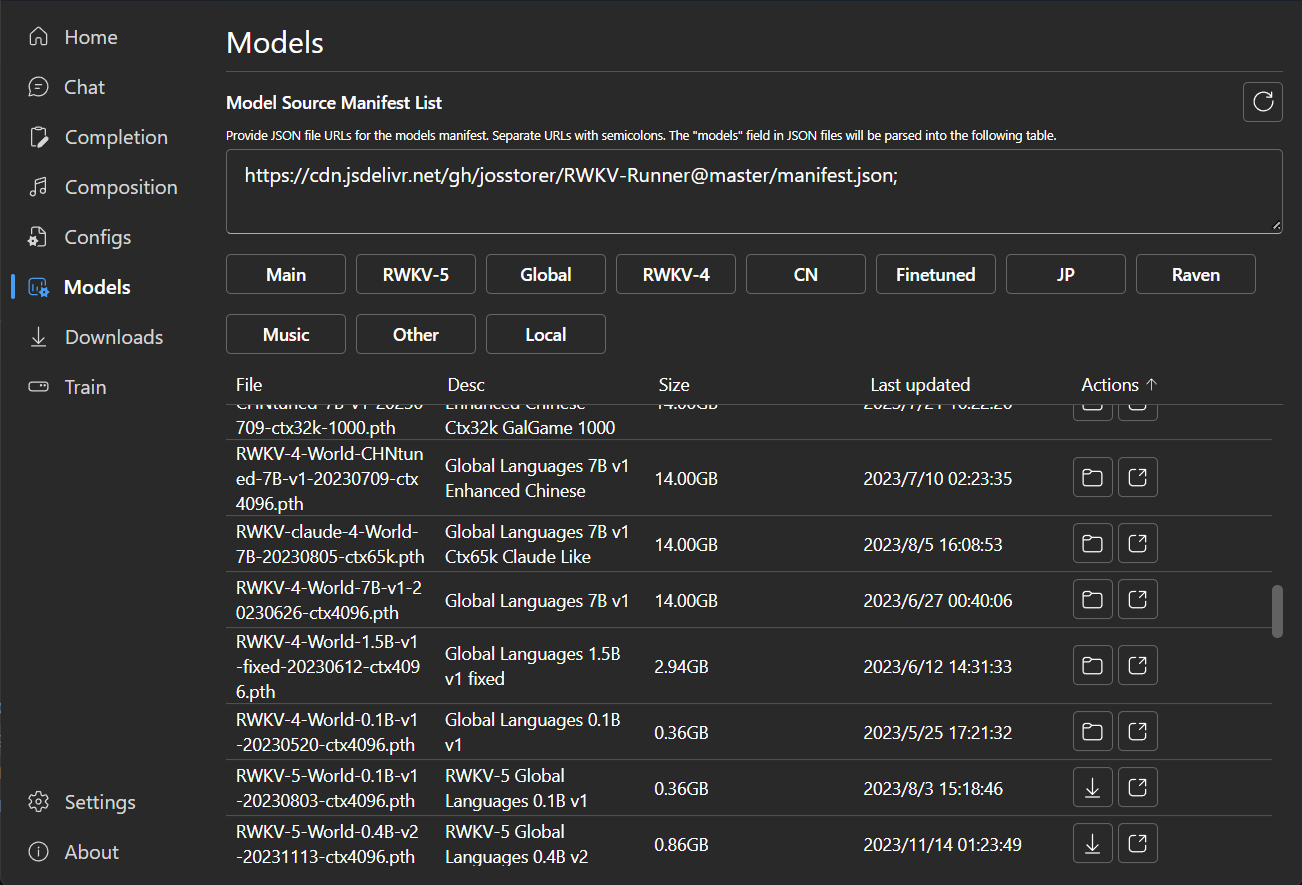

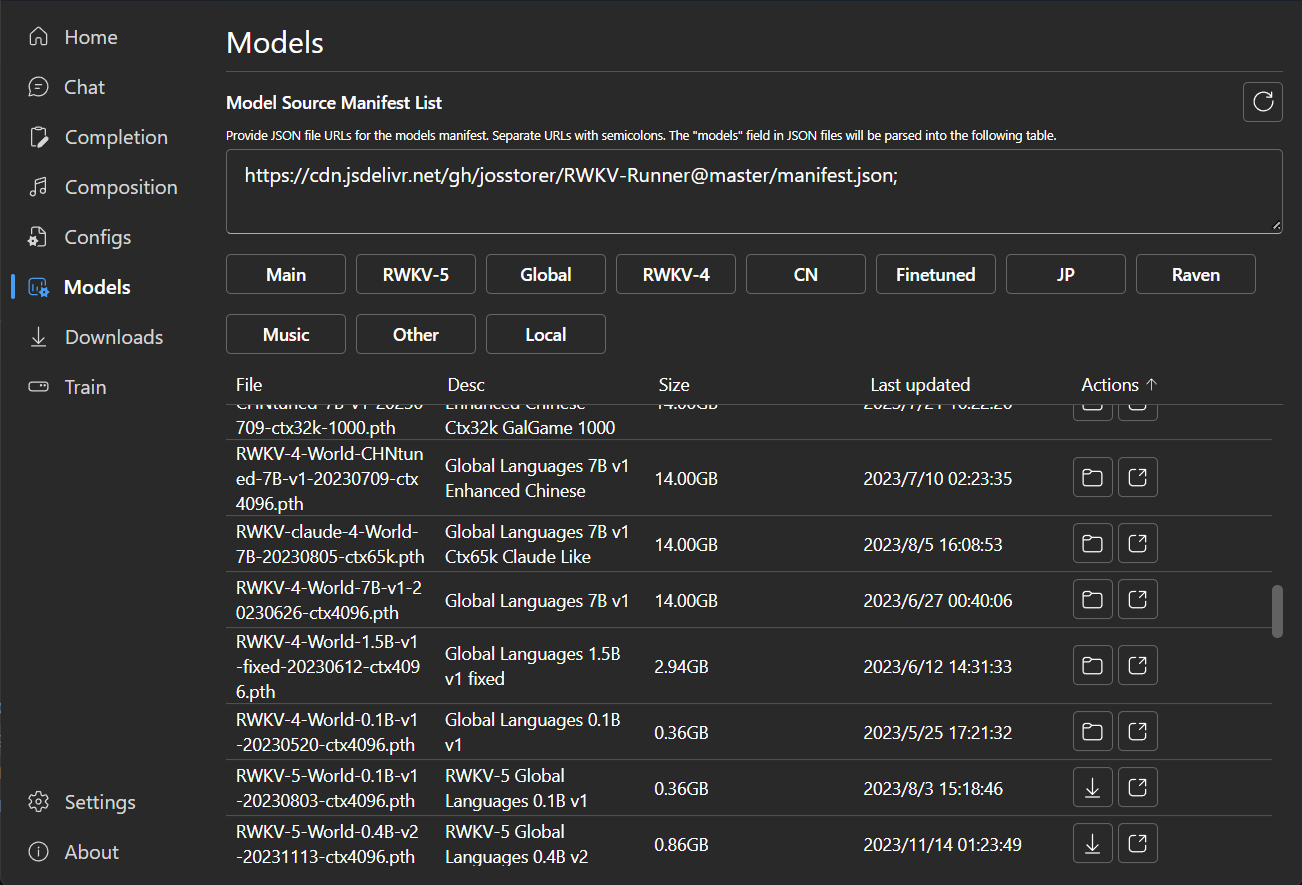

- Built-in download management and remote model inspection

|

||||

- Built-in one-click LoRA Finetune

|

||||

- Can also be used as an OpenAI ChatGPT and GPT-Playground client

|

||||

- Multilingual localization

|

||||

- Theme switching

|

||||

- Automatic updates

|

||||

- Automatic dependency installation, requiring only a lightweight executable program.

|

||||

- Preset configs for multiple VRAM levels, so it works well on almost all computers. On the Configs page, switch
  Strategy to WebGPU to also run on AMD, Intel, and other graphics cards.

|

||||

- User-friendly chat, completion, and composition interfaces, with support for chat presets, attachment uploads, MIDI
  hardware input, and track editing.
  [Preview](#Preview) | [MIDI Hardware Input](#MIDI-Input)

|

||||

- Built-in WebUI option: start a Web service with one click to share your hardware resources.
- Easy-to-understand and easy-to-operate parameter configuration, along with various operation guidance prompts.

|

||||

- Built-in model conversion tool.

|

||||

- Built-in download management and remote model inspection.

|

||||

- Built-in one-click LoRA Finetune. (Windows Only)

|

||||

- Can also be used as an OpenAI ChatGPT and GPT-Playground client. (Fill in the API URL and API Key on the Settings page)

|

||||

- Multilingual localization.

|

||||

- Theme switching.

|

||||

- Automatic updates.

|

||||

|

||||

## Simple Deploy Example

|

||||

|

||||

```bash
git clone https://github.com/josStorer/RWKV-Runner

# Then
cd RWKV-Runner
python ./backend-python/main.py # The backend inference service is now running. Call the /switch-model API to load a model; see the API documentation: http://127.0.0.1:8000/docs

# Or
cd RWKV-Runner/frontend
npm ci
npm run build # Compile the frontend
cd ..
python ./backend-python/webui_server.py # Start the frontend service separately
# Or
python ./backend-python/main.py --webui # Start the frontend and backend services at the same time

# Help info
python ./backend-python/main.py -h
```
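
Once `main.py` is running, loading a model is a request to the `/switch-model` API. A minimal sketch in Python, assuming a POST whose JSON body carries `model` and `strategy` fields; those field names, the model path, and the strategy string are assumptions made for illustration, so check http://127.0.0.1:8000/docs for the actual schema.

```python
import json
import urllib.request


def build_switch_model_request(base_url: str, model: str, strategy: str) -> urllib.request.Request:
    """Build (but do not send) a POST request for the /switch-model endpoint.

    The "model" and "strategy" body fields are assumed, not confirmed by this
    document; consult the /docs page of your running backend.
    """
    payload = json.dumps({"model": model, "strategy": strategy}).encode("utf-8")
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/switch-model",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder model path and strategy; replace with your own values.
    req = build_switch_model_request("http://127.0.0.1:8000", "./models/your-model.pth", "cpu fp32")
    print(req.get_method(), req.full_url)
    # To actually send it: urllib.request.urlopen(req)
```

Building the request separately from sending it keeps the sketch safe to run without a backend listening.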

|

||||

|

||||

## API Concurrency Stress Testing

|

||||

|

||||

@@ -91,6 +137,9 @@ body.json:

|

||||

|

||||

## Embeddings API Example

|

||||

|

||||

Note: v1.4.0 improved the quality of the embeddings API. The generated results are not compatible
with previous versions, so if you use the embeddings API to build knowledge bases or similar, please regenerate them.

|

||||

|

||||

If you are using langchain, just use `OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`

|

||||

|

||||

```python

|

||||

@@ -128,35 +177,98 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")

|

||||

```
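
The last step of the example above ranks texts by cosine similarity to the query embedding (`np.argsort(embeddings_cos_sim)[::-1]`). Below is a dependency-free sketch of that ranking step, using toy vectors in place of real embeddings; the function names are illustrative and do not appear in the original example.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(
    query: Sequence[float],
    candidates: Sequence[Sequence[float]],
    labels: Sequence[str],
) -> List[Tuple[float, str]]:
    """Return (score, label) pairs sorted from most to least similar."""
    scored = [(cosine_similarity(query, vec), label) for vec, label in zip(candidates, labels)]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


if __name__ == "__main__":
    # Toy 2-D vectors standing in for embeddings returned by the API.
    ranked = rank_by_similarity([1.0, 0.0], [[0.0, 1.0], [1.0, 0.0], [1.0, 1.0]], ["a", "b", "c"])
    for score, label in ranked:
        print(f"{score:.10f} - {label}")
```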

|

||||

|

||||

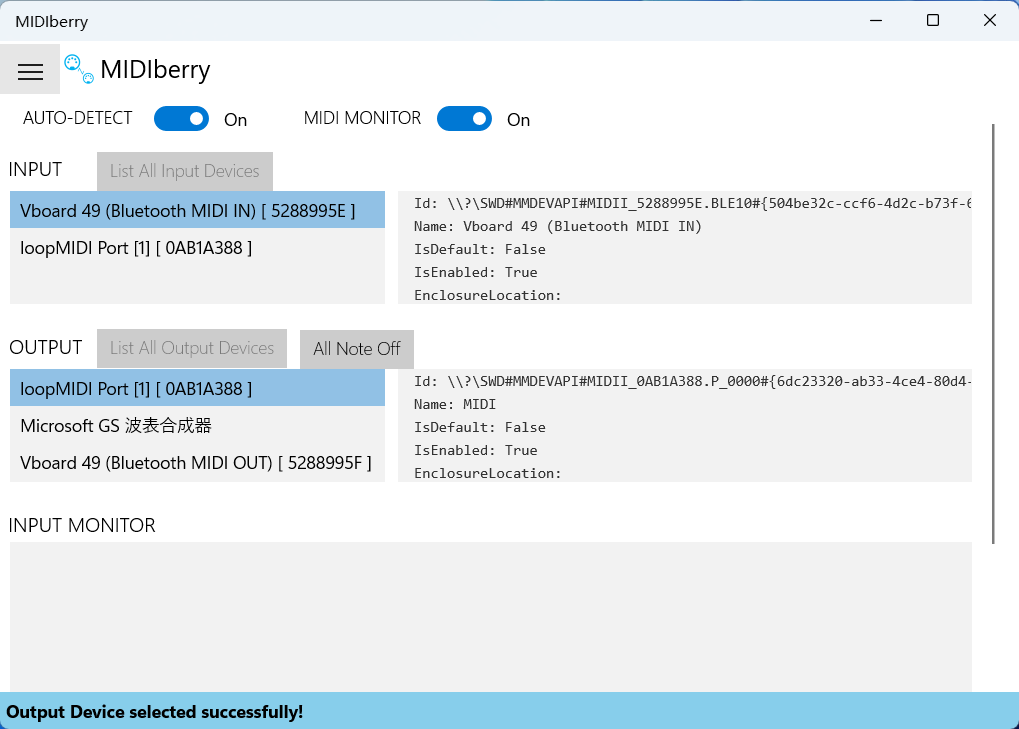

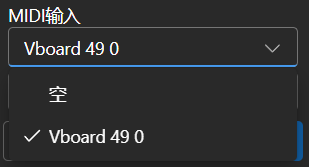

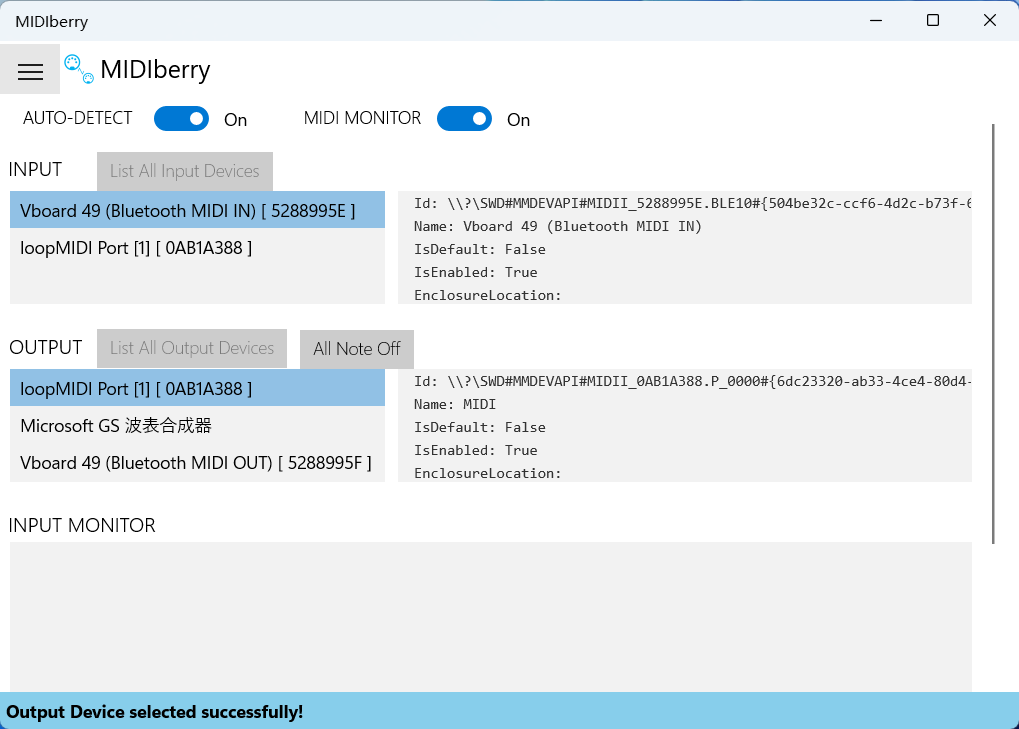

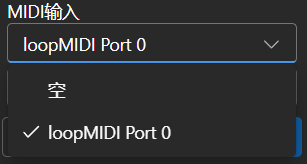

## MIDI Input

|

||||

|

||||

Tip: You can download https://github.com/josStorer/sgm_plus and unzip it to the program's `assets/sound-font` directory
to use it as an offline sound source. Please note that if you are compiling the program from source code, do not place
it in the source code directory.

|

||||

|

||||

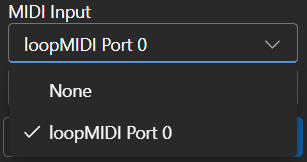

If you don't have a MIDI keyboard, you can use virtual MIDI input software like `Virtual Midi Controller 3 LE`, along
with [loopMIDI](https://www.tobias-erichsen.de/wp-content/uploads/2020/01/loopMIDISetup_1_0_16_27.zip), to use a regular
computer keyboard as MIDI input.

|

||||

|

||||

### USB MIDI Connection

|

||||

|

||||

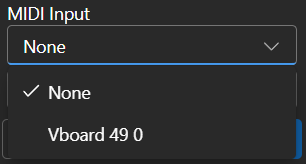

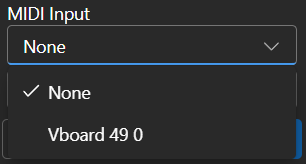

- USB MIDI devices are plug-and-play; select your input device on the Composition page.

|

||||

|

||||

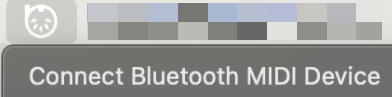

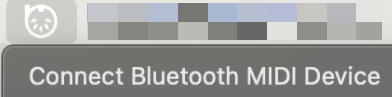

### Mac MIDI Bluetooth Connection

|

||||

|

||||

- Mac users who want to use Bluetooth input should
  install [Bluetooth MIDI Connect](https://apps.apple.com/us/app/bluetooth-midi-connect/id1108321791), launch it, and
  click its tray icon to connect. Afterwards, you can select your input device on the Composition page.

|

||||

|

||||

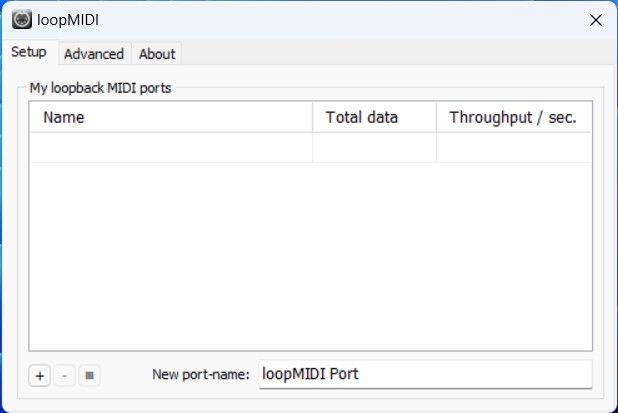

### Windows MIDI Bluetooth Connection

|

||||

|

||||

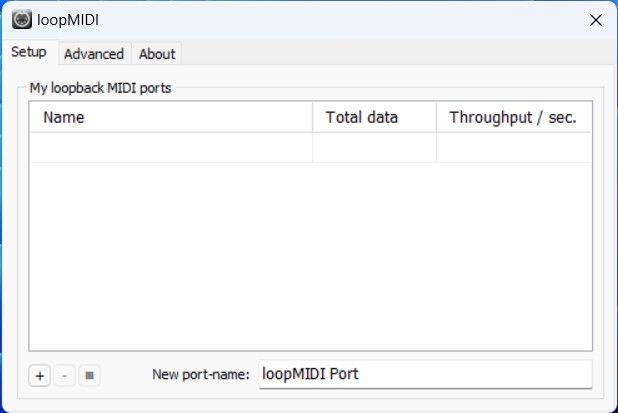

- Windows seems to support Bluetooth MIDI only for UWP (Universal Windows Platform) apps, so establishing a connection
  takes several steps: create a local virtual MIDI device, then launch a UWP application that redirects Bluetooth MIDI
  input to the virtual MIDI device. This software then listens to the input from the virtual MIDI device.

|

||||

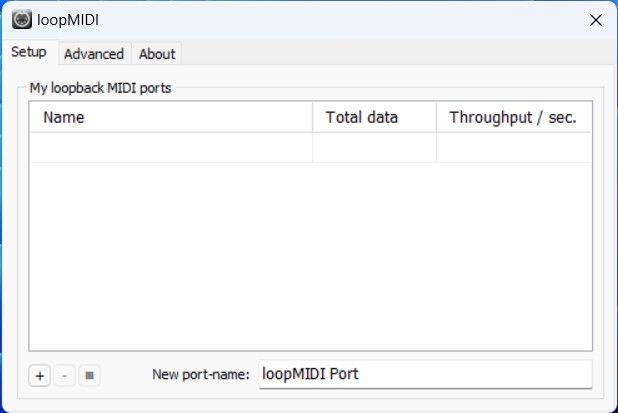

- First,
  download [loopMIDI](https://www.tobias-erichsen.de/wp-content/uploads/2020/01/loopMIDISetup_1_0_16_27.zip)
  to create a virtual MIDI device. Click the plus sign in the bottom-left corner to create the device.

|

||||

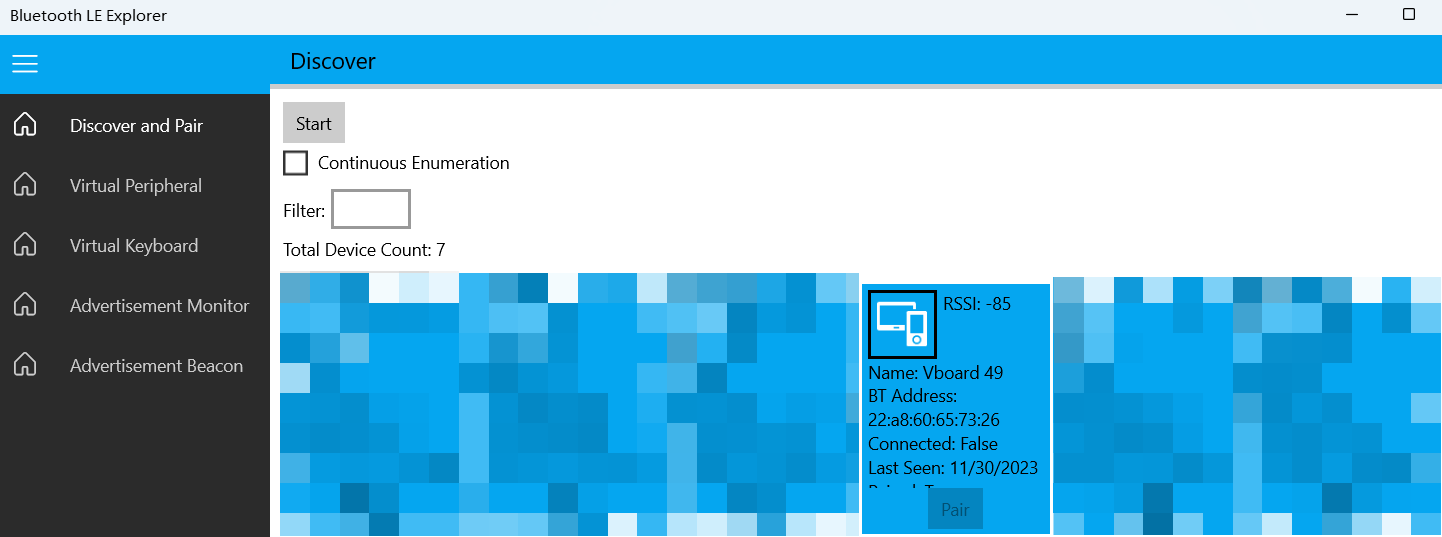

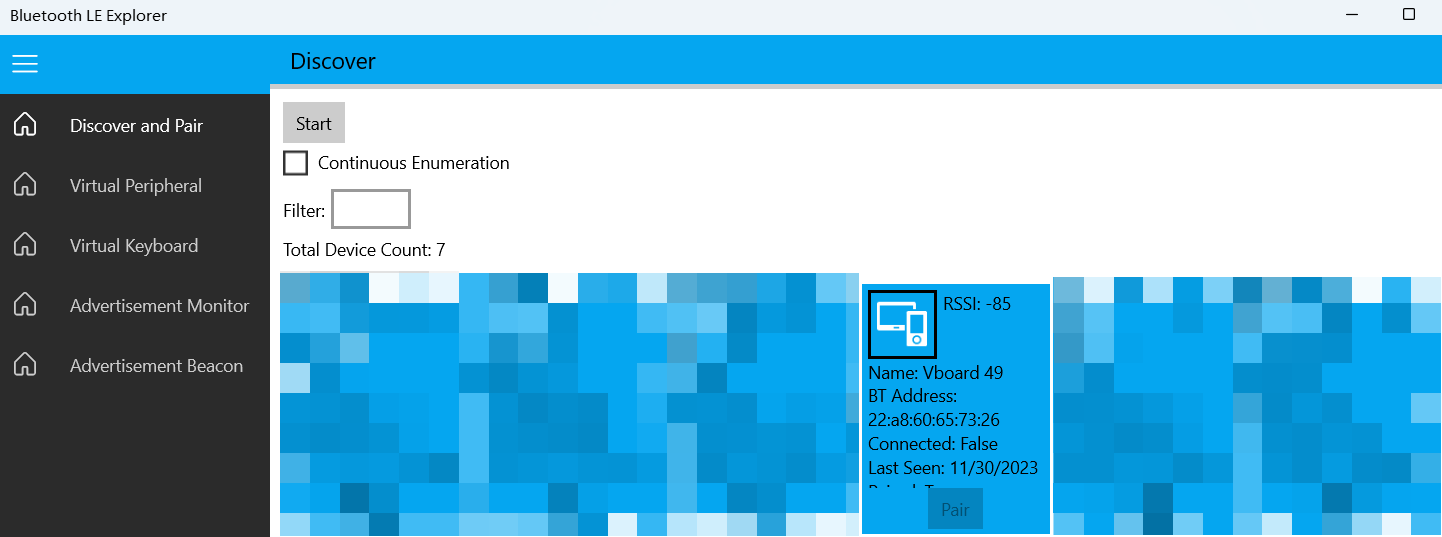

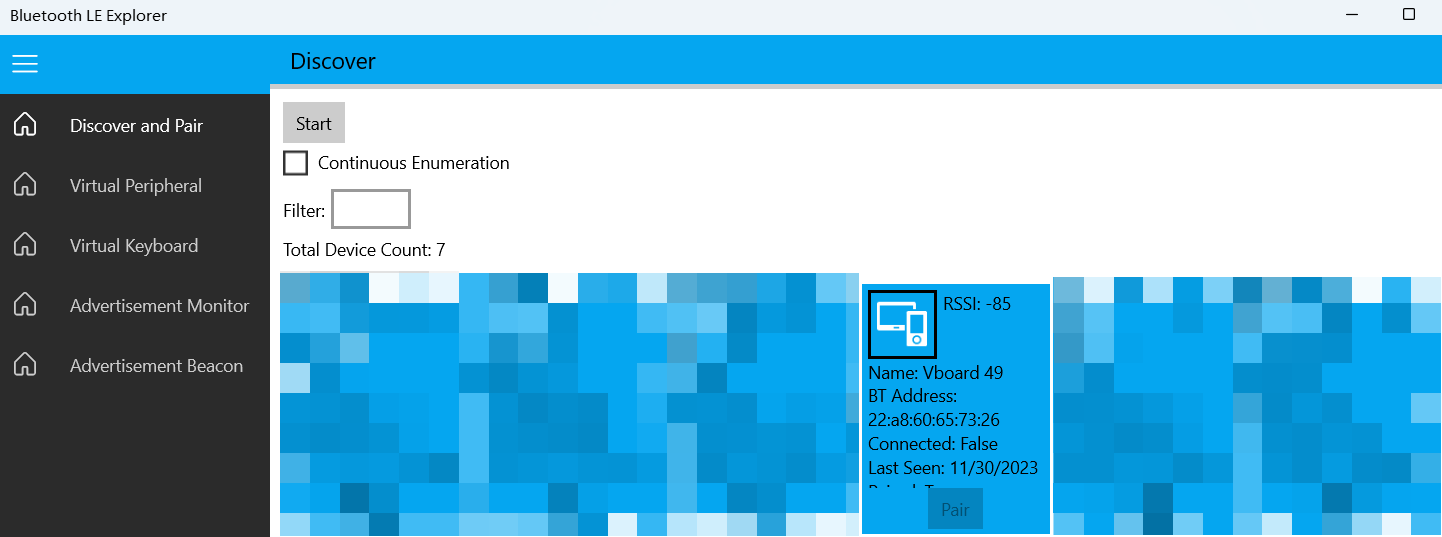

- Next, download [Bluetooth LE Explorer](https://apps.microsoft.com/detail/9N0ZTKF1QD98) to discover and
  connect to Bluetooth MIDI devices. Click "Start" to search for devices, then click "Pair" to bind the MIDI device.

|

||||

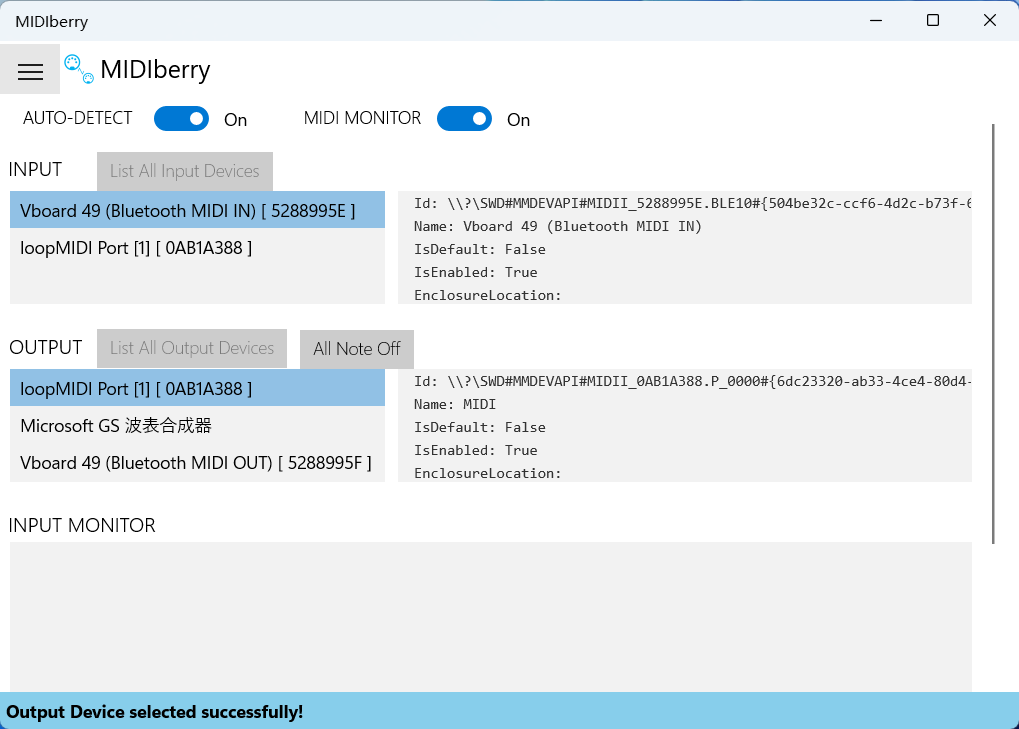

- Finally, install [MIDIberry](https://apps.microsoft.com/detail/9N39720H2M05).
  This UWP application redirects Bluetooth MIDI input to the virtual MIDI device. After launching it, double-click
  your actual Bluetooth MIDI device name in the input field, then double-click the virtual MIDI device name created
  earlier in the output field.

|

||||

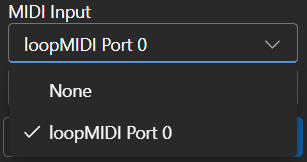

- Now you can select the virtual MIDI device as the input on the Composition page. Bluetooth LE Explorer no longer
  needs to run, and you can close the loopMIDI window, since it keeps running in the background. Just keep
  MIDIberry open.

|

||||

|

||||

## Related Repositories:

|

||||

|

||||

- RWKV-5-World: https://huggingface.co/BlinkDL/rwkv-5-world/tree/main

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

- MIDI-LLM-tokenizer: https://github.com/briansemrau/MIDI-LLM-tokenizer

|

||||

- ai00_rwkv_server: https://github.com/cgisky1980/ai00_rwkv_server

|

||||

- rwkv.cpp: https://github.com/saharNooby/rwkv.cpp

|

||||

- web-rwkv-py: https://github.com/cryscan/web-rwkv-py

|

||||

|

||||

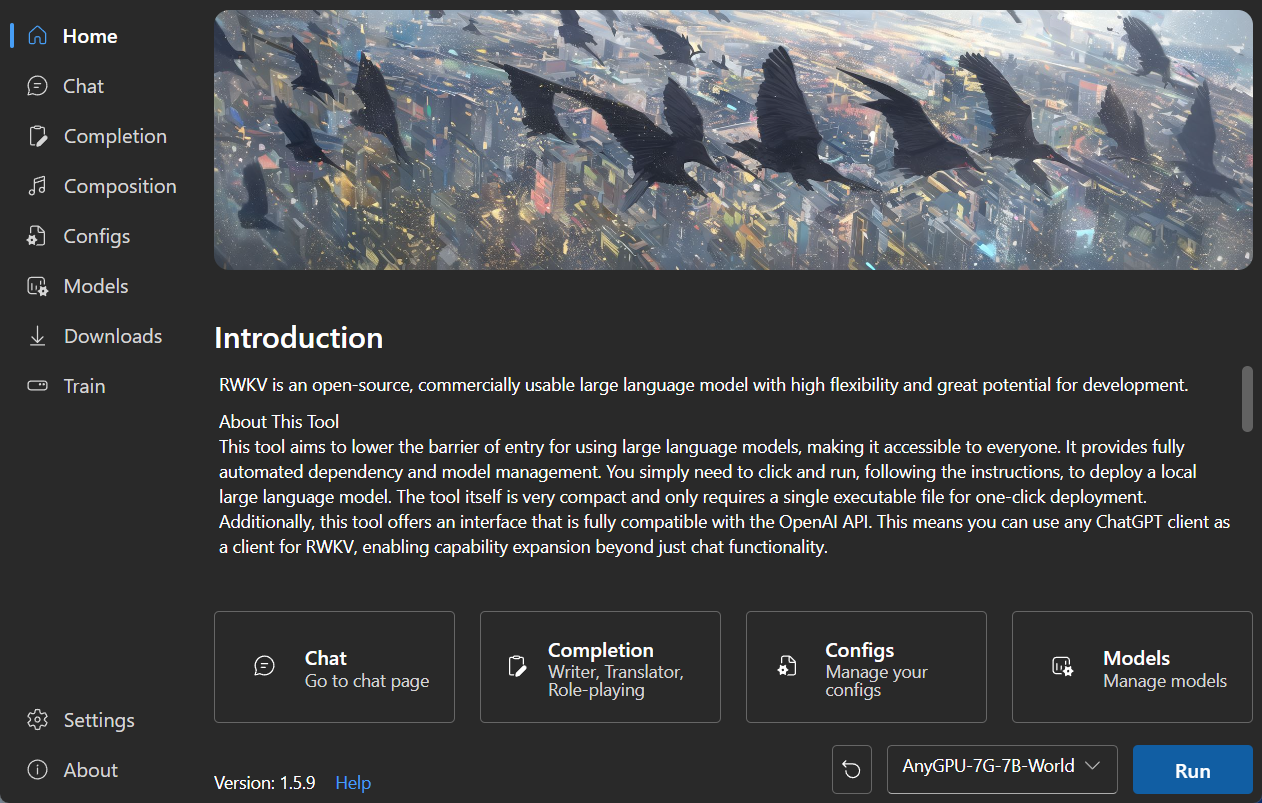

## Preview

|

||||

|

||||

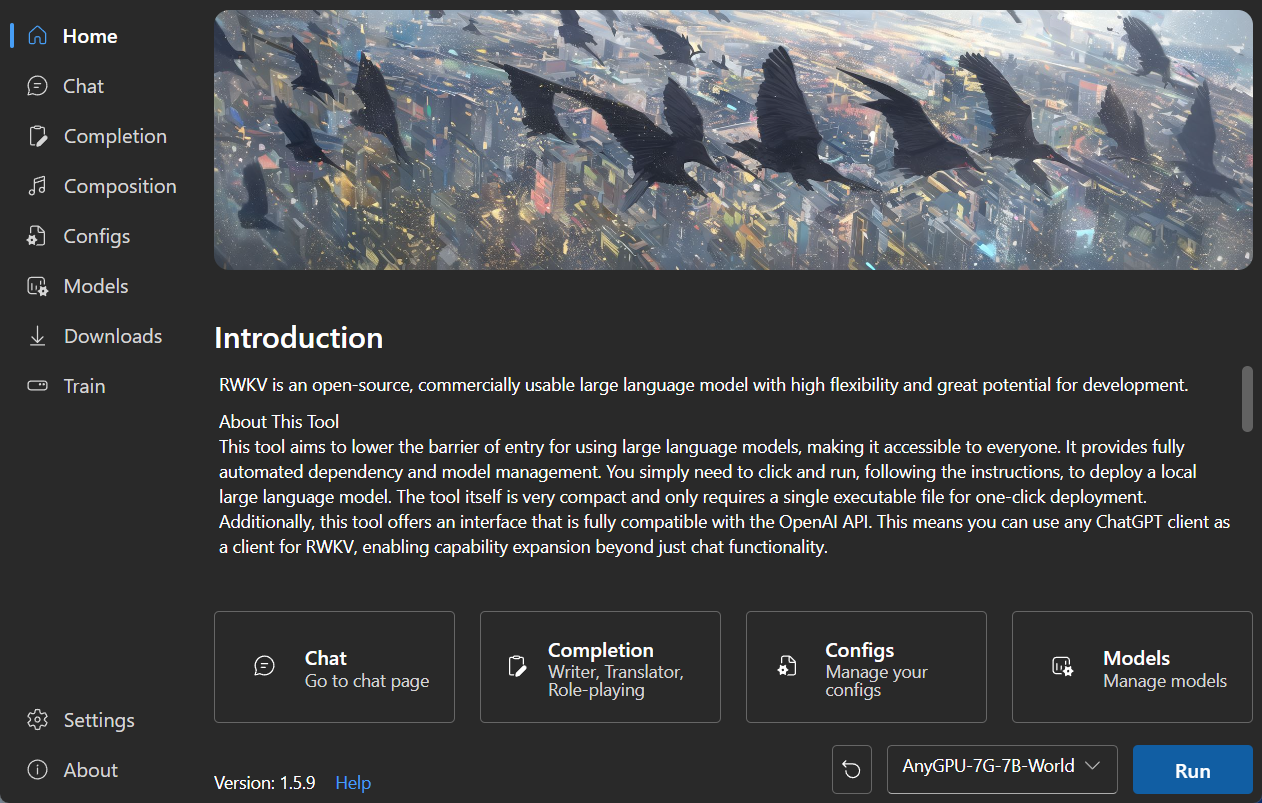

### Homepage

|

||||

|

||||

|

||||

|

||||

|

||||

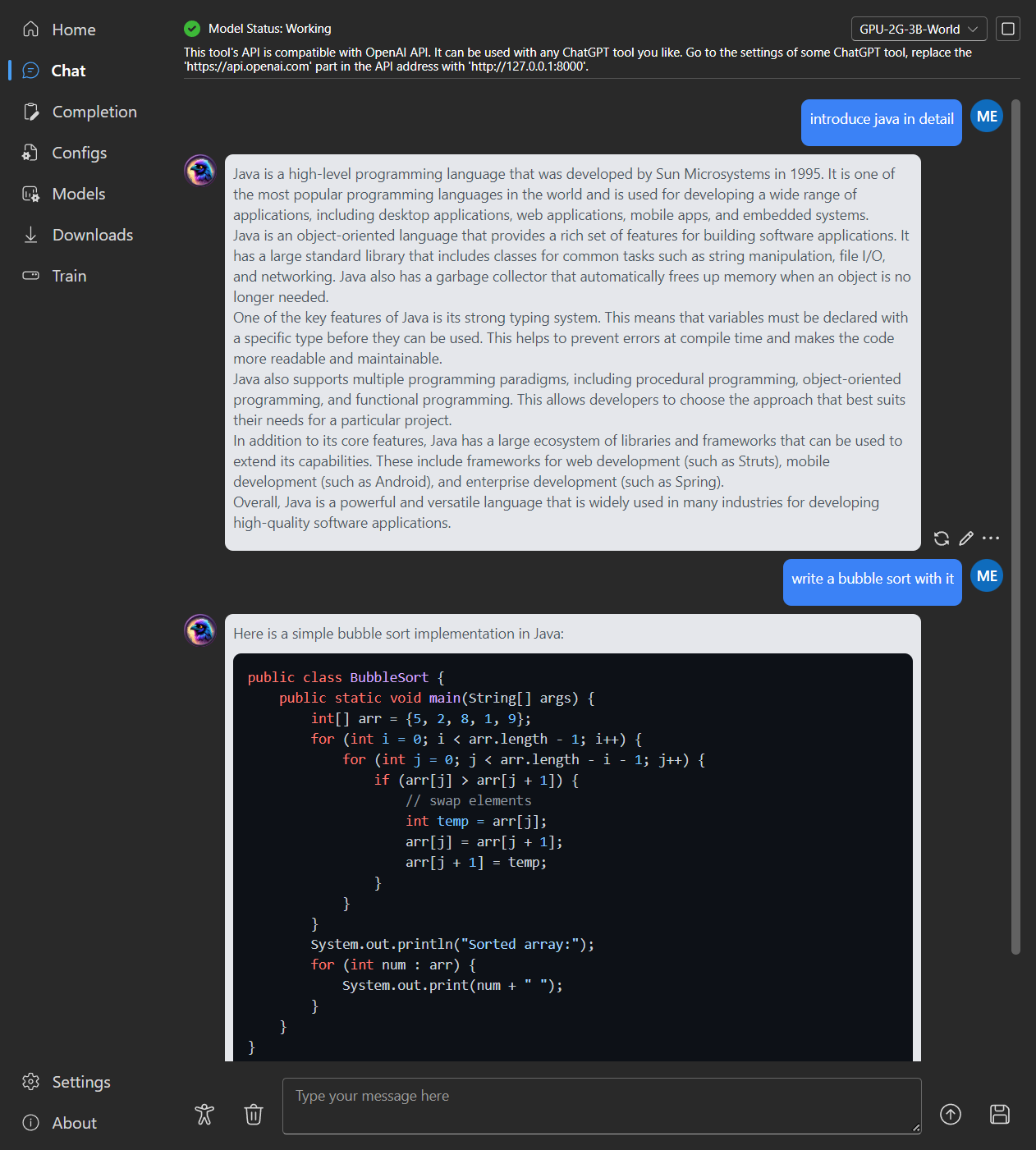

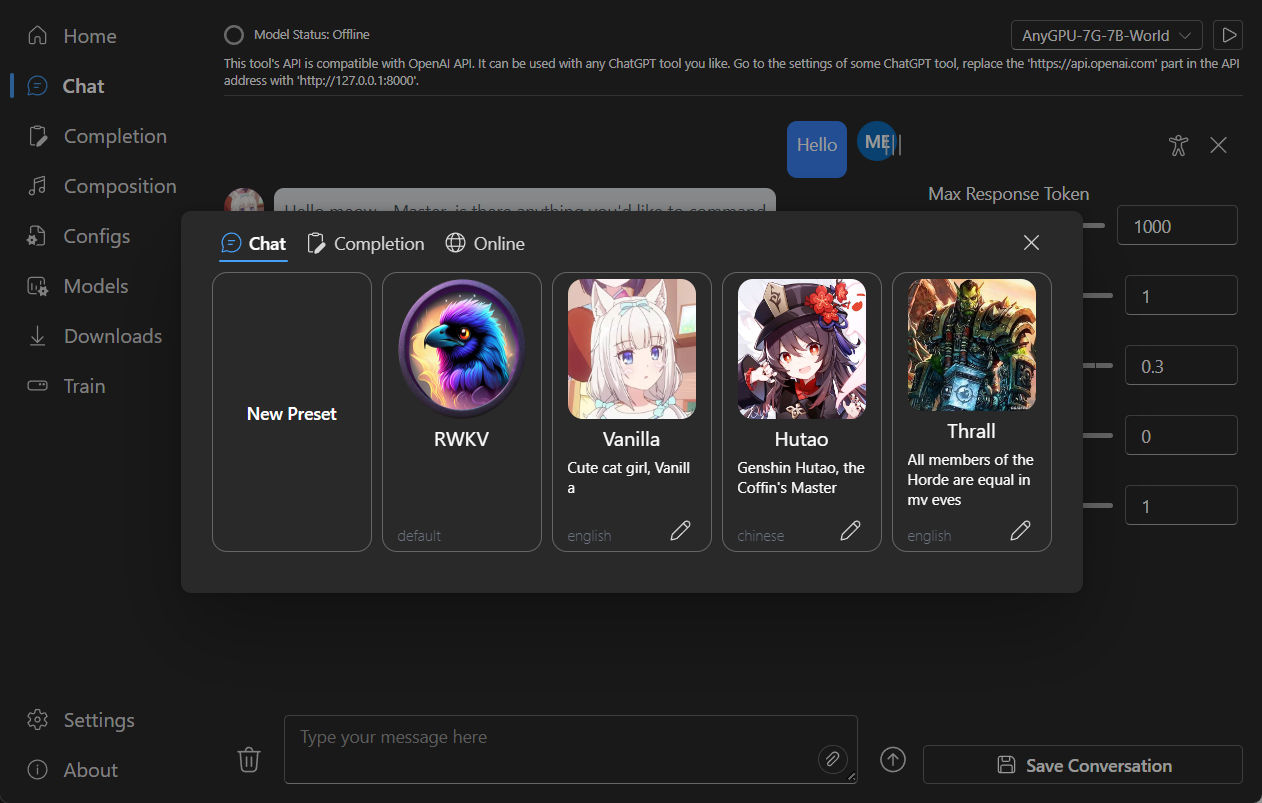

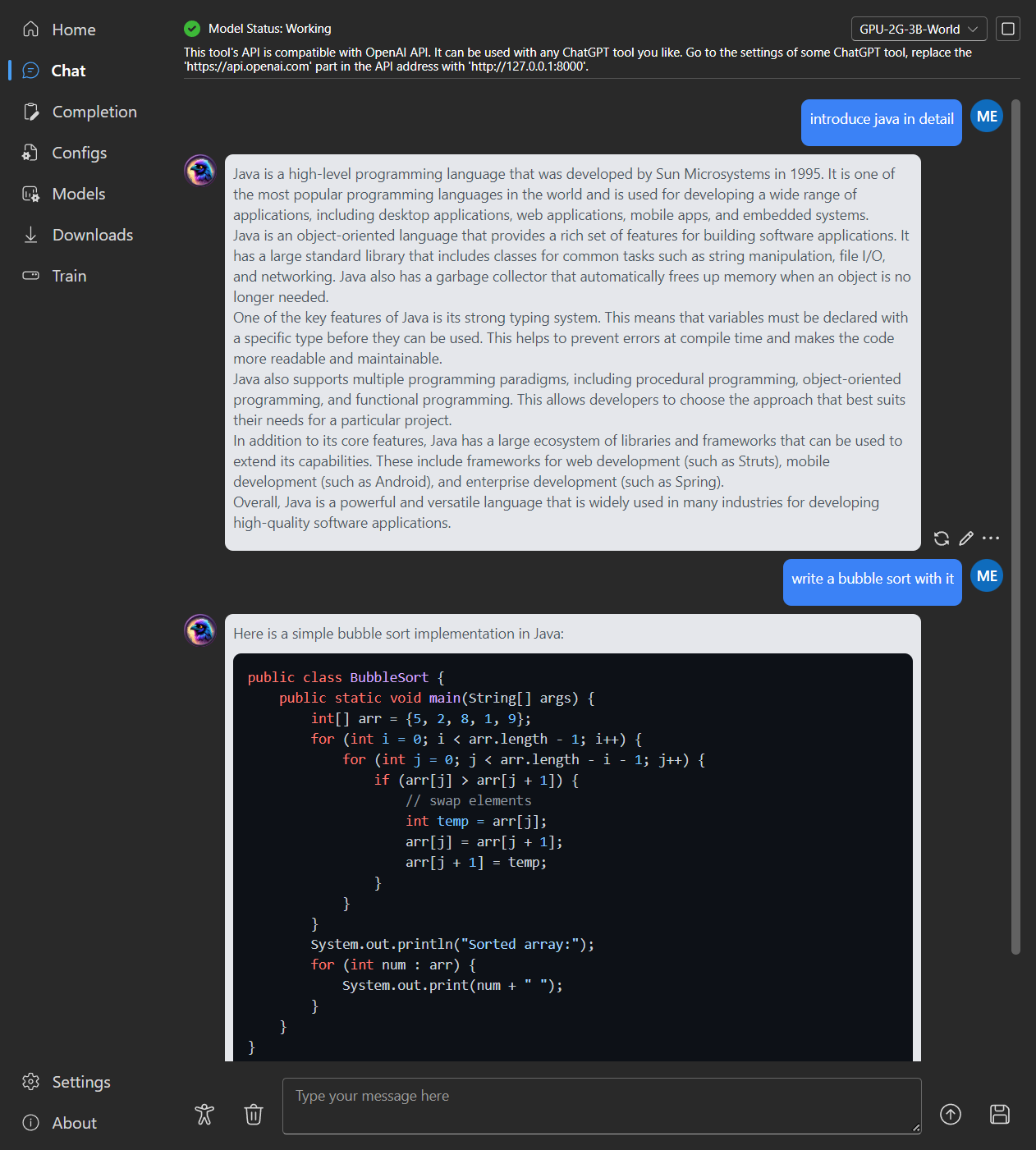

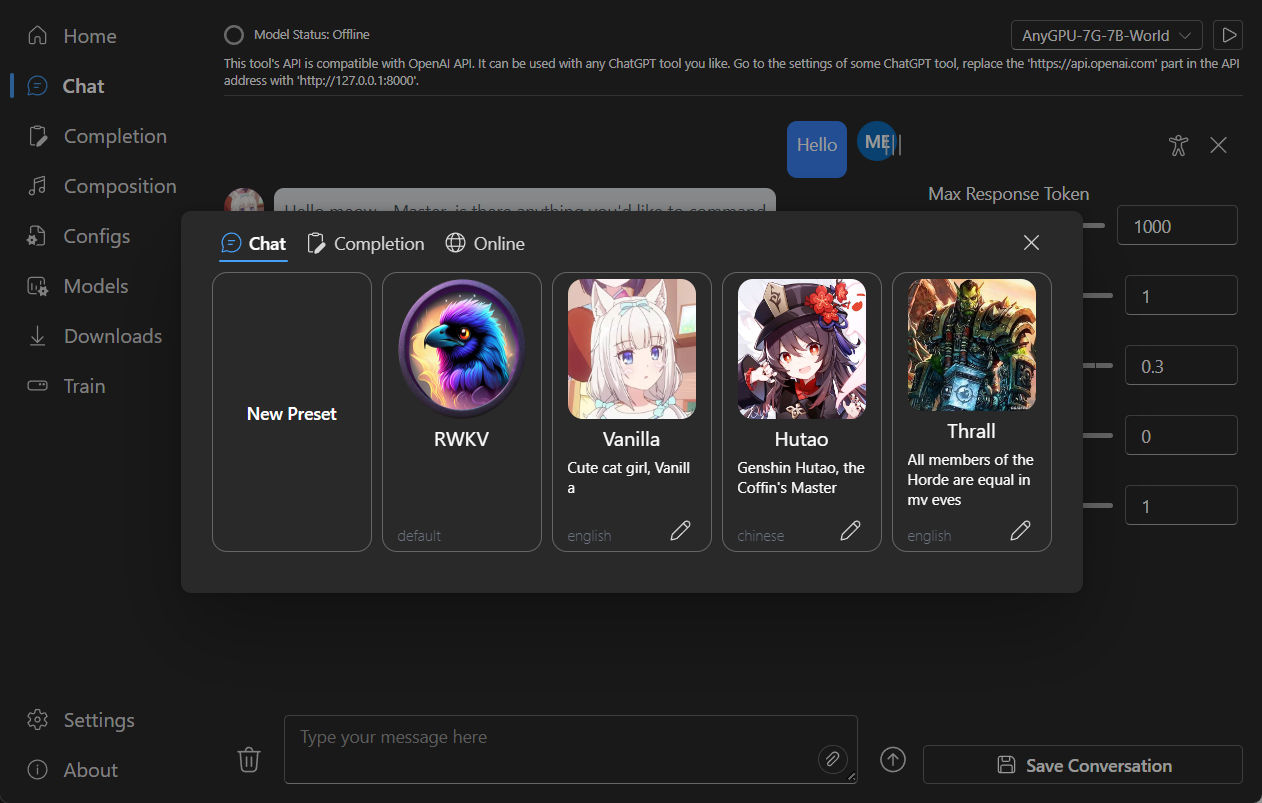

### Chat

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

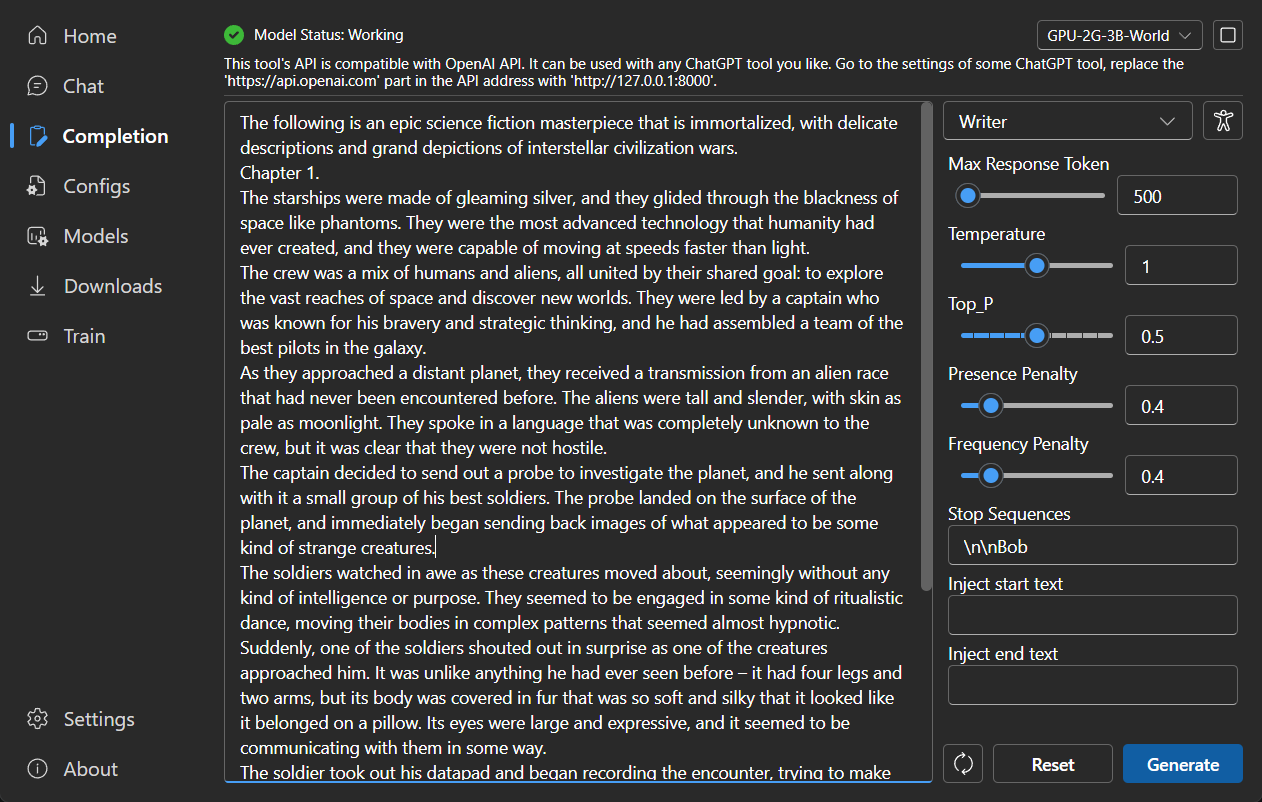

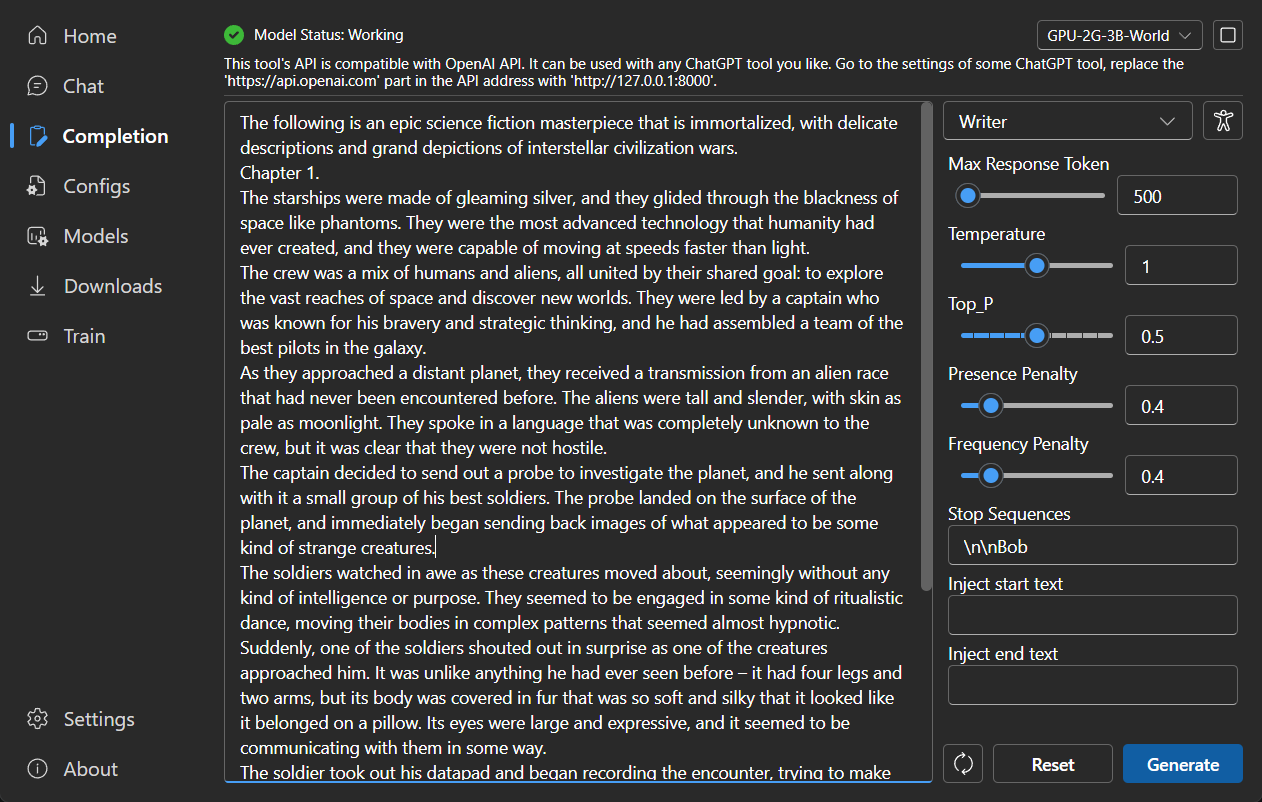

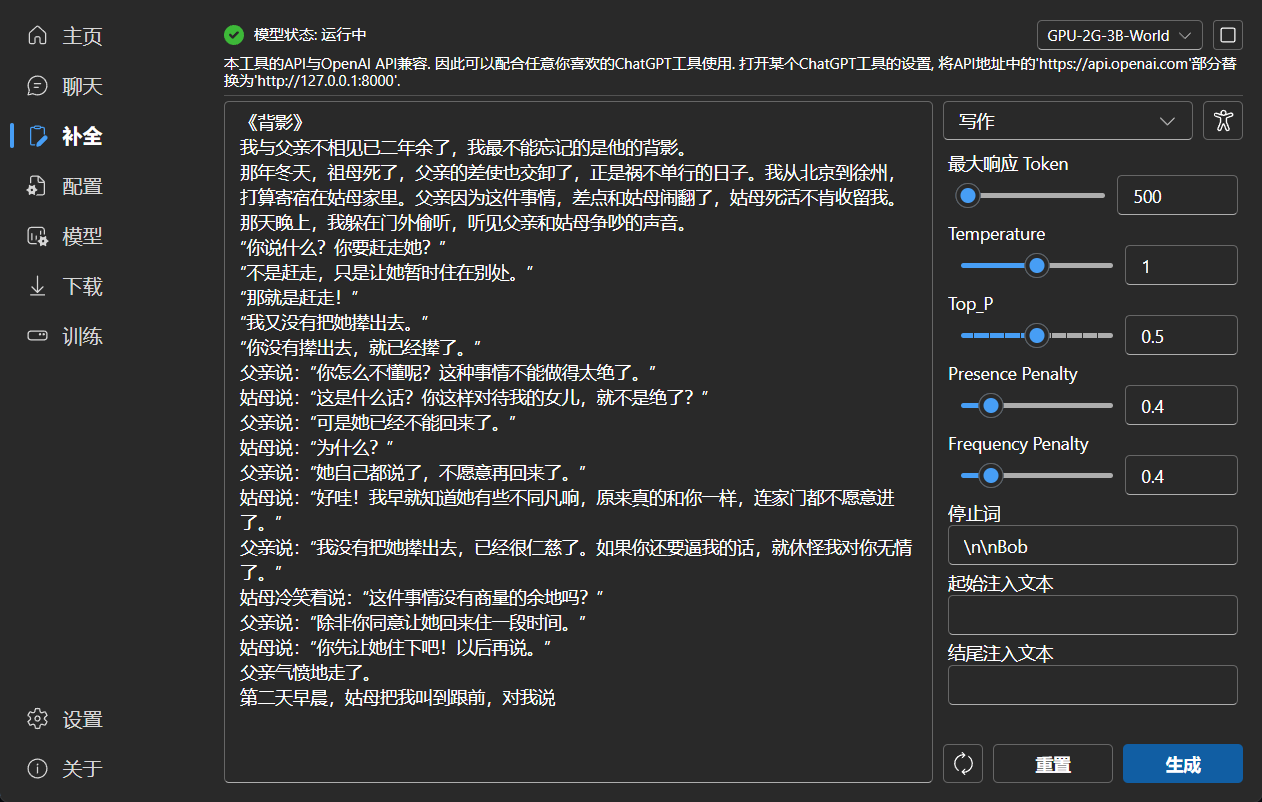

### Completion

|

||||

|

||||

|

||||

|

||||

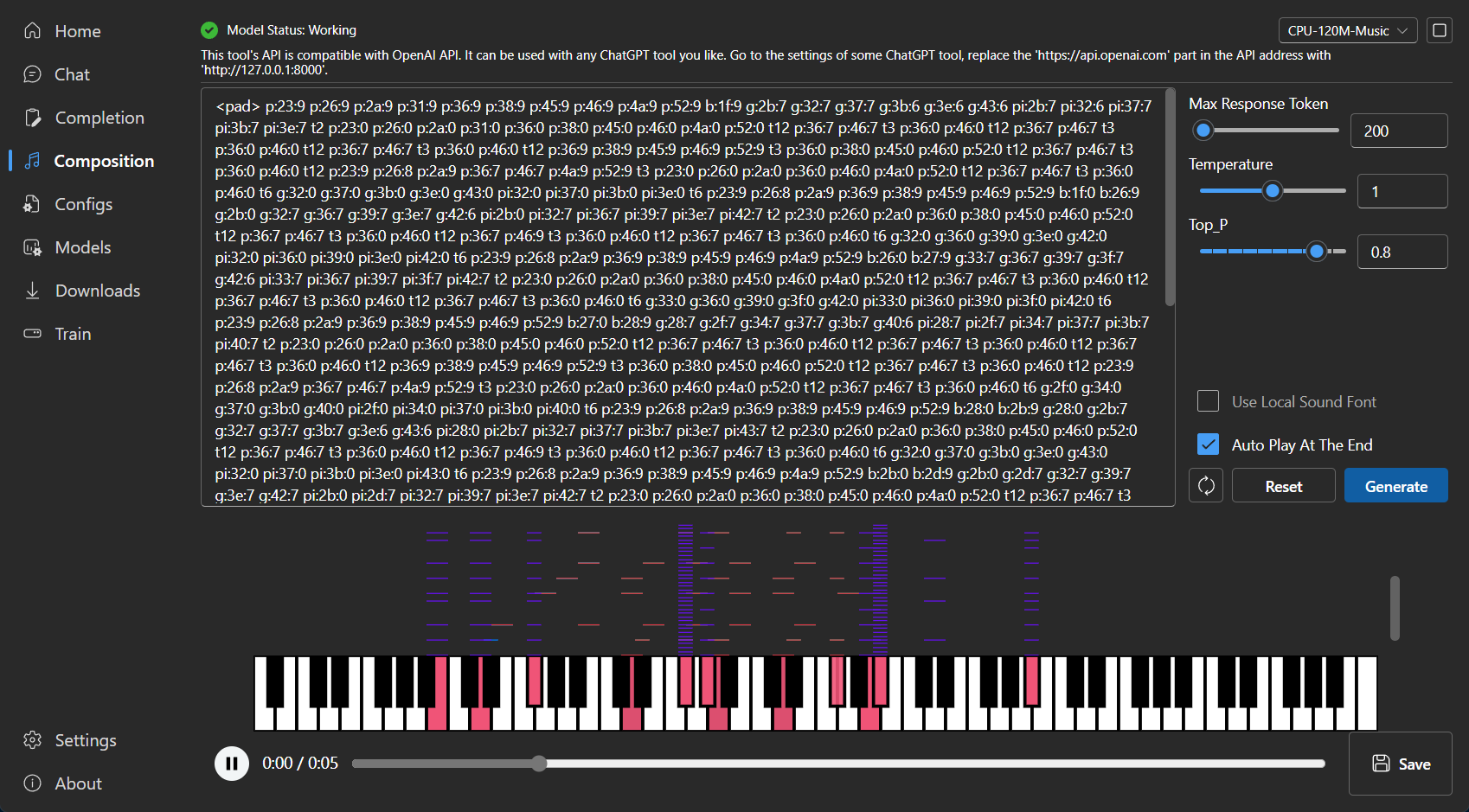

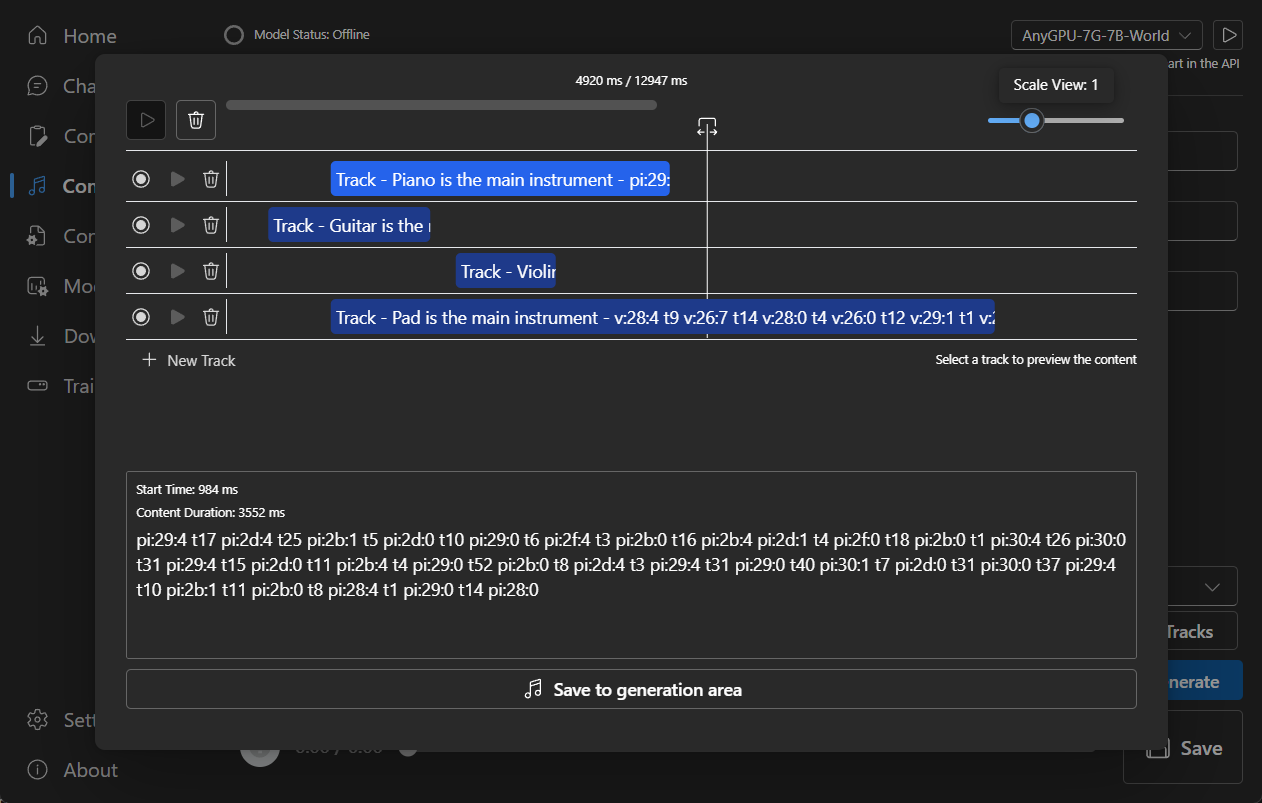

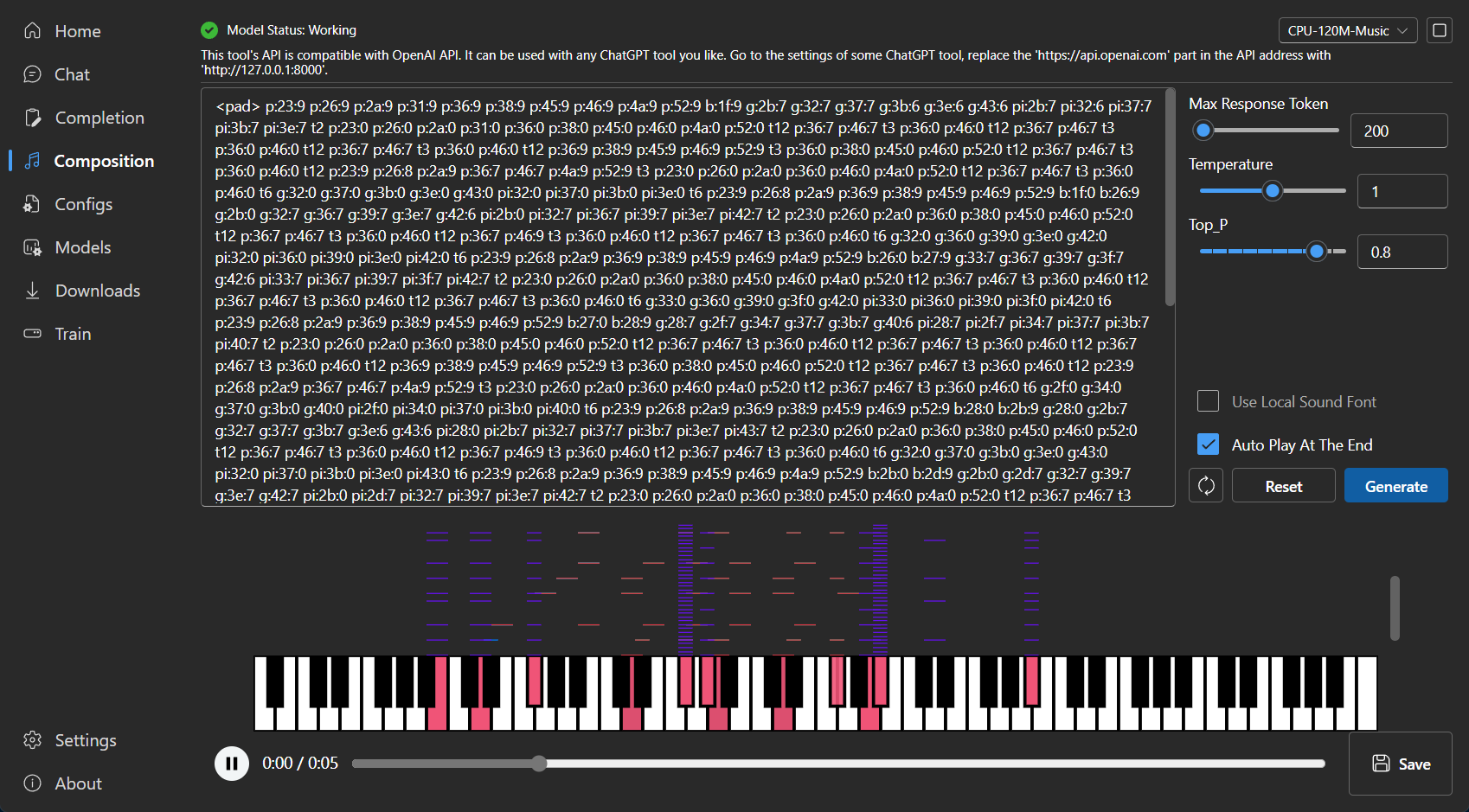

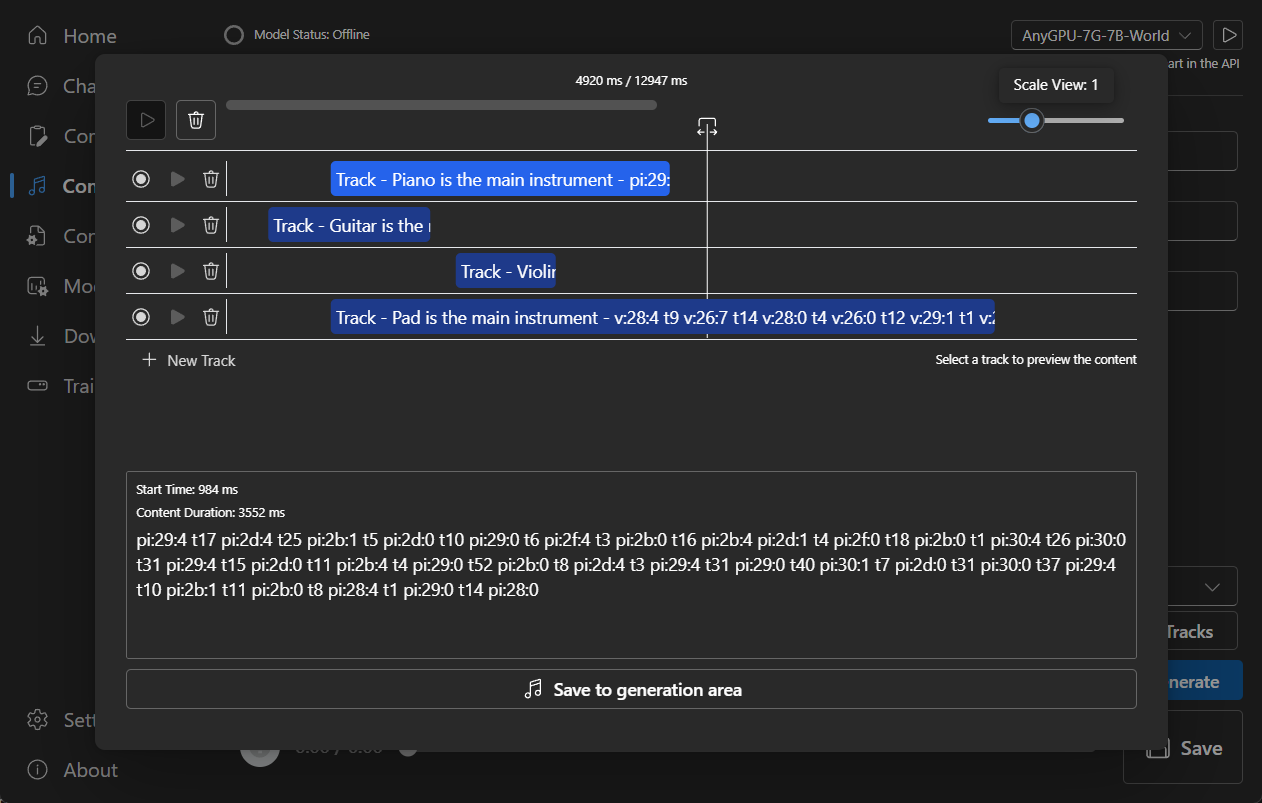

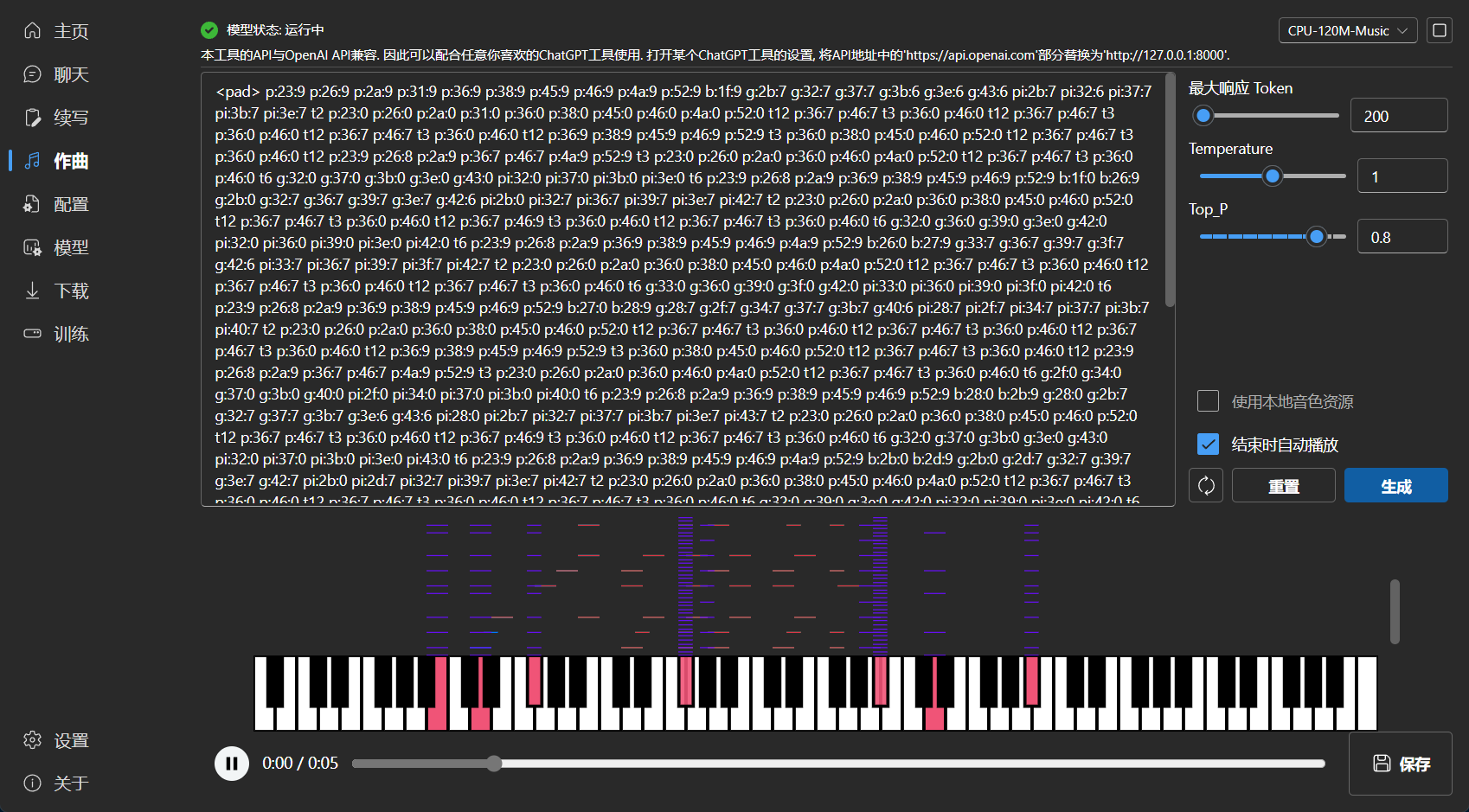

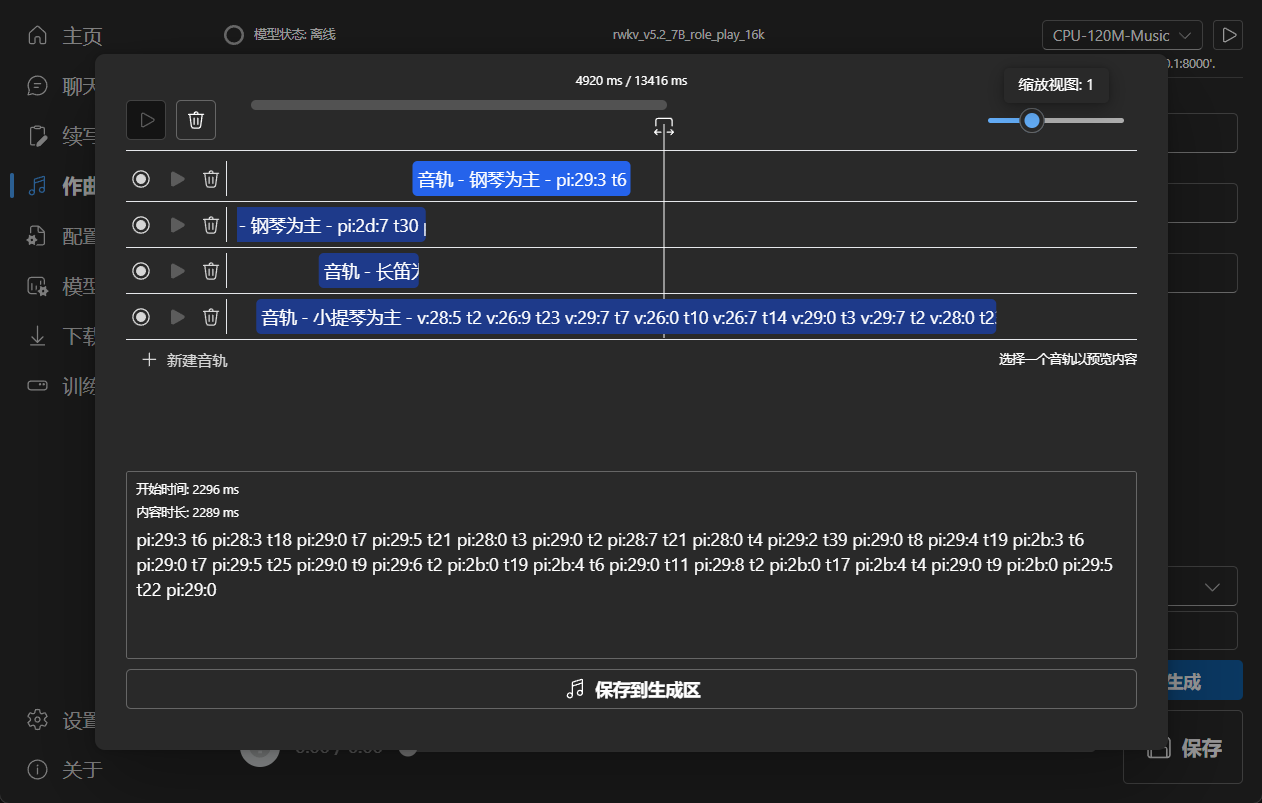

### Composition

|

||||

|

||||

Tip: You can download https://github.com/josStorer/sgm_plus and unzip it to the program's `assets/sound-font` directory

|

||||

to use it as an offline sound source. Please note that if you are compiling the program from source code, do not place

|

||||

it in the source code directory.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

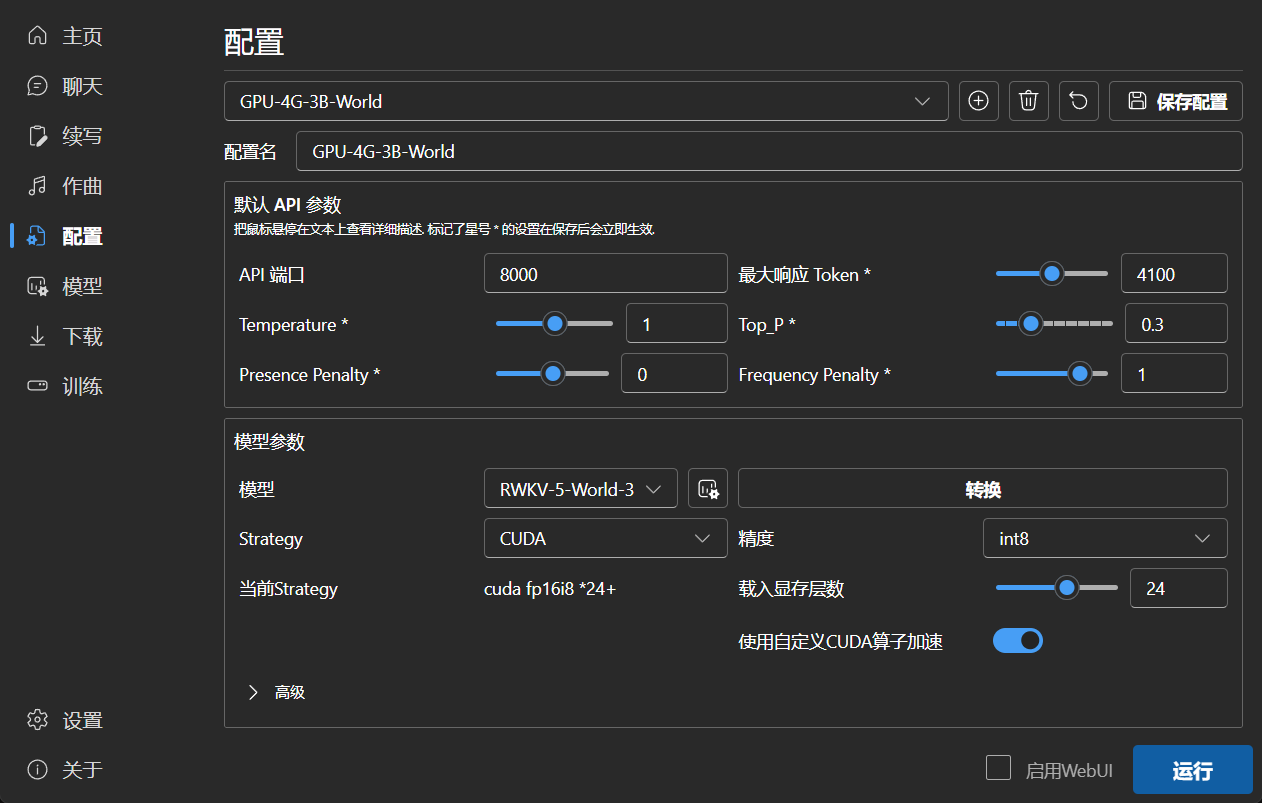

### Configuration

|

||||

|

||||

|

||||

|

||||

|

||||

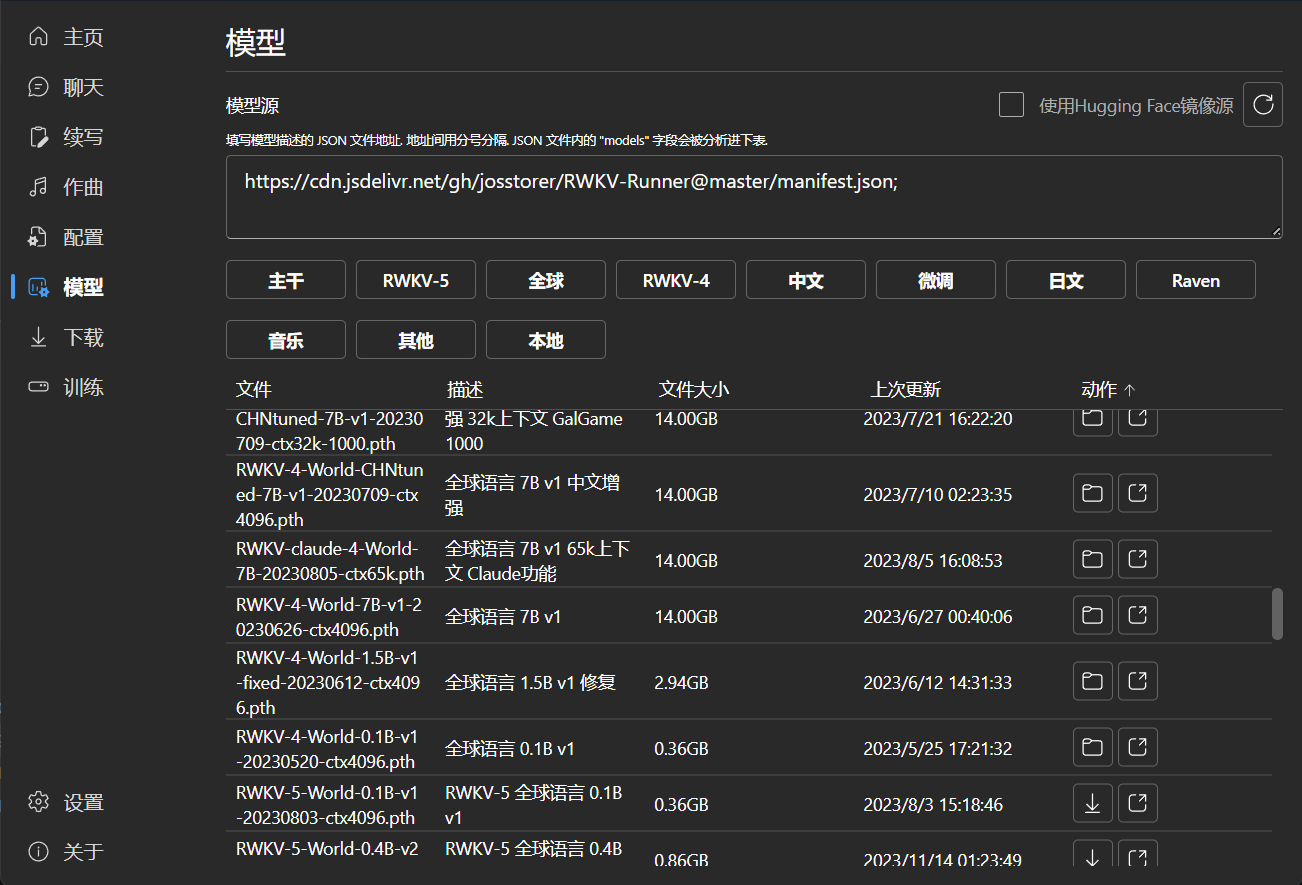

### Model Management

|

||||

|

||||

|

||||

|

||||

|

||||

### Download Management

|

||||

|

||||

|

||||

139

README_JA.md

@@ -21,7 +21,7 @@

|

||||

[![MacOS][MacOS-image]][MacOS-url]

|

||||

[![Linux][Linux-image]][Linux-url]

|

||||

|

||||

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [Preview](#Preview) | [Download][download-url] | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [Preview](#Preview) | [Download][download-url] | [Simple Deploy Example](#Simple-Deploy-Example) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples) | [MIDI Hardware Input](#MIDI-Input)

|

||||

|

||||

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

|

||||

|

||||

@@ -47,29 +47,71 @@

|

||||

|

||||

</div>

|

||||

|

||||

#### The default configs enable custom CUDA kernel acceleration. If you run into compatibility issues, go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`.

## Tips

#### If Windows Defender claims this is a virus, try downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) and letting it update automatically to the latest version, or add it to the trusted list.

- You can deploy [backend-python](./backend-python/) on a server and use this program as a client. Enter the server
  address in the `API URL` setting.

|

||||

|

||||

#### For different tasks, adjusting the API parameters can give better results. For example, for translation tasks, try setting Temperature to 1 and Top_P to 0.3.

- If you deploy this as a publicly exposed service, use an API gateway to limit the request size so that overly long
  prompts cannot tie up resources. Also cap the `max_tokens` of requests according to your actual
  situation: https://github.com/josStorer/RWKV-Runner/blob/master/backend-python/utils/rwkv.py#L567. The default is
  `le=102400`, which in extreme cases may let a single response consume a large amount of resources.

|

||||

|

||||

- The default configuration enables custom CUDA kernel acceleration. If you run into possible compatibility issues (garbled output), go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`, or try upgrading your GPU driver.

|

||||

|

||||

- If Windows Defender flags this program as a virus, try downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) and letting it update itself to the latest version, or add it to the trusted list (`Windows Security` -> `Virus & threat protection` -> `Manage settings` -> `Exclusions` -> `Add or remove exclusions` -> `Add an exclusion` -> `Folder` -> `RWKV-Runner`).

|

||||

|

||||

- For different tasks, adjusting the API parameters can give better results. For example, for translation tasks, try setting Temperature to 1 and Top_P to 0.3.
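
The request-size and max_tokens advice above could be enforced in front of the backend roughly as follows. This is a minimal sketch, not part of RWKV-Runner: the function name `clamp_max_tokens`, the 8 KiB body limit, and the 1024-token cap are illustrative assumptions. Only the `max_tokens` field and the `le=102400` default come from the tips above.

```python
import json

# Hypothetical limits for illustration; tune them for your deployment.
MAX_BODY_BYTES = 8 * 1024
MAX_TOKENS_CAP = 1024


def clamp_max_tokens(raw_body, max_body_bytes=MAX_BODY_BYTES, cap=MAX_TOKENS_CAP):
    """Reject oversized request bodies and cap the max_tokens field."""
    if len(raw_body) > max_body_bytes:
        raise ValueError("request body too large")
    body = json.loads(raw_body)
    requested = body.get("max_tokens")
    # Apply the cap when the client asks for more, or sends no usable value.
    if not isinstance(requested, int) or requested > cap:
        body["max_tokens"] = cap
    return json.dumps(body).encode("utf-8")
```

A gateway or reverse-proxy hook would call this on the raw request body before forwarding it to the backend, and return an error response when `ValueError` is raised.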

|

||||

|

||||

## Features

|

||||

|

||||

- RWKV model management and one-click startup
- Fully compatible with the OpenAI API, making every ChatGPT client an RWKV client. After starting the model,
- Front-end and back-end separation: if you do not want to use the client, you can also deploy the front-end service, the back-end inference service, or the back-end inference service with a WebUI separately.
[Simple Deploy Example](#Simple-Deploy-Example) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)
- Compatible with the OpenAI API, making every ChatGPT client an RWKV client. After starting the model,
open http://127.0.0.1:8000/docs to view more details.
- Automatic dependency installation, requiring only a lightweight executable program
- Configs for 2G to 32G of VRAM are included, so it works on most computers
- User-friendly chat and completion interfaces included
- Easy-to-understand and easy-to-operate parameter configuration
- Preset multi-level VRAM configs that work on most computers. On the Configs page, switch Strategy to WebGPU to also run on AMD, Intel, and other graphics cards
- User-friendly chat, completion, and composition interfaces included. Chat presets, attachment uploads, MIDI hardware input, and track editing are also supported.
[Preview](#Preview) | [MIDI Hardware Input](#MIDI-Input)
- Built-in WebUI option: start the web service with one click and share your hardware resources
- Easy-to-understand and easy-to-operate parameter configuration, along with various operation guidance prompts
- Built-in model conversion tool
- Built-in download management and remote model inspection
- Built-in LoRA fine-tuning
- Can also be used as an OpenAI ChatGPT and GPT Playground client
- Built-in LoRA fine-tuning (Windows only)
- Can also be used as an OpenAI ChatGPT and GPT Playground client (fill in the `API URL` and `API Key` on the Settings page)
- Multilingual localization
- Theme switching
- Automatic updates
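
Because the backend is described as OpenAI API compatible, a client request might be assembled as in the sketch below. The endpoint path `/v1/chat/completions` follows the OpenAI convention and is an assumption here, as are the helper name `build_chat_request` and the `max_tokens` value. The address `http://127.0.0.1:8000` and the translation settings (Temperature 1, Top_P 0.3) are taken from the README text. The authoritative schema is whatever the backend's `/docs` page shows.

```python
import json
import urllib.request

# Address taken from the README; adjust it if the backend runs elsewhere.
BASE_URL = "http://127.0.0.1:8000"


def build_chat_request(prompt, temperature=1.0, top_p=0.3):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,
        "top_p": top_p,
        "max_tokens": 200,  # illustrative value, not a project default
    }
    # Assumed path, following the OpenAI API convention.
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Once a model is loaded, the returned object can be passed to `urllib.request.urlopen` to send the request.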

|

||||

|

||||

## Simple Deploy Example

|

||||

|

||||

```bash
git clone https://github.com/josStorer/RWKV-Runner

# Then
cd RWKV-Runner
python ./backend-python/main.py # The backend inference service has been started, request /switch-model API to load the model, refer to the API documentation: http://127.0.0.1:8000/docs

# Or
cd RWKV-Runner/frontend
npm ci
npm run build # Compile the frontend
cd ..
python ./backend-python/webui_server.py # Start the frontend service separately
# Or
python ./backend-python/main.py --webui # Start the frontend and backend service at the same time

# Help Info
python ./backend-python/main.py -h
```
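
After the backend starts, the comment above says to request the `/switch-model` API to load a model. A minimal sketch of preparing that request is shown below. The JSON keys `model` and `strategy`, the helper name, and the example path are assumptions made for illustration; check the real schema at http://127.0.0.1:8000/docs before relying on it.

```python
import json
import urllib.request


def build_switch_model_request(model_path, strategy,
                               base_url="http://127.0.0.1:8000"):
    """Build (but do not send) a POST request for the /switch-model API."""
    # Assumption: the endpoint accepts a JSON object with "model" and
    # "strategy" keys; confirm the exact field names on the /docs page.
    payload = {"model": model_path, "strategy": strategy}
    return urllib.request.Request(
        f"{base_url}/switch-model",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

For example, `build_switch_model_request("models/example.pth", "cuda fp16")` prepares a request for a hypothetical model file; sending it with `urllib.request.urlopen` requires the backend to be running.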

|

||||

|

||||

## API Concurrency Stress Testing

|

||||

|

||||

```bash

|

||||

@@ -91,7 +133,11 @@ body.json:

|

||||

|

||||

## Embeddings API Example

|

||||

|

||||

If you are using LangChain, use `OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`.

|

||||

Note: v1.4.0 improved the quality of the embeddings API. The generated results are not compatible with previous versions. If you use the embeddings API to generate knowledge bases or similar data, please regenerate them.

|

||||

|

||||

If you are using LangChain, use `OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`.

|

||||

|

||||

```python

|

||||

import numpy as np

|

||||

@@ -128,35 +174,98 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")

|

||||

```
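
The final lines of the example above sort results by cosine similarity in descending order. Since the middle of that example is elided in this diff, the sketch below shows only the ranking step, using plain Python instead of NumPy so it runs without extra packages. The function names and the toy vectors are illustrative assumptions; real embeddings would come from the embeddings API.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_embedding, embeddings, values):
    """Return (score, value) pairs sorted from most to least similar."""
    scored = [(cosine_similarity(query_embedding, e), v)
              for e, v in zip(embeddings, values)]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)
```

The descending sort plays the same role as `np.argsort(embeddings_cos_sim)[::-1]` in the original example.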

|

||||

|

||||

## MIDI Input

|

||||

|

||||

Tip: You can download https://github.com/josStorer/sgm_plus and unzip it to the program's `assets/sound-font` directory to use it as an offline sound source. Please note that if you are compiling the program from source code, do not place it in the source code directory.

|

||||

|

||||

If you don't have a MIDI keyboard, you can use virtual MIDI input software such as `Virtual Midi Controller 3 LE`, together with [loopMIDI](https://www.tobias-erichsen.de/wp-content/uploads/2020/01/loopMIDISetup_1_0_16_27.zip), to use a regular computer keyboard as MIDI input.

|

||||

|

||||

### USB MIDI Connection

|

||||

|

||||

- USB MIDI devices are plug-and-play; you can select your input device on the Composition page.

|

||||

|

||||

### Mac MIDI Bluetooth Connection

|

||||

|

||||

- Mac users who want to use Bluetooth input should install [Bluetooth MIDI Connect](https://apps.apple.com/us/app/bluetooth-midi-connect/id1108321791), launch it, and click its tray icon to connect. Afterwards, you can select your input device on the Composition page.

|

||||

|

||||

### Windows MIDI Bluetooth Connection

|

||||

|

||||

- Windows appears to support Bluetooth MIDI only for UWP (Universal Windows Platform) apps, so establishing a connection takes several steps: create a local virtual MIDI device, then launch a UWP application that redirects Bluetooth MIDI input to the virtual MIDI device. This software then listens to the input from the virtual MIDI device.
- First, download [loopMIDI](https://www.tobias-erichsen.de/wp-content/uploads/2020/01/loopMIDISetup_1_0_16_27.zip) to create a virtual MIDI device. Click the plus sign in the bottom left corner to create the device.
- Next, download [Bluetooth LE Explorer](https://apps.microsoft.com/detail/9N0ZTKF1QD98) to discover and connect to Bluetooth MIDI devices. Click "Start" to search for devices, and then click "Pair" to bind the MIDI device.
- Finally, install [MIDIberry](https://apps.microsoft.com/detail/9N39720H2M05). This UWP application can redirect Bluetooth MIDI input to the virtual MIDI device. After launching it, double-click your actual Bluetooth MIDI device name in the input field, and in the output field, double-click the name of the virtual MIDI device created earlier.
- Now you can select the virtual MIDI device as the input on the Composition page. Bluetooth LE Explorer no longer needs to run, and you can also close the loopMIDI window, because it keeps running in the background. Just keep MIDIberry open.

|

||||

|

||||

## Related Repositories:

|

||||

|

||||

- RWKV-5-World: https://huggingface.co/BlinkDL/rwkv-5-world/tree/main

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

- MIDI-LLM-tokenizer: https://github.com/briansemrau/MIDI-LLM-tokenizer

|

||||

- ai00_rwkv_server: https://github.com/cgisky1980/ai00_rwkv_server

|

||||

- rwkv.cpp: https://github.com/saharNooby/rwkv.cpp

|

||||

- web-rwkv-py: https://github.com/cryscan/web-rwkv-py

|

||||

|

||||

## プレビュー

|

||||

## Preview

|

||||

|

||||

### Homepage

### Chat

### Completion

### Composition

Tip: You can download https://github.com/josStorer/sgm_plus and unzip it to the program's `assets/sound-font` directory to use it as an offline sound source. Please note that if you are compiling the program from source code, do not place it in the source code directory.

### Configuration

### Model Management

### Download Management

118

README_ZH.md

@@ -20,7 +20,7 @@ API-compatible interface, which means that every ChatGPT client is an RWKV client.

|

||||

[![MacOS][MacOS-image]][MacOS-url]

|

||||

[![Linux][Linux-image]][Linux-url]

|

||||

|

||||

[Video Demo](https://www.bilibili.com/video/BV1hM4y1v76R) | [Troubleshooting](https://www.bilibili.com/read/cv23921171) | [Preview](#Preview) | [Download][download-url] | [All-in-One Package](https://pan.baidu.com/s/1zdzZ_a0uM3gDqi6pXIZVAA?pwd=1111) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

|

||||

[Video Demo](https://www.bilibili.com/video/BV1hM4y1v76R) | [Troubleshooting](https://www.bilibili.com/read/cv23921171) | [Preview](#Preview) | [Download][download-url] | [All-in-One Package](https://pan.baidu.com/s/1zdzZ_a0uM3gDqi6pXIZVAA?pwd=1111) | [Simple Deploy Example](#Simple-Deploy-Example) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples) | [MIDI Hardware Input](#MIDI-Input)

|

||||

|

||||

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

|

||||

|

||||

@@ -46,28 +46,65 @@ API-compatible interface, which means that every ChatGPT client is an RWKV client.

|

||||

|

||||

</div>

|

||||

|

||||

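#### The preset configs enable custom CUDA kernel acceleration, which is faster and uses less VRAM. If you run into possible compatibility issues, go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`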

|

||||

## Tips

|

||||

|

||||

#### If Windows Defender says this is a virus, try downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) and letting it update itself to the latest version, or add it to the trusted list

|

||||

- You can deploy [backend-python](./backend-python/) on a server and use this program as a client only. Enter your server address in the `API URL` field in Settings

|

||||

|

||||

#### For different tasks, adjusting the API parameters can give better results. For example, for translation tasks, try setting Temperature to 1 and Top_P to 0.3

|

||||

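- If you are deploying a public-facing service, use an API gateway to limit request size so that overly long prompts do not tie up resources. In addition, restrict the upper limit of a request's max_tokens to suit your situation: https://github.com/josStorer/RWKV-Runner/blob/master/backend-python/utils/rwkv.py#L567. The default is le=102400, which in extreme cases lets a single response consume a large amount of resources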

|

||||

|

||||

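- The preset configs enable custom CUDA kernel acceleration, which is faster and uses less VRAM. If you run into possible compatibility issues (garbled output), go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`, or update your GPU driver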

|

||||

|

||||

- If Windows Defender says this is a virus, try downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) and letting it update itself to the latest version, or add it to the trusted list (`Windows Security` -> `Virus & threat protection` -> `Manage settings` -> `Exclusions` -> `Add or remove exclusions` -> `Add an exclusion` -> `Folder` -> `RWKV-Runner`)

|

||||

|

||||

- For different tasks, adjusting the API parameters can give better results. For example, for translation tasks, try setting Temperature to 1 and Top_P to 0.3

|

||||

|

||||

## Features

|

||||

|

||||

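- RWKV model management and one-click startup
- Fully compatible with the OpenAI API: every ChatGPT client is an RWKV client. After starting the model, open http://127.0.0.1:8000/docs to view the details
- Front-end and back-end separation: if you do not want to use the client, you can also deploy the front-end service, the back-end inference service, or the back-end inference service with a WebUI separately.
[Simple Deploy Example](#Simple-Deploy-Example) | [Server Deploy Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)
- Compatible with the OpenAI API: every ChatGPT client is an RWKV client. After starting the model, open http://127.0.0.1:8000/docs to view the API documentation
- Fully automatic dependency installation; you only need a lightweight executable
- Preset configs for 2G to 32G of VRAM that work well on almost any computer
- Built-in user-friendly chat and completion pages
- Easy-to-understand and easy-to-operate parameter configuration
- Preset multi-level VRAM configs that work well on almost any computer. On the Configs page, switch Strategy to WebGPU to also run on AMD, Intel, and other graphics cards
- Built-in user-friendly chat, completion, and composition pages. Chat presets, attachment uploads, MIDI hardware input, and track editing are supported.
[Preview](#Preview) | [MIDI Hardware Input](#MIDI-Input)
- Built-in WebUI option: start the web service with one click and share your hardware resources
- Easy-to-understand and easy-to-operate parameter configuration, along with various operation guidance prompts
- Built-in model conversion tool
- Built-in download management and remote model inspection
- Built-in one-click LoRA fine-tuning
- Can also be used as an OpenAI ChatGPT and GPT Playground client
- Built-in one-click LoRA fine-tuning (Windows only)
- Can also be used as an OpenAI ChatGPT and GPT Playground client (fill in the API URL and API Key in Settings)
- Multilingual localization
- Theme switching
- Automatic updates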

|

||||

|

||||

## Simple Deploy Example

|

||||

|

||||

```bash
git clone https://github.com/josStorer/RWKV-Runner

# Then
cd RWKV-Runner
python ./backend-python/main.py # The backend inference service is now running; call /switch-model to load a model. API documentation: http://127.0.0.1:8000/docs

# Or
cd RWKV-Runner/frontend
npm ci
npm run build # Compile the frontend
cd ..
python ./backend-python/webui_server.py # Start the frontend service separately
# Or
python ./backend-python/main.py --webui # Start the frontend and backend services at the same time

# Help options
python ./backend-python/main.py -h
```

|

||||

|

||||

## API Concurrency Stress Testing

|

||||

|

||||

```bash

|

||||

@@ -89,6 +126,8 @@ body.json:

|

||||

|

||||

## Embeddings API Example

|

||||

|

||||

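Note: version 1.4.0 improved the quality of the embeddings API. The generated results are not compatible with previous versions. If you use this API to generate knowledge bases or similar data, please regenerate them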

|

||||

|

||||

If you are using LangChain, just use `OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`

|

||||

|

||||

```python

|

||||

@@ -126,35 +165,88 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")

|

||||

```

|

||||

|

||||

## MIDI Input

|

||||

|

||||

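Tip: You can download https://github.com/josStorer/sgm_plus and unzip it to the program's `assets/sound-font` directory to use it as an offline sound source. Please note that if you are compiling the program from source code, do not place it in the source code directory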

|

||||

|

||||

If you don't have a MIDI keyboard, you can use virtual MIDI input software such as `Virtual Midi Controller 3 LE`, together with [loopMIDI](https://www.tobias-erichsen.de/wp-content/uploads/2020/01/loopMIDISetup_1_0_16_27.zip), to use a regular computer keyboard as MIDI input

|

||||

|

||||

### USB MIDI Connection

- USB MIDI devices are plug-and-play; you can select your input device on the Composition page

|

||||

|

||||

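### Mac MIDI Bluetooth Connection

- Mac users who want to use Bluetooth input should install [Bluetooth MIDI Connect](https://apps.apple.com/us/app/bluetooth-midi-connect/id1108321791), launch it, and click its tray icon to connect. Afterwards, you can select your input device on the Composition page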

|

||||

|

||||

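### Windows MIDI Bluetooth Connection

- Windows appears to support Bluetooth MIDI only for UWP apps, so connecting takes several steps: create a local virtual MIDI device, then launch a UWP application that redirects Bluetooth MIDI input to the virtual MIDI device. This software then listens to the input from the virtual MIDI device
- First, download [loopMIDI](https://www.tobias-erichsen.de/wp-content/uploads/2020/01/loopMIDISetup_1_0_16_27.zip) to create a virtual MIDI device. Click the plus sign in the bottom left corner to create the device
- Next, download [Bluetooth LE Explorer](https://apps.microsoft.com/detail/9N0ZTKF1QD98) to discover and connect to Bluetooth MIDI devices. Click Start to search for devices, and then click Pair to bind the MIDI device
- Finally, install [MIDIberry](https://apps.microsoft.com/detail/9N39720H2M05). This UWP application can redirect Bluetooth MIDI input to the virtual MIDI device. After launching it, double-click your actual Bluetooth MIDI device name in the input field, and in the output field, double-click the name of the virtual MIDI device created earlier
- Now you can select the virtual MIDI device as the input on the Composition page. Bluetooth LE Explorer no longer needs to run, and you can also close the loopMIDI window, because it keeps running in the background. Just keep MIDIberry open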

|

||||

|

||||

## Related Repositories:

|

||||

|

||||

- RWKV-5-World: https://huggingface.co/BlinkDL/rwkv-5-world/tree/main

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

- MIDI-LLM-tokenizer: https://github.com/briansemrau/MIDI-LLM-tokenizer

|

||||

- ai00_rwkv_server: https://github.com/cgisky1980/ai00_rwkv_server

|

||||

- rwkv.cpp: https://github.com/saharNooby/rwkv.cpp

|

||||

- web-rwkv-py: https://github.com/cryscan/web-rwkv-py

|

||||

|

||||

## Preview

|

||||

|

||||

### Homepage

### Chat

### Completion

### Composition

Tip: You can download https://github.com/josStorer/sgm_plus and unzip it to the program's `assets/sound-font` directory to use it as an offline sound source. Please note that if you are compiling the program from source code, do not place it in the source code directory

### Configuration

### Model Management

### Download Management

BIN

assets/default_sound_font.sf2

Normal file

Binary file not shown.

116

assets/sound-font/sound_fetch.py

Normal file

@@ -0,0 +1,116 @@

|

||||

# https://github.com/magenta/magenta-js/issues/164

import json
import os
import urllib.request


def get_pitches_array(min_pitch, max_pitch):
    return list(range(min_pitch, max_pitch + 1))


base_url = 'https://storage.googleapis.com/magentadata/js/soundfonts'
soundfont_path = 'sgm_plus'
soundfont_json_url = f"{base_url}/{soundfont_path}/soundfont.json"

# Download soundfont.json
soundfont_json = ""

if not os.path.exists('soundfont.json'):
    try:
        with urllib.request.urlopen(soundfont_json_url) as response:
            soundfont_json = response.read()

        # Save soundfont.json
        with open('soundfont.json', 'wb') as file:
            file.write(soundfont_json)

    except:
        print("Failed to download soundfont.json")

else:
    # If file exists, get it from the file system
    with open('soundfont.json', 'rb') as file:
        soundfont_json = file.read()

# Parse soundfont.json
soundfont_data = json.loads(soundfont_json)

if soundfont_data is not None:

    # Iterate over each instrument
    for instrument_id, instrument_name in soundfont_data['instruments'].items():

        if not os.path.isdir(instrument_name):

            # Create instrument directory if it doesn't exist
            os.makedirs(instrument_name)

        instrument_json = ""

        instrument_path = f"{soundfont_path}/{instrument_name}"

        if not os.path.exists(f"{instrument_name}/instrument.json"):

            # Download instrument.json
            instrument_json_url = f"{base_url}/{instrument_path}/instrument.json"

            try:
                with urllib.request.urlopen(instrument_json_url) as response:
                    instrument_json = response.read()

                # Save instrument.json
                with open(f"{instrument_name}/instrument.json", 'wb') as file:
                    file.write(instrument_json)

            except:
                print(f"Failed to download {instrument_name}/instrument.json")

        else:

            # If file exists, get it from the file system
            with open(f"{instrument_name}/instrument.json", 'rb') as file:
                instrument_json = file.read()

        # Parse instrument.json
        instrument_data = json.loads(instrument_json)

        if instrument_data is not None:
            # Iterate over each pitch and velocity
            for velocity in instrument_data['velocities']:

                pitches = get_pitches_array(instrument_data['minPitch'], instrument_data['maxPitch'])

                for pitch in pitches:

                    # Create the file name
                    file_name = f'p{pitch}_v{velocity}.mp3'

                    # Check if the file already exists
                    if os.path.exists(f"{instrument_name}/{file_name}"):
                        pass
                        #print(f"Skipping {instrument_name}/{file_name} - File already exists")

                    else:

                        # Download pitch/velocity file
                        file_url = f"{base_url}/{instrument_path}/{file_name}"

                        try:
                            with urllib.request.urlopen(file_url) as response:
                                file_contents = response.read()

                            # Save pitch/velocity file
                            with open(f"{instrument_name}/{file_name}", 'wb') as file:
                                file.write(file_contents)

                            print(f"Downloaded {instrument_name}/{file_name}")

                        except:
                            print(f"Failed to download {instrument_name}/{file_name}")

        else:
            print(f"Failed to parse instrument.json for {instrument_name}")

else:
    print('Failed to parse soundfont.json')
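
The script above derives one sample file per pitch and velocity from each `instrument.json`. As a rough illustration of that naming scheme, the sketch below lists the expected file names without touching the network. The helper name `expected_sample_files` and the sample values are hypothetical; the `minPitch`, `maxPitch`, and `velocities` keys and the `p{pitch}_v{velocity}.mp3` pattern come from the script.

```python
def expected_sample_files(instrument_data):
    """List the sample file names implied by an instrument.json dictionary."""
    # The pitch range is inclusive, matching get_pitches_array in the script.
    pitches = range(instrument_data["minPitch"], instrument_data["maxPitch"] + 1)
    return [f"p{pitch}_v{velocity}.mp3"
            for velocity in instrument_data["velocities"]
            for pitch in pitches]
```

Comparing this list with the contents of an instrument directory is one way to check whether a download finished completely.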

|

||||

134

assets/sound-font/soundfont.json

Normal file

@@ -0,0 +1,134 @@

|

||||

{
  "name": "sgm_plus",
  "instruments": {
    "0": "acoustic_grand_piano",
    "1": "bright_acoustic_piano",
    "2": "electric_grand_piano",
    "3": "honkytonk_piano",
    "4": "electric_piano_1",
    "5": "electric_piano_2",
    "6": "harpsichord",
    "7": "clavichord",
    "8": "celesta",
    "9": "glockenspiel",
    "10": "music_box",
    "11": "vibraphone",
    "12": "marimba",
    "13": "xylophone",
    "14": "tubular_bells",
    "15": "dulcimer",
    "16": "drawbar_organ",
    "17": "percussive_organ",
    "18": "rock_organ",
    "19": "church_organ",
    "20": "reed_organ",
    "21": "accordion",
    "22": "harmonica",
    "23": "tango_accordion",
    "24": "acoustic_guitar_nylon",
    "25": "acoustic_guitar_steel",
    "26": "electric_guitar_jazz",
    "27": "electric_guitar_clean",
    "28": "electric_guitar_muted",
    "29": "overdriven_guitar",
    "30": "distortion_guitar",
    "31": "guitar_harmonics",
    "32": "acoustic_bass",
    "33": "electric_bass_finger",
    "34": "electric_bass_pick",
    "35": "fretless_bass",
    "36": "slap_bass_1",
    "37": "slap_bass_2",
    "38": "synth_bass_1",
    "39": "synth_bass_2",
    "40": "violin",
    "41": "viola",
    "42": "cello",
    "43": "contrabass",
    "44": "tremolo_strings",
    "45": "pizzicato_strings",
    "46": "orchestral_harp",
    "47": "timpani",
    "48": "string_ensemble_1",
    "49": "string_ensemble_2",
    "50": "synthstrings_1",
    "51": "synthstrings_2",
    "52": "choir_aahs",
    "53": "voice_oohs",
    "54": "synth_voice",
    "55": "orchestra_hit",
    "56": "trumpet",
    "57": "trombone",
    "58": "tuba",
    "59": "muted_trumpet",
    "60": "french_horn",
    "61": "brass_section",
    "62": "synthbrass_1",
    "63": "synthbrass_2",
    "64": "soprano_sax",
    "65": "alto_sax",
    "66": "tenor_sax",
    "67": "baritone_sax",
    "68": "oboe",
    "69": "english_horn",
    "70": "bassoon",
    "71": "clarinet",
    "72": "piccolo",
    "73": "flute",
    "74": "recorder",
    "75": "pan_flute",
    "76": "blown_bottle",
    "77": "shakuhachi",
    "78": "whistle",
    "79": "ocarina",
    "80": "lead_1_square",
    "81": "lead_2_sawtooth",
    "82": "lead_3_calliope",
    "83": "lead_4_chiff",
    "84": "lead_5_charang",
    "85": "lead_6_voice",
    "86": "lead_7_fifths",
    "87": "lead_8_bass_lead",
    "88": "pad_1_new_age",
    "89": "pad_2_warm",
    "90": "pad_3_polysynth",
    "91": "pad_4_choir",
    "92": "pad_5_bowed",
    "93": "pad_6_metallic",
    "94": "pad_7_halo",
    "95": "pad_8_sweep",
    "96": "fx_1_rain",
    "97": "fx_2_soundtrack",
    "98": "fx_3_crystal",
    "99": "fx_4_atmosphere",
    "100": "fx_5_brightness",
    "101": "fx_6_goblins",
    "102": "fx_7_echoes",
    "103": "fx_8_scifi",
    "104": "sitar",
    "105": "banjo",
    "106": "shamisen",
    "107": "koto",
    "108": "kalimba",
    "109": "bag_pipe",
    "110": "fiddle",
    "111": "shanai",
    "112": "tinkle_bell",
    "113": "agogo",
    "114": "steel_drums",
    "115": "woodblock",
    "116": "taiko_drum",
    "117": "melodic_tom",
    "118": "synth_drum",
    "119": "reverse_cymbal",
    "120": "guitar_fret_noise",
    "121": "breath_noise",
    "122": "seashore",
    "123": "bird_tweet",
    "124": "telephone_ring",
    "125": "helicopter",
    "126": "applause",
    "127": "gunshot",
    "drums": "percussion"
  }
}
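
The `instruments` object above maps string keys to instrument directory names. A minimal sketch of looking up a directory from this structure follows. The helper name `instrument_directory` is hypothetical, and treating the numeric keys as MIDI program numbers and `drums` as the percussion entry is an assumption based on the key and value names.

```python
import json


def instrument_directory(soundfont, program, is_drum=False):
    """Return the directory name for a program number, or for the drum kit."""
    # Keys in the JSON file are strings, so the integer program is converted.
    key = "drums" if is_drum else str(program)
    return soundfont["instruments"][key]


# A small excerpt of the file above, inlined so the example is self-contained.
EXCERPT = json.loads(
    '{"name": "sgm_plus", "instruments": '
    '{"0": "acoustic_grand_piano", "40": "violin", "drums": "percussion"}}'
)
```

With the excerpt loaded, `instrument_directory(EXCERPT, 40)` looks up the entry stored under the key `"40"`.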

|

||||

469

assets/soundfont_builder.rb

Normal file

@@ -0,0 +1,469 @@

|

||||

#!/usr/bin/env ruby
#
# JavaScript Soundfont Builder for MIDI.js
# Author: 0xFE <mohit@muthanna.com>
# edited by Valentijn Nieman <valentijnnieman@gmail.com>
#
# Requires:
#
#   FluidSynth
#   Lame
#   Ruby Gems: midilib parallel
#
#   $ brew install fluidsynth lame (on OSX)
#   $ gem install midilib parallel
#
# You'll need to download a GM soundbank to generate audio.
#
# Usage:
#
# 1) Install the above dependencies.
# 2) Edit BUILD_DIR, SOUNDFONT, and INSTRUMENTS as required.
# 3) Run without any argument.

require 'base64'
require 'digest/sha1'
require 'etc'
require 'fileutils'
require 'midilib'
require 'parallel'
require 'zlib'
require 'json'

include FileUtils

BUILD_DIR = "./sound-font" # Output path
SOUNDFONT = "./default_sound_font.sf2" # Soundfont file path

# This script will generate MIDI.js-compatible instrument JS files for
# all instruments in the below array. Add or remove as necessary.
INSTRUMENTS = [
  0,
  1,
  2,
  3,
  4,
  5,
  6,
  7,
  8,
  9,
  10,
  11,
  12,
  13,
  14,

|

||||