Compare commits

17 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | bfbf43f45c | | |
| | cc8b22f0fb | | |
| | a2bbbabee2 | | |
| | b52873cb37 | | |
| | 00d82154dc | | |
| | 440b70eb15 | | |
| | 2a55c8256d | | |
| | 2ddcd17d23 | | |
| | 14461930ab | | |
| | 79eff01b33 | | |
| | b19ea95f88 | | |
| | 4f92366ea5 | | |
| | 235b587789 | | |
| | c6a4a71cf1 | | |
| | 150bb089cf | | |
| | 5c8a637cf5 | | |
| | 6c7b40a9c1 | | |

15

.github/workflows/pre-release.yml

vendored


@@ -18,11 +18,11 @@ jobs:

|

||||

ref: master

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

go-version: "1.20.5"

|

||||

- uses: actions/setup-python@v5

|

||||

id: cp310

|

||||

with:

|

||||

python-version: '3.10'

|

||||

python-version: "3.10"

|

||||

- uses: crazy-max/ghaction-chocolatey@v3

|

||||

with:

|

||||

args: install upx

|

||||

@@ -39,7 +39,7 @@ jobs:

|

||||

Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../include" -Destination "py310/include" -Recurse

|

||||

Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../libs" -Destination "py310/libs" -Recurse

|

||||

./py310/python -m pip install cyac==1.9

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@v2.8.0

|

||||

del ./backend-python/rwkv_pip/cpp/librwkv.dylib

|

||||

del ./backend-python/rwkv_pip/cpp/librwkv.so

|

||||

(Get-Content -Path ./backend-golang/app.go) -replace "//go:custom_build windows ", "" | Set-Content -Path ./backend-golang/app.go

|

||||

@@ -60,14 +60,14 @@ jobs:

|

||||

ref: master

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

go-version: "1.20.5"

|

||||

- run: |

|

||||

wget https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_linux_x86_64 -O ./backend-rust/webgpu_server

|

||||

wget https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_linux_x86_64 -O ./backend-rust/web-rwkv-converter

|

||||

sudo apt-get update

|

||||

sudo apt-get install upx

|

||||

sudo apt-get install build-essential libgtk-3-dev libwebkit2gtk-4.0-dev libasound2-dev

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@v2.8.0

|

||||

rm ./backend-python/rwkv_pip/wkv_cuda.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv5.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv6.pyd

|

||||

@@ -92,11 +92,11 @@ jobs:

|

||||

ref: master

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

go-version: "1.20.5"

|

||||

- run: |

|

||||

wget https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_darwin_aarch64 -O ./backend-rust/webgpu_server

|

||||

wget https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_darwin_aarch64 -O ./backend-rust/web-rwkv-converter

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@v2.8.0

|

||||

rm ./backend-python/rwkv_pip/wkv_cuda.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv5.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv6.pyd

|

||||

@@ -114,4 +114,3 @@ jobs:

|

||||

with:

|

||||

name: RWKV-Runner_macos_universal.zip

|

||||

path: build/bin/RWKV-Runner_macos_universal.zip

|

||||

|

||||

|

||||

18

.github/workflows/release.yml

vendored


@@ -18,7 +18,7 @@ jobs:

|

||||

with:

|

||||

ref: master

|

||||

|

||||

- uses: jossef/action-set-json-field@v2.1

|

||||

- uses: jossef/action-set-json-field@v2.2

|

||||

with:

|

||||

file: manifest.json

|

||||

field: version

|

||||

@@ -43,11 +43,11 @@ jobs:

|

||||

ref: master

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

go-version: "1.20.5"

|

||||

- uses: actions/setup-python@v5

|

||||

id: cp310

|

||||

with:

|

||||

python-version: '3.10'

|

||||

python-version: "3.10"

|

||||

- uses: crazy-max/ghaction-chocolatey@v3

|

||||

with:

|

||||

args: install upx

|

||||

@@ -64,7 +64,7 @@ jobs:

|

||||

Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../include" -Destination "py310/include" -Recurse

|

||||

Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../libs" -Destination "py310/libs" -Recurse

|

||||

./py310/python -m pip install cyac==1.9

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@v2.8.0

|

||||

del ./backend-python/rwkv_pip/cpp/librwkv.dylib

|

||||

del ./backend-python/rwkv_pip/cpp/librwkv.so

|

||||

(Get-Content -Path ./backend-golang/app.go) -replace "//go:custom_build windows ", "" | Set-Content -Path ./backend-golang/app.go

|

||||

@@ -83,14 +83,14 @@ jobs:

|

||||

ref: master

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

go-version: "1.20.5"

|

||||

- run: |

|

||||

wget https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_linux_x86_64 -O ./backend-rust/webgpu_server

|

||||

wget https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_linux_x86_64 -O ./backend-rust/web-rwkv-converter

|

||||

sudo apt-get update

|

||||

sudo apt-get install upx

|

||||

sudo apt-get install build-essential libgtk-3-dev libwebkit2gtk-4.0-dev libasound2-dev

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@v2.8.0

|

||||

rm ./backend-python/rwkv_pip/wkv_cuda.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv5.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv6.pyd

|

||||

@@ -113,11 +113,11 @@ jobs:

|

||||

ref: master

|

||||

- uses: actions/setup-go@v5

|

||||

with:

|

||||

go-version: '1.20.5'

|

||||

go-version: "1.20.5"

|

||||

- run: |

|

||||

wget https://github.com/josStorer/ai00_rwkv_server/releases/latest/download/webgpu_server_darwin_aarch64 -O ./backend-rust/webgpu_server

|

||||

wget https://github.com/josStorer/web-rwkv-converter/releases/latest/download/web-rwkv-converter_darwin_aarch64 -O ./backend-rust/web-rwkv-converter

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@v2.8.0

|

||||

rm ./backend-python/rwkv_pip/wkv_cuda.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv5.pyd

|

||||

rm ./backend-python/rwkv_pip/rwkv6.pyd

|

||||

@@ -135,7 +135,7 @@ jobs:

|

||||

|

||||

publish-release:

|

||||

runs-on: ubuntu-22.04

|

||||

needs: [ windows, linux, macos ]

|

||||

needs: [windows, linux, macos]

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- run: gh release edit ${{github.ref_name}} --draft=false

|

||||

|

||||

@@ -1,41 +1,25 @@

|

||||

## Changes

|

||||

|

||||

- bump webgpu mode [ai00_server v0.4.2](https://github.com/Ai00-X/ai00_server) (huge performance improvement)

|

||||

- upgrade to rwkv 0.8.26 (state-tuned model support)

|

||||

- update defaultConfigs and manifest.json

|

||||

- chores

|

||||

### Features

|

||||

|

||||

## Breaking Changes

|

||||

- add support for dynamic state-tuned models

|

||||

|

||||

- change the default value of `presystem` to false

|

||||

|

||||

|

||||

For the convenience of using the future state-tuned models, the default value of `presystem` has been set to false. This

|

||||

means that the RWKV-Runner service will no longer automatically insert recommended RWKV pre-prompts for you:

|

||||

### Upgrades

|

||||

|

||||

```

|

||||

User: hi

|

||||

- bump webgpu mode [ai00_server v0.4.8](https://github.com/Ai00-X/ai00_server)

|

||||

|

||||

Assistant: Hi. I am your assistant and I will provide expert full response in full details. Please feel free to ask any question and I will always answer it.

|

||||

```

|

||||

### Improvements

|

||||

|

||||

If you are using the API service and conducting a rigorous RWKV conversation, please manually send the above messages to

|

||||

the `/chat/completions` API's `messages` array, or manually send `presystem: true` to have the server automatically

|

||||

insert pre-prompts.

|

||||

- add tps console output

|

||||

- add torch cnMirror

|

||||

- disable pre_ffn and head_qk

|

||||

- improve frontend details

|

||||

|

||||

If you are using the RWKV-Runner client for chatting, you can enable `Insert default system prompt at the beginning` in

|

||||

the preset editor.

|

||||

### Chores

|

||||

|

||||

Of course, in reality, even if you do not perform the above, there is usually no significant negative impact.

|

||||

|

||||

If you are using the new RWKV state-tuned models, you do not need to perform the above.

|

||||

|

||||

The new RWKV state-tuned models can be downloaded here, they are very interesting:

|

||||

|

||||

- https://huggingface.co/BlinkDL/rwkv-6-state-instruct-aligned

|

||||

- https://huggingface.co/BlinkDL/temp-latest-training-models

|

||||

|

||||

If you are interested in state-tuning, please refer

|

||||

to: https://github.com/BlinkDL/RWKV-LM#state-tuning-tuning-the-initial-state-zero-inference-overhead

|

||||

- update manifest.json and defaultModelConfigs

|

||||

|

||||

## Install

|

||||

|

||||

|

||||
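The release-note text in the hunk above describes two ways to restore the pre-prompt once `presystem` defaults to false: send the two messages yourself in the `messages` array of `/chat/completions`, or send `presystem: true`. A minimal sketch of both request bodies follows. The OpenAI-style `role`/`content` message shape and the helper name `build_chat_body` are assumptions for illustration, not taken from the diff.

```python
from typing import Dict, List, Optional

# Pre-prompt pair quoted in the release notes ("User: hi" / "Assistant: ...").
# The role/content layout is an assumed OpenAI-style message shape.
PRE_PROMPT: List[Dict[str, str]] = [
    {"role": "user", "content": "hi"},
    {
        "role": "assistant",
        "content": (
            "Hi. I am your assistant and I will provide expert full response "
            "in full details. Please feel free to ask any question and I will "
            "always answer it."
        ),
    },
]


def build_chat_body(
    messages: List[Dict[str, str]],
    presystem: Optional[bool] = None,
    manual_pre_prompt: bool = False,
) -> Dict[str, object]:
    """Build a /chat/completions body (hypothetical helper).

    manual_pre_prompt=True prepends the two messages by hand;
    presystem=True instead asks the server to insert them.
    """
    body: Dict[str, object] = {
        "messages": (PRE_PROMPT + messages) if manual_pre_prompt else list(messages)
    }
    if presystem is not None:
        body["presystem"] = presystem
    return body


question = [{"role": "user", "content": "What is RWKV?"}]
server_side = build_chat_body(question, presystem=True)
client_side = build_chat_body(question, manual_pre_prompt=True)
```

Either body would then be sent as JSON to the service. According to the same notes, neither step is needed with state-tuned models.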

@@ -125,6 +125,7 @@ func (a *App) OnStartup(ctx context.Context) {

|

||||

os.Chmod(a.exDir+"backend-rust/web-rwkv-converter", 0777)

|

||||

os.Mkdir(a.exDir+"models", os.ModePerm)

|

||||

os.Mkdir(a.exDir+"lora-models", os.ModePerm)

|

||||

os.Mkdir(a.exDir+"state-models", os.ModePerm)

|

||||

os.Mkdir(a.exDir+"finetune/json2binidx_tool/data", os.ModePerm)

|

||||

trainLogPath := "lora-models/train_log.txt"

|

||||

if !a.FileExists(trainLogPath) {

|

||||

@@ -151,8 +152,9 @@ func (a *App) OnBeforeClose(ctx context.Context) bool {

|

||||

func (a *App) watchFs() {

|

||||

watcher, err := fsnotify.NewWatcher()

|

||||

if err == nil {

|

||||

watcher.Add(a.exDir + "./lora-models")

|

||||

watcher.Add(a.exDir + "./models")

|

||||

watcher.Add(a.exDir + "./lora-models")

|

||||

watcher.Add(a.exDir + "./state-models")

|

||||

go func() {

|

||||

for {

|

||||

select {

|

||||

|

||||

@@ -215,8 +215,12 @@ func (a *App) DepCheck(python string) error {

|

||||

|

||||

func (a *App) InstallPyDep(python string, cnMirror bool) (string, error) {

|

||||

var err error

|

||||

torchWhlUrl := "torch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 --index-url https://download.pytorch.org/whl/cu117"

|

||||

if python == "" {

|

||||

python, err = GetPython()

|

||||

if cnMirror && python == "py310/python.exe" {

|

||||

torchWhlUrl = "https://mirrors.aliyun.com/pytorch-wheels/cu117/torch-1.13.1+cu117-cp310-cp310-win_amd64.whl"

|

||||

}

|

||||

if runtime.GOOS == "windows" {

|

||||

python = `"%CD%/` + python + `"`

|

||||

}

|

||||

@@ -228,7 +232,7 @@ func (a *App) InstallPyDep(python string, cnMirror bool) (string, error) {

|

||||

if runtime.GOOS == "windows" {

|

||||

ChangeFileLine("./py310/python310._pth", 3, "Lib\\site-packages")

|

||||

installScript := python + " ./backend-python/get-pip.py -i https://mirrors.aliyun.com/pypi/simple --no-warn-script-location\n" +

|

||||

python + " -m pip install torch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 --index-url https://download.pytorch.org/whl/cu117 --no-warn-script-location\n" +

|

||||

python + " -m pip install " + torchWhlUrl + " --no-warn-script-location\n" +

|

||||

python + " -m pip install -r ./backend-python/requirements.txt -i https://mirrors.aliyun.com/pypi/simple --no-warn-script-location\n" +

|

||||

"exit"

|

||||

if !cnMirror {

|

||||

|

||||

1

backend-python/convert_safetensors.py

vendored


@@ -102,6 +102,7 @@ if __name__ == "__main__":

|

||||

"time_mix_w2",

|

||||

"time_decay_w1",

|

||||

"time_decay_w2",

|

||||

"time_state",

|

||||

"lora.0",

|

||||

],

|

||||

)

|

||||

|

||||

@@ -4,6 +4,7 @@ from threading import Lock

|

||||

from typing import List, Union

|

||||

from enum import Enum

|

||||

import base64

|

||||

import time

|

||||

|

||||

from fastapi import APIRouter, Request, status, HTTPException

|

||||

from sse_starlette.sse import EventSourceResponse

|

||||

@@ -151,10 +152,13 @@ async def eval_rwkv(

|

||||

print(get_rwkv_config(model))

|

||||

|

||||

response, prompt_tokens, completion_tokens = "", 0, 0

|

||||

completion_start_time = None

|

||||

for response, delta, prompt_tokens, completion_tokens in model.generate(

|

||||

prompt,

|

||||

stop=stop,

|

||||

):

|

||||

if not completion_start_time:

|

||||

completion_start_time = time.time()

|

||||

if await request.is_disconnected():

|

||||

break

|

||||

if stream:

|

||||

@@ -186,6 +190,10 @@ async def eval_rwkv(

|

||||

)

|

||||

# torch_gc()

|

||||

requests_num = requests_num - 1

|

||||

completion_end_time = time.time()

|

||||

tps = completion_tokens / (completion_end_time - completion_start_time)

|

||||

print(f"Generation TPS: {tps:.2f}")

|

||||

|

||||

if await request.is_disconnected():

|

||||

print(f"{request.client} Stop Waiting")

|

||||

quick_log(

|

||||

|

||||
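The hunk above starts a timer on the first yielded token and divides `completion_tokens` by the elapsed time. As written, `completion_start_time` appears to stay `None` if the generator yields nothing, and the subtraction would then fail. A guarded variant could look like the sketch below. The helper name `tokens_per_second` is hypothetical and not part of the diff.

```python
from typing import Optional


def tokens_per_second(
    tokens: int, start: Optional[float], end: float
) -> Optional[float]:
    """Return tokens per second, or None when no usable timing exists."""
    if start is None:
        # No token was ever produced, so the timer never started.
        return None
    elapsed = end - start
    if elapsed <= 0:
        # Avoid dividing by zero on very fast or coarse clocks.
        return None
    return tokens / elapsed


tps = tokens_per_second(10, 1.0, 3.0)
if tps is not None:
    print(f"Generation TPS: {tps:.2f}")
```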

@@ -120,6 +120,9 @@ def update_config(body: ModelConfigBody):

|

||||

model_config = ModelConfigBody()

|

||||

global_var.set(global_var.Model_Config, model_config)

|

||||

merge_model(model_config, body)

|

||||

exception = load_rwkv_state(global_var.get(global_var.Model), model_config.state)

|

||||

if exception is not None:

|

||||

raise exception

|

||||

print("Updated Model Config:", model_config)

|

||||

|

||||

return "success"

|

||||

|

||||

@@ -176,6 +176,19 @@ def reset_state():

|

||||

return "success"

|

||||

|

||||

|

||||

def force_reset_state():
    global trie, dtrie

    if trie is None:
        return

    import cyac

    trie = cyac.Trie()
    dtrie = {}
    gc.collect()

|

||||

|

||||

|

||||

class LongestPrefixStateBody(BaseModel):

|

||||

prompt: str

|

||||

|

||||

|

||||

@@ -4,9 +4,10 @@ import os

|

||||

import pathlib

|

||||

import copy

|

||||

import re

|

||||

import time

|

||||

from typing import Dict, Iterable, List, Tuple, Union, Type, Callable

|

||||

from utils.log import quick_log

|

||||

from fastapi import HTTPException

|

||||

from fastapi import HTTPException, status

|

||||

from pydantic import BaseModel, Field

|

||||

from routes import state_cache

|

||||

import global_var

|

||||

@@ -26,6 +27,7 @@ class AbstractRWKV(ABC):

|

||||

self.EOS_ID = 0

|

||||

|

||||

self.name = "rwkv"

|

||||

self.model_path = ""

|

||||

self.version = 4

|

||||

self.model = model

|

||||

self.pipeline = pipeline

|

||||

@@ -42,6 +44,8 @@ class AbstractRWKV(ABC):

|

||||

self.penalty_alpha_frequency = 1

|

||||

self.penalty_decay = 0.996

|

||||

self.global_penalty = False

|

||||

self.state_path = ""

|

||||

self.state_tuned = None

|

||||

|

||||

@abstractmethod

|

||||

def adjust_occurrence(self, occurrence: Dict, token: int):

|

||||

@@ -235,7 +239,10 @@ class AbstractRWKV(ABC):

|

||||

except HTTPException:

|

||||

pass

|

||||

if cache is None or cache["prompt"] == "" or cache["state"] is None:

|

||||

self.model_state = None

|

||||

if self.state_path:

|

||||

self.model_state = copy.deepcopy(self.state_tuned)

|

||||

else:

|

||||

self.model_state = None

|

||||

self.model_tokens = []

|

||||

else:

|

||||

delta_prompt = prompt[len(cache["prompt"]) :]

|

||||
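The change above makes a cache miss start from a copy of the state-tuned tensors when a state file is loaded, and from `None` otherwise. The copy matters if inference mutates the state in place, which is an assumption here. A minimal sketch of that selection, using plain lists in place of tensors and a hypothetical helper name `initial_state`:

```python
import copy
from typing import List, Optional


def initial_state(state_path: str, state_tuned: Optional[List]) -> Optional[List]:
    """Pick the starting state for a prompt with no cached prefix."""
    if state_path:
        # Deep copy so later in-place updates never touch the loaded state.
        return copy.deepcopy(state_tuned)
    return None


tuned = [[0.0], [1.0]]            # stand-in for the per-layer tensors
working = initial_state("example-state.pth", tuned)
working[0][0] = 9.0               # simulate an in-place update during inference
```

After the simulated update, `tuned` is unchanged, so the next request can start from the same tuned state again.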

@@ -245,9 +252,13 @@ class AbstractRWKV(ABC):

|

||||

|

||||

prompt_token_len = 0

|

||||

if delta_prompt != "":

|

||||

prompt_start_time = time.time()

|

||||

logits, prompt_token_len = self.run_rnn(

|

||||

self.fix_tokens(self.pipeline.encode(delta_prompt))

|

||||

)

|

||||

prompt_end_time = time.time()

|

||||

tps = prompt_token_len / (prompt_end_time - prompt_start_time)

|

||||

print(f"Prompt Prefill TPS: {tps:.2f}", end=" ", flush=True)

|

||||

try:

|

||||

state_cache.add_state(

|

||||

state_cache.AddStateBody(

|

||||

@@ -601,13 +612,13 @@ def get_model_path(model_path: str) -> str:

|

||||

|

||||

|

||||

def RWKV(model: str, strategy: str, tokenizer: Union[str, None]) -> AbstractRWKV:

|

||||

model = get_model_path(model)

|

||||

model_path = get_model_path(model)

|

||||

|

||||

rwkv_beta = global_var.get(global_var.Args).rwkv_beta

|

||||

rwkv_cpp = getattr(global_var.get(global_var.Args), "rwkv.cpp")

|

||||

webgpu = global_var.get(global_var.Args).webgpu

|

||||

|

||||

if "midi" in model.lower() or "abc" in model.lower():

|

||||

if "midi" in model_path.lower() or "abc" in model_path.lower():

|

||||

os.environ["RWKV_RESCALE_LAYER"] = "999"

|

||||

|

||||

# dynamic import to make RWKV_CUDA_ON work

|

||||

@@ -632,8 +643,8 @@ def RWKV(model: str, strategy: str, tokenizer: Union[str, None]) -> AbstractRWKV

|

||||

)

|

||||

from rwkv_pip.utils import PIPELINE

|

||||

|

||||

filename, _ = os.path.splitext(os.path.basename(model))

|

||||

model = Model(model, strategy)

|

||||

filename, _ = os.path.splitext(os.path.basename(model_path))

|

||||

model = Model(model_path, strategy)

|

||||

if not tokenizer:

|

||||

tokenizer = get_tokenizer(len(model.w["emb.weight"]))

|

||||

pipeline = PIPELINE(model, tokenizer)

|

||||

@@ -666,6 +677,7 @@ def RWKV(model: str, strategy: str, tokenizer: Union[str, None]) -> AbstractRWKV

|

||||

else:

|

||||

rwkv = TextRWKV(model, pipeline)

|

||||

rwkv.name = filename

|

||||

rwkv.model_path = model_path

|

||||

rwkv.version = model.version

|

||||

|

||||

return rwkv

|

||||

@@ -683,6 +695,7 @@ class ModelConfigBody(BaseModel):

|

||||

default=None,

|

||||

description="When generating a response, whether to include the submitted prompt as a penalty factor. By turning this off, you will get the same generated results as official RWKV Gradio. If you find duplicate results in the generated results, turning this on can help avoid generating duplicates.",

|

||||

)

|

||||

state: str = Field(default=None, description="state-tuned file path")

|

||||

|

||||

model_config = {

|

||||

"json_schema_extra": {

|

||||

@@ -694,11 +707,80 @@ class ModelConfigBody(BaseModel):

|

||||

"frequency_penalty": 1,

|

||||

"penalty_decay": 0.996,

|

||||

"global_penalty": False,

|

||||

"state": "",

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

|

||||

def load_rwkv_state(model: AbstractRWKV, state_path: str) -> HTTPException:
    if model:
        if state_path:
            if model.model_path.endswith(".pth") and state_path.endswith(".pth"):
                import torch

                state_path = get_model_path(state_path)
                if model.state_path == state_path:
                    return

                state_raw = torch.load(state_path, map_location="cpu")
                state_raw_shape = next(iter(state_raw.values())).shape

                args = model.model.args
                if (
                    len(state_raw) != args.n_layer
                    or state_raw_shape[0] * state_raw_shape[1] != args.n_embd
                ):
                    if model.state_path:
                        pass
                    else:
                        print("state failed to load")
                        return HTTPException(
                            status.HTTP_400_BAD_REQUEST, "state shape mismatch"
                        )

                strategy = model.model.strategy
                model.state_tuned = [None] * args.n_layer * 3

                for i in range(args.n_layer):
                    dd = strategy[i]
                    dev = dd.device
                    atype = dd.atype
                    model.state_tuned[i * 3 + 0] = torch.zeros(
                        args.n_embd, dtype=atype, requires_grad=False, device=dev
                    ).contiguous()
                    model.state_tuned[i * 3 + 1] = (
                        state_raw[f"blocks.{i}.att.time_state"]
                        .transpose(1, 2)
                        .to(dtype=torch.float, device=dev)
                        .requires_grad_(False)
                        .contiguous()
                    )
                    model.state_tuned[i * 3 + 2] = torch.zeros(
                        args.n_embd, dtype=atype, requires_grad=False, device=dev
                    ).contiguous()

                state_cache.force_reset_state()
                model.state_path = state_path
                print("state loaded")
            else:
                if model.state_path:
                    pass
                else:
                    print("state failed to load")
                    return HTTPException(
                        status.HTTP_400_BAD_REQUEST,
                        "file format of the model or state model not supported",
                    )
        else:
            state_cache.force_reset_state()
            model.state_path = ""
            model.state_tuned = None  # TODO cached
            print("state unloaded")
    else:
        print("state not loaded")

|

||||

|

||||

|

||||
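The compatibility check in `load_rwkv_state` can be read in isolation: the state file must hold one tensor per layer, and the first two dimensions of a tensor must multiply to the embedding width. The sketch below restates that check on plain shape tuples without torch. The helper names and the example shape `(32, 64, 64)` are illustrative assumptions, not values from the diff.

```python
from typing import Dict, Tuple


def state_matches_model(
    state_shapes: Dict[str, Tuple[int, ...]], n_layer: int, n_embd: int
) -> bool:
    """Mirror the diff's check: one entry per layer, dim0 * dim1 == n_embd."""
    if len(state_shapes) != n_layer:
        return False
    first = next(iter(state_shapes.values()))
    return first[0] * first[1] == n_embd


def tuned_slot(layer: int) -> int:
    """Index of the tuned tensor in the flat state list (3 slots per layer)."""
    return layer * 3 + 1


# Hypothetical 24-layer state file whose tensors have shape (32, 64, 64).
shapes = {f"blocks.{i}.att.time_state": (32, 64, 64) for i in range(24)}
ok = state_matches_model(shapes, n_layer=24, n_embd=2048)
wrong_width = state_matches_model(shapes, n_layer=24, n_embd=2560)
```

In the diff, slots `i * 3 + 0` and `i * 3 + 2` are filled with zeros, and only slot `i * 3 + 1` receives the transposed `time_state` tensor.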

def set_rwkv_config(model: AbstractRWKV, body: ModelConfigBody):

|

||||

if body.max_tokens is not None:

|

||||

model.max_tokens_per_generation = body.max_tokens

|

||||

@@ -719,6 +801,8 @@ def set_rwkv_config(model: AbstractRWKV, body: ModelConfigBody):

|

||||

model.top_k = body.top_k

|

||||

if body.global_penalty is not None:

|

||||

model.global_penalty = body.global_penalty

|

||||

if body.state is not None:

|

||||

load_rwkv_state(model, body.state)

|

||||

|

||||

|

||||

def get_rwkv_config(model: AbstractRWKV) -> ModelConfigBody:

|

||||

@@ -731,4 +815,5 @@ def get_rwkv_config(model: AbstractRWKV) -> ModelConfigBody:

|

||||

penalty_decay=model.penalty_decay,

|

||||

top_k=model.top_k,

|

||||

global_penalty=model.global_penalty,

|

||||

state=model.state_path,

|

||||

)

|

||||

|

||||

@@ -354,5 +354,10 @@

|

||||

"Inside the model, there is a default prompt to improve the model's handling of common issues, but it may degrade the role-playing effect. You can disable this option to achieve a better role-playing effect.": "モデル内部には、一般的な問題の処理を改善するためのデフォルトのプロンプトがありますが、役割演技の効果を低下させる可能性があります。このオプションを無効にすることで、より良い役割演技効果を得ることができます。",

|

||||

"Exit without saving": "保存せずに終了",

|

||||

"Content has been changed, are you sure you want to exit without saving?": "コンテンツが変更されています、保存せずに終了してもよろしいですか?",

|

||||

"Don't forget to correctly fill in your Ollama API Chat Model Name.": "Ollama APIチャットモデル名を正しく記入するのを忘れないでください。"

|

||||

"Don't forget to correctly fill in your Ollama API Chat Model Name.": "Ollama APIチャットモデル名を正しく記入するのを忘れないでください。",

|

||||

"State-tuned Model": "State調整モデル",

|

||||

"See More": "もっと見る",

|

||||

"State Model": "Stateモデル",

|

||||

"State model mismatch": "Stateモデルの不一致",

|

||||

"File format of the model or state model not supported": "モデルまたはStateモデルのファイル形式がサポートされていません"

|

||||

}

|

||||

@@ -354,5 +354,10 @@

|

||||

"Inside the model, there is a default prompt to improve the model's handling of common issues, but it may degrade the role-playing effect. You can disable this option to achieve a better role-playing effect.": "模型内部有一个默认提示来改善模型处理常规问题的效果, 但它可能会让角色扮演的效果变差, 你可以关闭此选项来获得更好的角色扮演效果",

|

||||

"Exit without saving": "退出而不保存",

|

||||

"Content has been changed, are you sure you want to exit without saving?": "内容已经被修改, 你确定要退出而不保存吗?",

|

||||

"Don't forget to correctly fill in your Ollama API Chat Model Name.": "不要忘记正确填写你的Ollama API 聊天模型名"

|

||||

"Don't forget to correctly fill in your Ollama API Chat Model Name.": "不要忘记正确填写你的Ollama API 聊天模型名",

|

||||

"State-tuned Model": "State微调模型",

|

||||

"See More": "查看更多",

|

||||

"State Model": "State模型",

|

||||

"State model mismatch": "State模型不匹配",

|

||||

"File format of the model or state model not supported": "模型或state模型的文件格式不支持"

|

||||

}

|

||||

@@ -214,7 +214,18 @@ export const RunButton: FC<{ onClickRun?: MouseEventHandler, iconMode?: boolean

|

||||

presence_penalty: modelConfig.apiParameters.presencePenalty,

|

||||

frequency_penalty: modelConfig.apiParameters.frequencyPenalty,

|

||||

penalty_decay: modelConfig.apiParameters.penaltyDecay,

|

||||

global_penalty: modelConfig.apiParameters.globalPenalty

|

||||

global_penalty: modelConfig.apiParameters.globalPenalty,

|

||||

state: modelConfig.apiParameters.stateModel

|

||||

}).then(async r => {

|

||||

if (r.status !== 200) {

|

||||

const error = await r.text();

|

||||

if (error.includes('state shape mismatch'))

|

||||

toast(t('State model mismatch'), { type: 'error' });

|

||||

else if (error.includes('file format of the model or state model not supported'))

|

||||

toast(t('File format of the model or state model not supported'), { type: 'error' });

|

||||

else

|

||||

toast(error, { type: 'error' });

|

||||

}

|

||||

});

|

||||

}

|

||||

|

||||

|

||||

@@ -7,11 +7,13 @@ import {

|

||||

Dropdown,

|

||||

Input,

|

||||

Label,

|

||||

Link,

|

||||

Option,

|

||||

PresenceBadge,

|

||||

Select,

|

||||

Switch,

|

||||

Text

|

||||

Text,

|

||||

Tooltip

|

||||

} from '@fluentui/react-components';

|

||||

import { AddCircle20Regular, DataUsageSettings20Regular, Delete20Regular, Save20Regular } from '@fluentui/react-icons';

|

||||

import React, { FC, useCallback, useEffect, useRef } from 'react';

|

||||

@@ -27,7 +29,7 @@ import { Page } from '../components/Page';

|

||||

import { useNavigate } from 'react-router';

|

||||

import { RunButton } from '../components/RunButton';

|

||||

import { updateConfig } from '../apis';

|

||||

import { getStrategy } from '../utils';

|

||||

import { getStrategy, isDynamicStateSupported } from '../utils';

|

||||

import { useTranslation } from 'react-i18next';

|

||||

import strategyImg from '../assets/images/strategy.jpg';

|

||||

import strategyZhImg from '../assets/images/strategy_zh.jpg';

|

||||

@@ -36,6 +38,7 @@ import { useMediaQuery } from 'usehooks-ts';

|

||||

import { ApiParameters, Device, ModelParameters, Precision } from '../types/configs';

|

||||

import { convertModel, convertToGGML, convertToSt } from '../utils/convert-model';

|

||||

import { defaultPenaltyDecay } from './defaultConfigs';

|

||||

import { BrowserOpenURL } from '../../wailsjs/runtime';

|

||||

|

||||

const ConfigSelector: FC<{

|

||||

selectedIndex: number,

|

||||

@@ -112,6 +115,8 @@ const Configs: FC = observer(() => {

|

||||

|

||||

const onClickSave = () => {

|

||||

commonStore.setModelConfig(selectedIndex, selectedConfig);

|

||||

// When clicking RunButton in Configs page, updateConfig will be called twice,

|

||||

// because there are also RunButton in other pages, and the calls to updateConfig in both places are necessary.

|

||||

updateConfig({

|

||||

max_tokens: selectedConfig.apiParameters.maxResponseToken,

|

||||

temperature: selectedConfig.apiParameters.temperature,

|

||||

@@ -119,7 +124,18 @@ const Configs: FC = observer(() => {

|

||||

presence_penalty: selectedConfig.apiParameters.presencePenalty,

|

||||

frequency_penalty: selectedConfig.apiParameters.frequencyPenalty,

|

||||

penalty_decay: selectedConfig.apiParameters.penaltyDecay,

|

||||

global_penalty: selectedConfig.apiParameters.globalPenalty

|

||||

global_penalty: selectedConfig.apiParameters.globalPenalty,

|

||||

state: selectedConfig.apiParameters.stateModel

|

||||

}).then(async r => {

|

||||

if (r.status !== 200) {

|

||||

const error = await r.text();

|

||||

if (error.includes('state shape mismatch'))

|

||||

toast(t('State model mismatch'), { type: 'error' });

|

||||

else if (error.includes('file format of the model or state model not supported'))

|

||||

toast(t('File format of the model or state model not supported'), { type: 'error' });

|

||||

else

|

||||

toast(error, { type: 'error' });

|

||||

}

|

||||

});

|

||||

toast(t('Config Saved'), { autoClose: 300, type: 'success' });

|

||||

};

|

||||

@@ -200,6 +216,34 @@ const Configs: FC = observer(() => {

|

||||

});

|

||||

}} />

|

||||

} />

|

||||

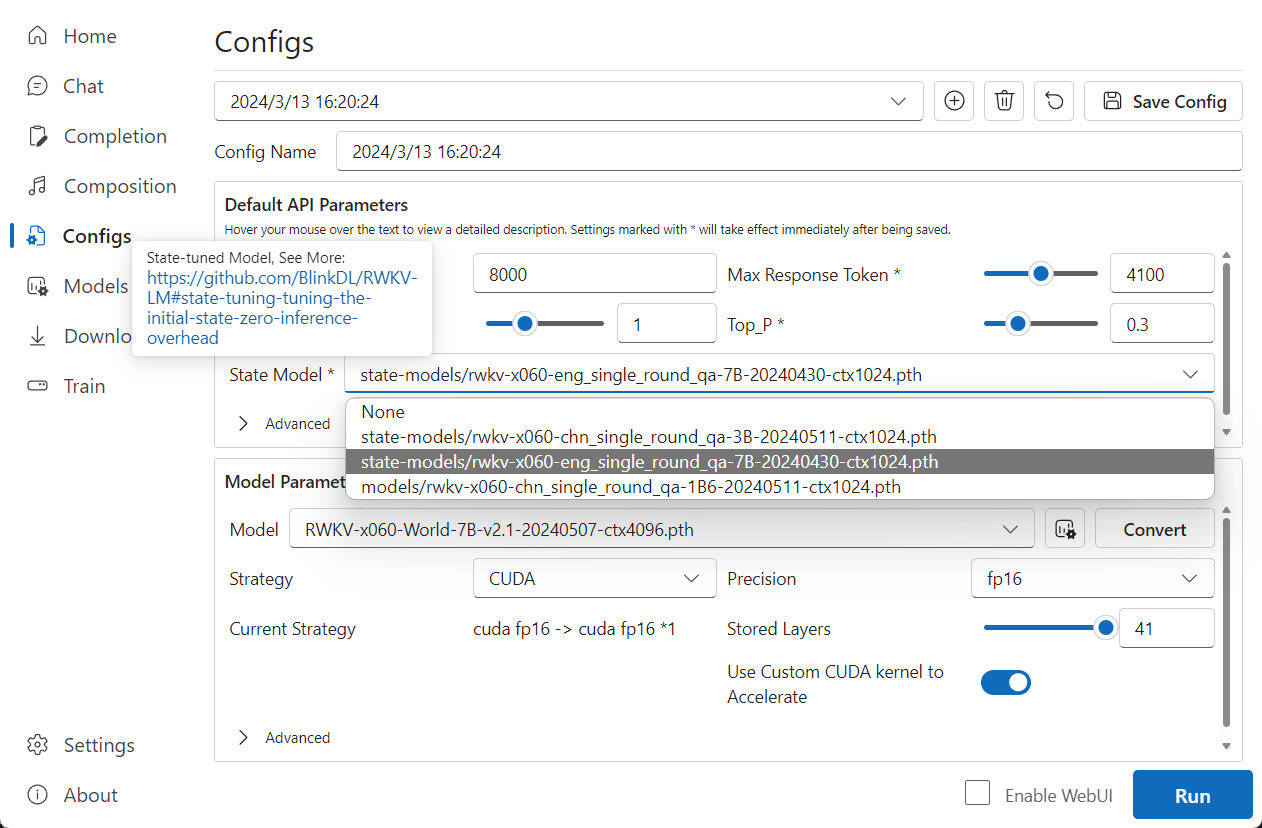

{isDynamicStateSupported(selectedConfig) &&

|

||||

<div className="sm:col-span-2 flex gap-2 items-center min-w-0">

|

||||

<Tooltip content={<div>

|

||||

{t('State-tuned Model')}, {t('See More')}: <Link

|

||||

onClick={() => BrowserOpenURL('https://github.com/BlinkDL/RWKV-LM#state-tuning-tuning-the-initial-state-zero-inference-overhead')}>{'https://github.com/BlinkDL/RWKV-LM#state-tuning-tuning-the-initial-state-zero-inference-overhead'}

|

||||

</Link>

|

||||

</div>} showDelay={0} hideDelay={0}

|

||||

relationship="description">

|

||||

<div className="shrink-0">

|

||||

{t('State Model') + ' *'}

|

||||

</div>

|

||||

</Tooltip>

|

||||

<Select style={{ minWidth: 0 }} className="grow"

|

||||

value={selectedConfig.apiParameters.stateModel}

|

||||

onChange={(e, data) => {

|

||||

setSelectedConfigApiParams({

|

||||

stateModel: data.value

|

||||

});

|

||||

}}>

|

||||

<option key={-1} value={''}>

|

||||

{t('None')}

|

||||

</option>

|

||||

{commonStore.stateModels.map((modelName, index) =>

|

||||

<option key={index} value={modelName}>{modelName}</option>

|

||||

)}

|

||||

</Select>

|

||||

</div>

|

||||

}

|

||||

<Accordion className="sm:col-span-2" collapsible

|

||||

openItems={!commonStore.apiParamsCollapsed && 'advanced'}

|

||||

onToggle={(e, data) => {

|

||||

@@ -268,50 +312,58 @@ const Configs: FC = observer(() => {

|

||||

title={t('Model Parameters')}

|

||||

content={

|

||||

<div className="grid grid-cols-1 sm:grid-cols-2 gap-2">

|

||||

<Labeled label={t('Model')} content={

|

||||

<div className="flex gap-2 grow">

|

||||

<Select style={{ minWidth: 0 }} className="grow"

|

||||

value={selectedConfig.modelParameters.modelName}

|

||||

onChange={(e, data) => {

|

||||

const modelSource = commonStore.modelSourceList.find(item => item.name === data.value);

|

||||

if (modelSource?.customTokenizer)

|

||||

setSelectedConfigModelParams({

|

||||

modelName: data.value,

|

||||

useCustomTokenizer: true,

|

||||

customTokenizer: modelSource?.customTokenizer

|

||||

});

|

||||

else // prevent customTokenizer from being overwritten

|

||||

setSelectedConfigModelParams({

|

||||

modelName: data.value,

|

||||

useCustomTokenizer: false

|

||||

});

|

||||

}}>

|

||||

{!commonStore.modelSourceList.find(item => item.name === selectedConfig.modelParameters.modelName)?.isComplete

|

||||

&& <option key={-1}

|

||||

value={selectedConfig.modelParameters.modelName}>{selectedConfig.modelParameters.modelName}

|

||||

</option>}

|

||||

{commonStore.modelSourceList.map((modelItem, index) =>

|

||||

modelItem.isComplete && <option key={index} value={modelItem.name}>{modelItem.name}</option>

|

||||

)}

|

||||

</Select>

|

||||

<ToolTipButton desc={t('Manage Models')} icon={<DataUsageSettings20Regular />} onClick={() => {

|

||||

navigate({ pathname: '/models' });

|

||||

}} />

|

||||

<div className="sm:col-span-2">
<div className="flex flex-col sm:flex-row gap-2">
<div className="flex gap-2 items-center min-w-0 grow">
<div className="shrink-0">
{t('Model')}
</div>
<Select style={{ minWidth: 0 }} className="grow"
value={selectedConfig.modelParameters.modelName}
onChange={(e, data) => {
const modelSource = commonStore.modelSourceList.find(item => item.name === data.value);
if (modelSource?.customTokenizer)
setSelectedConfigModelParams({
modelName: data.value,
useCustomTokenizer: true,
customTokenizer: modelSource?.customTokenizer
});
else // prevent customTokenizer from being overwritten
setSelectedConfigModelParams({
modelName: data.value,
useCustomTokenizer: false
});
}}>
{!commonStore.modelSourceList.find(item => item.name === selectedConfig.modelParameters.modelName)?.isComplete
&& <option key={-1}
value={selectedConfig.modelParameters.modelName}>{selectedConfig.modelParameters.modelName}
</option>}
{commonStore.modelSourceList.map((modelItem, index) =>
modelItem.isComplete && <option key={index} value={modelItem.name}>{modelItem.name}</option>
)}
</Select>
<ToolTipButton desc={t('Manage Models')} icon={<DataUsageSettings20Regular />} onClick={() => {
navigate({ pathname: '/models' });
}} />
</div>
{
!selectedConfig.modelParameters.device.startsWith('WebGPU') ?
(selectedConfig.modelParameters.device !== 'CPU (rwkv.cpp)' ?
<ToolTipButton text={t('Convert')}
className="shrink-0"
desc={t('Convert model with these configs. Using a converted model will greatly improve the loading speed, but model parameters of the converted model cannot be modified.')}
onClick={() => convertModel(selectedConfig, navigate)} /> :
<ToolTipButton text={t('Convert To GGML Format')}
className="shrink-0"
desc=""
onClick={() => convertToGGML(selectedConfig, navigate)} />)
: <ToolTipButton text={t('Convert To Safe Tensors Format')}
className="shrink-0"
desc=""
onClick={() => convertToSt(selectedConfig, navigate)} />
}
</div>
} />
{
!selectedConfig.modelParameters.device.startsWith('WebGPU') ?
(selectedConfig.modelParameters.device !== 'CPU (rwkv.cpp)' ?
<ToolTipButton text={t('Convert')}
desc={t('Convert model with these configs. Using a converted model will greatly improve the loading speed, but model parameters of the converted model cannot be modified.')}
onClick={() => convertModel(selectedConfig, navigate)} /> :
<ToolTipButton text={t('Convert To GGML Format')}
desc=""
onClick={() => convertToGGML(selectedConfig, navigate)} />)
: <ToolTipButton text={t('Convert To Safe Tensors Format')}
desc=""
onClick={() => convertToSt(selectedConfig, navigate)} />
}
</div>
<Labeled label={t('Strategy')} content={
<Dropdown style={{ minWidth: 0 }} className="grow" value={t(selectedConfig.modelParameters.device)!}
selectedOptions={[selectedConfig.modelParameters.device]}

@@ -116,9 +116,9 @@ const loraFinetuneParametersOptions: Array<[key: keyof LoraFinetuneParameters, t
['loraR', 'number', 'LoRA R'],
['loraAlpha', 'number', 'LoRA Alpha'],
['loraDropout', 'number', 'LoRA Dropout'],
['beta1', 'any', ''],
['preFfn', 'boolean', 'Pre-FFN'],
['headQk', 'boolean', 'Head QK']
['beta1', 'any', '']
// ['preFfn', 'boolean', 'Pre-FFN'],
// ['headQk', 'boolean', 'Head QK']
];

const showError = (e: any) => {

@@ -304,7 +304,7 @@ const LoraFinetune: FC = observer(() => {
`--ctx_len ${ctxLen} --epoch_steps ${loraParams.epochSteps} --epoch_count ${loraParams.epochCount} ` +
`--epoch_begin ${loraParams.epochBegin} --epoch_save ${loraParams.epochSave} ` +
`--micro_bsz ${loraParams.microBsz} --accumulate_grad_batches ${loraParams.accumGradBatches} ` +
`--pre_ffn ${loraParams.preFfn ? '1' : '0'} --head_qk ${loraParams.headQk ? '1' : '0'} --lr_init ${loraParams.lrInit} --lr_final ${loraParams.lrFinal} ` +
`--pre_ffn ${loraParams.preFfn ? '0' : '0'} --head_qk ${loraParams.headQk ? '0' : '0'} --lr_init ${loraParams.lrInit} --lr_final ${loraParams.lrFinal} ` +
`--warmup_steps ${loraParams.warmupSteps} ` +
`--beta1 ${loraParams.beta1} --beta2 ${loraParams.beta2} --adam_eps ${loraParams.adamEps} ` +
`--devices ${loraParams.devices} --precision ${loraParams.precision} ` +

@@ -436,7 +436,9 @@ const LoraFinetune: FC = observer(() => {
content={
<div className="grid grid-cols-1 sm:grid-cols-2 gap-2">
<div className="flex gap-2 items-center">
{t('Base Model')}
<div className="shrink-0">
{t('Base Model')}
</div>
<Select style={{ minWidth: 0 }} className="grow"
value={loraParams.baseModel}
onChange={(e, data) => {

@@ -261,7 +261,7 @@ export const defaultModelConfigsMac: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'WebGPU',
precision: 'nf4',
storedLayers: 41,

@@ -279,7 +279,7 @@ export const defaultModelConfigsMac: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'WebGPU',
precision: 'nf4',
storedLayers: 41,

@@ -390,7 +390,7 @@ export const defaultModelConfigsMac: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'MPS',
precision: 'fp32',
storedLayers: 41,

@@ -507,7 +507,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 8,

@@ -526,7 +526,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 8,

@@ -583,7 +583,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 18,

@@ -602,7 +602,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 18,

@@ -659,7 +659,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 27,

@@ -678,7 +678,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 27,

@@ -697,7 +697,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 41,

@@ -716,7 +716,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'int8',
storedLayers: 41,

@@ -735,7 +735,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'fp16',
storedLayers: 41,

@@ -754,7 +754,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'CUDA',
precision: 'fp16',
storedLayers: 41,

@@ -863,7 +863,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'WebGPU',
precision: 'nf4',
storedLayers: 41,

@@ -881,7 +881,7 @@ export const defaultModelConfigs: ModelConfig[] = [
frequencyPenalty: 1
},
modelParameters: {
modelName: 'RWKV-5-World-7B-v2-20240128-ctx4096.pth',
modelName: 'RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth',
device: 'WebGPU',
precision: 'nf4',
storedLayers: 41,

@@ -1,6 +1,14 @@
import commonStore, { MonitorData, Platform } from './stores/commonStore';
import { FileExists, GetPlatform, ListDirFiles, ReadJson } from '../wailsjs/go/backend_golang/App';
import { Cache, checkUpdate, downloadProgramFiles, LocalConfig, refreshLocalModels, refreshModels } from './utils';
import {
bytesToMb,
Cache,
checkUpdate,
downloadProgramFiles,
LocalConfig,
refreshLocalModels,
refreshModels
} from './utils';
import { getStatus } from './apis';
import { EventsOn, WindowSetTitle } from '../wailsjs/runtime';
import manifest from '../../manifest.json';

@@ -29,6 +37,7 @@ export async function startup() {
});
initLocalModelsNotify();
initLoraModels();
initStateModels();
initHardwareMonitor();
initMidi();
}

@@ -124,12 +133,42 @@ async function initLoraModels() {
});
}

async function initStateModels() {
const refreshStateModels = throttle(async () => {
const stateModels = await ListDirFiles('state-models').then((data) => {
if (!data) return [];
const stateModels = [];
for (const f of data) {
if (!f.isDir && f.name.endsWith('.pth')) {
stateModels.push('state-models/' + f.name);
}
}
return stateModels;
});
await ListDirFiles('models').then((data) => {
if (!data) return;
for (const f of data) {
if (!f.isDir && f.name.endsWith('.pth') && Number(bytesToMb(f.size)) < 200) {
stateModels.push('models/' + f.name);
}
}
});
commonStore.setStateModels(stateModels);
}, 2000);

refreshStateModels();
EventsOn('fsnotify', (data: string) => {
if ((data.includes('models') && !data.includes('lora-models')) || data.includes('state-models'))
refreshStateModels();
});
}

async function initLocalModelsNotify() {
const throttleRefreshLocalModels = throttle(() => {
refreshLocalModels({ models: commonStore.modelSourceList }, false); //TODO fix bug that only add models
}, 2000);
EventsOn('fsnotify', (data: string) => {
if (data.includes('models') && !data.includes('lora-models'))
if (data.includes('models') && !data.includes('lora-models') && !data.includes('state-models'))
throttleRefreshLocalModels();
});
}
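Read as plain logic, this hunk does two things: it builds the list of state files, and it decides which `fsnotify` paths trigger which refresh. The following is a minimal TypeScript sketch of both, not the project's code. `DirEntry`, `toMb`, `collectStateModels`, `refreshesStateModels` and `refreshesLocalModels` are hypothetical names, and `toMb` assumes that `bytesToMb` divides by 1024 twice, which the diff does not show.

```typescript
// Hypothetical shape of a directory entry; the diff only shows that
// `name`, `size` and `isDir` are read from each item.
type DirEntry = { name: string; size: number; isDir: boolean };

// Assumption: megabytes are bytes / 1024 / 1024. The real `bytesToMb`
// helper lives in './utils' and is not part of this diff.
const toMb = (bytes: number): number => bytes / 1024 / 1024;

// indexOf/slice are used instead of includes/endsWith so the sketch
// compiles under any TypeScript target.
const contains = (s: string, part: string): boolean => s.indexOf(part) !== -1;
const isPthFile = (f: DirEntry): boolean => !f.isDir && f.name.slice(-4) === '.pth';

// Every .pth file in 'state-models' counts as a state file; in 'models'
// only .pth files smaller than 200 MB do.
function collectStateModels(stateDir: DirEntry[], modelsDir: DirEntry[]): string[] {
  const fromStateDir = stateDir.filter(isPthFile).map(f => 'state-models/' + f.name);
  const fromModelsDir = modelsDir
    .filter(f => isPthFile(f) && toMb(f.size) < 200)
    .map(f => 'models/' + f.name);
  return fromStateDir.concat(fromModelsDir);
}

// Condition used by the state-model watcher in the hunk.
function refreshesStateModels(path: string): boolean {
  return (contains(path, 'models') && !contains(path, 'lora-models')) || contains(path, 'state-models');
}

// Updated condition used by the local-model watcher in the hunk.
function refreshesLocalModels(path: string): boolean {
  return contains(path, 'models') && !contains(path, 'lora-models') && !contains(path, 'state-models');
}
```

Because the string `'state-models'` itself contains `'models'`, the added `!data.includes('state-models')` clause is what keeps changes to state files from also triggering the local model refresh.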

@@ -65,6 +65,7 @@ class CommonStore {
platform: Platform = 'windows';
proxyPort: number = 0;
lastModelName: string = '';
stateModels: string[] = [];
// presets manager
editingPreset: Preset | null = null;
presets: Preset[] = [];

@@ -410,6 +411,10 @@ class CommonStore {
this.loraModels = value;
}

setStateModels(value: string[]) {
this.stateModels = value;
}

setAttachmentUploading(value: boolean) {
this.attachmentUploading = value;
}

@@ -7,6 +7,7 @@ export type ApiParameters = {
frequencyPenalty: number;
penaltyDecay?: number;
globalPenalty?: boolean;
stateModel?: string;
}
export type Device = 'CPU' | 'CPU (rwkv.cpp)' | 'CUDA' | 'CUDA-Beta' | 'WebGPU' | 'WebGPU (Python)' | 'MPS' | 'Custom';
export type Precision = 'fp16' | 'int8' | 'fp32' | 'nf4' | 'Q5_1';

@@ -322,7 +322,7 @@ export async function getReqUrl(port: number, path: string, isCore: boolean = fa
headers: { [key: string]: string }
}> {
const realUrl = getServerRoot(port, isCore) + path;
if (commonStore.platform === 'web')
if (commonStore.platform === 'web' || realUrl.startsWith('https'))
return {
url: realUrl,
headers: {}

@@ -676,4 +676,11 @@ export function newChatConversation() {
commonStore.setConversationOrder(conversationOrder);
};
return { pushMessage, saveConversation };
}

export function isDynamicStateSupported(modelConfig: ModelConfig) {
return modelConfig.modelParameters.device === 'CUDA' ||
modelConfig.modelParameters.device === 'CPU' ||
modelConfig.modelParameters.device === 'Custom' ||
modelConfig.modelParameters.device === 'MPS';
}
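The chained comparisons in `isDynamicStateSupported` amount to a membership test over the `Device` union shown in the types hunk. One possible alternative shape, offered only as a sketch, is a `switch` over that union. `supportsDynamicState` is a hypothetical name, and it takes the device directly rather than a `ModelConfig` so the example stays self-contained.

```typescript
// Copied from the `Device` type shown in the diff.
type Device = 'CPU' | 'CPU (rwkv.cpp)' | 'CUDA' | 'CUDA-Beta' | 'WebGPU' | 'WebGPU (Python)' | 'MPS' | 'Custom';

// Hypothetical helper: returns true for the same four devices that
// `isDynamicStateSupported` accepts, false for every other device.
function supportsDynamicState(device: Device): boolean {
  switch (device) {
    case 'CUDA':
    case 'CPU':
    case 'Custom':
    case 'MPS':
      return true;
    default:
      return false;
  }
}
```

Under this sketch, `'CUDA-Beta'`, `'CPU (rwkv.cpp)'` and both WebGPU variants fall through to `false`, matching the original expression.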

@@ -1,5 +1,5 @@
{
"version": "1.7.8",
"version": "1.8.0",
"introduction": {
"en": "RWKV is an open-source, commercially usable large language model with high flexibility and great potential for development.\n### About This Tool\nThis tool aims to lower the barrier of entry for using large language models, making it accessible to everyone. It provides fully automated dependency and model management. You simply need to click and run, following the instructions, to deploy a local large language model. The tool itself is very compact and only requires a single executable file for one-click deployment.\nAdditionally, this tool offers an interface that is fully compatible with the OpenAI API. This means you can use any ChatGPT client as a client for RWKV, enabling capability expansion beyond just chat functionality.\n### Preset Configuration Rules at the Bottom\nThis tool comes with a series of preset configurations to reduce complexity. The naming rules for each configuration represent the following in order: device - required VRAM/memory - model size - model language.\nFor example, \"GPU-8G-3B-EN\" indicates that this configuration is for a graphics card with 8GB of VRAM, a model size of 3 billion parameters, and it uses an English language model.\nLarger model sizes have higher performance and VRAM requirements. Among configurations with the same model size, those with higher VRAM usage will have faster runtime.\nFor example, if you have 12GB of VRAM but running the \"GPU-12G-7B-EN\" configuration is slow, you can downgrade to \"GPU-8G-3B-EN\" for a significant speed improvement.\n### About RWKV\nRWKV is an RNN with Transformer-level LLM performance, which can also be directly trained like a GPT transformer (parallelizable). And it's 100% attention-free. You only need the hidden state at position t to compute the state at position t+1. You can use the \"GPT\" mode to quickly compute the hidden state for the \"RNN\" mode.<br/>So it's combining the best of RNN and transformer - great performance, fast inference, saves VRAM, fast training, \"infinite\" ctx_len, and free sentence embedding (using the final hidden state).",
"zh": "RWKV是一个开源且允许商用的大语言模型,灵活性很高且极具发展潜力。\n### 关于本工具\n本工具旨在降低大语言模型的使用门槛,做到人人可用,本工具提供了全自动化的依赖和模型管理,你只需要直接点击运行,跟随引导,即可完成本地大语言模型的部署,工具本身体积极小,只需要一个exe即可完成一键部署。\n此外,本工具提供了与OpenAI API完全兼容的接口,这意味着你可以把任意ChatGPT客户端用作RWKV的客户端,实现能力拓展,而不局限于聊天。\n### 底部的预设配置规则\n本工具内置了一系列预设配置,以降低使用难度,每个配置名的规则,依次代表着:设备-所需显存/内存-模型规模-模型语言。\n例如,GPU-8G-3B-CN,表示该配置用于显卡,需要8G显存,模型规模为30亿参数,使用的是中文模型。\n模型规模越大,性能要求越高,显存要求也越高,而同样模型规模的配置中,显存占用越高的,运行速度越快。\n例如当你有12G显存,但运行GPU-12G-7B-CN配置速度比较慢,可降级成GPU-8G-3B-CN,将会大幅提速。\n### 关于RWKV\nRWKV是具有Transformer级别LLM性能的RNN,也可以像GPT Transformer一样直接进行训练(可并行化)。而且它是100% attention-free的。你只需在位置t处获得隐藏状态即可计算位置t + 1处的状态。你可以使用“GPT”模式快速计算用于“RNN”模式的隐藏状态。\n因此,它将RNN和Transformer的优点结合起来 - 高性能、快速推理、节省显存、快速训练、“无限”上下文长度以及免费的语句嵌入(使用最终隐藏状态)。"

@@ -99,6 +99,27 @@
],
"hide": true
},
{
"name": "RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth",
"desc": {
"en": "RWKV-6 Global Languages 7B v2.1",
"zh": "RWKV-6 全球语言 7B v2.1",
"ja": "RWKV-6 グローバル言語 7B v2.1"
},
"size": 15271627549,
"SHA256": "81863a63c9f726d41b9150d9c6e16567bf3c44e75685539a1091b4ab0b78d6c9",
"lastUpdated": "2024-05-07T03:07:33",
"url": "https://huggingface.co/BlinkDL/rwkv-6-world/blob/main/RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth",
"downloadUrl": "https://huggingface.co/BlinkDL/rwkv-6-world/resolve/main/RWKV-x060-World-7B-v2.1-20240507-ctx4096.pth",
"tags": [
"Official",
"RWKV-6",
"Global",
"Recommended",
"CN",
"JP"
]
},
{
"name": "RWKV-5-World-0.1B-v1-20230803-ctx4096.pth",
"desc": {

@@ -250,7 +271,6 @@
"Official",
"RWKV-5",
"Global",
"Recommended",
"CN",
"JP"
]
