Compare commits

5 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 15ae312b37 | |
| | 6938b5b20e | |
| | 9b3b06ab04 | |
| | e2a7c93753 | |
| | 34349aee0b | |

@@ -1,8 +1,8 @@

## Changes

- fix cross-device state cache exception
- training: fix data EOL format
- save conversation as txt (originally in md)
- fix always show `Convert Failed` when converting model
- fix input with array type (#96, #107)
- change chinese translation of `completion`

## Install

@@ -58,7 +58,7 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。

|

||||

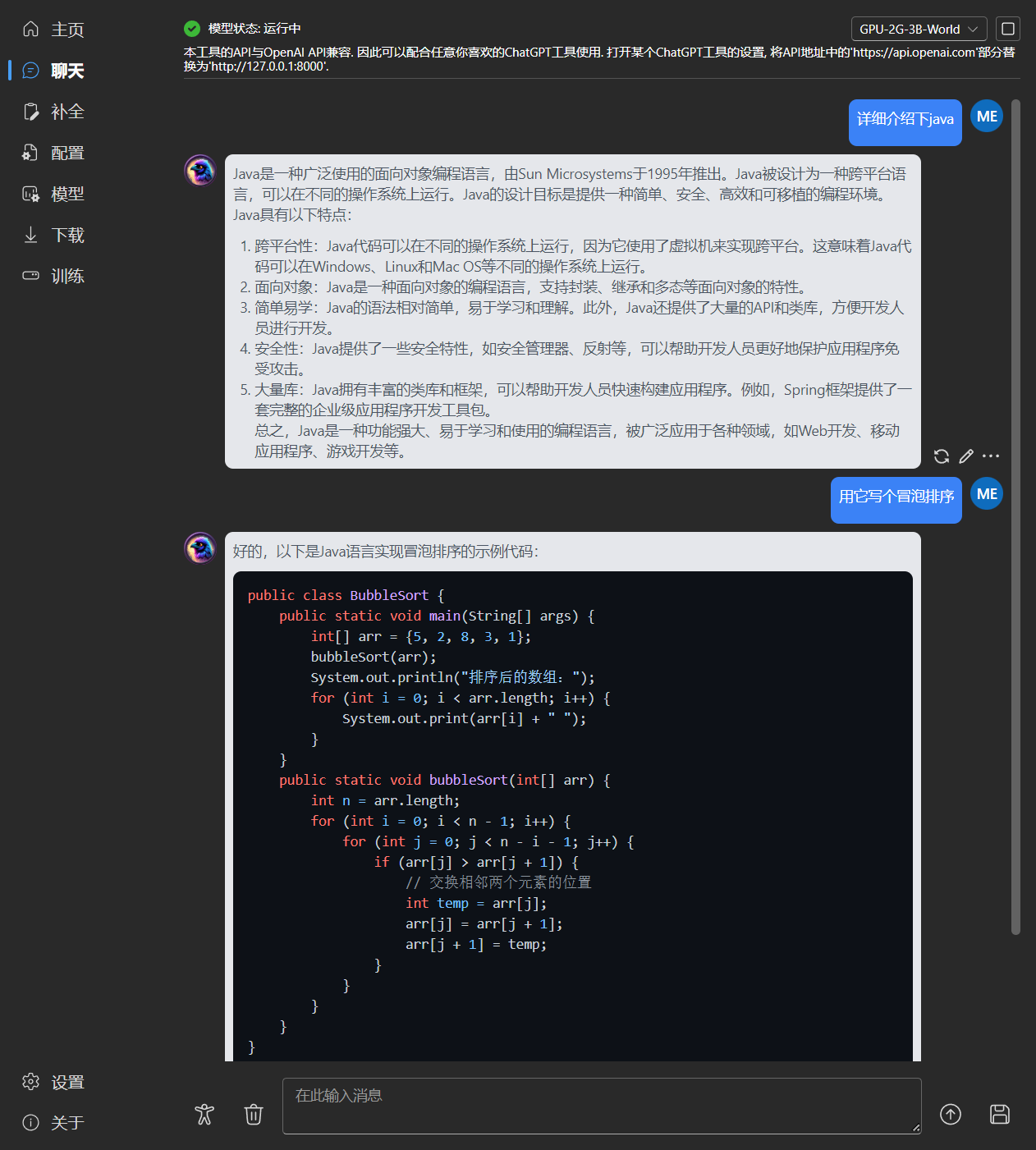

- 与OpenAI API完全兼容,一切ChatGPT客户端,都是RWKV客户端。启动模型后,打开 http://127.0.0.1:8000/docs 查看详细内容

|

||||

- 全自动依赖安装,你只需要一个轻巧的可执行程序

|

||||

- 预设了2G至32G显存的配置,几乎在各种电脑上工作良好

|

||||

- 自带用户友好的聊天和补全交互页面

|

||||

- 自带用户友好的聊天和续写交互页面

|

||||

- 易于理解和操作的参数配置

|

||||

- 内置模型转换工具

|

||||

- 内置下载管理和远程模型检视

|

||||
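Since the README advertises an OpenAI-compatible interface, a completion request can be sketched with the standard library alone. This is a minimal sketch, not the project's client code: the `/v1/completions` path is assumed from the OpenAI convention (the README only names `http://127.0.0.1:8000/docs`), and `build_completion_request` is a hypothetical helper. The body fields mirror the `CompletionBody` fields that appear in this diff.

```python
import json
import urllib.request
from typing import List, Union

BASE_URL = "http://127.0.0.1:8000"  # host and port taken from the README line


def build_completion_request(prompt: Union[str, List[str]]) -> urllib.request.Request:
    """Build (but do not send) a POST request for a completion.

    The "/v1/completions" path is an assumption based on the OpenAI API
    convention; check the server's /docs page for the real routes.
    """
    body = {
        "prompt": prompt,  # a single string or a list of strings
        "model": "rwkv",   # default value shown in CompletionBody
        "stream": False,
    }
    return urllib.request.Request(
        BASE_URL + "/v1/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_completion_request(["Once upon a time"])
print(request.full_url)
print(request.data.decode("utf-8"))
# To send it while a model is running:
#   with urllib.request.urlopen(request) as response:
#       print(json.load(response))
```

Passing a list as `prompt` exercises the array-typed input that the changelog entry for #96 and #107 refers to.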

@@ -144,7 +144,7 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

|

||||

|

||||

|

||||

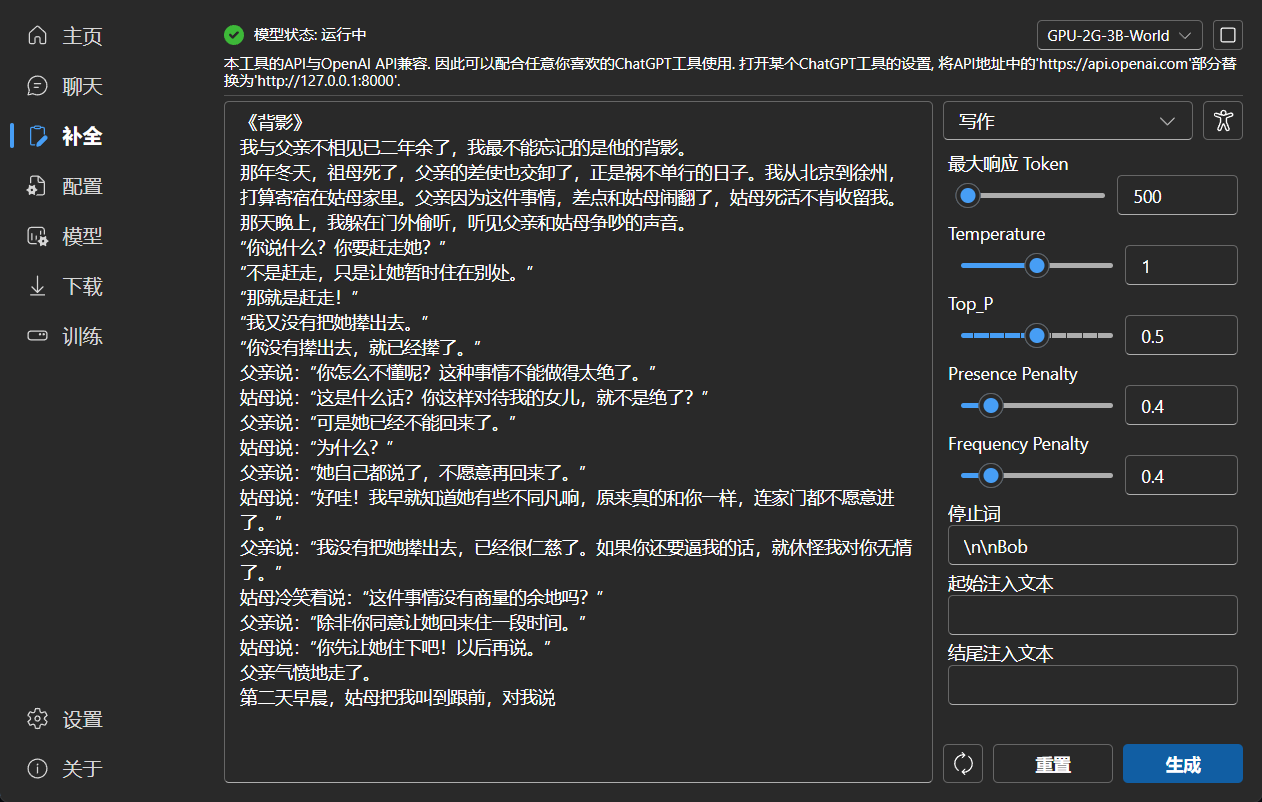

### 补全

|

||||

### 续写

|

||||

|

||||

|

||||

|

||||

|

||||

@@ -1,7 +1,7 @@
 import asyncio
 import json
 from threading import Lock
-from typing import List
+from typing import List, Union
 import base64

 from fastapi import APIRouter, Request, status, HTTPException

@@ -44,7 +44,7 @@ class ChatCompletionBody(ModelConfigBody):


 class CompletionBody(ModelConfigBody):
-    prompt: str or List[str]
+    prompt: Union[str, List[str]]
     model: str = "rwkv"
     stream: bool = False
     stop: str = None
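A plausible reading of why the old annotation broke array input: `str or List[str]` is an ordinary boolean expression, evaluated once when the class body runs. Because `str` is truthy, the expression short-circuits to `str`, so any validator reading the annotation would see a plain string field. The sketch below demonstrates only the Python semantics; it does not reproduce the project's request handling.

```python
from typing import List, Union, get_args

# `or` returns its first truthy operand, and the class object `str` is truthy,
# so the List[str] alternative is silently discarded.
old_annotation = str or List[str]

# Union keeps every alternative as part of the type.
new_annotation = Union[str, List[str]]

print(old_annotation is str)  # True
print(get_args(new_annotation))
```

The same reasoning applies to `str or List[str] or List[List[int]]` on `EmbeddingsBody`, which also collapses to `str`.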

@@ -326,7 +326,7 @@ async def completions(body: CompletionBody, request: Request):


 class EmbeddingsBody(BaseModel):
-    input: str or List[str] or List[List[int]]
+    input: Union[str, List[str], List[List[int]]]
     model: str = "rwkv"
     encoding_format: str = None
     fast_mode: bool = False

@@ -104,7 +104,7 @@
   "Supported custom cuda file not found": "没有找到支持的自定义cuda文件",
   "Failed to copy custom cuda file": "自定义cuda文件复制失败",
   "Downloading update, please wait. If it is not completed, please manually download the program from GitHub and replace the original program.": "正在下载更新,请等待。如果一直未完成,请从Github手动下载并覆盖原程序",
-  "Completion": "补全",
+  "Completion": "续写",
   "Parameters": "参数",
   "Stop Sequences": "停止词",
   "When this content appears in the response result, the generation will end.": "响应结果出现该内容时就结束生成",

@@ -153,7 +153,7 @@
   "Restart the app to apply DPI Scaling.": "重启应用以使显示缩放生效",
   "Restart": "重启",
   "API Chat Model Name": "API聊天模型名",
-  "API Completion Model Name": "API补全模型名",
+  "API Completion Model Name": "API续写模型名",
   "Localhost": "本地",
   "Retry": "重试",
   "Delete": "删除",

@@ -254,7 +254,7 @@ export const Configs: FC = observer(() => {
 const newModelPath = modelPath + '-' + strategy.replace(/[:> *+]/g, '-');
 toast(t('Start Converting'), { autoClose: 1000, type: 'info' });
 ConvertModel(commonStore.settings.customPythonPath, modelPath, strategy, newModelPath).then(async () => {
-  if (!await FileExists(newModelPath)) {
+  if (!await FileExists(newModelPath + '.pth')) {
     toast(t('Convert Failed') + ' - ' + await GetPyError(), { type: 'error' });
   } else {
     toast(`${t('Convert Success')} - ${newModelPath}`, { type: 'success' });
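The hunk above suggests that the converter writes its output to the derived path with a `.pth` suffix, so checking the bare path always reported failure. The Python sketch below mirrors the naming rule from the diff to make that concrete. The `.pth` suffix is inferred from the fix rather than confirmed by the converter's source, and both helper names and the example path are hypothetical.

```python
import re


def converted_model_path(model_path: str, strategy: str) -> str:
    """Mirror the diff's `strategy.replace(/[:> *+]/g, '-')` naming rule."""
    return model_path + "-" + re.sub(r"[:> *+]", "-", strategy)


def expected_output_file(model_path: str, strategy: str) -> str:
    # Assumption inferred from the fix: the converter appends ".pth".
    return converted_model_path(model_path, strategy) + ".pth"


print(converted_model_path("models/example", "cuda fp16"))
print(expected_output_file("models/example", "cuda fp16"))
```

Under this assumption, the existence check has to target the second path, which is what the changed line does.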

@@ -1,5 +1,5 @@
 {
-  "version": "1.3.7",
+  "version": "1.3.8",
   "introduction": {
     "en": "RWKV is an open-source, commercially usable large language model with high flexibility and great potential for development.\n### About This Tool\nThis tool aims to lower the barrier of entry for using large language models, making it accessible to everyone. It provides fully automated dependency and model management. You simply need to click and run, following the instructions, to deploy a local large language model. The tool itself is very compact and only requires a single executable file for one-click deployment.\nAdditionally, this tool offers an interface that is fully compatible with the OpenAI API. This means you can use any ChatGPT client as a client for RWKV, enabling capability expansion beyond just chat functionality.\n### Preset Configuration Rules at the Bottom\nThis tool comes with a series of preset configurations to reduce complexity. The naming rules for each configuration represent the following in order: device - required VRAM/memory - model size - model language.\nFor example, \"GPU-8G-3B-EN\" indicates that this configuration is for a graphics card with 8GB of VRAM, a model size of 3 billion parameters, and it uses an English language model.\nLarger model sizes have higher performance and VRAM requirements. Among configurations with the same model size, those with higher VRAM usage will have faster runtime.\nFor example, if you have 12GB of VRAM but running the \"GPU-12G-7B-EN\" configuration is slow, you can downgrade to \"GPU-8G-3B-EN\" for a significant speed improvement.\n### About RWKV\nRWKV is an RNN with Transformer-level LLM performance, which can also be directly trained like a GPT transformer (parallelizable). And it's 100% attention-free. You only need the hidden state at position t to compute the state at position t+1. You can use the \"GPT\" mode to quickly compute the hidden state for the \"RNN\" mode.<br/>So it's combining the best of RNN and transformer - great performance, fast inference, saves VRAM, fast training, \"infinite\" ctx_len, and free sentence embedding (using the final hidden state).",
     "zh": "RWKV是一个开源且允许商用的大语言模型,灵活性很高且极具发展潜力。\n### 关于本工具\n本工具旨在降低大语言模型的使用门槛,做到人人可用,本工具提供了全自动化的依赖和模型管理,你只需要直接点击运行,跟随引导,即可完成本地大语言模型的部署,工具本身体积极小,只需要一个exe即可完成一键部署。\n此外,本工具提供了与OpenAI API完全兼容的接口,这意味着你可以把任意ChatGPT客户端用作RWKV的客户端,实现能力拓展,而不局限于聊天。\n### 底部的预设配置规则\n本工具内置了一系列预设配置,以降低使用难度,每个配置名的规则,依次代表着:设备-所需显存/内存-模型规模-模型语言。\n例如,GPU-8G-3B-CN,表示该配置用于显卡,需要8G显存,模型规模为30亿参数,使用的是中文模型。\n模型规模越大,性能要求越高,显存要求也越高,而同样模型规模的配置中,显存占用越高的,运行速度越快。\n例如当你有12G显存,但运行GPU-12G-7B-CN配置速度比较慢,可降级成GPU-8G-3B-CN,将会大幅提速。\n### 关于RWKV\nRWKV是具有Transformer级别LLM性能的RNN,也可以像GPT Transformer一样直接进行训练(可并行化)。而且它是100% attention-free的。你只需在位置t处获得隐藏状态即可计算位置t + 1处的状态。你可以使用“GPT”模式快速计算用于“RNN”模式的隐藏状态。\n因此,它将RNN和Transformer的优点结合起来 - 高性能、快速推理、节省显存、快速训练、“无限”上下文长度以及免费的语句嵌入(使用最终隐藏状态)。"
