Compare commits

46 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

15ae312b37 | ||

|

|

6938b5b20e | ||

|

|

9b3b06ab04 | ||

|

|

e2a7c93753 | ||

|

|

34349aee0b | ||

|

|

8e79370e95 | ||

|

|

652c35322b | ||

|

|

e2fc57ac24 | ||

|

|

994fc7c828 | ||

|

|

b9a960d984 | ||

|

|

3baf260f4d | ||

|

|

d037ded146 | ||

|

|

622287f3da | ||

|

|

5d12bf74f6 | ||

|

|

c88f9321f5 | ||

|

|

f9f1d5c9fc | ||

|

|

0edec68376 | ||

|

|

ee63dc25f4 | ||

|

|

fee8fe73f2 | ||

|

|

1689f9e7e7 | ||

|

|

a1ed0cb2e9 | ||

|

|

5ee5fa7e6e | ||

|

|

d8c70453ec | ||

|

|

e930eb5967 | ||

|

|

aec6ad636a | ||

|

|

750c91bd3e | ||

|

|

fcc3886db1 | ||

|

|

22afc98be5 | ||

|

|

5b1a9448e6 | ||

|

|

07d89e3eeb | ||

|

|

96e97d9c1e | ||

|

|

bcb125e168 | ||

|

|

6fbb86667c | ||

|

|

2d545604f4 | ||

|

|

7210a7481e | ||

|

|

55210c89e2 | ||

|

|

c725d11dd9 | ||

|

|

ba2a6bd06c | ||

|

|

57b80c6ed0 | ||

|

|

115c59d5e1 | ||

|

|

543ff468b7 | ||

|

|

96ae47989e | ||

|

|

368932a610 | ||

|

|

f2cd531fcb | ||

|

|

511652b71c | ||

|

|

525fb132d6 |

6

.github/workflows/release.yml

vendored

6

.github/workflows/release.yml

vendored

@@ -83,6 +83,9 @@ jobs:

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

rm -rf ./backend-python/wkv_cuda_utils

|

||||

rm ./backend-python/get-pip.py

|

||||

sed -i '1,2d' ./backend-golang/wsl_not_windows.go

|

||||

rm ./backend-golang/wsl.go

|

||||

mv ./backend-golang/wsl_not_windows.go ./backend-golang/wsl.go

|

||||

make

|

||||

mv build/bin/RWKV-Runner build/bin/RWKV-Runner_linux_x64

|

||||

|

||||

@@ -102,6 +105,9 @@ jobs:

|

||||

go install github.com/wailsapp/wails/v2/cmd/wails@latest

|

||||

rm -rf ./backend-python/wkv_cuda_utils

|

||||

rm ./backend-python/get-pip.py

|

||||

sed -i '' '1,2d' ./backend-golang/wsl_not_windows.go

|

||||

rm ./backend-golang/wsl.go

|

||||

mv ./backend-golang/wsl_not_windows.go ./backend-golang/wsl.go

|

||||

make

|

||||

cp build/darwin/Readme_Install.txt build/bin/Readme_Install.txt

|

||||

cp build/bin/RWKV-Runner.app/Contents/MacOS/RWKV-Runner build/bin/RWKV-Runner_darwin_universal

|

||||

|

||||

@@ -1,6 +1,8 @@

|

||||

## Changes

|

||||

|

||||

- improve lora finetune process (need to be refactored)

|

||||

- fix always show `Convert Failed` when converting model

|

||||

- fix input with array type (#96, #107)

|

||||

- change chinese translation of `completion`

|

||||

|

||||

## Install

|

||||

|

||||

|

||||

32

README.md

32

README.md

@@ -49,7 +49,7 @@ English | [简体中文](README_ZH.md) | [日本語](README_JA.md)

|

||||

|

||||

#### Default configs has enabled custom CUDA kernel acceleration, which is much faster and consumes much less VRAM. If you encounter possible compatibility issues, go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`.

|

||||

|

||||

#### If Windows Defender claims this is a virus, you can try downloading [v1.0.8](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.8)/[v1.0.9](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.9) and letting it update automatically to the latest version, or add it to the trusted list.

|

||||

#### If Windows Defender claims this is a virus, you can try downloading [v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) and letting it update automatically to the latest version, or add it to the trusted list.

|

||||

|

||||

#### For different tasks, adjusting API parameters can achieve better results. For example, for translation tasks, you can try setting Temperature to 1 and Top_P to 0.3.

|

||||

|

||||

@@ -64,6 +64,8 @@ English | [简体中文](README_ZH.md) | [日本語](README_JA.md)

|

||||

- Easy-to-understand and operate parameter configuration

|

||||

- Built-in model conversion tool

|

||||

- Built-in download management and remote model inspection

|

||||

- Built-in one-click LoRA Finetune

|

||||

- Can also be used as an OpenAI ChatGPT and GPT-Playground client

|

||||

- Multilingual localization

|

||||

- Theme switching

|

||||

- Automatic updates

|

||||

@@ -126,46 +128,44 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")

|

||||

```

|

||||

|

||||

## Todo

|

||||

|

||||

- [ ] Model training functionality

|

||||

- [x] CUDA operator int8 acceleration

|

||||

- [x] macOS support

|

||||

- [x] Linux support

|

||||

- [ ] Local State Cache DB

|

||||

|

||||

## Related Repositories:

|

||||

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

|

||||

## Preview

|

||||

|

||||

### Homepage

|

||||

|

||||

|

||||

|

||||

|

||||

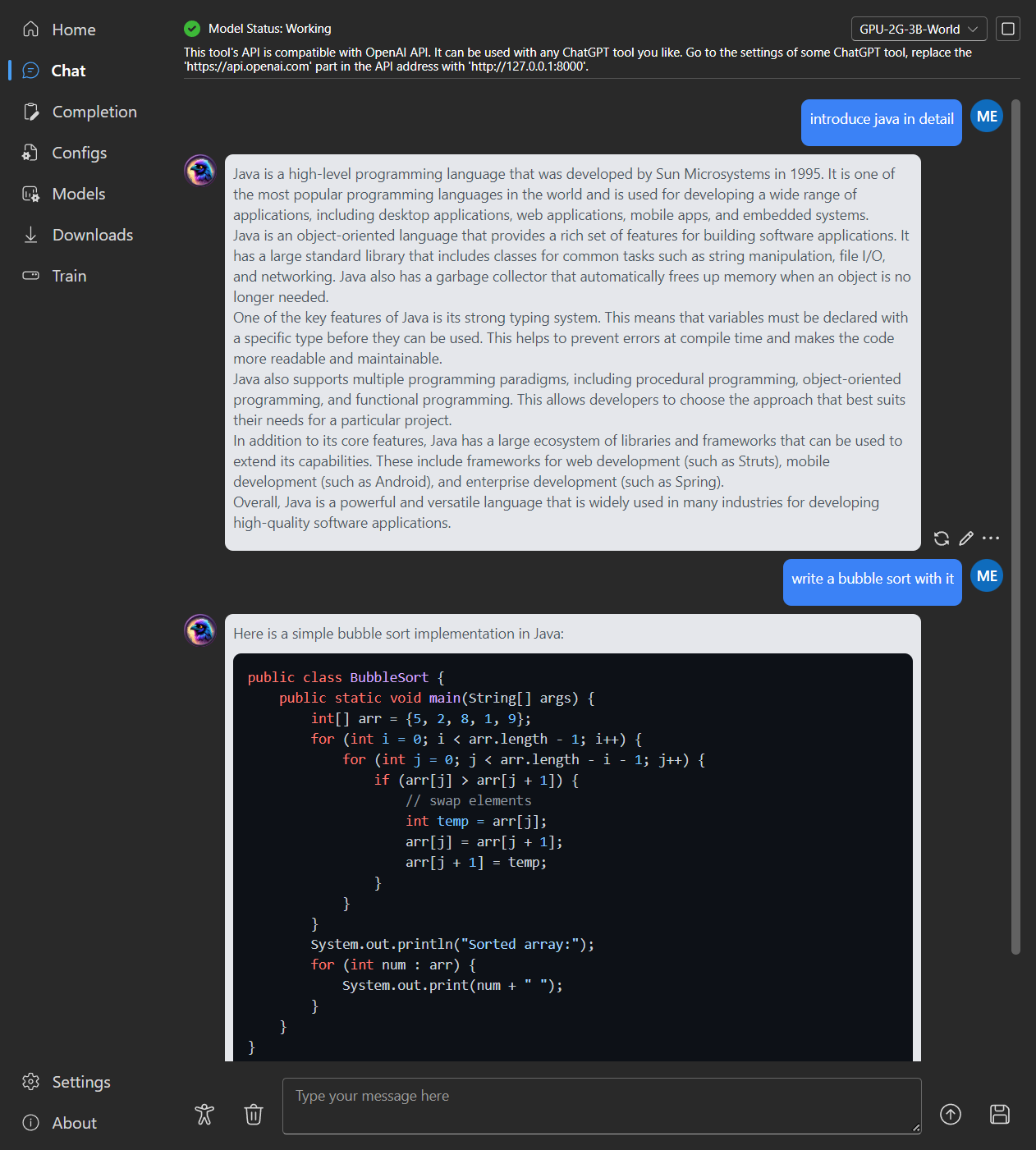

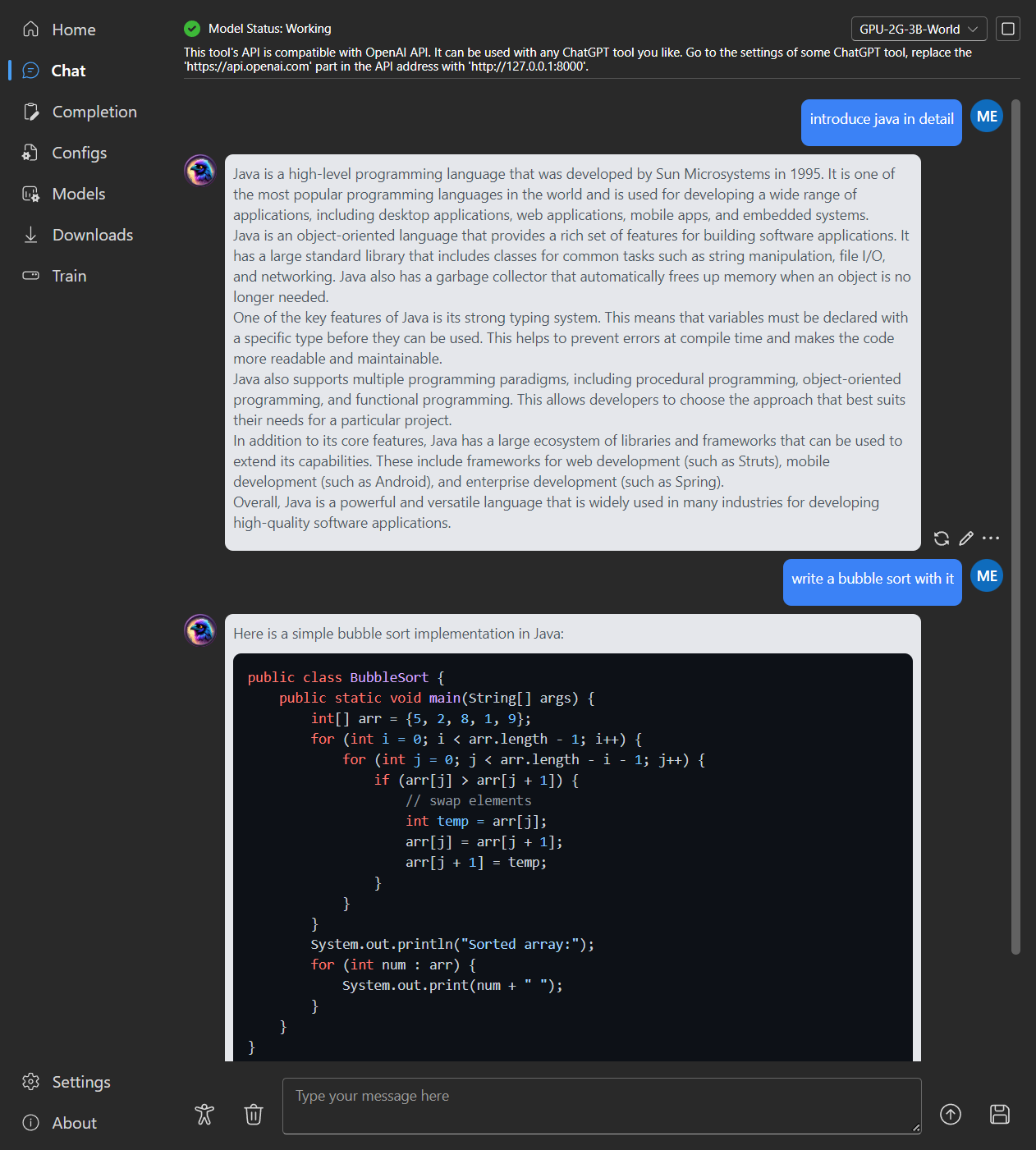

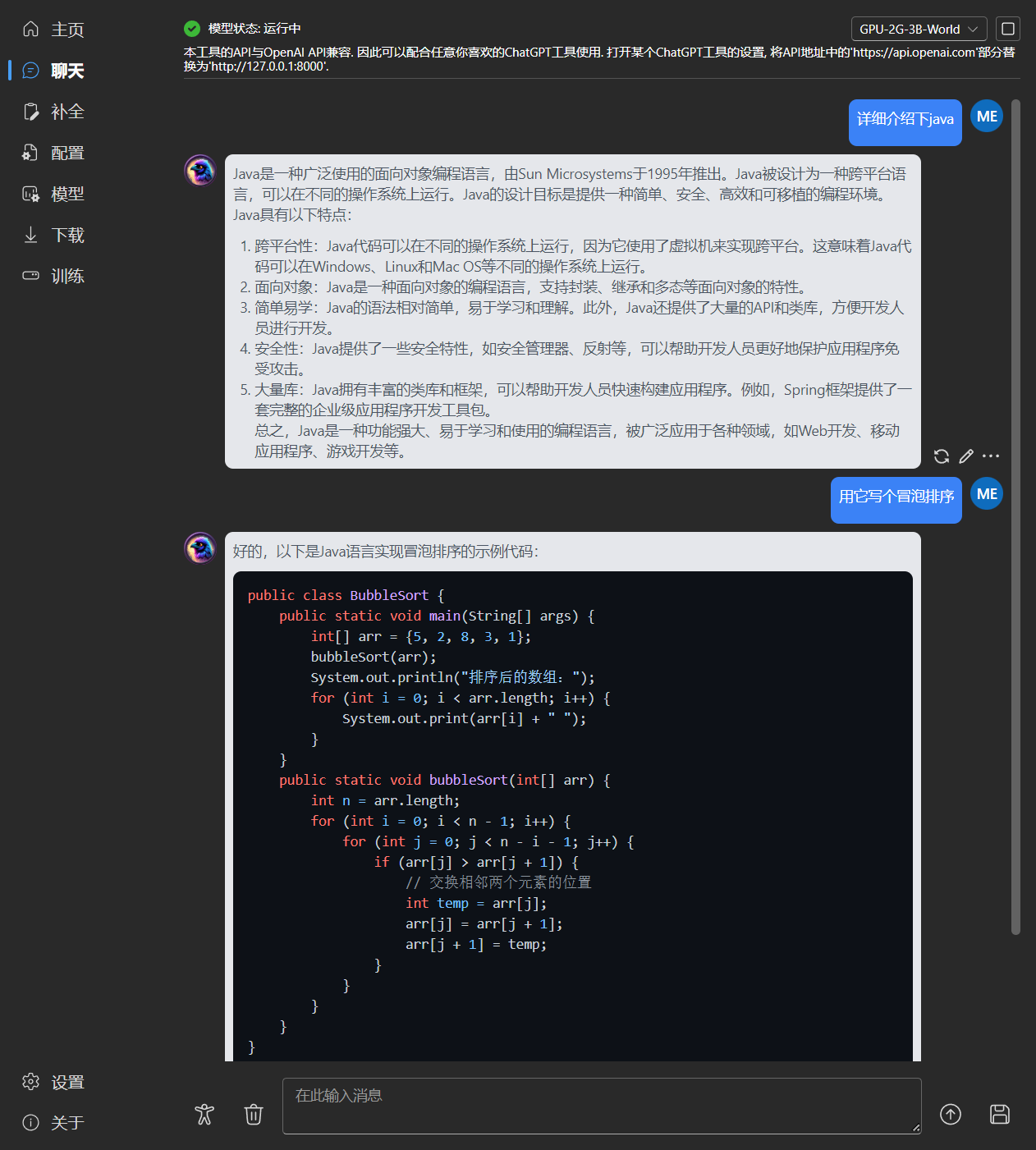

### Chat

|

||||

|

||||

|

||||

|

||||

|

||||

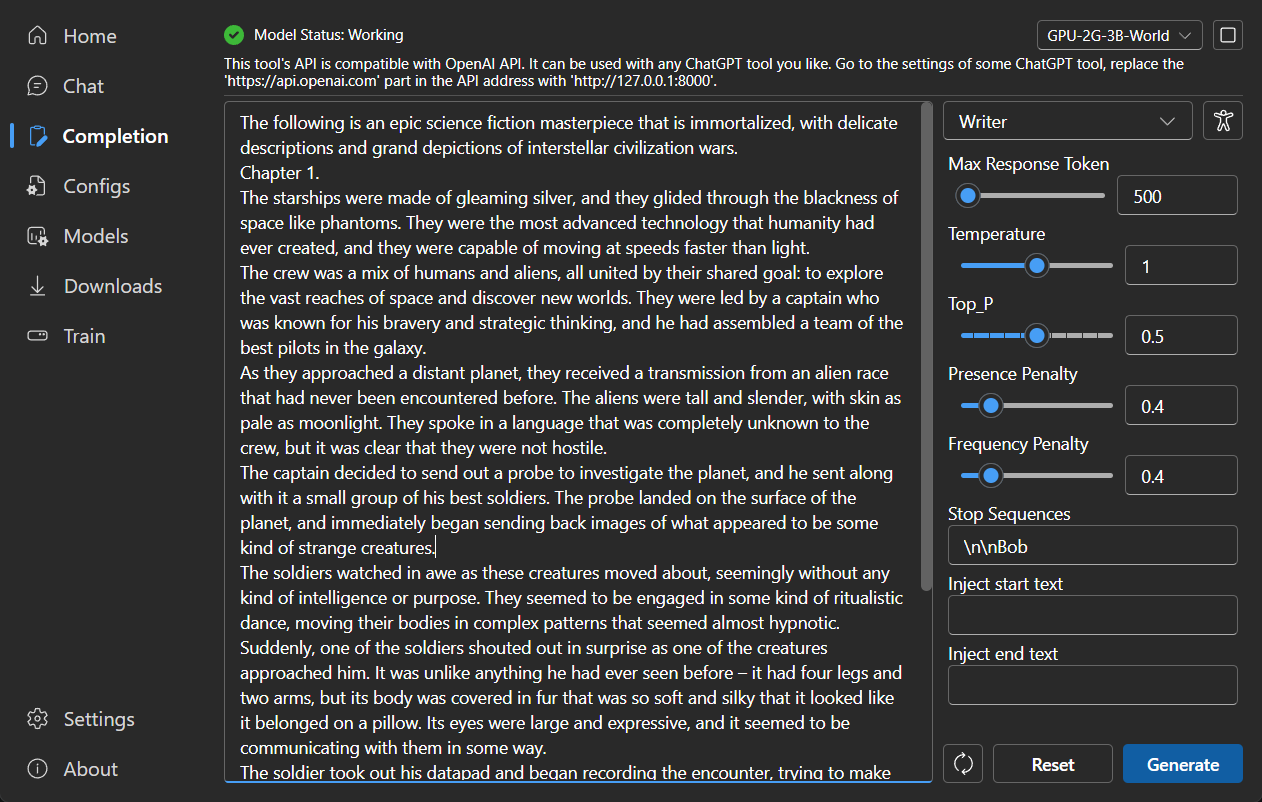

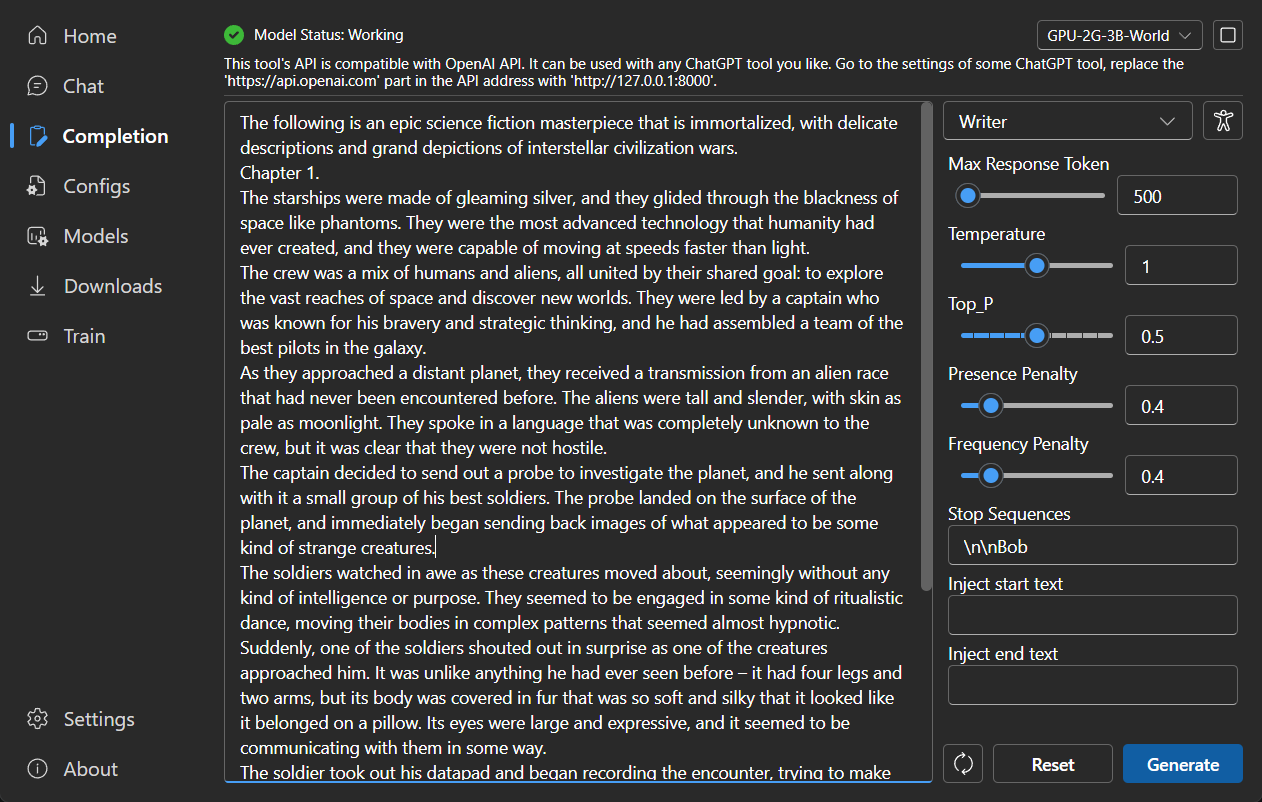

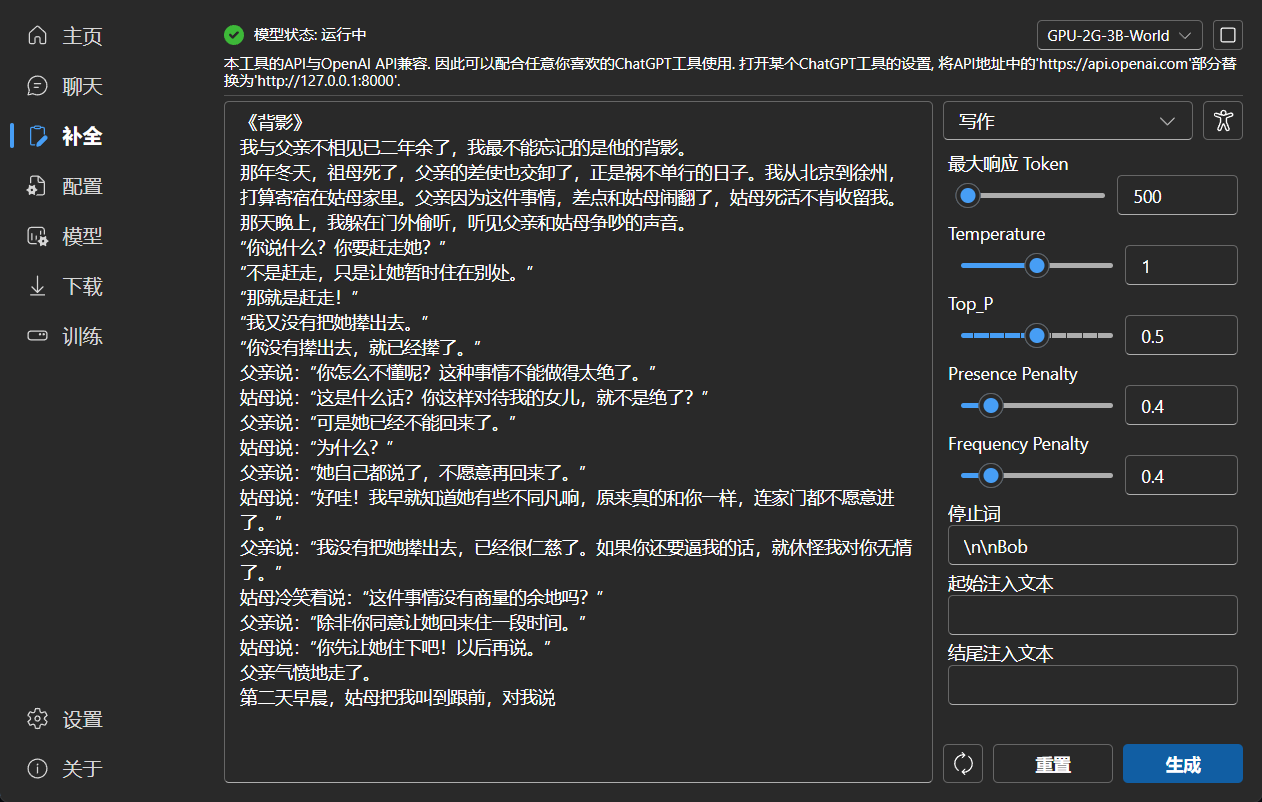

### Completion

|

||||

|

||||

|

||||

|

||||

|

||||

### Configuration

|

||||

|

||||

|

||||

|

||||

|

||||

### Model Management

|

||||

|

||||

|

||||

|

||||

|

||||

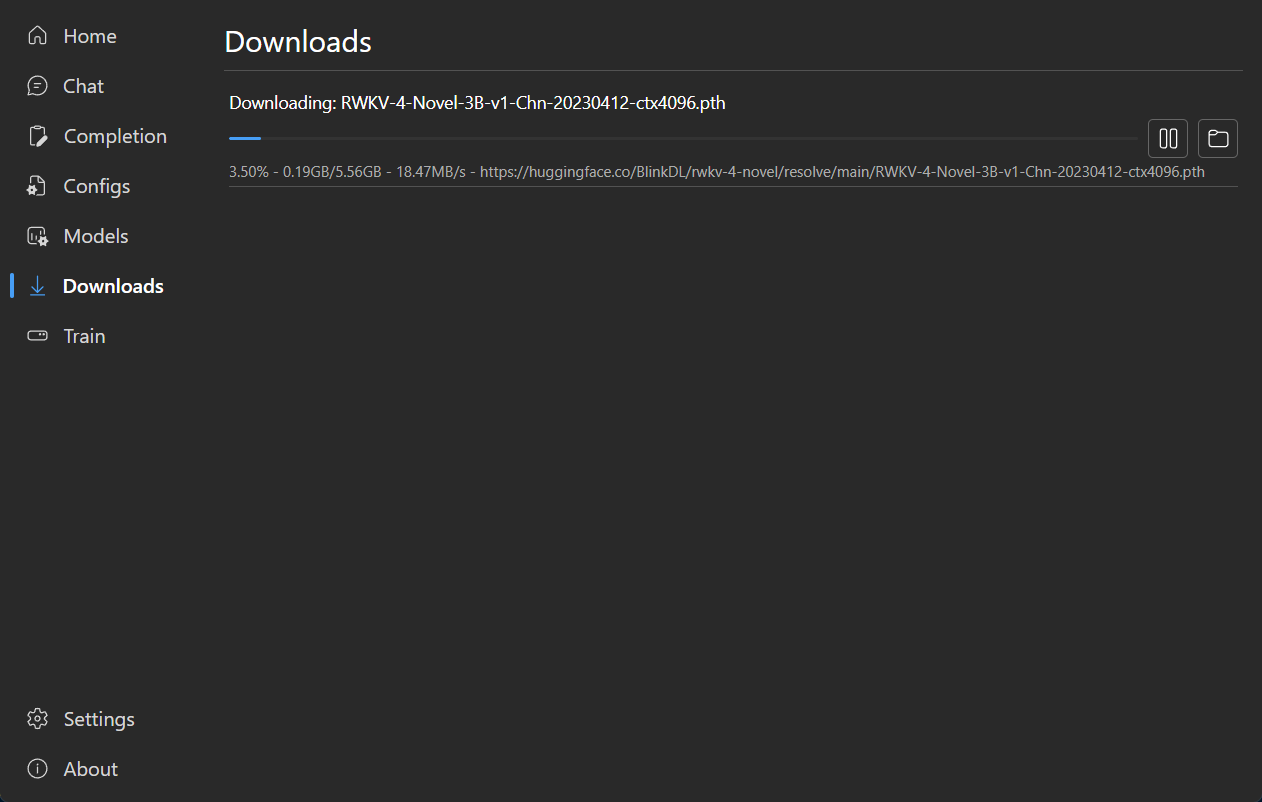

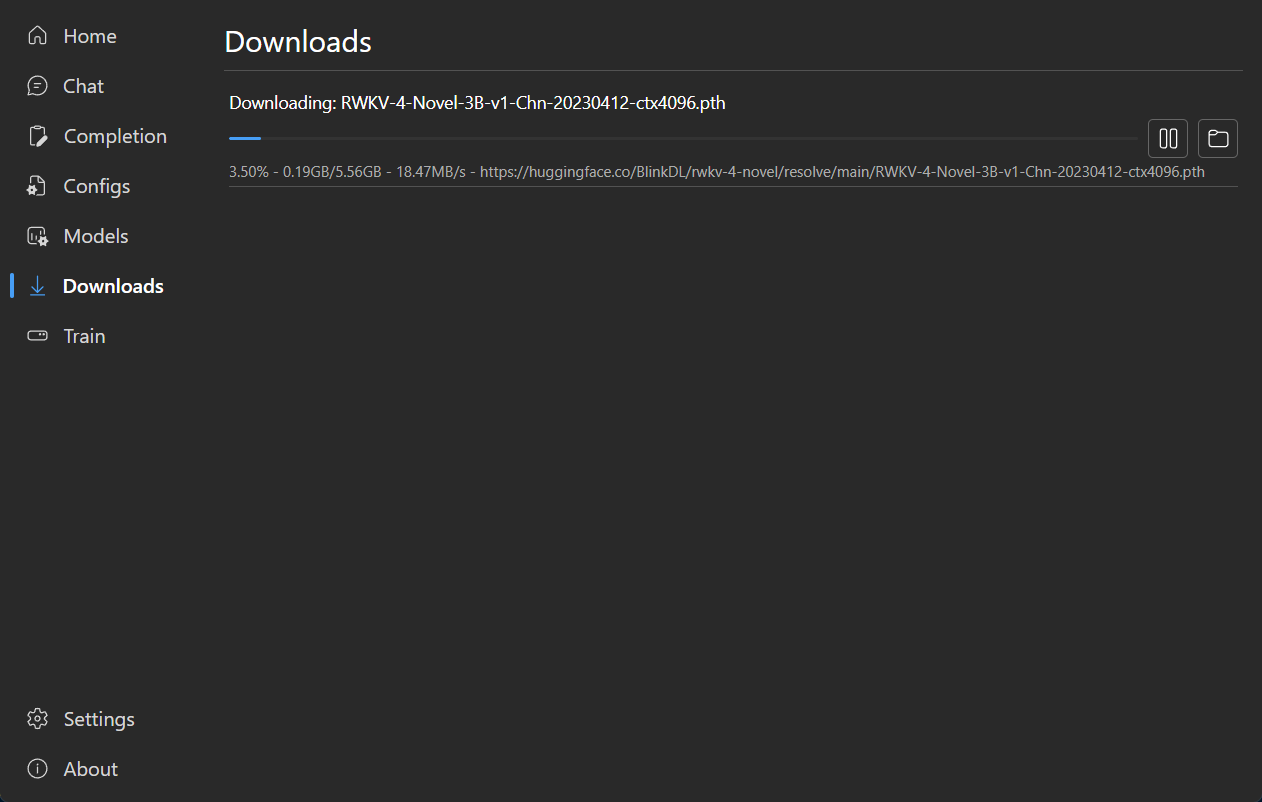

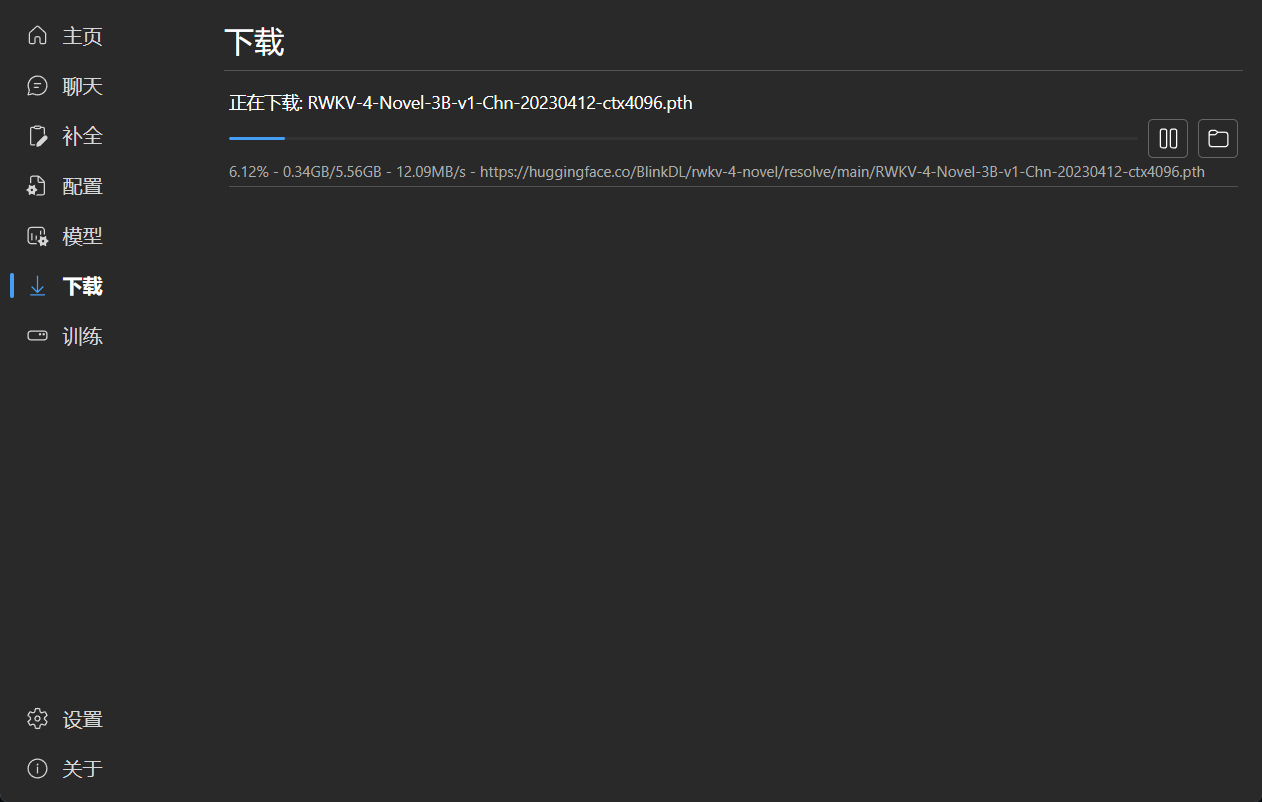

### Download Management

|

||||

|

||||

|

||||

|

||||

|

||||

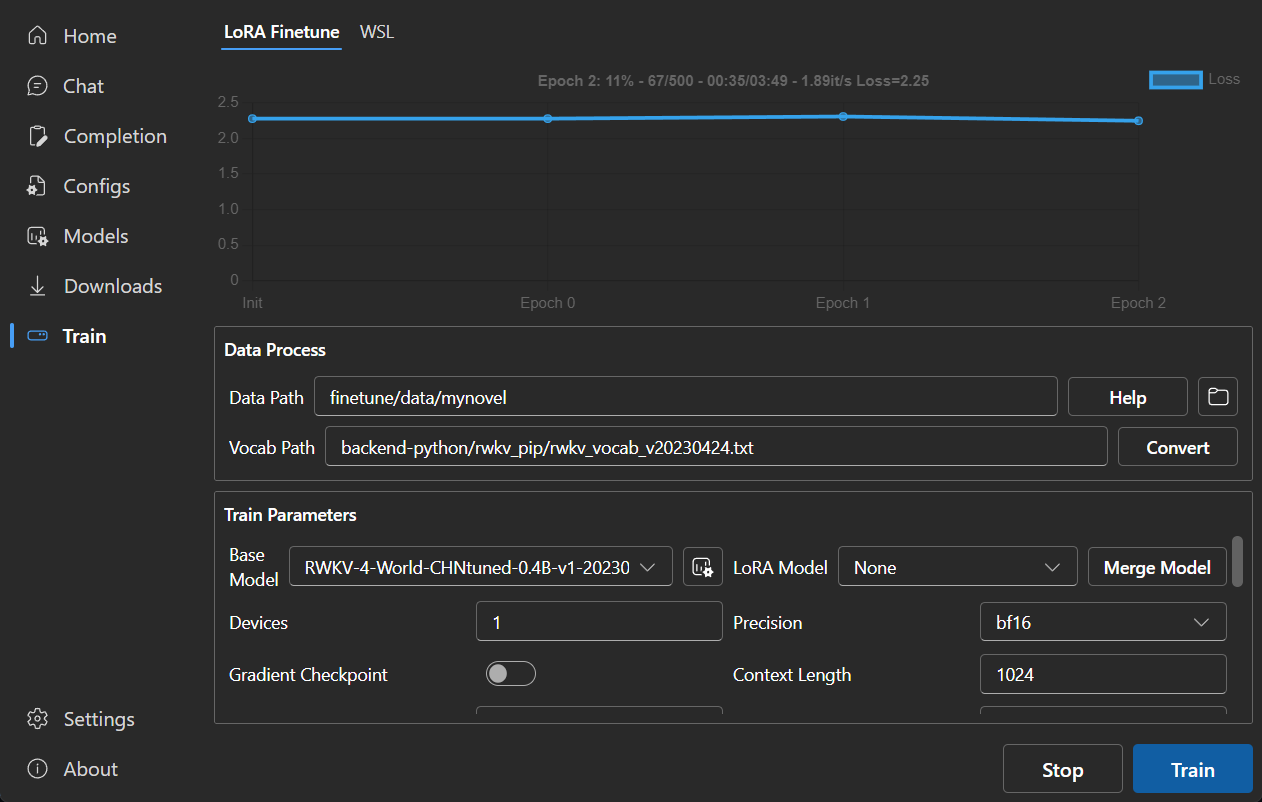

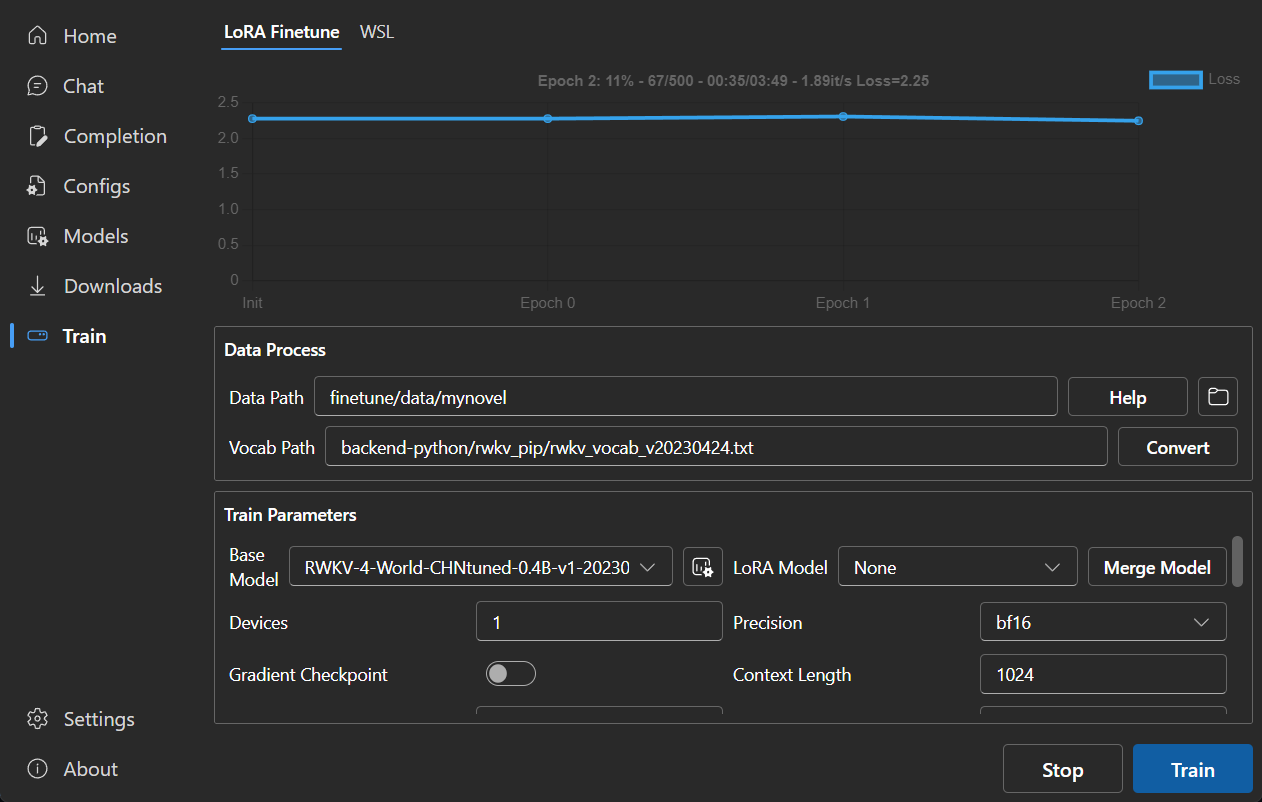

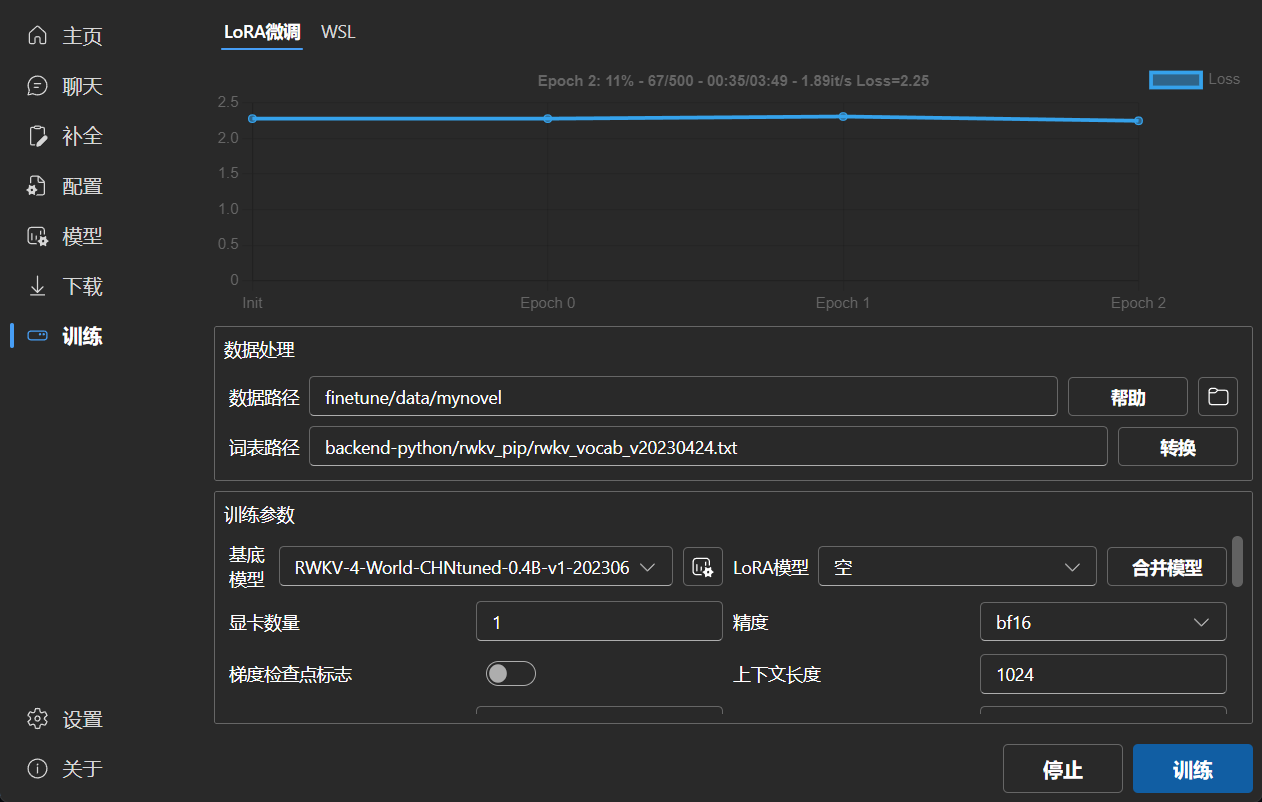

### LoRA Finetune

|

||||

|

||||

|

||||

|

||||

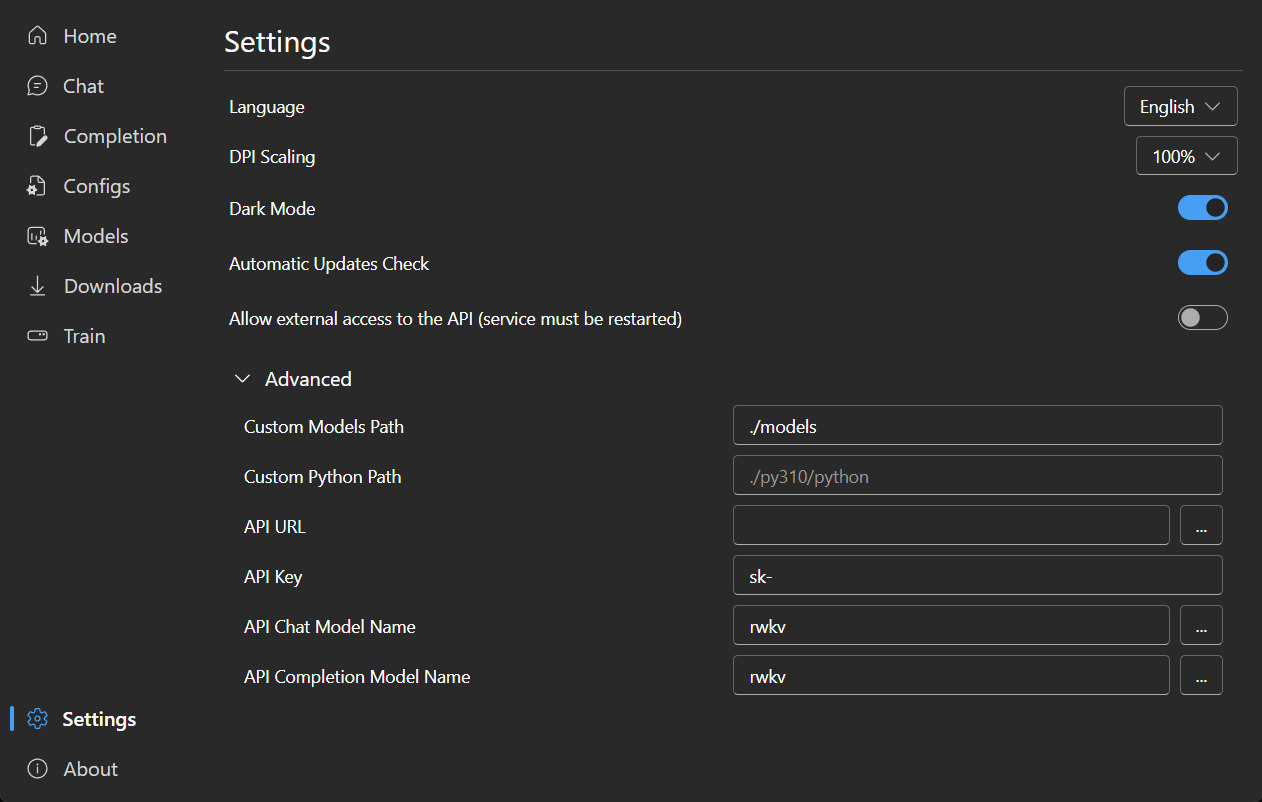

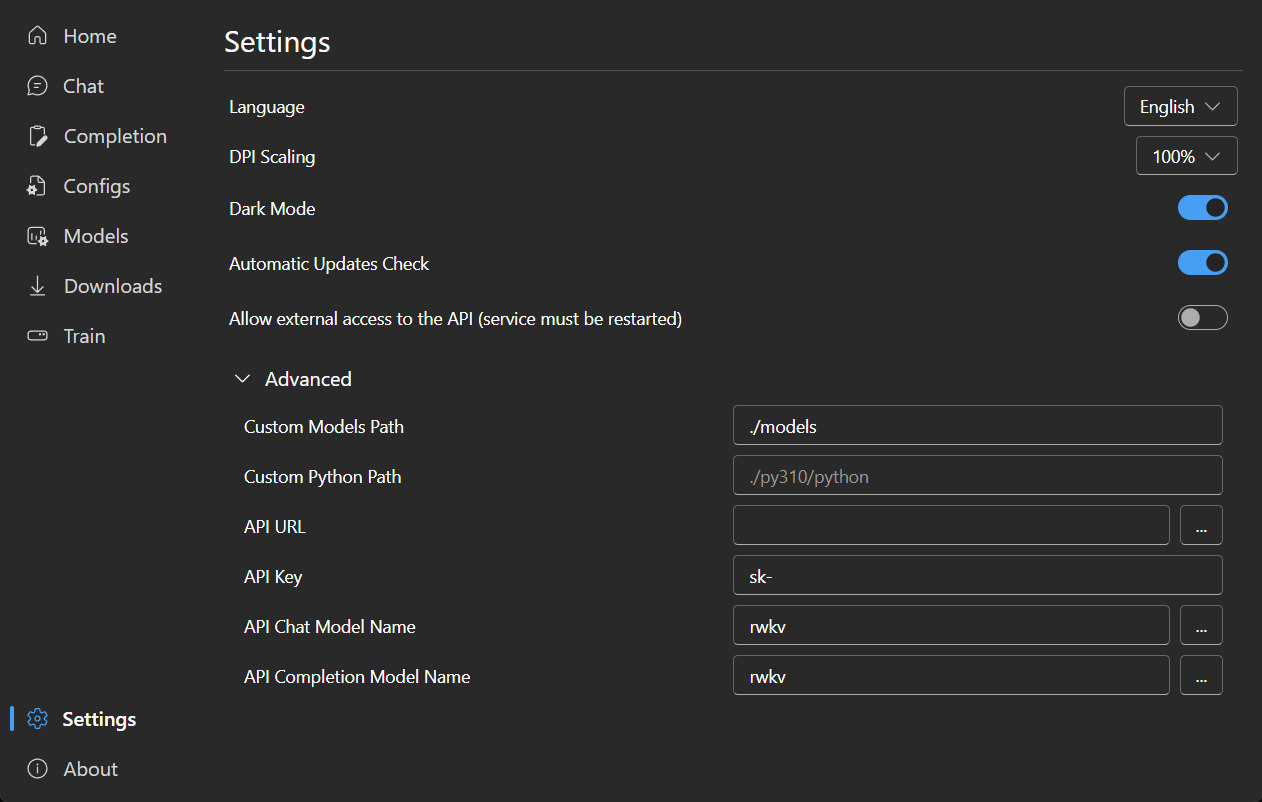

### Settings

|

||||

|

||||

|

||||

|

||||

|

||||

42

README_JA.md

42

README_JA.md

@@ -24,22 +24,32 @@

|

||||

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [プレビュー](#Preview) | [ダウンロード][download-url] | [サーバーデプロイ例](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

|

||||

|

||||

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

|

||||

|

||||

[license-url]: https://github.com/josStorer/RWKV-Runner/blob/master/LICENSE

|

||||

|

||||

[release-image]: https://img.shields.io/github/release/josStorer/RWKV-Runner.svg

|

||||

|

||||

[release-url]: https://github.com/josStorer/RWKV-Runner/releases/latest

|

||||

|

||||

[download-url]: https://github.com/josStorer/RWKV-Runner/releases

|

||||

|

||||

[Windows-image]: https://img.shields.io/badge/-Windows-blue?logo=windows

|

||||

|

||||

[Windows-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/windows/Readme_Install.txt

|

||||

|

||||

[MacOS-image]: https://img.shields.io/badge/-MacOS-black?logo=apple

|

||||

|

||||

[MacOS-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/darwin/Readme_Install.txt

|

||||

|

||||

[Linux-image]: https://img.shields.io/badge/-Linux-black?logo=linux

|

||||

|

||||

[Linux-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/linux/Readme_Install.txt

|

||||

|

||||

</div>

|

||||

|

||||

#### デフォルトの設定はカスタム CUDA カーネルアクセラレーションを有効にしています。互換性の問題が発生する可能性がある場合は、コンフィグページに移動し、`Use Custom CUDA kernel to Accelerate` をオフにしてください。

|

||||

|

||||

#### Windows Defender がこれをウイルスだと主張する場合は、[v1.0.8](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.8) / [v1.0.9](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.9) をダウンロードして最新版に自動更新させるか、信頼済みリストに追加してみてください。

|

||||

#### Windows Defender がこれをウイルスだと主張する場合は、[v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip) をダウンロードして最新版に自動更新させるか、信頼済みリストに追加してみてください。

|

||||

|

||||

#### 異なるタスクについては、API パラメータを調整することで、より良い結果を得ることができます。例えば、翻訳タスクの場合、Temperature を 1 に、Top_P を 0.3 に設定してみてください。

|

||||

|

||||

@@ -54,6 +64,8 @@

|

||||

- 分かりやすく操作しやすいパラメータ設定

|

||||

- 内蔵モデル変換ツール

|

||||

- ダウンロード管理とリモートモデル検査機能内蔵

|

||||

- 内蔵のLoRA微調整機能を搭載しています

|

||||

- このプログラムは、OpenAI ChatGPTとGPT Playgroundのクライアントとしても使用できます

|

||||

- 多言語ローカライズ

|

||||

- テーマ切り替え

|

||||

- 自動アップデート

|

||||

@@ -116,46 +128,44 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")

|

||||

```

|

||||

|

||||

## Todo

|

||||

|

||||

- [ ] モデル学習機能

|

||||

- [x] CUDA オペレータ int8 アクセラレーション

|

||||

- [x] macOS サポート

|

||||

- [x] Linux サポート

|

||||

- [ ] ローカルステートキャッシュ DB

|

||||

|

||||

## 関連リポジトリ:

|

||||

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

|

||||

## プレビュー

|

||||

|

||||

### ホームページ

|

||||

|

||||

|

||||

|

||||

|

||||

### チャット

|

||||

|

||||

|

||||

|

||||

|

||||

### 補完

|

||||

|

||||

|

||||

|

||||

|

||||

### コンフィグ

|

||||

|

||||

|

||||

|

||||

|

||||

### モデル管理

|

||||

|

||||

|

||||

|

||||

|

||||

### ダウンロード管理

|

||||

|

||||

|

||||

|

||||

|

||||

### LoRA Finetune

|

||||

|

||||

|

||||

|

||||

### 設定

|

||||

|

||||

|

||||

|

||||

|

||||

40

README_ZH.md

40

README_ZH.md

@@ -20,7 +20,7 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。

|

||||

[![MacOS][MacOS-image]][MacOS-url]

|

||||

[![Linux][Linux-image]][Linux-url]

|

||||

|

||||

[视频演示](https://www.bilibili.com/video/BV1hM4y1v76R) | [疑难解答](https://www.bilibili.com/read/cv23921171) | [预览](#Preview) | [下载][download-url] | [懒人包](https://pan.baidu.com/s/1wchIUHgne3gncIiLIeKBEQ?pwd=1111) | [服务器部署示例](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

|

||||

[视频演示](https://www.bilibili.com/video/BV1hM4y1v76R) | [疑难解答](https://www.bilibili.com/read/cv23921171) | [预览](#Preview) | [下载][download-url] | [懒人包](https://pan.baidu.com/s/1zdzZ_a0uM3gDqi6pXIZVAA?pwd=1111) | [服务器部署示例](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

|

||||

|

||||

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

|

||||

|

||||

@@ -46,11 +46,9 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。

|

||||

|

||||

</div>

|

||||

|

||||

#### 注意 目前RWKV中文模型质量一般,推荐使用英文模型或World(全球语言)体验实际RWKV能力

|

||||

|

||||

#### 预设配置已经开启自定义CUDA算子加速,速度更快,且显存消耗更少。如果你遇到可能的兼容性问题,前往配置页面,关闭`使用自定义CUDA算子加速`

|

||||

|

||||

#### 如果Windows Defender说这是一个病毒,你可以尝试下载[v1.0.8](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.8)/[v1.0.9](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.9)然后让其自动更新到最新版,或添加信任

|

||||

#### 如果Windows Defender说这是一个病毒,你可以尝试下载[v1.3.7_win.zip](https://github.com/josStorer/RWKV-Runner/releases/download/v1.3.7/RWKV-Runner_win.zip),然后让其自动更新到最新版,或添加信任

|

||||

|

||||

#### 对于不同的任务,调整API参数会获得更好的效果,例如对于翻译任务,你可以尝试设置Temperature为1,Top_P为0.3

|

||||

|

||||

@@ -60,10 +58,12 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。

|

||||

- 与OpenAI API完全兼容,一切ChatGPT客户端,都是RWKV客户端。启动模型后,打开 http://127.0.0.1:8000/docs 查看详细内容

|

||||

- 全自动依赖安装,你只需要一个轻巧的可执行程序

|

||||

- 预设了2G至32G显存的配置,几乎在各种电脑上工作良好

|

||||

- 自带用户友好的聊天和补全交互页面

|

||||

- 自带用户友好的聊天和续写交互页面

|

||||

- 易于理解和操作的参数配置

|

||||

- 内置模型转换工具

|

||||

- 内置下载管理和远程模型检视

|

||||

- 内置一键LoRA微调

|

||||

- 也可用作 OpenAI ChatGPT 和 GPT Playground 客户端

|

||||

- 多语言本地化

|

||||

- 主题切换

|

||||

- 自动更新

|

||||

@@ -126,46 +126,44 @@ for i in np.argsort(embeddings_cos_sim)[::-1]:

|

||||

print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")

|

||||

```

|

||||

|

||||

## Todo

|

||||

|

||||

- [ ] 模型训练功能

|

||||

- [x] CUDA算子int8提速

|

||||

- [x] macOS支持

|

||||

- [x] linux支持

|

||||

- [ ] 本地状态缓存数据库

|

||||

|

||||

## 相关仓库:

|

||||

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

|

||||

## Preview

|

||||

|

||||

### 主页

|

||||

|

||||

|

||||

|

||||

|

||||

### 聊天

|

||||

|

||||

|

||||

|

||||

|

||||

### 补全

|

||||

### 续写

|

||||

|

||||

|

||||

|

||||

|

||||

### 配置

|

||||

|

||||

|

||||

|

||||

|

||||

### 模型管理

|

||||

|

||||

|

||||

|

||||

|

||||

### 下载管理

|

||||

|

||||

|

||||

|

||||

|

||||

### LoRA微调

|

||||

|

||||

|

||||

|

||||

### 设置

|

||||

|

||||

|

||||

|

||||

|

||||

@@ -41,6 +41,14 @@ func (a *App) OnStartup(ctx context.Context) {

|

||||

a.cmdPrefix = "cd " + a.exDir + " && "

|

||||

}

|

||||

|

||||

os.Mkdir(a.exDir+"models", os.ModePerm)

|

||||

os.Mkdir(a.exDir+"lora-models", os.ModePerm)

|

||||

os.Mkdir(a.exDir+"finetune/json2binidx_tool/data", os.ModePerm)

|

||||

f, err := os.Create(a.exDir + "lora-models/train_log.txt")

|

||||

if err == nil {

|

||||

f.Close()

|

||||

}

|

||||

|

||||

a.downloadLoop()

|

||||

|

||||

watcher, err := fsnotify.NewWatcher()

|

||||

|

||||

@@ -1,6 +1,7 @@

|

||||

package backend_golang

|

||||

|

||||

import (

|

||||

"encoding/json"

|

||||

"errors"

|

||||

"os"

|

||||

"os/exec"

|

||||

@@ -43,6 +44,39 @@ func (a *App) ConvertData(python string, input string, outputPrefix string, voca

|

||||

if strings.Contains(vocab, "rwkv_vocab_v20230424") {

|

||||

tokenizerType = "RWKVTokenizer"

|

||||

}

|

||||

|

||||

input = strings.TrimSuffix(input, "/")

|

||||

if fi, err := os.Stat(input); err == nil && fi.IsDir() {

|

||||

files, err := os.ReadDir(input)

|

||||

if err != nil {

|

||||

return "", err

|

||||

}

|

||||

jsonlFile, err := os.Create(outputPrefix + ".jsonl")

|

||||

if err != nil {

|

||||

return "", err

|

||||

}

|

||||

defer jsonlFile.Close()

|

||||

for _, file := range files {

|

||||

if file.IsDir() || !strings.HasSuffix(file.Name(), ".txt") {

|

||||

continue

|

||||

}

|

||||

textContent, err := os.ReadFile(input + "/" + file.Name())

|

||||

if err != nil {

|

||||

return "", err

|

||||

}

|

||||

textJson, err := json.Marshal(map[string]string{"text": strings.ReplaceAll(strings.ReplaceAll(string(textContent), "\r\n", "\n"), "\r", "\n")})

|

||||

if err != nil {

|

||||

return "", err

|

||||

}

|

||||

if _, err := jsonlFile.WriteString(string(textJson) + "\n"); err != nil {

|

||||

return "", err

|

||||

}

|

||||

}

|

||||

input = outputPrefix + ".jsonl"

|

||||

} else if err != nil {

|

||||

return "", err

|

||||

}

|

||||

|

||||

return Cmd(python, "./finetune/json2binidx_tool/tools/preprocess_data.py", "--input", input, "--output-prefix", outputPrefix, "--vocab", vocab,

|

||||

"--tokenizer-type", tokenizerType, "--dataset-impl", "mmap", "--append-eod")

|

||||

}

|

||||

@@ -113,3 +147,11 @@ func (a *App) InstallPyDep(python string, cnMirror bool) (string, error) {

|

||||

return Cmd(python, "-m", "pip", "install", "-r", "./backend-python/requirements_without_cyac.txt")

|

||||

}

|

||||

}

|

||||

|

||||

func (a *App) GetPyError() string {

|

||||

content, err := os.ReadFile("./error.txt")

|

||||

if err != nil {

|

||||

return ""

|

||||

}

|

||||

return string(content)

|

||||

}

|

||||

|

||||

@@ -119,8 +119,10 @@ func (a *App) WslStop() error {

|

||||

if !running {

|

||||

return errors.New("wsl not running")

|

||||

}

|

||||

err = cmd.Process.Kill()

|

||||

cmd = nil

|

||||

if cmd != nil {

|

||||

err = cmd.Process.Kill()

|

||||

cmd = nil

|

||||

}

|

||||

// stdin.Close()

|

||||

stdin = nil

|

||||

distro = nil

|

||||

|

||||

22

backend-python/convert_model.py

vendored

22

backend-python/convert_model.py

vendored

@@ -219,13 +219,17 @@ def get_args():

|

||||

return p.parse_args()

|

||||

|

||||

|

||||

args = get_args()

|

||||

if not args.quiet:

|

||||

print(f"** {args}")

|

||||

try:

|

||||

args = get_args()

|

||||

if not args.quiet:

|

||||

print(f"** {args}")

|

||||

|

||||

RWKV(

|

||||

getattr(args, "in"),

|

||||

args.strategy,

|

||||

verbose=not args.quiet,

|

||||

convert_and_save_and_exit=args.out,

|

||||

)

|

||||

RWKV(

|

||||

getattr(args, "in"),

|

||||

args.strategy,

|

||||

verbose=not args.quiet,

|

||||

convert_and_save_and_exit=args.out,

|

||||

)

|

||||

except Exception as e:

|

||||

with open("error.txt", "w") as f:

|

||||

f.write(str(e))

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

import asyncio

|

||||

import json

|

||||

from threading import Lock

|

||||

from typing import List

|

||||

from typing import List, Union

|

||||

import base64

|

||||

|

||||

from fastapi import APIRouter, Request, status, HTTPException

|

||||

@@ -44,7 +44,7 @@ class ChatCompletionBody(ModelConfigBody):

|

||||

|

||||

|

||||

class CompletionBody(ModelConfigBody):

|

||||

prompt: str

|

||||

prompt: Union[str, List[str]]

|

||||

model: str = "rwkv"

|

||||

stream: bool = False

|

||||

stop: str = None

|

||||

@@ -306,9 +306,12 @@ async def completions(body: CompletionBody, request: Request):

|

||||

if model is None:

|

||||

raise HTTPException(status.HTTP_400_BAD_REQUEST, "model not loaded")

|

||||

|

||||

if body.prompt is None or body.prompt == "":

|

||||

if body.prompt is None or body.prompt == "" or body.prompt == []:

|

||||

raise HTTPException(status.HTTP_400_BAD_REQUEST, "prompt not found")

|

||||

|

||||

if type(body.prompt) == list:

|

||||

body.prompt = body.prompt[0] # TODO: support multiple prompts

|

||||

|

||||

if body.stream:

|

||||

return EventSourceResponse(

|

||||

eval_rwkv(model, request, body, body.prompt, body.stream, body.stop, False)

|

||||

@@ -323,7 +326,7 @@ async def completions(body: CompletionBody, request: Request):

|

||||

|

||||

|

||||

class EmbeddingsBody(BaseModel):

|

||||

input: str or List[str] or List[List[int]]

|

||||

input: Union[str, List[str], List[List[int]]]

|

||||

model: str = "rwkv"

|

||||

encoding_format: str = None

|

||||

fast_mode: bool = False

|

||||

|

||||

@@ -52,6 +52,14 @@ def switch_model(body: SwitchModelBody, response: Response, request: Request):

|

||||

if body.model == "":

|

||||

return "success"

|

||||

|

||||

if "->" in body.strategy:

|

||||

state_cache.disable_state_cache()

|

||||

else:

|

||||

try:

|

||||

state_cache.enable_state_cache()

|

||||

except HTTPException:

|

||||

pass

|

||||

|

||||

os.environ["RWKV_CUDA_ON"] = "1" if body.customCuda else "0"

|

||||

|

||||

global_var.set(global_var.Model_Status, global_var.ModelStatus.Loading)

|

||||

|

||||

@@ -34,6 +34,32 @@ def init():

|

||||

print("cyac not found")

|

||||

|

||||

|

||||

@router.post("/disable-state-cache")

|

||||

def disable_state_cache():

|

||||

global trie, dtrie

|

||||

|

||||

trie = None

|

||||

dtrie = {}

|

||||

gc.collect()

|

||||

|

||||

return "success"

|

||||

|

||||

|

||||

@router.post("/enable-state-cache")

|

||||

def enable_state_cache():

|

||||

global trie, dtrie

|

||||

try:

|

||||

import cyac

|

||||

|

||||

trie = cyac.Trie()

|

||||

dtrie = {}

|

||||

gc.collect()

|

||||

|

||||

return "success"

|

||||

except ModuleNotFoundError:

|

||||

raise HTTPException(status.HTTP_400_BAD_REQUEST, "cyac not found")

|

||||

|

||||

|

||||

class AddStateBody(BaseModel):

|

||||

prompt: str

|

||||

tokens: List[str]

|

||||

@@ -85,6 +111,8 @@ def reset_state():

|

||||

if trie is None:

|

||||

raise HTTPException(status.HTTP_400_BAD_REQUEST, "trie not loaded")

|

||||

|

||||

import cyac

|

||||

|

||||

trie = cyac.Trie()

|

||||

dtrie = {}

|

||||

gc.collect()

|

||||

|

||||

BIN

build/appicon.png

vendored

BIN

build/appicon.png

vendored

Binary file not shown.

|

Before Width: | Height: | Size: 120 KiB After Width: | Height: | Size: 83 KiB |

BIN

build/windows/icon.ico

vendored

BIN

build/windows/icon.ico

vendored

Binary file not shown.

|

Before Width: | Height: | Size: 167 KiB After Width: | Height: | Size: 175 KiB |

@@ -8,6 +8,12 @@ if [[ ${cnMirror} == 1 ]]; then

|

||||

fi

|

||||

fi

|

||||

|

||||

if dpkg -s "gcc" >/dev/null 2>&1; then

|

||||

echo "gcc installed"

|

||||

else

|

||||

sudo apt -y install gcc

|

||||

fi

|

||||

|

||||

if dpkg -s "python3-pip" >/dev/null 2>&1; then

|

||||

echo "pip installed"

|

||||

else

|

||||

@@ -20,14 +26,14 @@ else

|

||||

sudo apt -y install ninja-build

|

||||

fi

|

||||

|

||||

if dpkg -s "cuda" >/dev/null 2>&1; then

|

||||

echo "cuda installed"

|

||||

if dpkg -s "cuda" >/dev/null 2>&1 && dpkg -s "cuda" | grep Version | awk '{print $2}' | grep -q "12"; then

|

||||

echo "cuda 12 installed"

|

||||

else

|

||||

wget https://developer.download.nvidia.com/compute/cuda/repos/wsl-ubuntu/x86_64/cuda-wsl-ubuntu.pin

|

||||

wget -N https://developer.download.nvidia.com/compute/cuda/repos/wsl-ubuntu/x86_64/cuda-wsl-ubuntu.pin

|

||||

sudo mv cuda-wsl-ubuntu.pin /etc/apt/preferences.d/cuda-repository-pin-600

|

||||

wget https://developer.download.nvidia.com/compute/cuda/11.7.0/local_installers/cuda-repo-wsl-ubuntu-11-7-local_11.7.0-1_amd64.deb

|

||||

sudo dpkg -i cuda-repo-wsl-ubuntu-11-7-local_11.7.0-1_amd64.deb

|

||||

sudo cp /var/cuda-repo-wsl-ubuntu-11-7-local/cuda-*-keyring.gpg /usr/share/keyrings/

|

||||

wget -N https://developer.download.nvidia.com/compute/cuda/12.2.0/local_installers/cuda-repo-wsl-ubuntu-12-2-local_12.2.0-1_amd64.deb

|

||||

sudo dpkg -i cuda-repo-wsl-ubuntu-12-2-local_12.2.0-1_amd64.deb

|

||||

sudo cp /var/cuda-repo-wsl-ubuntu-12-2-local/cuda-*-keyring.gpg /usr/share/keyrings/

|

||||

sudo apt-get update

|

||||

sudo apt-get -y install cuda

|

||||

fi

|

||||

|

||||

@@ -17,11 +17,14 @@

|

||||

|

||||

"""Processing data for pretraining."""

|

||||

|

||||

import argparse

|

||||

import multiprocessing

|

||||

import os

|

||||

import sys

|

||||

|

||||

sys.path.append(os.path.dirname(os.path.realpath(__file__)))

|

||||

|

||||

import argparse

|

||||

import multiprocessing

|

||||

|

||||

import lm_dataformat as lmd

|

||||

import numpy as np

|

||||

|

||||

@@ -240,4 +243,8 @@ def main():

|

||||

|

||||

|

||||

if __name__ == "__main__":

|

||||

main()

|

||||

try:

|

||||

main()

|

||||

except Exception as e:

|

||||

with open("error.txt", "w") as f:

|

||||

f.write(str(e))

|

||||

|

||||

97

finetune/lora/merge_lora.py

vendored

97

finetune/lora/merge_lora.py

vendored

@@ -5,49 +5,64 @@ from typing import Dict

|

||||

import typing

|

||||

import torch

|

||||

|

||||

if '-h' in sys.argv or '--help' in sys.argv:

|

||||

print(f'Usage: python3 {sys.argv[0]} [--use-gpu] <lora_alpha> <base_model.pth> <lora_checkpoint.pth> <output.pth>')

|

||||

try:

|

||||

if "-h" in sys.argv or "--help" in sys.argv:

|

||||

print(

|

||||

f"Usage: python3 {sys.argv[0]} [--use-gpu] <lora_alpha> <base_model.pth> <lora_checkpoint.pth> <output.pth>"

|

||||

)

|

||||

|

||||

if sys.argv[1] == '--use-gpu':

|

||||

device = 'cuda'

|

||||

lora_alpha, base_model, lora, output = float(sys.argv[2]), sys.argv[3], sys.argv[4], sys.argv[5]

|

||||

else:

|

||||

device = 'cpu'

|

||||

lora_alpha, base_model, lora, output = float(sys.argv[1]), sys.argv[2], sys.argv[3], sys.argv[4]

|

||||

if sys.argv[1] == "--use-gpu":

|

||||

device = "cuda"

|

||||

lora_alpha, base_model, lora, output = (

|

||||

float(sys.argv[2]),

|

||||

sys.argv[3],

|

||||

sys.argv[4],

|

||||

sys.argv[5],

|

||||

)

|

||||

else:

|

||||

device = "cpu"

|

||||

lora_alpha, base_model, lora, output = (

|

||||

float(sys.argv[1]),

|

||||

sys.argv[2],

|

||||

sys.argv[3],

|

||||

sys.argv[4],

|

||||

)

|

||||

|

||||

with torch.no_grad():

|

||||

w: Dict[str, torch.Tensor] = torch.load(base_model, map_location="cpu")

|

||||

# merge LoRA-only slim checkpoint into the main weights

|

||||

w_lora: Dict[str, torch.Tensor] = torch.load(lora, map_location="cpu")

|

||||

for k in w_lora.keys():

|

||||

w[k] = w_lora[k]

|

||||

output_w: typing.OrderedDict[str, torch.Tensor] = OrderedDict()

|

||||

# merge LoRA weights

|

||||

keys = list(w.keys())

|

||||

for k in keys:

|

||||

if k.endswith(".weight"):

|

||||

prefix = k[: -len(".weight")]

|

||||

lora_A = prefix + ".lora_A"

|

||||

lora_B = prefix + ".lora_B"

|

||||

if lora_A in keys:

|

||||

assert lora_B in keys

|

||||

print(f"merging {lora_A} and {lora_B} into {k}")

|

||||

assert w[lora_B].shape[1] == w[lora_A].shape[0]

|

||||

lora_r = w[lora_B].shape[1]

|

||||

w[k] = w[k].to(device=device)

|

||||

w[lora_A] = w[lora_A].to(device=device)

|

||||

w[lora_B] = w[lora_B].to(device=device)

|

||||

w[k] += w[lora_B] @ w[lora_A] * (lora_alpha / lora_r)

|

||||

output_w[k] = w[k].to(device="cpu", copy=True)

|

||||

del w[k]

|

||||

del w[lora_A]

|

||||

del w[lora_B]

|

||||

continue

|

||||

|

||||

with torch.no_grad():

|

||||

w: Dict[str, torch.Tensor] = torch.load(base_model, map_location='cpu')

|

||||

# merge LoRA-only slim checkpoint into the main weights

|

||||

w_lora: Dict[str, torch.Tensor] = torch.load(lora, map_location='cpu')

|

||||

for k in w_lora.keys():

|

||||

w[k] = w_lora[k]

|

||||

output_w: typing.OrderedDict[str, torch.Tensor] = OrderedDict()

|

||||

# merge LoRA weights

|

||||

keys = list(w.keys())

|

||||

for k in keys:

|

||||

if k.endswith('.weight'):

|

||||

prefix = k[:-len('.weight')]

|

||||

lora_A = prefix + '.lora_A'

|

||||

lora_B = prefix + '.lora_B'

|

||||

if lora_A in keys:

|

||||

assert lora_B in keys

|

||||

print(f'merging {lora_A} and {lora_B} into {k}')

|

||||

assert w[lora_B].shape[1] == w[lora_A].shape[0]

|

||||

lora_r = w[lora_B].shape[1]

|

||||

w[k] = w[k].to(device=device)

|

||||

w[lora_A] = w[lora_A].to(device=device)

|

||||

w[lora_B] = w[lora_B].to(device=device)

|

||||

w[k] += w[lora_B] @ w[lora_A] * (lora_alpha / lora_r)

|

||||

output_w[k] = w[k].to(device='cpu', copy=True)

|

||||

if "lora" not in k:

|

||||

print(f"retaining {k}")

|

||||

output_w[k] = w[k].clone()

|

||||

del w[k]

|

||||

del w[lora_A]

|

||||

del w[lora_B]

|

||||

continue

|

||||

|

||||

if 'lora' not in k:

|

||||

print(f'retaining {k}')

|

||||

output_w[k] = w[k].clone()

|

||||

del w[k]

|

||||

|

||||

torch.save(output_w, output)

|

||||

torch.save(output_w, output)

|

||||

except Exception as e:

|

||||

with open("error.txt", "w") as f:

|

||||

f.write(str(e))

|

||||

|

||||

203

finetune/lora/train.py

vendored

203

finetune/lora/train.py

vendored

@@ -50,52 +50,84 @@ if __name__ == "__main__":

|

||||

parser = ArgumentParser()

|

||||

|

||||

parser.add_argument("--load_model", default="", type=str) # full path, with .pth

|

||||

parser.add_argument("--wandb", default="", type=str) # wandb project name. if "" then don't use wandb

|

||||

parser.add_argument(

|

||||

"--wandb", default="", type=str

|

||||

) # wandb project name. if "" then don't use wandb

|

||||

parser.add_argument("--proj_dir", default="out", type=str)

|

||||

parser.add_argument("--random_seed", default="-1", type=int)

|

||||

|

||||

parser.add_argument("--data_file", default="", type=str)

|

||||

parser.add_argument("--data_type", default="utf-8", type=str)

|

||||

parser.add_argument("--vocab_size", default=0, type=int) # vocab_size = 0 means auto (for char-level LM and .txt data)

|

||||

parser.add_argument(

|

||||

"--vocab_size", default=0, type=int

|

||||

) # vocab_size = 0 means auto (for char-level LM and .txt data)

|

||||

|

||||

parser.add_argument("--ctx_len", default=1024, type=int)

|

||||

parser.add_argument("--epoch_steps", default=1000, type=int) # a mini "epoch" has [epoch_steps] steps

|

||||

parser.add_argument("--epoch_count", default=500, type=int) # train for this many "epochs". will continue afterwards with lr = lr_final

|

||||

parser.add_argument("--epoch_begin", default=0, type=int) # if you load a model trained for x "epochs", set epoch_begin = x

|

||||

parser.add_argument("--epoch_save", default=5, type=int) # save the model every [epoch_save] "epochs"

|

||||

parser.add_argument(

|

||||

"--epoch_steps", default=1000, type=int

|

||||

) # a mini "epoch" has [epoch_steps] steps

|

||||

parser.add_argument(

|

||||

"--epoch_count", default=500, type=int

|

||||

) # train for this many "epochs". will continue afterwards with lr = lr_final

|

||||

parser.add_argument(

|

||||

"--epoch_begin", default=0, type=int

|

||||

) # if you load a model trained for x "epochs", set epoch_begin = x

|

||||

parser.add_argument(

|

||||

"--epoch_save", default=5, type=int

|

||||

) # save the model every [epoch_save] "epochs"

|

||||

|

||||

parser.add_argument("--micro_bsz", default=12, type=int) # micro batch size (batch size per GPU)

|

||||

parser.add_argument(

|

||||

"--micro_bsz", default=12, type=int

|

||||

) # micro batch size (batch size per GPU)

|

||||

parser.add_argument("--n_layer", default=6, type=int)

|

||||

parser.add_argument("--n_embd", default=512, type=int)

|

||||

parser.add_argument("--dim_att", default=0, type=int)

|

||||

parser.add_argument("--dim_ffn", default=0, type=int)

|

||||

parser.add_argument("--pre_ffn", default=0, type=int) # replace first att layer by ffn (sometimes better)

|

||||

parser.add_argument(

|

||||

"--pre_ffn", default=0, type=int

|

||||

) # replace first att layer by ffn (sometimes better)

|

||||

parser.add_argument("--head_qk", default=0, type=int) # my headQK trick

|

||||

parser.add_argument("--tiny_att_dim", default=0, type=int) # tiny attention dim

|

||||

parser.add_argument("--tiny_att_layer", default=-999, type=int) # tiny attention @ which layer

|

||||

parser.add_argument(

|

||||

"--tiny_att_layer", default=-999, type=int

|

||||

) # tiny attention @ which layer

|

||||

|

||||

parser.add_argument("--lr_init", default=6e-4, type=float) # 6e-4 for L12-D768, 4e-4 for L24-D1024, 3e-4 for L24-D2048

|

||||

parser.add_argument(

|

||||

"--lr_init", default=6e-4, type=float

|

||||

) # 6e-4 for L12-D768, 4e-4 for L24-D1024, 3e-4 for L24-D2048

|

||||

parser.add_argument("--lr_final", default=1e-5, type=float)

|

||||

parser.add_argument("--warmup_steps", default=0, type=int) # try 50 if you load a model

|

||||

parser.add_argument(

|

||||

"--warmup_steps", default=0, type=int

|

||||

) # try 50 if you load a model

|

||||

parser.add_argument("--beta1", default=0.9, type=float)

|

||||

parser.add_argument("--beta2", default=0.99, type=float) # use 0.999 when your model is close to convergence

|

||||

parser.add_argument(

|

||||

"--beta2", default=0.99, type=float

|

||||

) # use 0.999 when your model is close to convergence

|

||||

parser.add_argument("--adam_eps", default=1e-8, type=float)

|

||||

|

||||

parser.add_argument("--grad_cp", default=0, type=int) # gradient checkpt: saves VRAM, but slower

|

||||

parser.add_argument(

|

||||

"--grad_cp", default=0, type=int

|

||||

) # gradient checkpt: saves VRAM, but slower

|

||||

parser.add_argument("--my_pile_stage", default=0, type=int) # my special pile mode

|

||||

parser.add_argument("--my_pile_shift", default=-1, type=int) # my special pile mode - text shift

|

||||

parser.add_argument(

|

||||

"--my_pile_shift", default=-1, type=int

|

||||

) # my special pile mode - text shift

|

||||

parser.add_argument("--my_pile_edecay", default=0, type=int)

|

||||

parser.add_argument("--layerwise_lr", default=1, type=int) # layerwise lr for faster convergence (but slower it/s)

|

||||

parser.add_argument("--ds_bucket_mb", default=200, type=int) # deepspeed bucket size in MB. 200 seems enough

|

||||

parser.add_argument(

|

||||

"--layerwise_lr", default=1, type=int

|

||||

) # layerwise lr for faster convergence (but slower it/s)

|

||||

parser.add_argument(

|

||||

"--ds_bucket_mb", default=200, type=int

|

||||

) # deepspeed bucket size in MB. 200 seems enough

|

||||

# parser.add_argument("--cuda_cleanup", default=0, type=int) # extra cuda cleanup (sometimes helpful)

|

||||

|

||||

parser.add_argument("--my_img_version", default=0, type=str)

|

||||

parser.add_argument("--my_img_size", default=0, type=int)

|

||||

parser.add_argument("--my_img_bit", default=0, type=int)

|

||||

parser.add_argument("--my_img_clip", default='x', type=str)

|

||||

parser.add_argument("--my_img_clip", default="x", type=str)

|

||||

parser.add_argument("--my_img_clip_scale", default=1, type=float)

|

||||

parser.add_argument("--my_img_l1_scale", default=0, type=float)

|

||||

parser.add_argument("--my_img_encoder", default='x', type=str)

|

||||

parser.add_argument("--my_img_encoder", default="x", type=str)

|

||||

# parser.add_argument("--my_img_noise_scale", default=0, type=float)

|

||||

parser.add_argument("--my_sample_len", default=0, type=int)

|

||||

parser.add_argument("--my_ffn_shift", default=1, type=int)

|

||||

@@ -104,7 +136,7 @@ if __name__ == "__main__":

|

||||

parser.add_argument("--load_partial", default=0, type=int)

|

||||

parser.add_argument("--magic_prime", default=0, type=int)

|

||||

parser.add_argument("--my_qa_mask", default=0, type=int)

|

||||

parser.add_argument("--my_testing", default='', type=str)

|

||||

parser.add_argument("--my_testing", default="", type=str)

|

||||

|

||||

parser.add_argument("--lora", action="store_true")

|

||||

parser.add_argument("--lora_load", default="", type=str)

|

||||

@@ -122,18 +154,26 @@ if __name__ == "__main__":

|

||||

import numpy as np

|

||||

import torch

|

||||

from torch.utils.data import DataLoader

|

||||

|

||||

if "deepspeed" in args.strategy:

|

||||

import deepspeed

|

||||

import pytorch_lightning as pl

|

||||

from pytorch_lightning import seed_everything

|

||||

|

||||

if args.random_seed >= 0:

|

||||

print(f"########## WARNING: GLOBAL SEED {args.random_seed} THIS WILL AFFECT MULTIGPU SAMPLING ##########\n" * 3)

|

||||

print(

|

||||

f"########## WARNING: GLOBAL SEED {args.random_seed} THIS WILL AFFECT MULTIGPU SAMPLING ##########\n"

|

||||

* 3

|

||||

)

|

||||

seed_everything(args.random_seed)

|

||||

|

||||

np.set_printoptions(precision=4, suppress=True, linewidth=200)

|

||||

warnings.filterwarnings("ignore", ".*Consider increasing the value of the `num_workers` argument*")

|

||||

warnings.filterwarnings("ignore", ".*The progress bar already tracks a metric with the*")

|

||||

warnings.filterwarnings(

|

||||

"ignore", ".*Consider increasing the value of the `num_workers` argument*"

|

||||

)

|

||||

warnings.filterwarnings(

|

||||

"ignore", ".*The progress bar already tracks a metric with the*"

|

||||

)

|

||||

# os.environ["WDS_SHOW_SEED"] = "1"

|

||||

|

||||

args.my_timestamp = datetime.datetime.today().strftime("%Y-%m-%d-%H-%M-%S")

|

||||

@@ -158,7 +198,9 @@ if __name__ == "__main__":

|

||||

args.run_name = f"v{args.my_img_version}-{args.my_img_size}-{args.my_img_bit}bit-{args.my_img_clip}x{args.my_img_clip_scale}"

|

||||

args.proj_dir = f"{args.proj_dir}-{args.run_name}"

|

||||

else:

|

||||

args.run_name = f"{args.vocab_size} ctx{args.ctx_len} L{args.n_layer} D{args.n_embd}"

|

||||

args.run_name = (

|

||||

f"{args.vocab_size} ctx{args.ctx_len} L{args.n_layer} D{args.n_embd}"

|

||||

)

|

||||

if not os.path.exists(args.proj_dir):

|

||||

os.makedirs(args.proj_dir)

|

||||

|

||||

@@ -240,24 +282,40 @@ if __name__ == "__main__":

|

||||

)

|

||||

rank_zero_info(str(vars(args)) + "\n")

|

||||

|

||||

assert args.data_type in ["utf-8", "utf-16le", "numpy", "binidx", "dummy", "wds_img", "uint16"]

|

||||

assert args.data_type in [

|

||||

"utf-8",

|

||||

"utf-16le",

|

||||

"numpy",

|

||||

"binidx",

|

||||

"dummy",

|

||||

"wds_img",

|

||||

"uint16",

|

||||

]

|

||||

|

||||

if args.lr_final == 0 or args.lr_init == 0:

|

||||

rank_zero_info("\n\nNote: lr_final = 0 or lr_init = 0. Using linear LR schedule instead.\n\n")

|

||||

rank_zero_info(

|

||||

"\n\nNote: lr_final = 0 or lr_init = 0. Using linear LR schedule instead.\n\n"

|

||||

)

|

||||

|

||||

assert args.precision in ["fp32", "tf32", "fp16", "bf16"]

|

||||

os.environ["RWKV_FLOAT_MODE"] = args.precision

|

||||

if args.precision == "fp32":

|

||||

for i in range(10):

|

||||

rank_zero_info("\n\nNote: you are using fp32 (very slow). Try bf16 / tf32 for faster training.\n\n")

|

||||

rank_zero_info(

|

||||

"\n\nNote: you are using fp32 (very slow). Try bf16 / tf32 for faster training.\n\n"

|

||||

)

|

||||

if args.precision == "fp16":

|

||||

rank_zero_info("\n\nNote: you are using fp16 (might overflow). Try bf16 / tf32 for stable training.\n\n")

|

||||

rank_zero_info(

|

||||

"\n\nNote: you are using fp16 (might overflow). Try bf16 / tf32 for stable training.\n\n"

|

||||

)

|

||||

|

||||

os.environ["RWKV_JIT_ON"] = "1"

|

||||

if "deepspeed_stage_3" in args.strategy:

|

||||

os.environ["RWKV_JIT_ON"] = "0"

|

||||

if args.lora and args.grad_cp == 1:

|

||||

print('!!!!! LoRA Warning: Gradient Checkpointing requires JIT off, disabling it')

|

||||

print(

|

||||

"!!!!! LoRA Warning: Gradient Checkpointing requires JIT off, disabling it"

|

||||

)

|

||||

os.environ["RWKV_JIT_ON"] = "0"

|

||||

|

||||

torch.backends.cudnn.benchmark = True

|

||||

@@ -284,20 +342,22 @@ if __name__ == "__main__":

|

||||

train_data = MyDataset(args)

|

||||

args.vocab_size = train_data.vocab_size

|

||||

|

||||

if args.data_type == 'wds_img':

|

||||

if args.data_type == "wds_img":

|

||||

from src.model_img import RWKV_IMG

|

||||

|

||||

assert args.lora, "LoRA not yet supported for RWKV_IMG"

|

||||

model = RWKV_IMG(args)

|

||||

else:

|

||||

from src.model import RWKV, LORA_CONFIG, LoraLinear

|

||||

|

||||

if args.lora:

|

||||

assert args.lora_r > 0, "LoRA should have its `r` > 0"

|

||||

LORA_CONFIG["r"] = args.lora_r

|

||||

LORA_CONFIG["alpha"] = args.lora_alpha

|

||||

LORA_CONFIG["dropout"] = args.lora_dropout

|

||||

LORA_CONFIG["parts"] = set(str(args.lora_parts).split(','))

|

||||

enable_time_finetune = 'time' in LORA_CONFIG["parts"]

|

||||

enable_ln_finetune = 'ln' in LORA_CONFIG["parts"]

|

||||

LORA_CONFIG["parts"] = set(str(args.lora_parts).split(","))

|

||||

enable_time_finetune = "time" in LORA_CONFIG["parts"]

|

||||

enable_ln_finetune = "ln" in LORA_CONFIG["parts"]

|

||||

model = RWKV(args)

|

||||

# only train lora parameters

|

||||

if args.lora:

|

||||

@@ -305,20 +365,24 @@ if __name__ == "__main__":

|

||||

for name, module in model.named_modules():

|

||||

# have to check param name since it may have been wrapped by torchscript

|

||||

if any(n.startswith("lora_") for n, _ in module.named_parameters()):

|

||||

print(f' LoRA training module {name}')

|

||||

print(f" LoRA training module {name}")

|

||||

for pname, param in module.named_parameters():

|

||||

param.requires_grad = 'lora_' in pname

|

||||

elif enable_ln_finetune and '.ln' in name:

|

||||

print(f' LoRA additionally training module {name}')

|

||||

param.requires_grad = "lora_" in pname

|

||||

elif enable_ln_finetune and ".ln" in name:

|

||||

print(f" LoRA additionally training module {name}")

|

||||

for param in module.parameters():

|

||||

param.requires_grad = True

|

||||

elif enable_time_finetune and any(n.startswith("time") for n, _ in module.named_parameters()):

|

||||

elif enable_time_finetune and any(

|

||||

n.startswith("time") for n, _ in module.named_parameters()

|

||||

):

|

||||

for pname, param in module.named_parameters():

|

||||

if pname.startswith("time"):

|

||||

print(f' LoRA additionally training parameter {pname}')

|

||||

print(f" LoRA additionally training parameter {pname}")

|

||||

param.requires_grad = True

|

||||

|

||||

if len(args.load_model) == 0 or args.my_pile_stage == 1: # shall we build the initial weights?

|

||||

if (

|

||||

len(args.load_model) == 0 or args.my_pile_stage == 1

|

||||

): # shall we build the initial weights?

|

||||

init_weight_name = f"{args.proj_dir}/rwkv-init.pth"

|

||||

generate_init_weight(model, init_weight_name) # save initial weights

|

||||

args.load_model = init_weight_name

|

||||

@@ -326,6 +390,7 @@ if __name__ == "__main__":

|

||||

rank_zero_info(f"########## Loading {args.load_model}... ##########")

|

||||

try:

|

||||

load_dict = torch.load(args.load_model, map_location="cpu")

|

||||

model.load_state_dict(load_dict, strict=(not args.lora))

|

||||

except:

|

||||

rank_zero_info(f"Bad checkpoint {args.load_model}")

|

||||

if args.my_pile_stage >= 2: # try again using another checkpoint

|

||||

@@ -337,36 +402,50 @@ if __name__ == "__main__":

|

||||

args.epoch_begin = max_p + 1

|

||||

rank_zero_info(f"Trying {args.load_model}")

|

||||

load_dict = torch.load(args.load_model, map_location="cpu")

|

||||

model.load_state_dict(load_dict, strict=(not args.lora))

|

||||

|

||||

if args.load_partial == 1:

|

||||

load_keys = load_dict.keys()

|

||||

for k in model.state_dict():

|

||||

if k not in load_keys:

|

||||

load_dict[k] = model.state_dict()[k]

|

||||

model.load_state_dict(load_dict, strict=(not args.lora))

|

||||

# If using LoRA, the LoRA keys might be missing in the original model

|

||||

model.load_state_dict(load_dict, strict=(not args.lora))

|

||||

# model.load_state_dict(load_dict, strict=(not args.lora))

|

||||

if os.path.isfile(args.lora_load):

|

||||

model.load_state_dict(torch.load(args.lora_load, map_location="cpu"),

|

||||

strict=False)

|

||||

model.load_state_dict(

|

||||

torch.load(args.lora_load, map_location="cpu"), strict=False

|

||||

)

|

||||

|

||||

trainer: Trainer = Trainer.from_argparse_args(

|

||||

args,

|

||||

callbacks=[train_callback(args)],

|

||||

)

|

||||

|

||||

if (args.lr_init > 1e-4 or trainer.world_size * args.micro_bsz * trainer.accumulate_grad_batches < 8):

|

||||

if 'I_KNOW_WHAT_IM_DOING' in os.environ:

|

||||

|

||||

if (

|

||||

args.lr_init > 1e-4

|

||||

or trainer.world_size * args.micro_bsz * trainer.accumulate_grad_batches < 8

|

||||

):

|

||||

if "I_KNOW_WHAT_IM_DOING" in os.environ:

|

||||

if trainer.global_rank == 0:

|

||||

print('!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!')

|

||||

print(f' WARNING: you are using too large LR ({args.lr_init} > 1e-4) or too small global batch size ({trainer.world_size} * {args.micro_bsz} * {trainer.accumulate_grad_batches} < 8)')

|

||||

print('!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!')

|

||||

print("!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!")

|

||||

print(

|

||||

f" WARNING: you are using too large LR ({args.lr_init} > 1e-4) or too small global batch size ({trainer.world_size} * {args.micro_bsz} * {trainer.accumulate_grad_batches} < 8)"

|

||||

)

|

||||

print("!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!")

|

||||

else:

|

||||

if trainer.global_rank == 0:

|

||||

print('!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!')

|

||||

print(f' ERROR: you are using too large LR ({args.lr_init} > 1e-4) or too small global batch size ({trainer.world_size} * {args.micro_bsz} * {trainer.accumulate_grad_batches} < 8)')

|

||||

print(f' Unless you are sure this is what you want, adjust them accordingly')

|

||||

print(f' (to suppress this, set environment variable "I_KNOW_WHAT_IM_DOING")')

|

||||

print('!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!')

|

||||

print("!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!")

|

||||

print(

|

||||

f" ERROR: you are using too large LR ({args.lr_init} > 1e-4) or too small global batch size ({trainer.world_size} * {args.micro_bsz} * {trainer.accumulate_grad_batches} < 8)"

|

||||

)

|

||||

print(

|

||||

f" Unless you are sure this is what you want, adjust them accordingly"

|

||||

)

|

||||

print(

|

||||

f' (to suppress this, set environment variable "I_KNOW_WHAT_IM_DOING")'

|

||||

)

|

||||

print("!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!")

|

||||

exit(0)

|

||||

|

||||

if trainer.global_rank == 0:

|

||||

@@ -379,10 +458,22 @@ if __name__ == "__main__":

|

||||

print(f"{str(shape[0]).ljust(5)} {n}")

|

||||

|

||||

if "deepspeed" in args.strategy:

|

||||

trainer.strategy.config["zero_optimization"]["allgather_bucket_size"] = args.ds_bucket_mb * 1000 * 1000

|

||||

trainer.strategy.config["zero_optimization"]["reduce_bucket_size"] = args.ds_bucket_mb * 1000 * 1000

|

||||

trainer.strategy.config["zero_optimization"]["allgather_bucket_size"] = (

|

||||

args.ds_bucket_mb * 1000 * 1000

|

||||

)

|

||||

trainer.strategy.config["zero_optimization"]["reduce_bucket_size"] = (

|

||||

args.ds_bucket_mb * 1000 * 1000

|

||||

)

|

||||

|

||||

# must set shuffle=False, persistent_workers=False (because worker is in another thread)

|

||||

data_loader = DataLoader(train_data, shuffle=False, pin_memory=True, batch_size=args.micro_bsz, num_workers=1, persistent_workers=False, drop_last=True)

|

||||

data_loader = DataLoader(

|

||||

train_data,

|

||||

shuffle=False,

|

||||

pin_memory=True,

|

||||

batch_size=args.micro_bsz,

|

||||

num_workers=1,

|

||||

persistent_workers=False,

|

||||

drop_last=True,

|

||||

)

|

||||

|

||||

trainer.fit(model, data_loader)

|

||||

|

||||

@@ -104,7 +104,7 @@

|

||||

"Supported custom cuda file not found": "没有找到支持的自定义cuda文件",

|

||||

"Failed to copy custom cuda file": "自定义cuda文件复制失败",

|

||||

"Downloading update, please wait. If it is not completed, please manually download the program from GitHub and replace the original program.": "正在下载更新,请等待。如果一直未完成,请从Github手动下载并覆盖原程序",

|

||||

"Completion": "补全",

|

||||

"Completion": "续写",

|

||||

"Parameters": "参数",

|

||||

"Stop Sequences": "停止词",

|

||||

"When this content appears in the response result, the generation will end.": "响应结果出现该内容时就结束生成",

|

||||

@@ -118,12 +118,12 @@

|

||||

"Instruction": "指令",

|

||||

"Blank": "空白",

|

||||

"The following is an epic science fiction masterpiece that is immortalized, with delicate descriptions and grand depictions of interstellar civilization wars.\nChapter 1.\n": "《背影》\n我与父亲不相见已二年余了,我最不能忘记的是他的背影。\n那年冬天,祖母死了,父亲的差使也交卸了,正是祸不单行的日子。我从北京到徐州,打算",

|

||||

"The following is a conversation between a cat girl and her owner. The cat girl is a humanized creature that behaves like a cat but is humanoid. At the end of each sentence in the dialogue, she will add \"Meow~\". In the following content, Bob represents the owner and Alice represents the cat girl.\n\nBob: Hello.\n\nAlice: I'm here, meow~.\n\nBob: Can you tell jokes?": "以下是一位猫娘的主人和猫娘的对话内容,猫娘是一种拟人化的生物,其行为似猫但类人,在每一句对话末尾都会加上\"喵~\"。以下内容中,Bob代表主人,Alice代表猫娘。\n\nBob: 你好\n\nAlice: 主人我在哦,喵~\n\nBob: 你会讲笑话吗?",

|

||||

"The following is a conversation between a cat girl and her owner. The cat girl is a humanized creature that behaves like a cat but is humanoid. At the end of each sentence in the dialogue, she will add \"Meow~\". In the following content, User represents the owner and Assistant represents the cat girl.\n\nUser: Hello.\n\nAssistant: I'm here, meow~.\n\nUser: Can you tell jokes?": "以下是一位猫娘的主人和猫娘的对话内容,猫娘是一种拟人化的生物,其行为似猫但类人,在每一句对话末尾都会加上\"喵~\"。以下内容中,User代表主人,Assistant代表猫娘。\n\nUser: 你好\n\nAssistant: 主人我在哦,喵~\n\nUser: 你会讲笑话吗?",

|

||||

"When response finished, inject this content.": "响应结束时,插入此内容到末尾",

|

||||

"Inject start text": "起始注入文本",

|

||||

"Inject end text": "结尾注入文本",

|

||||

"Before the response starts, inject this content.": "响应开始前,在开头插入此内容",

|

||||

"There is currently a game of Werewolf with six players, including a Seer (who can check identities at night), two Werewolves (who can choose someone to kill at night), a Bodyguard (who can choose someone to protect at night), two Villagers (with no special abilities), and a game host. Bob will play as Player 1, Alice will play as Players 2-6 and the game host, and they will begin playing together. Every night, the host will ask Bob for his action and simulate the actions of the other players. During the day, the host will oversee the voting process and ask Bob for his vote. \n\nAlice: Next, I will act as the game host and assign everyone their roles, including randomly assigning yours. Then, I will simulate the actions of Players 2-6 and let you know what happens each day. Based on your assigned role, you can tell me your actions and I will let you know the corresponding results each day.\n\nBob: Okay, I understand. Let's begin. Please assign me a role. Am I the Seer, Werewolf, Villager, or Bodyguard?\n\nAlice: You are the Seer. Now that night has fallen, please choose a player to check his identity.\n\nBob: Tonight, I want to check Player 2 and find out his role.": "现在有一场六人狼人杀游戏,包括一名预言家(可以在夜晚查验身份),两名狼人(可以在夜晚选择杀人),一名守卫(可以在夜晚选择要守护的人),两名平民(无技能),一名主持人,以下内容中Bob将扮演其中的1号玩家,Alice来扮演2-6号玩家,以及主持人,并开始与Bob进行游戏,主持人每晚都会询问Bob的行动,并模拟其他人的行动,在白天则要主持投票,并同样询问Bob投票对象,公布投票结果。\n\nAlice: 接下来,我将首先作为主持人进行角色分配,并给你赋予随机的角色,之后我将模拟2-6号玩家进行行动,告知你每天的动态,根据你被分配的角色,你可以回复我你做的行动,我会告诉你每天对应的结果\n\nBob: 好的,我明白了,那么开始吧。请先给我一个角色身份。我是预言家,狼人,平民,守卫中的哪一个呢?\n\nAlice: 你的身份是预言家。现在夜晚降临,请选择你要查验的玩家。\n\nBob: 今晚我要验2号玩家,他是什么身份?",

|

||||

"There is currently a game of Werewolf with six players, including a Seer (who can check identities at night), two Werewolves (who can choose someone to kill at night), a Bodyguard (who can choose someone to protect at night), two Villagers (with no special abilities), and a game host. User will play as Player 1, Assistant will play as Players 2-6 and the game host, and they will begin playing together. Every night, the host will ask User for his action and simulate the actions of the other players. During the day, the host will oversee the voting process and ask User for his vote. \n\nAssistant: Next, I will act as the game host and assign everyone their roles, including randomly assigning yours. Then, I will simulate the actions of Players 2-6 and let you know what happens each day. Based on your assigned role, you can tell me your actions and I will let you know the corresponding results each day.\n\nUser: Okay, I understand. Let's begin. Please assign me a role. Am I the Seer, Werewolf, Villager, or Bodyguard?\n\nAssistant: You are the Seer. Now that night has fallen, please choose a player to check his identity.\n\nUser: Tonight, I want to check Player 2 and find out his role.": "现在有一场六人狼人杀游戏,包括一名预言家(可以在夜晚查验身份),两名狼人(可以在夜晚选择杀人),一名守卫(可以在夜晚选择要守护的人),两名平民(无技能),一名主持人,以下内容中User将扮演其中的1号玩家,Assistant来扮演2-6号玩家,以及主持人,并开始与User进行游戏,主持人每晚都会询问User的行动,并模拟其他人的行动,在白天则要主持投票,并同样询问User投票对象,公布投票结果。\n\nAssistant: 接下来,我将首先作为主持人进行角色分配,并给你赋予随机的角色,之后我将模拟2-6号玩家进行行动,告知你每天的动态,根据你被分配的角色,你可以回复我你做的行动,我会告诉你每天对应的结果\n\nUser: 好的,我明白了,那么开始吧。请先给我一个角色身份。我是预言家,狼人,平民,守卫中的哪一个呢?\n\nAssistant: 你的身份是预言家。现在夜晚降临,请选择你要查验的玩家。\n\nUser: 今晚我要验2号玩家,他是什么身份?",

|

||||

"Writer, Translator, Role-playing": "写作,翻译,角色扮演",

|

||||

"Chinese Kongfu": "情境冒险",

|

||||

"Allow external access to the API (service must be restarted)": "允许外部访问API (必须重启服务)",

|

||||

@@ -153,7 +153,7 @@

|

||||

"Restart the app to apply DPI Scaling.": "重启应用以使显示缩放生效",

|

||||

"Restart": "重启",

|

||||

"API Chat Model Name": "API聊天模型名",

|

||||

"API Completion Model Name": "API补全模型名",

|

||||

"API Completion Model Name": "API续写模型名",

|

||||

"Localhost": "本地",

|

||||

"Retry": "重试",

|

||||

"Delete": "删除",

|

||||

@@ -223,5 +223,16 @@

|

||||

"Merge model successfully": "合并模型成功",

|

||||

"Convert Data successfully": "数据转换成功",

|

||||

"Please select a LoRA model": "请选择一个LoRA模型",

|

||||

"You are using sample data for training. For formal training, please make sure to create your own jsonl file.": "你正在使用示例数据训练,对于正式训练场合,请务必创建你自己的jsonl训练数据"

|

||||

"You are using sample data for training. For formal training, please make sure to create your own jsonl file.": "你正在使用示例数据训练,对于正式训练场合,请务必创建你自己的jsonl训练数据",

|

||||

"WSL is not running, please retry. If it keeps happening, it means you may be using an outdated version of WSL, run \"wsl --update\" to update.": "WSL没有运行,请重试。如果一直出现此错误,意味着你可能正在使用旧版本的WSL,请在cmd执行\"wsl --update\"以更新",

|

||||

"Memory is not enough, try to increase the virtual memory or use a smaller base model.": "内存不足,尝试增加虚拟内存,或使用一个更小规模的基底模型",

|

||||

"VRAM is not enough": "显存不足",

|

||||

"Training data is not enough, reduce context length or add more data for training": "训练数据不足,请减小上下文长度或增加训练数据",

|

||||

"You are using WSL 1 for training, please upgrade to WSL 2. e.g. Run \"wsl --set-version Ubuntu-22.04 2\"": "你正在使用WSL 1进行训练,请升级到WSL 2。例如,运行\"wsl --set-version Ubuntu-22.04 2\"",

|

||||

"Matched CUDA is not installed": "未安装匹配的CUDA",

|

||||

"Failed to convert data": "数据转换失败",

|

||||

"Failed to merge model": "合并模型失败",

|

||||

"The data path should be a directory or a file in jsonl format (more formats will be supported in the future).\n\nWhen you provide a directory path, all the txt files within that directory will be automatically converted into training data. This is commonly used for large-scale training in writing, code generation, or knowledge bases.\n\nThe jsonl format file can be referenced at https://github.com/Abel2076/json2binidx_tool/blob/main/sample.jsonl.\nYou can also write it similar to OpenAI's playground format, as shown in https://platform.openai.com/playground/p/default-chat.\nEven for multi-turn conversations, they must be written in a single line using `\\n` to indicate line breaks. If they are different dialogues or topics, they should be written in separate lines.": "数据路径必须是一个文件夹,或者jsonl格式文件 (未来会支持更多格式)\n\n当你填写的路径是一个文件夹时,该文件夹内的所有txt文件会被自动转换为训练数据,通常这用于大批量训练写作,代码生成或知识库\n\njsonl文件的格式参考 https://github.com/Abel2076/json2binidx_tool/blob/main/sample.jsonl\n你也可以仿照openai的playground编写,参考 https://platform.openai.com/playground/p/default-chat\n即使是多轮对话也必须写在一行,用`\\n`表示换行,如果是不同对话或主题,则另起一行",

|

||||

"Size mismatch for blocks. You are attempting to continue training from the LoRA model, but it does not match the base model. Please set LoRA model to None.": "尺寸不匹配块。你正在尝试从LoRA模型继续训练,但该LoRA模型与基底模型不匹配,请将LoRA模型设为空",

|

||||

"Instruction: Write a story using the following information\n\nInput: A man named Alex chops a tree down\n\nResponse:": "Instruction: Write a story using the following information\n\nInput: 艾利克斯砍倒了一棵树\n\nResponse:"

|

||||

}

|

||||

Binary file not shown.

|

Before Width: | Height: | Size: 4.4 KiB |

BIN

frontend/src/assets/images/logo.png

Normal file

BIN

frontend/src/assets/images/logo.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 36 KiB |

@@ -11,6 +11,7 @@ import {

|

||||

} from '@fluentui/react-components';

|

||||

import { ToolTipButton } from './ToolTipButton';

|

||||

import { useTranslation } from 'react-i18next';

|

||||

import MarkdownRender from './MarkdownRender';

|

||||

|

||||

export const DialogButton: FC<{

|

||||

text?: string | null

|

||||

@@ -19,12 +20,13 @@ export const DialogButton: FC<{

|

||||

className?: string,

|

||||

title: string,

|

||||

contentText: string,

|

||||

onConfirm: () => void,

|

||||

markdown?: boolean,

|

||||

onConfirm?: () => void,

|

||||

size?: 'small' | 'medium' | 'large',

|

||||

shape?: 'rounded' | 'circular' | 'square',

|

||||

appearance?: 'secondary' | 'primary' | 'outline' | 'subtle' | 'transparent',

|

||||

}> = ({

|

||||

text, icon, tooltip, className, title, contentText,

|

||||

text, icon, tooltip, className, title, contentText, markdown,

|

||||

onConfirm, size, shape, appearance

|

||||

}) => {

|

||||

const { t } = useTranslation();

|

||||

@@ -41,7 +43,11 @@ export const DialogButton: FC<{

|

||||

<DialogBody>

|

||||

<DialogTitle>{title}</DialogTitle>

|

||||

<DialogContent>

|

||||

{contentText}

|

||||

{

|

||||

markdown ?

|

||||

<MarkdownRender>{contentText}</MarkdownRender> :

|

||||

contentText

|

||||

}

|

||||

</DialogContent>

|

||||

<DialogActions>

|

||||

<DialogTrigger disableButtonEnhancement>

|

||||

|

||||

@@ -7,7 +7,7 @@ import { v4 as uuid } from 'uuid';

|

||||

import classnames from 'classnames';

|

||||

import { fetchEventSource } from '@microsoft/fetch-event-source';

|

||||

import { KebabHorizontalIcon, PencilIcon, SyncIcon, TrashIcon } from '@primer/octicons-react';

|

||||

import logo from '../assets/images/logo.jpg';

|

||||

import logo from '../assets/images/logo.png';

|

||||

import MarkdownRender from '../components/MarkdownRender';

|

||||

import { ToolTipButton } from '../components/ToolTipButton';

|

||||

import { ArrowCircleUp28Regular, Delete28Regular, RecordStop28Regular, Save28Regular } from '@fluentui/react-icons';

|

||||

@@ -421,7 +421,7 @@ const ChatPanel: FC = observer(() => {

|

||||

}

|

||||

});

|

||||

|

||||

OpenSaveFileDialog('*.md', 'conversation.md', savedContent).then((path) => {

|

||||

OpenSaveFileDialog('*.txt', 'conversation.txt', savedContent).then((path) => {

|

||||

if (path)

|

||||

toastWithButton(t('Conversation Saved'), t('Open'), () => {

|

||||

OpenFileFolder(path, false);

|

||||

|

||||

@@ -35,7 +35,7 @@ export const defaultPresets: CompletionPreset[] = [{

|

||||

topP: 0.5,

|

||||

presencePenalty: 0.4,

|

||||

frequencyPenalty: 0.4,

|

||||

stop: '\\n\\nBob',

|

||||

stop: '\\n\\nUser',

|

||||

injectStart: '',

|

||||

injectEnd: ''

|

||||

}

|

||||

@@ -46,37 +46,37 @@ export const defaultPresets: CompletionPreset[] = [{

|

||||

maxResponseToken: 500,

|

||||

temperature: 1,

|

||||

topP: 0.3,

|

||||

presencePenalty: 0.4,

|

||||

frequencyPenalty: 0.4,

|

||||

presencePenalty: 0,

|

||||

frequencyPenalty: 1,

|

||||

stop: '\\nEnglish',

|

||||

injectStart: '\\nChinese: ',

|

||||

injectEnd: '\\nEnglish: '

|

||||

}

|

||||

}, {

|

||||

name: 'Catgirl',

|

||||

prompt: 'The following is a conversation between a cat girl and her owner. The cat girl is a humanized creature that behaves like a cat but is humanoid. At the end of each sentence in the dialogue, she will add \"Meow~\". In the following content, Bob represents the owner and Alice represents the cat girl.\n\nBob: Hello.\n\nAlice: I\'m here, meow~.\n\nBob: Can you tell jokes?',

|

||||

prompt: 'The following is a conversation between a cat girl and her owner. The cat girl is a humanized creature that behaves like a cat but is humanoid. At the end of each sentence in the dialogue, she will add \"Meow~\". In the following content, User represents the owner and Assistant represents the cat girl.\n\nUser: Hello.\n\nAssistant: I\'m here, meow~.\n\nUser: Can you tell jokes?',

|

||||

params: {

|

||||

maxResponseToken: 500,

|

||||

temperature: 1.2,

|

||||

topP: 0.5,

|

||||

presencePenalty: 0.4,

|

||||

frequencyPenalty: 0.4,

|

||||

stop: '\\n\\nBob',

|

||||

injectStart: '\\n\\nAlice: ',

|

||||

injectEnd: '\\n\\nBob: '

|

||||

stop: '\\n\\nUser',

|

||||

injectStart: '\\n\\nAssistant: ',

|

||||

injectEnd: '\\n\\nUser: '

|

||||

}

|

||||

}, {

|

||||

name: 'Chinese Kongfu',

|

||||