Compare commits

161 Commits

| Author | SHA1 | Date |
|---|---|---|
| | 622287f3da | |
| | 5d12bf74f6 | |
| | c88f9321f5 | |
| | f9f1d5c9fc | |
| | 0edec68376 | |
| | ee63dc25f4 | |
| | fee8fe73f2 | |
| | 1689f9e7e7 | |
| | a1ed0cb2e9 | |
| | 5ee5fa7e6e | |
| | d8c70453ec | |
| | e930eb5967 | |
| | aec6ad636a | |
| | 750c91bd3e | |
| | fcc3886db1 | |
| | 22afc98be5 | |
| | 5b1a9448e6 | |
| | 07d89e3eeb | |
| | 96e97d9c1e | |
| | bcb125e168 | |
| | 6fbb86667c | |
| | 2d545604f4 | |
| | 7210a7481e | |
| | 55210c89e2 | |
| | c725d11dd9 | |
| | ba2a6bd06c | |
| | 57b80c6ed0 | |
| | 115c59d5e1 | |
| | 543ff468b7 | |
| | 96ae47989e | |
| | 368932a610 | |
| | f2cd531fcb | |
| | 511652b71c | |
| | 525fb132d6 | |
| | 5acb1fd958 | |
| | 76761ee453 | |
| | 134b2884e6 | |
| | 261e7c8916 | |
| | 987854fe49 | |
| | c54d10795f | |
| | b7d9ab0845 | |
| | 176800444a | |
| | 00c13cfc3f | |
| | 620e0228ed | |
| | 87ca694b0b | |
| | 417389c5f6 | |
| | fa9f62b42c | |
| | 2c4e9f69eb | |
| | 119204368d | |
| | 87a86042d2 | |
| | 32c386799d | |
| | b56a55e81d | |
| | 2fe7a23049 | |
| | 9ed3547738 | |
| | a0522594da | |
| | 1cac147df4 | |
| | db67f30082 | |
| | 08cf09416a | |
| | 7f2f4f15c1 | |
| | 97f6af595e | |
| | 447f4572b1 | |
| | 5c9b4a4c05 | |
| | 70f2271b94 | |
| | 15cd689741 | |
| | 82a68593bb | |
| | 21910af96a | |
| | 412a0fe135 | |
| | cf0972ba52 | |
| | 3fe9ef4546 | |
| | 4cd5a56070 | |
| | 35a7437714 | |
| | 131a7ddf4a | |
| | 1465908574 | |
| | 3eb10f08bb | |
| | b20990d380 | |
| | 1a5bf4a95e | |
| | 3d123524e7 | |
| | 25a41e51b3 | |
| | f998ff239a | |
| | bae9ae6551 | |
| | 285e8b1577 | |
| | ce915cdf6a | |
| | 84317a03e8 | |
| | ac1fa09604 | |
| | 43bc08648d | |
| | e93c77394d | |
| | 4b2509e643 | |
| | 14fbb437ff | |
| | 8963543159 | |
| | 377f71b16b | |
| | d32351c130 | |
| | 967be6f88f | |
| | fcdda71b46 | |
| | 138251932c | |
| | 4d1a2396e3 | |
| | b06e292989 | |
| | b1d5b84dd6 | |
| | 2beddab114 | |
| | 7f85a08508 | |
| | 721653a812 | |
| | d99488f22f | |
| | 21c3009945 | |
| | 3f77762fda | |
| | 9590d93c34 | |
| | e0e846a191 | |
| | e9cc9b0798 | |
| | 51c5696bb9 | |
| | 64f0610ed7 | |
| | 1591430742 | |
| | 17c690dfb1 | |
| | 4b640f884b | |
| | 8976764ee5 | |
| | 47db663fcd | |
| | 366e67bb6e | |
| | b52bae6e17 | |
| | 714b8834c7 | |
| | 631704d04d | |
| | 5896593951 | |
| | 8431b5d24f | |
| | bbd1ac1484 | |
| | 5990567a79 | |
| | fa0fcc2c89 | |
| | face4c97e8 | |
| | c0ad99673b | |
| | 510683c57e | |
| | cea1d8b4d1 | |
| | b7c34b0d42 | |
| | a95fbbbd78 | |
| | d1560674b3 | |
| | 4fdfbd2f82 | |
| | 635767408f | |
| | 39a7eee8ea | |
| | 6ec6044901 | |
| | 4760a552d4 | |
| | 6294327273 | |
| | 260f51955a | |
| | 29ea886576 | |
| | dae3f72d04 | |
| | 796338a32f | |
| | 66621e4ceb | |
| | a6f5b520c3 | |
| | c23c644fbc | |
| | cb85c0938d | |
| | 88a5d11e15 | |
| | 1ecb0b444b | |
| | 72d601370d | |
| | 4814b88172 | |
| | cfad67a922 | |
| | c28f5604ab | |
| | 5853b8ca8d | |
| | ebfc0ce672 | |
| | e62fcd152a | |
| | 9bd9b9ecbd | |
| | 17faa9c5b8 | |
| | 4cd445bf77 | |
| | 4fb35845b0 | |
| | f373f1caa8 | |
| | 4e75531651 | |
| | 539c538d65 | |
| | d90186db33 | |
| | a2d8729ae3 | |

2

.gitattributes

vendored

@@ -3,4 +3,6 @@ backend-python/wkv_cuda_utils/** linguist-vendored
backend-python/get-pip.py linguist-vendored
backend-python/convert_model.py linguist-vendored
build/** linguist-vendored
finetune/lora/** linguist-vendored
finetune/json2binidx_tool/** linguist-vendored
frontend/wailsjs/** linguist-generated

123

.github/workflows/release.yml

vendored

Normal file

@@ -0,0 +1,123 @@
name: release
on:
  push:
    tags:
      - "v*"

permissions:
  contents: write
env:
  GH_TOKEN: ${{ github.token }}

jobs:
  create-draft:
    runs-on: ubuntu-latest
    steps:
      - run: echo "VERSION=${GITHUB_REF_NAME#v}" >> $GITHUB_ENV
      - uses: actions/checkout@v3
        with:
          ref: master

      - uses: jossef/action-set-json-field@v2.1
        with:
          file: manifest.json
          field: version
          value: ${{ env.VERSION }}

      - continue-on-error: true
        run: |
          git config --global user.email "github-actions[bot]@users.noreply.github.com"
          git config --global user.name "github-actions[bot]"
          git commit -am "release ${{github.ref_name}}"
          git push

      - run: |
          gh release create ${{github.ref_name}} -d -F CURRENT_CHANGE.md -t ${{github.ref_name}}

  windows:
    runs-on: windows-latest
    needs: create-draft
    steps:
      - uses: actions/checkout@v3
        with:
          ref: master
      - uses: actions/setup-go@v4
        with:
          go-version: '1.20.5'
      - uses: actions/setup-python@v4
        id: cp310
        with:
          python-version: '3.10'
      - uses: crazy-max/ghaction-chocolatey@v2
        with:
          args: install upx
      - run: |
          Start-BitsTransfer https://www.python.org/ftp/python/3.10.11/python-3.10.11-embed-amd64.zip ./python-3.10.11-embed-amd64.zip
          Expand-Archive ./python-3.10.11-embed-amd64.zip -DestinationPath ./py310
          $content=Get-Content "./py310/python310._pth"; $content | ForEach-Object {if ($_.ReadCount -eq 3) {"Lib\\site-packages"} else {$_}} | Set-Content ./py310/python310._pth
          ./py310/python ./backend-python/get-pip.py
          ./py310/python -m pip install Cython
          Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../include" -Destination "py310/include" -Recurse
          Copy-Item -Path "${{ steps.cp310.outputs.python-path }}/../libs" -Destination "py310/libs" -Recurse
          ./py310/python -m pip install cyac
          go install github.com/wailsapp/wails/v2/cmd/wails@latest
          make
          Rename-Item -Path "build/bin/RWKV-Runner.exe" -NewName "RWKV-Runner_windows_x64.exe"

      - run: gh release upload ${{github.ref_name}} build/bin/RWKV-Runner_windows_x64.exe

  linux:
    runs-on: ubuntu-20.04
    needs: create-draft
    steps:
      - uses: actions/checkout@v3
        with:
          ref: master
      - uses: actions/setup-go@v4
        with:
          go-version: '1.20.5'
      - run: |
          sudo apt-get update
          sudo apt-get install upx
          sudo apt-get install build-essential libgtk-3-dev libwebkit2gtk-4.0-dev
          go install github.com/wailsapp/wails/v2/cmd/wails@latest
          rm -rf ./backend-python/wkv_cuda_utils
          rm ./backend-python/get-pip.py
          sed -i '1,2d' ./backend-golang/wsl_not_windows.go
          rm ./backend-golang/wsl.go
          mv ./backend-golang/wsl_not_windows.go ./backend-golang/wsl.go
          make
          mv build/bin/RWKV-Runner build/bin/RWKV-Runner_linux_x64

      - run: gh release upload ${{github.ref_name}} build/bin/RWKV-Runner_linux_x64

  macos:
    runs-on: macos-13
    needs: create-draft
    steps:
      - uses: actions/checkout@v3
        with:
          ref: master
      - uses: actions/setup-go@v4
        with:
          go-version: '1.20.5'
      - run: |
          go install github.com/wailsapp/wails/v2/cmd/wails@latest
          rm -rf ./backend-python/wkv_cuda_utils
          rm ./backend-python/get-pip.py
          sed -i '' '1,2d' ./backend-golang/wsl_not_windows.go
          rm ./backend-golang/wsl.go
          mv ./backend-golang/wsl_not_windows.go ./backend-golang/wsl.go
          make
          cp build/darwin/Readme_Install.txt build/bin/Readme_Install.txt
          cp build/bin/RWKV-Runner.app/Contents/MacOS/RWKV-Runner build/bin/RWKV-Runner_darwin_universal
          cd build/bin && zip -r RWKV-Runner_macos_universal.zip RWKV-Runner.app Readme_Install.txt

      - run: gh release upload ${{github.ref_name}} build/bin/RWKV-Runner_macos_universal.zip build/bin/RWKV-Runner_darwin_universal

  publish-release:
    runs-on: ubuntu-latest
    needs: [ windows, linux, macos ]
    steps:
      - uses: actions/checkout@v3
      - run: gh release edit ${{github.ref_name}} --draft=false
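
The `create-draft` job derives the manifest version from the pushed tag with the shell expansion `${GITHUB_REF_NAME#v}`, which drops a single leading `v`. The following is a minimal sketch of that same rule in Python, for readers who want to check what a given tag would produce; the function name `version_from_tag` and the sample tag are hypothetical and are not part of the workflow.

```python
def version_from_tag(ref_name: str) -> str:
    # Mirror ${GITHUB_REF_NAME#v}: remove exactly one leading "v" if present,
    # and leave the tag unchanged otherwise.
    return ref_name[1:] if ref_name.startswith("v") else ref_name


# Hypothetical tag name, used only for illustration.
print(version_from_tag("v1.2.3"))
```

Only one leading `v` is removed, so an unusual tag such as `vv2` would yield `v2` rather than `2`.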

6

.gitignore

vendored

@@ -8,14 +8,18 @@ __pycache__
*.bin
/config.json
/cache.json
/presets.json
/frontend/stats.html
/frontend/package.json.md5
/py310
*.zip
/cmd-helper.bat
/install-py-dep.bat
/backend-python/wkv_cuda
*.exe
*.old
.DS_Store
*.log.*
*.log
*.log
train_log.txt
finetune/json2binidx_tool/data

19

.vscode/launch.json

vendored

@@ -10,9 +10,24 @@
      "name": "Python",
      "type": "python",
      "request": "launch",
      "program": "./backend-python/main.py",
      "program": "${workspaceFolder}/backend-python/main.py",
      "console": "integratedTerminal",
      "justMyCode": false,
      "justMyCode": false
    },
    {
      "name": "Golang",
      "type": "go",
      "request": "launch",
      "mode": "exec",
      "program": "${workspaceFolder}/build/bin/testwails.exe",
      "console": "integratedTerminal",
      "preLaunchTask": "build dev"
    },
    {
      "name": "Frontend",
      "type": "node-terminal",
      "request": "launch",
      "command": "wails dev -browser"
    }
  ]
}

40

.vscode/tasks.json

vendored

Normal file

@@ -0,0 +1,40 @@
{
  "version": "2.0.0",
  "tasks": [
    {
      "label": "build dev",
      "type": "shell",
      "options": {
        "cwd": "${workspaceFolder}",
        "env": {
          "CGO_ENABLED": "1"
        }
      },
      "osx": {
        "options": {
          "env": {
            "CGO_CFLAGS": "-mmacosx-version-min=10.13",
            "CGO_LDFLAGS": "-framework UniformTypeIdentifiers -mmacosx-version-min=10.13"
          }
        }
      },
      "windows": {
        "options": {
          "env": {
            "CGO_ENABLED": "0"
          }
        }
      },
      "command": "go",
      "args": [
        "build",
        "-tags",
        "dev",
        "-gcflags",
        "all=-N -l",
        "-o",
        "build/bin/testwails.exe"
      ]
    }
  ]
}

14

CURRENT_CHANGE.md

Normal file

@@ -0,0 +1,14 @@
## Changes

- improve `/completions` api compatibility
- improve training data path compatibility
- update manifest
- update presets
- chore

## Install

- Windows: https://github.com/josStorer/RWKV-Runner/blob/master/build/windows/Readme_Install.txt
- MacOS: https://github.com/josStorer/RWKV-Runner/blob/master/build/darwin/Readme_Install.txt
- Linux: https://github.com/josStorer/RWKV-Runner/blob/master/build/linux/Readme_Install.txt
- Server-Deploy-Examples: https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples

12

Makefile

@@ -1,16 +1,22 @@
ifeq ($(OS), Windows_NT)
build: build-windows
else
else ifeq ($(shell uname -s), Darwin)
build: build-macos
else
build: build-linux
endif

build-windows:
	@echo ---- build for windows
	wails build -upx -ldflags "-s -w"
	wails build -upx -ldflags "-s -w" -platform windows/amd64

build-macos:
	@echo ---- build for macos
	wails build -ldflags "-s -w"
	wails build -ldflags "-s -w" -platform darwin/universal

build-linux:
	@echo ---- build for linux
	wails build -upx -ldflags "-s -w" -platform linux/amd64

dev:
	wails dev
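
The Makefile hunk selects one of three build targets by operating system. The sketch below restates that dispatch in Python as a reading aid only. It assumes the `-platform` variants of the commands shown in the hunk, and it uses `platform.system()` where the Makefile checks `$(OS)` and `uname -s`; the function name `wails_build_command` is hypothetical.

```python
import platform


def wails_build_command(system: str) -> list[str]:
    # Mirror the Makefile branches: Windows first, then Darwin (macOS),
    # and Linux as the fallback for everything else.
    base = ["wails", "build"]
    if system == "Windows":
        return base + ["-upx", "-ldflags", "-s -w", "-platform", "windows/amd64"]
    if system == "Darwin":
        # The macOS target in the hunk does not pass -upx.
        return base + ["-ldflags", "-s -w", "-platform", "darwin/universal"]
    return base + ["-upx", "-ldflags", "-s -w", "-platform", "linux/amd64"]


# Show the command that would be chosen on the current machine.
print(" ".join(wails_build_command(platform.system())))
```

The function only builds the argument list and does not invoke `wails`, so it can be run on a machine without the toolchain installed.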

91

README.md

@@ -13,9 +13,15 @@ compatible with the OpenAI API, which means that every ChatGPT client is an RWKV
[![license][license-image]][license-url]
[![release][release-image]][release-url]

English | [简体中文](README_ZH.md)
English | [简体中文](README_ZH.md) | [日本語](README_JA.md)

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [Preview](#Preview) | [Download][download-url]
### Install

[![Windows][Windows-image]][Windows-url]
[![MacOS][MacOS-image]][MacOS-url]
[![Linux][Linux-image]][Linux-url]

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [Preview](#Preview) | [Download][download-url] | [Server-Deploy-Examples](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

@@ -27,9 +33,23 @@ English | [简体中文](README_ZH.md)

[download-url]: https://github.com/josStorer/RWKV-Runner/releases

[Windows-image]: https://img.shields.io/badge/-Windows-blue?logo=windows

[Windows-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/windows/Readme_Install.txt

[MacOS-image]: https://img.shields.io/badge/-MacOS-black?logo=apple

[MacOS-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/darwin/Readme_Install.txt

[Linux-image]: https://img.shields.io/badge/-Linux-black?logo=linux

[Linux-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/linux/Readme_Install.txt

</div>

#### Default configs do not enable custom CUDA kernel acceleration, but I strongly recommend that you enable it and run with int8 precision, which is much faster and consumes much less VRAM. Go to the Configs page and turn on `Use Custom CUDA kernel to Accelerate`.
#### Default configs has enabled custom CUDA kernel acceleration, which is much faster and consumes much less VRAM. If you encounter possible compatibility issues, go to the Configs page and turn off `Use Custom CUDA kernel to Accelerate`.

#### If Windows Defender claims this is a virus, you can try downloading [v1.0.8](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.8)/[v1.0.9](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.9) and letting it update automatically to the latest version, or add it to the trusted list.

#### For different tasks, adjusting API parameters can achieve better results. For example, for translation tasks, you can try setting Temperature to 1 and Top_P to 0.3.

@@ -44,6 +64,8 @@ English | [简体中文](README_ZH.md)
- Easy-to-understand and operate parameter configuration
- Built-in model conversion tool
- Built-in download management and remote model inspection
- Built-in one-click LoRA Finetune
- Can also be used as an OpenAI ChatGPT and GPT-Playground client
- Multilingual localization
- Theme switching
- Automatic updates
@@ -67,46 +89,83 @@ body.json:
}
```

## Todo
## Embeddings API Example

- [ ] Model training functionality
- [x] CUDA operator int8 acceleration
- [ ] macOS support
- [ ] Linux support
- [ ] Local State Cache DB
If you are using langchain, just use `OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`

```python
import numpy as np
import requests


def cosine_similarity(a, b):
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))


values = [
    "I am a girl",
    "我是个女孩",
    "私は女の子です",
    "广东人爱吃福建人",
    "我是个人类",
    "I am a human",
    "that dog is so cute",
    "私はねこむすめです、にゃん♪",
    "宇宙级特大事件!号外号外!"
]

embeddings = []
for v in values:
    r = requests.post("http://127.0.0.1:8000/embeddings", json={"input": v})
    embedding = r.json()["data"][0]["embedding"]
    embeddings.append(embedding)

compared_embedding = embeddings[0]

embeddings_cos_sim = [cosine_similarity(compared_embedding, e) for e in embeddings]

for i in np.argsort(embeddings_cos_sim)[::-1]:
    print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")
```
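
The ranking step of the embeddings example above can be tried without a running server. The following is a minimal, dependency-free sketch under stated assumptions: the `toy_vectors` dictionary and its labels are hypothetical stand-ins for vectors returned by `/embeddings`, and the helper `rank_by_similarity` is not part of the README. It applies the same cosine-similarity ordering, from most to least similar.

```python
import math


def cosine_similarity(a, b):
    # Dot product divided by the product of the Euclidean norms.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(reference, candidates):
    # Return (score, label) pairs sorted from most to least similar.
    scored = [(cosine_similarity(reference, vec), label)
              for label, vec in candidates.items()]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


# Hypothetical toy vectors standing in for real embeddings.
toy_vectors = {
    "near": [2.0, 0.1],
    "diagonal": [1.0, 1.0],
    "orthogonal": [0.0, 1.0],
}

ranking = rank_by_similarity([1.0, 0.0], toy_vectors)
for score, label in ranking:
    print(f"{score:.4f} - {label}")
```

Cosine similarity depends only on direction, so the longer `near` vector still ranks first against the unit-length reference.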

|

||||

|

||||

## Related Repositories:

|

||||

|

||||

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main

|

||||

- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main

|

||||

- ChatRWKV: https://github.com/BlinkDL/ChatRWKV

|

||||

- RWKV-LM: https://github.com/BlinkDL/RWKV-LM

|

||||

- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

|

||||

|

||||

## Preview

|

||||

|

||||

### Homepage

|

||||

|

||||

|

||||

|

||||

|

||||

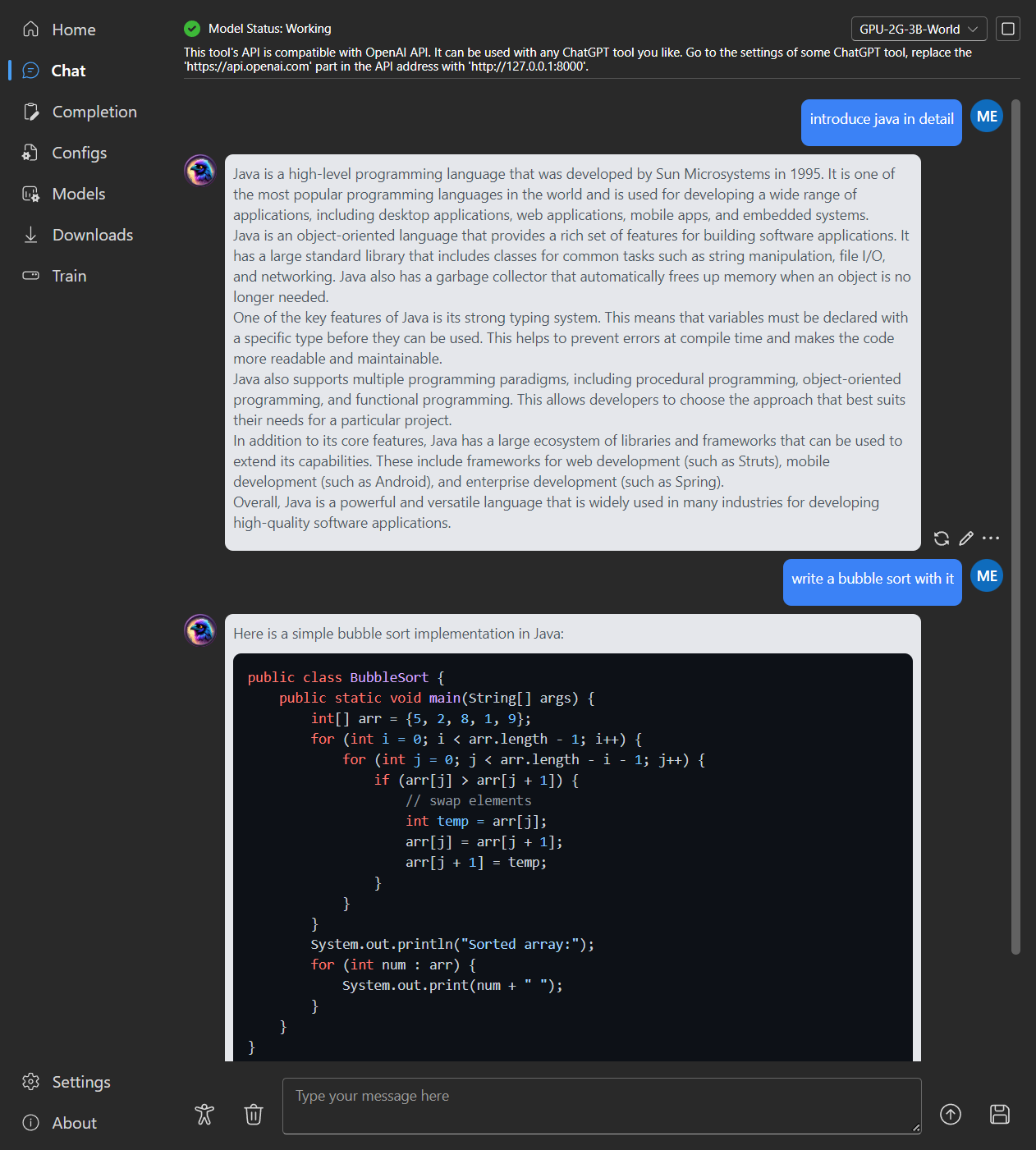

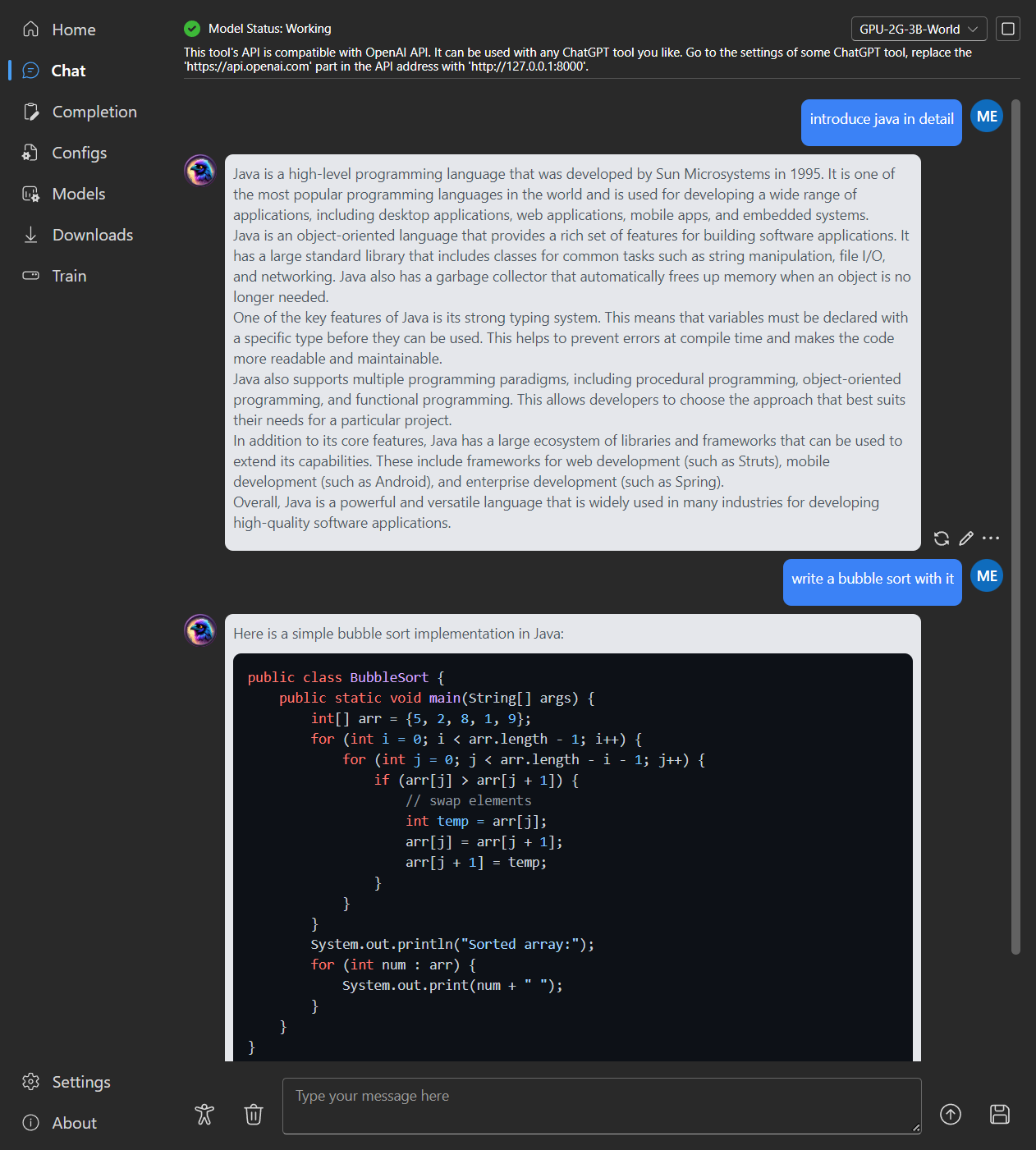

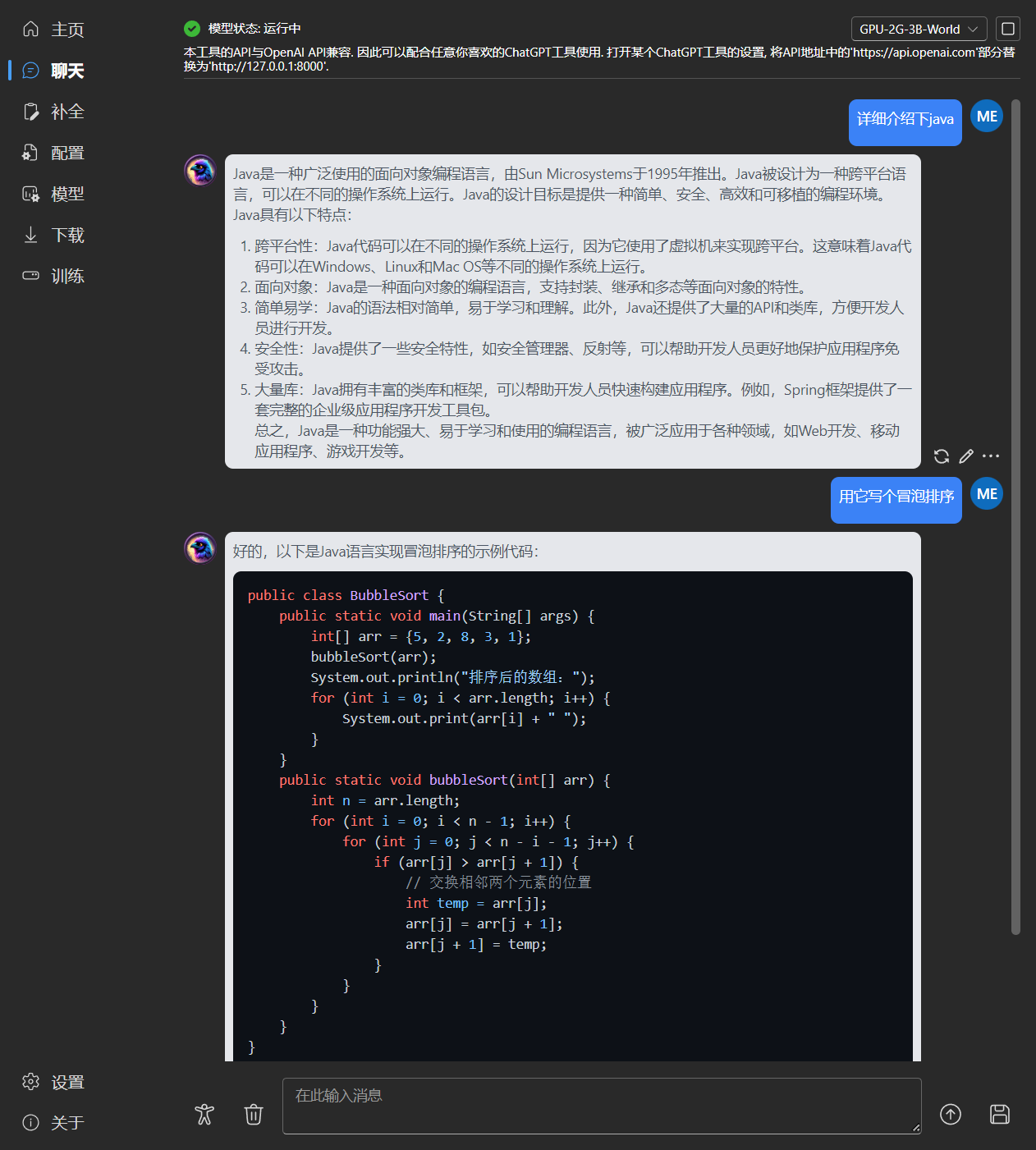

### Chat

|

||||

|

||||

|

||||

|

||||

|

||||

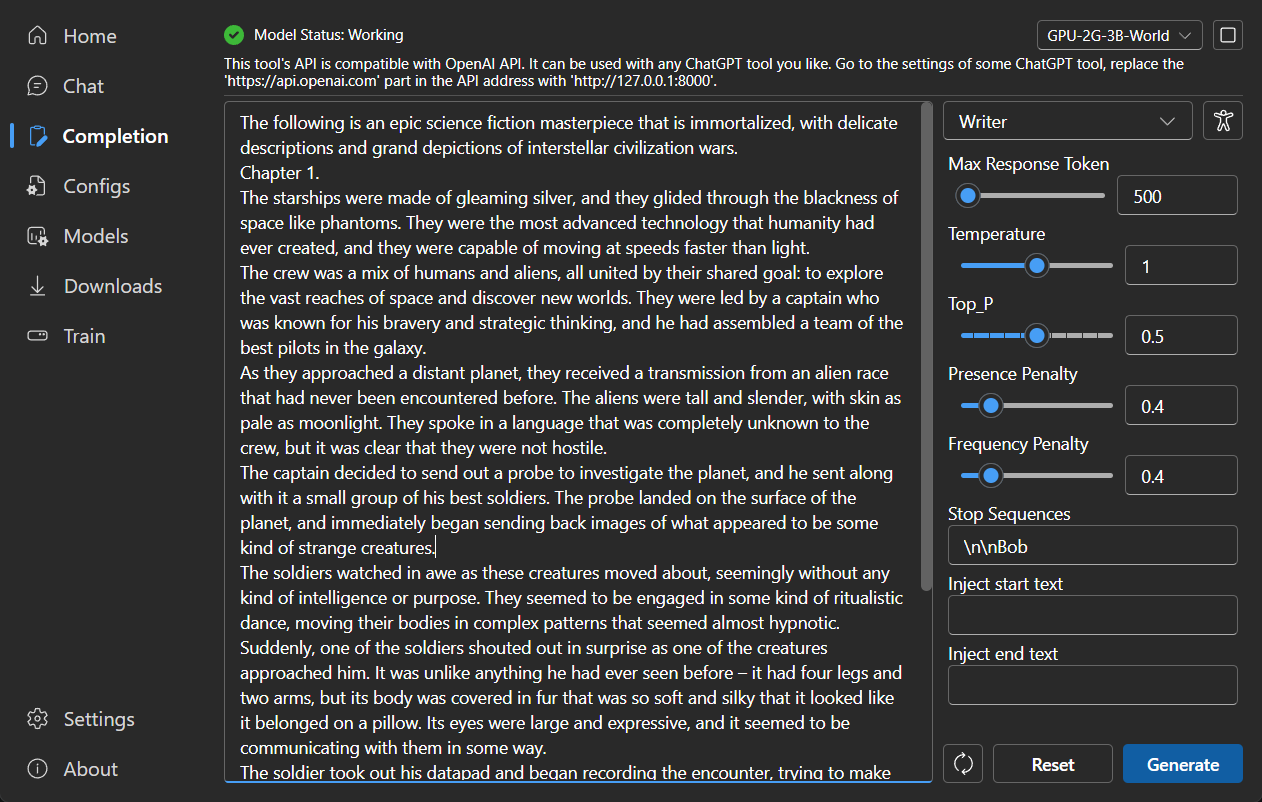

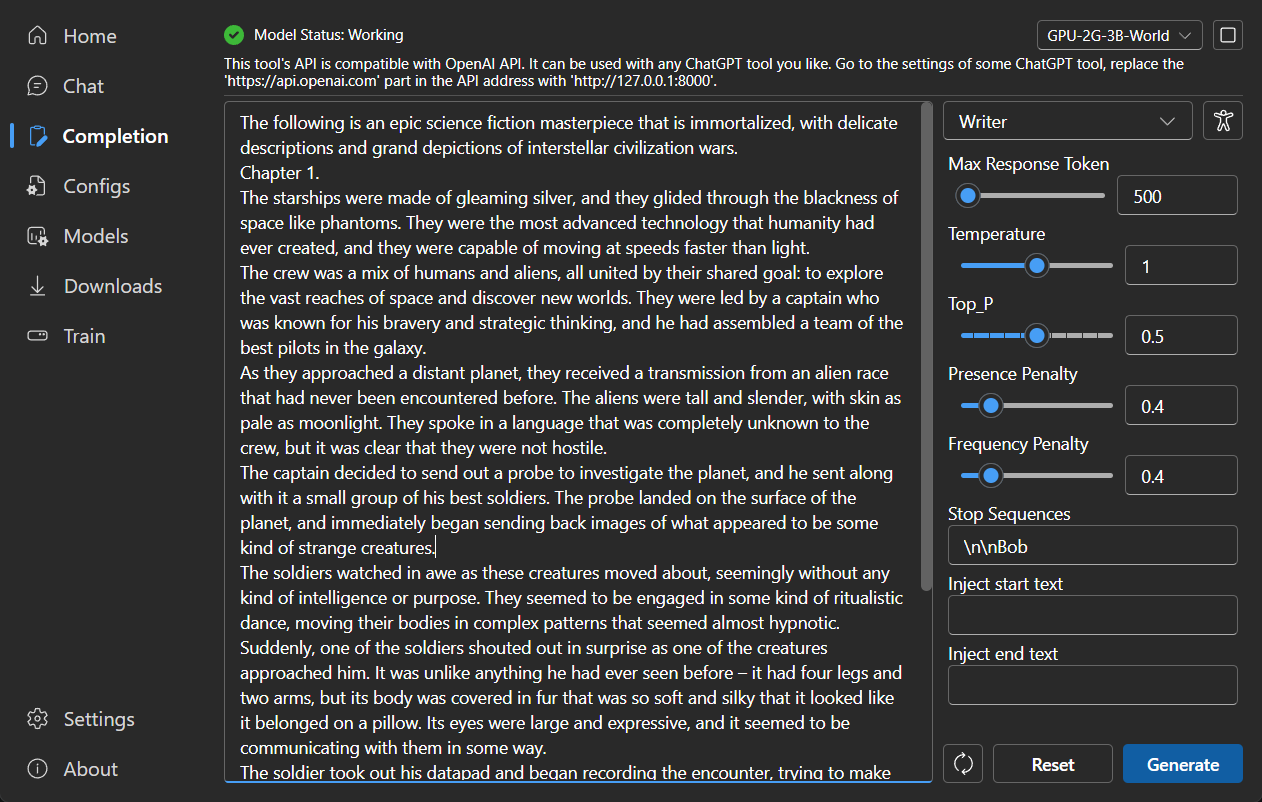

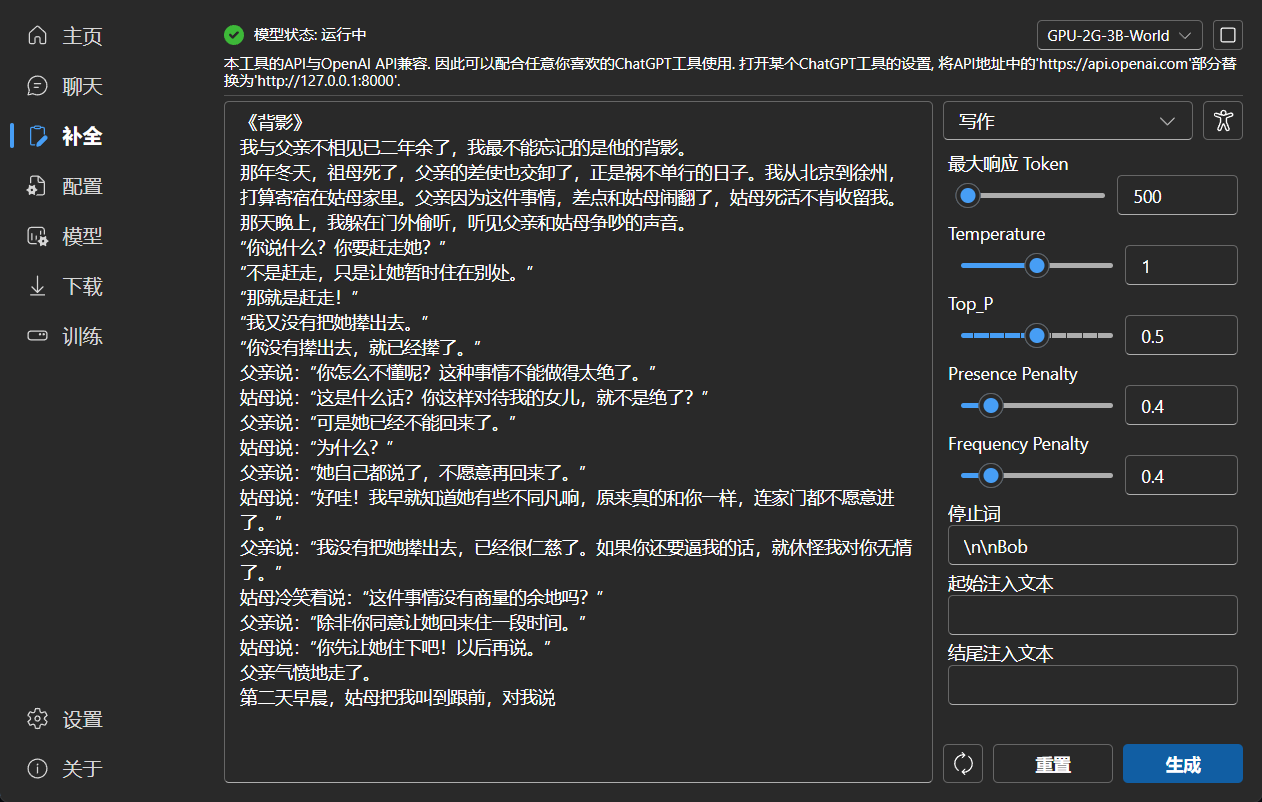

### Completion

|

||||

|

||||

|

||||

|

||||

|

||||

### Configuration

|

||||

|

||||

|

||||

|

||||

|

||||

### Model Management

|

||||

|

||||

|

||||

|

||||

|

||||

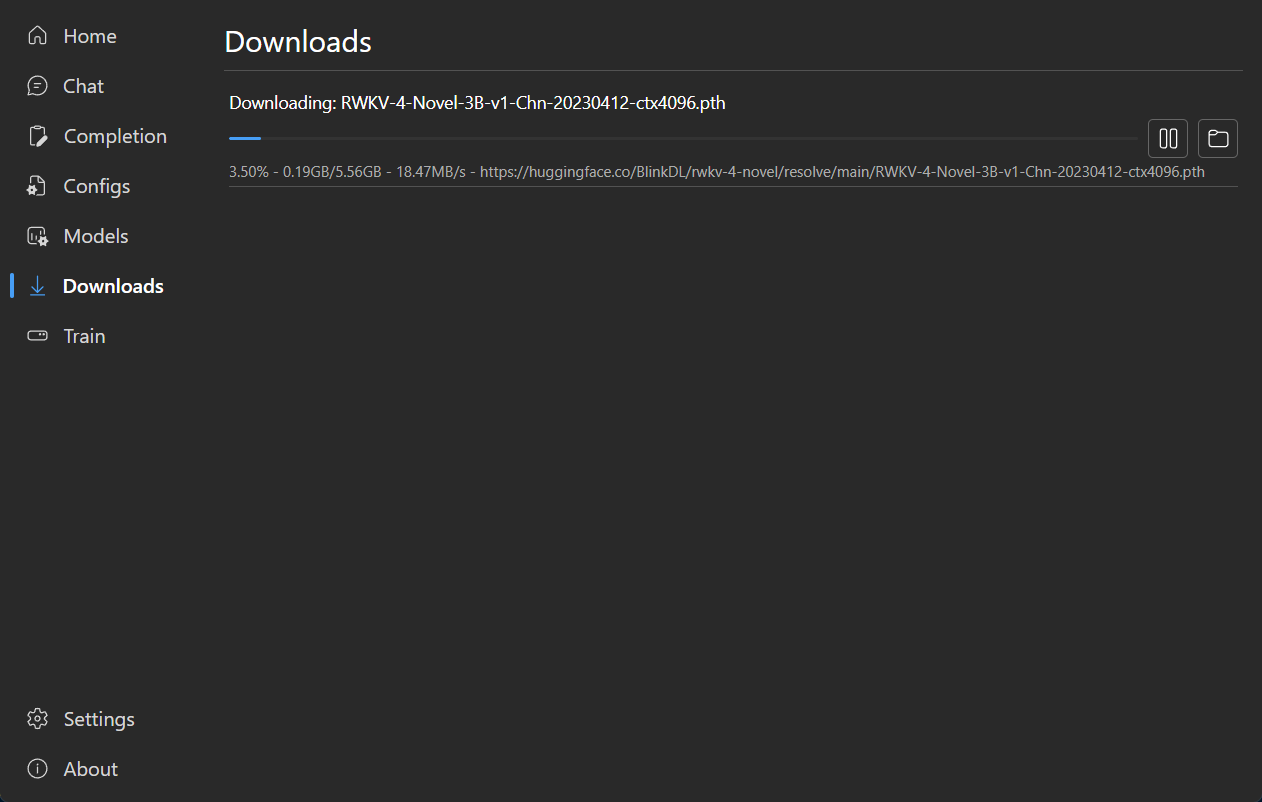

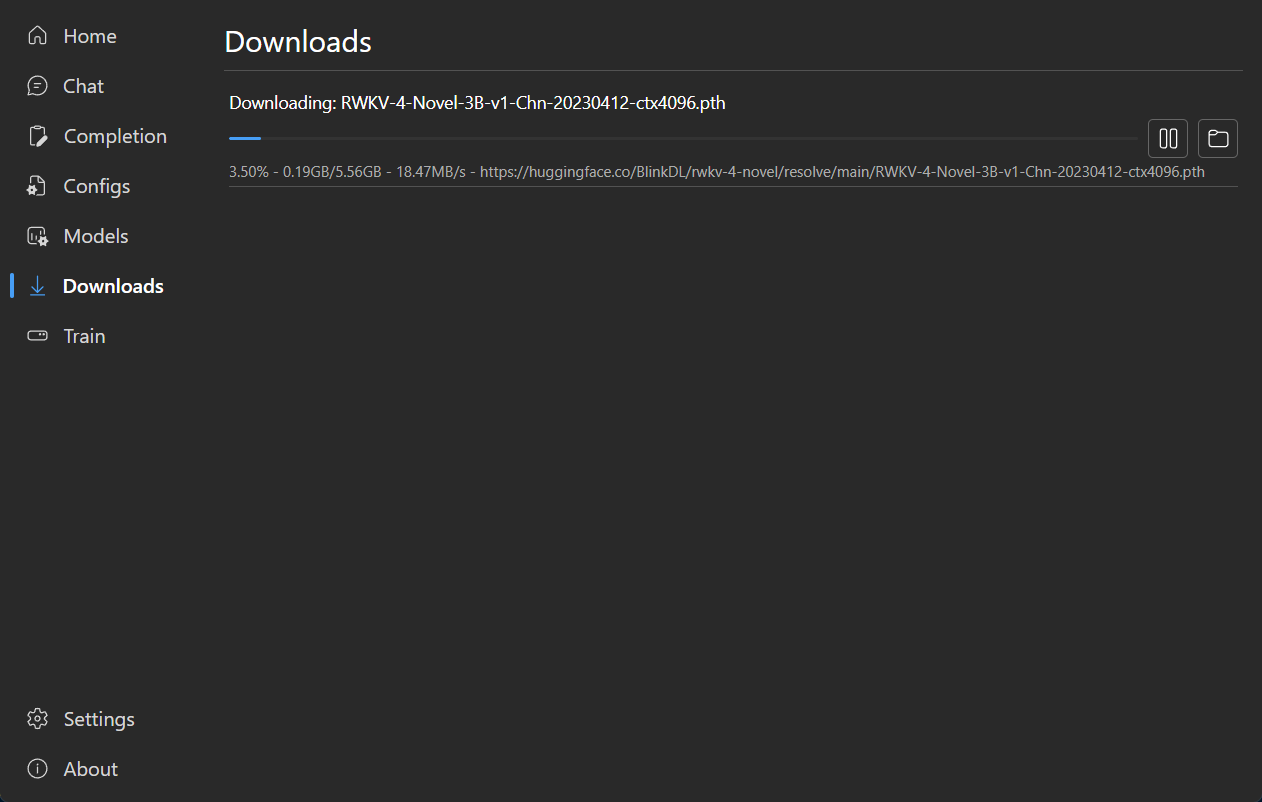

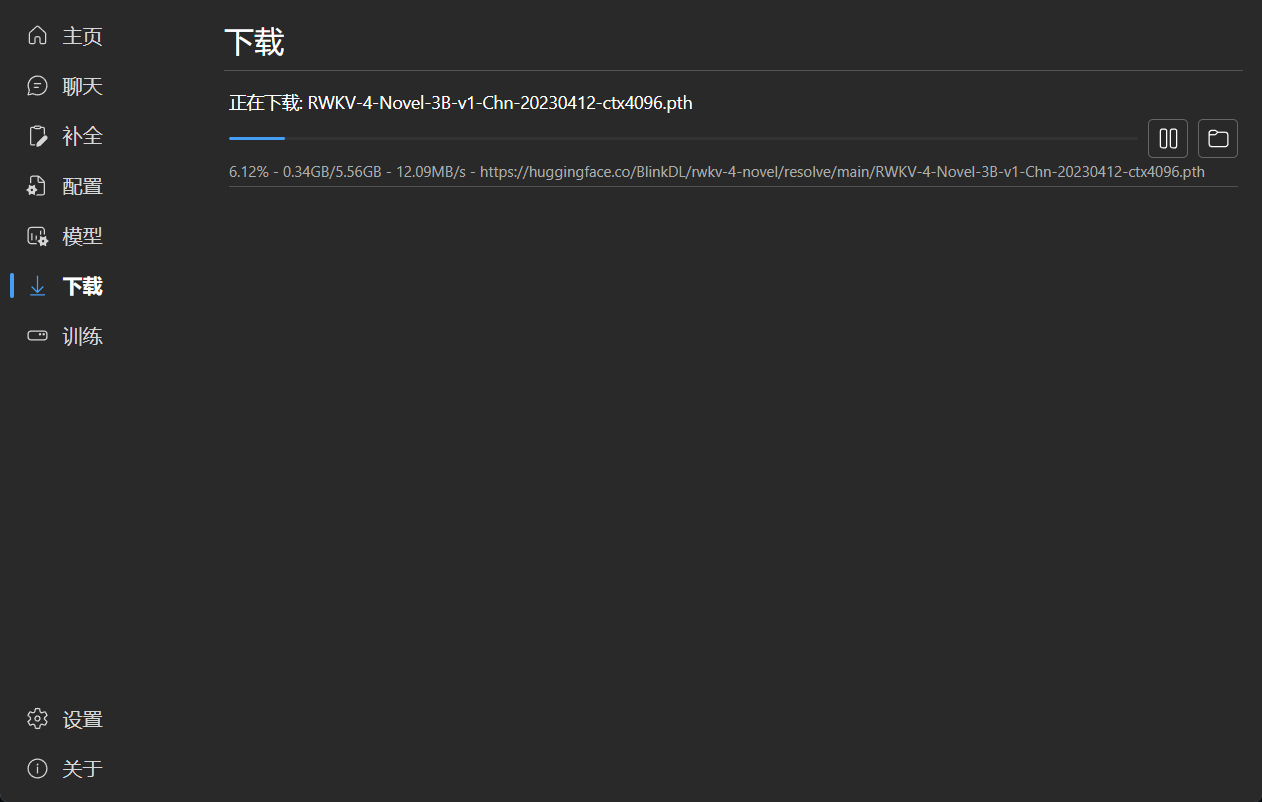

### Download Management

|

||||

|

||||

|

||||

|

||||

|

||||

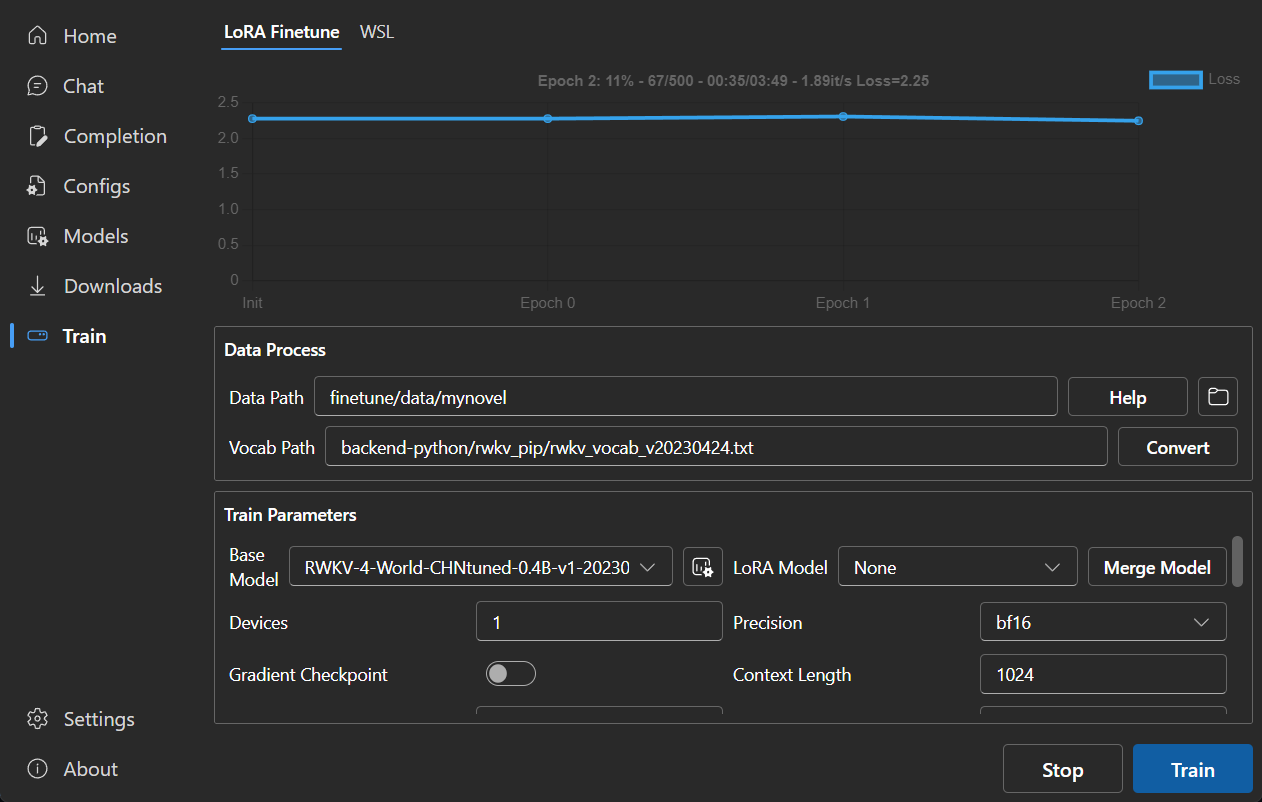

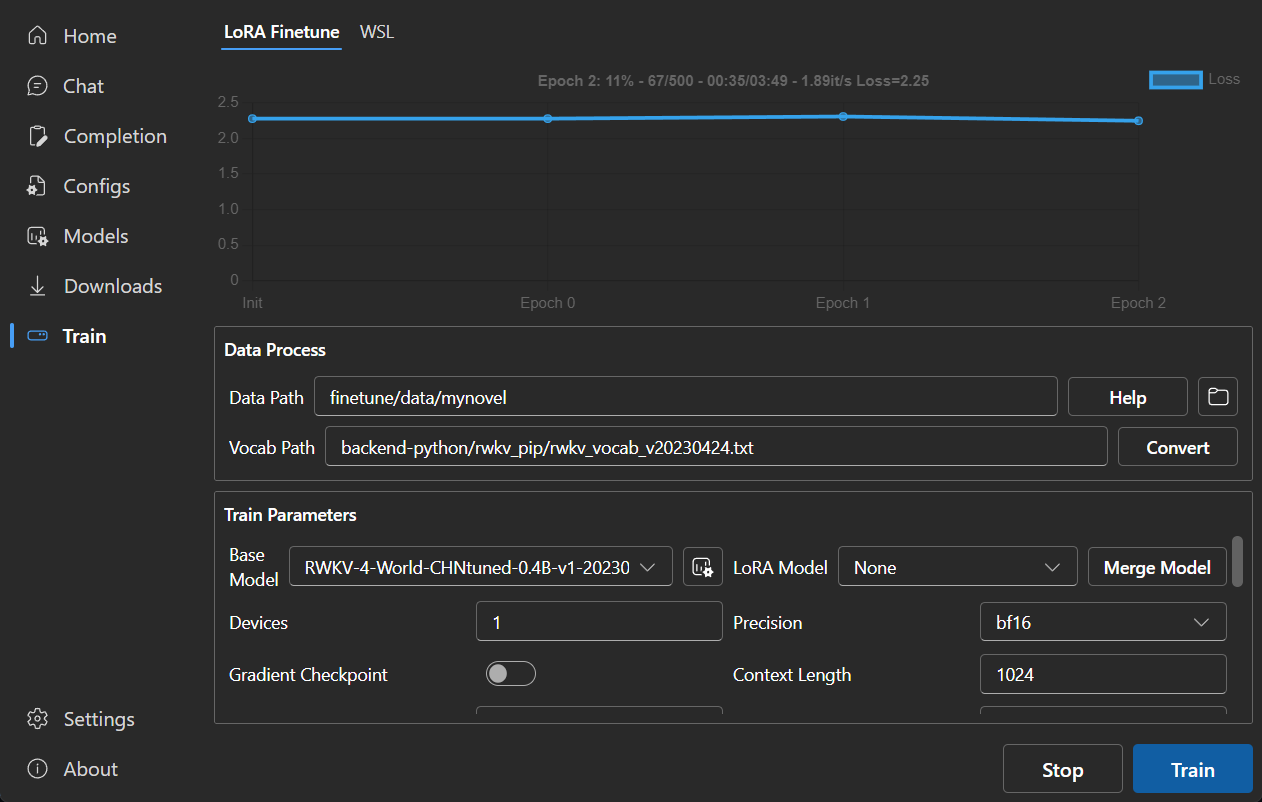

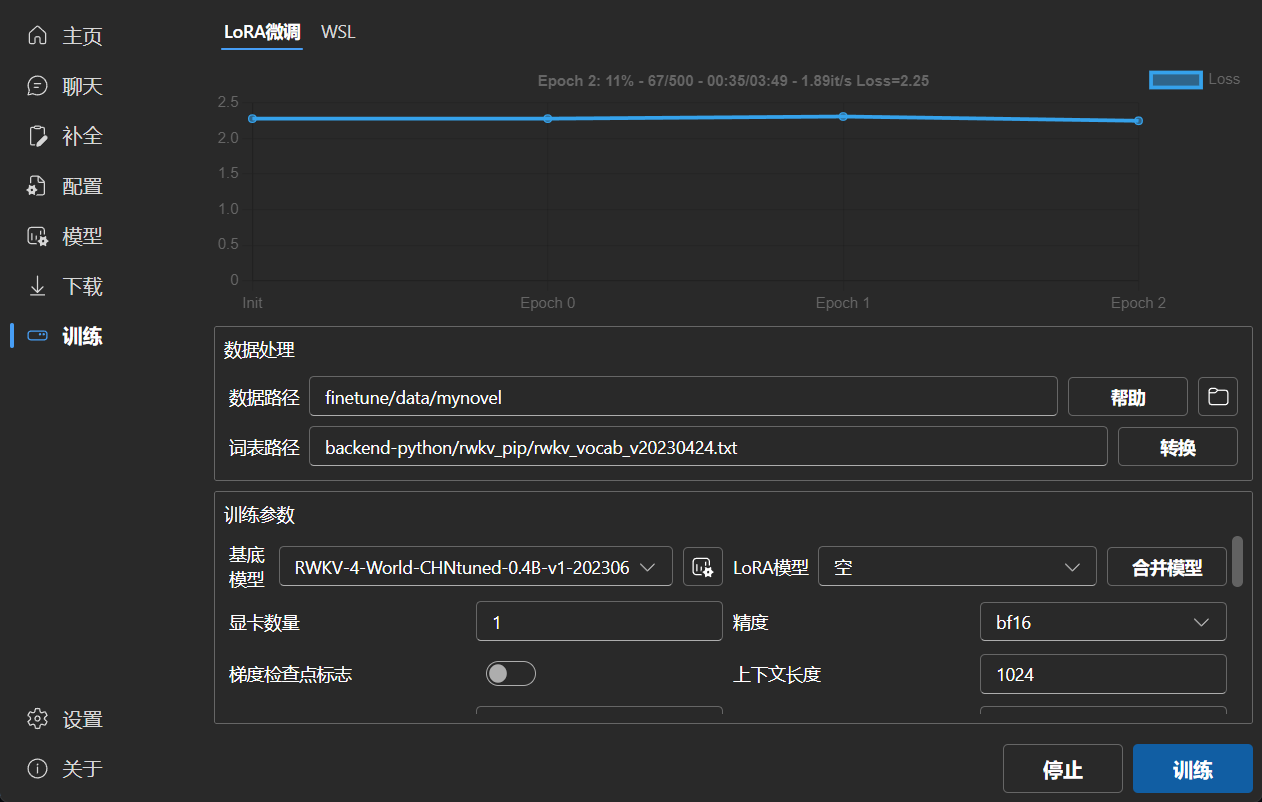

### LoRA Finetune

|

||||

|

||||

|

||||

|

||||

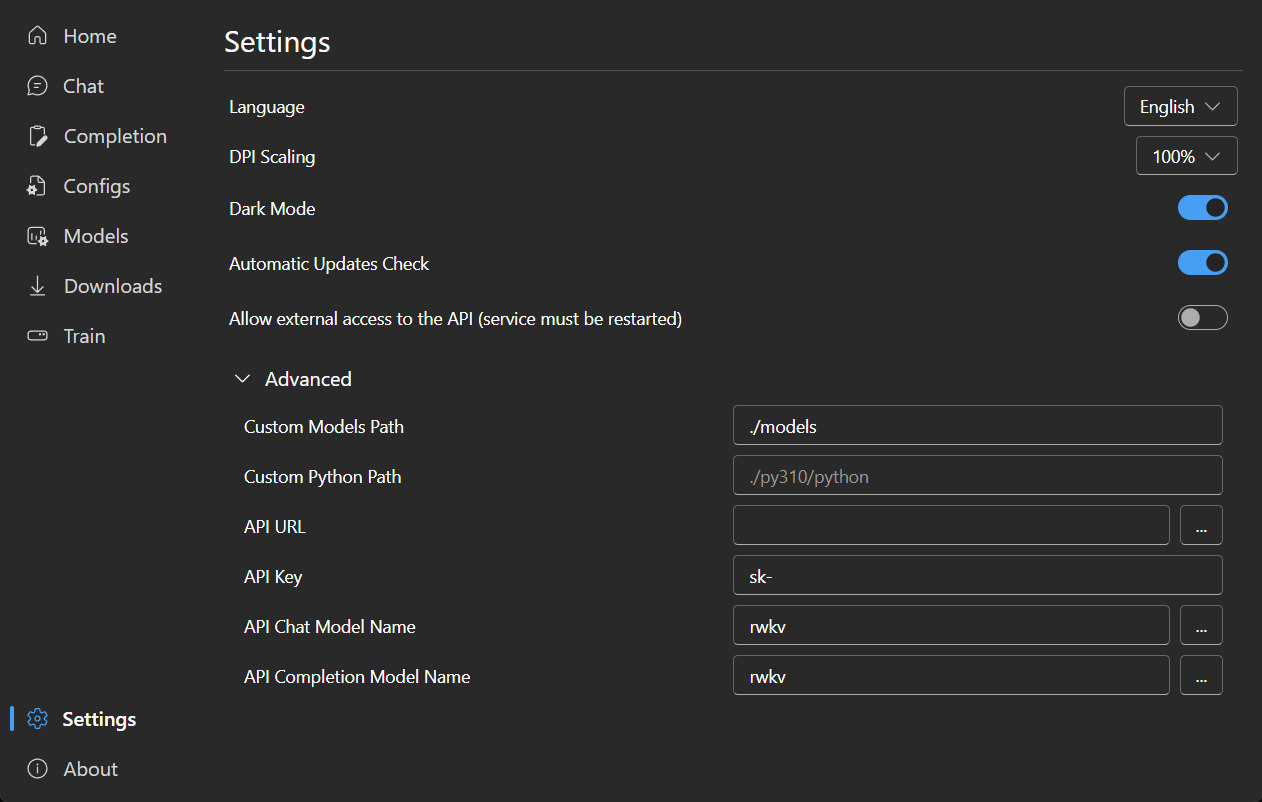

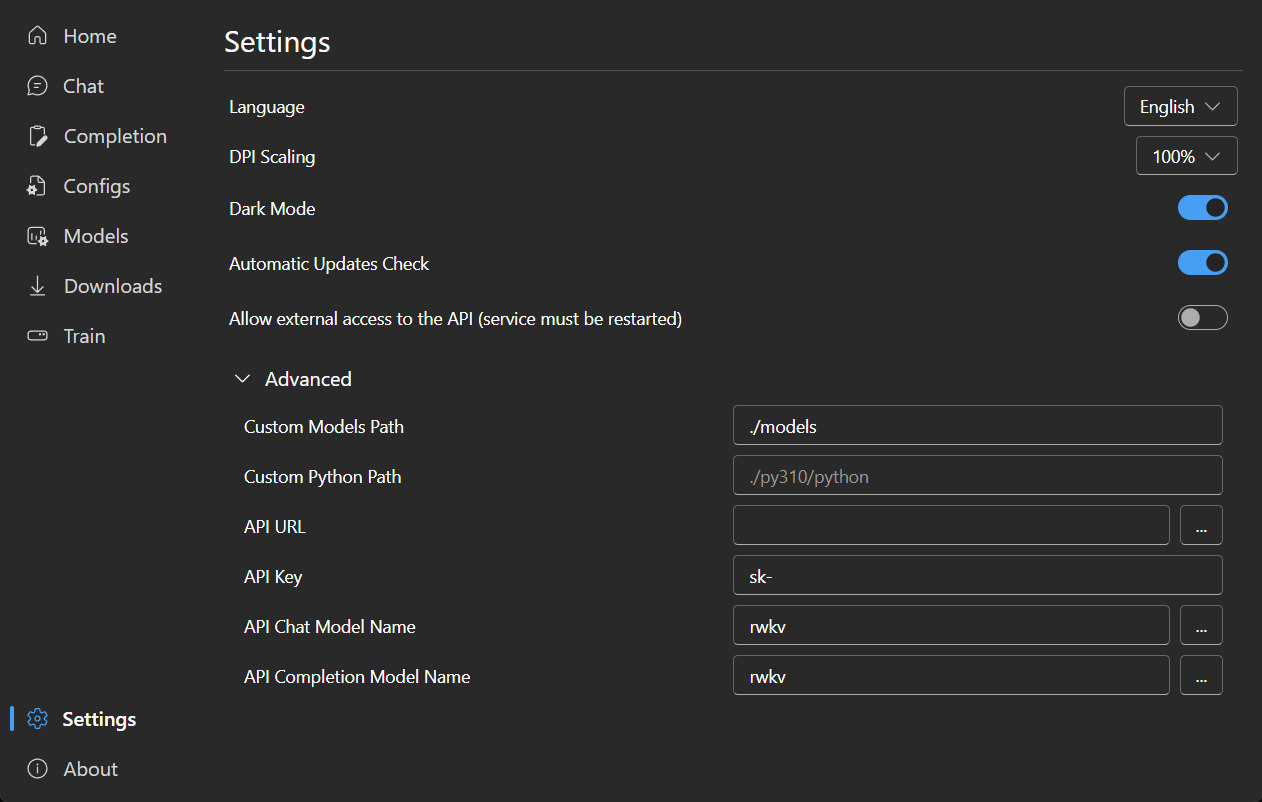

### Settings

|

||||

|

||||

|

||||

|

||||

|

||||

171

README_JA.md

Normal file

@@ -0,0 +1,171 @@
<p align="center">
  <img src="https://github.com/josStorer/RWKV-Runner/assets/13366013/d24834b0-265d-45f5-93c0-fac1e19562af">
</p>

<h1 align="center">RWKV Runner</h1>

<div align="center">

このプロジェクトは、すべてを自動化することで、大規模な言語モデルを使用する際の障壁をなくすことを目的としています。必要なのは、
わずか数メガバイトの軽量な実行プログラムだけです。さらに、このプロジェクトは OpenAI API と互換性のあるインターフェイスを提供しており、
すべての ChatGPT クライアントは RWKV クライアントであることを意味します。

[![license][license-image]][license-url]
[![release][release-image]][release-url]

[English](README.md) | [简体中文](README_ZH.md) | 日本語

### インストール

[![Windows][Windows-image]][Windows-url]
[![MacOS][MacOS-image]][MacOS-url]
[![Linux][Linux-image]][Linux-url]

[FAQs](https://github.com/josStorer/RWKV-Runner/wiki/FAQs) | [プレビュー](#Preview) | [ダウンロード][download-url] | [サーバーデプロイ例](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

[license-url]: https://github.com/josStorer/RWKV-Runner/blob/master/LICENSE

[release-image]: https://img.shields.io/github/release/josStorer/RWKV-Runner.svg

[release-url]: https://github.com/josStorer/RWKV-Runner/releases/latest

[download-url]: https://github.com/josStorer/RWKV-Runner/releases

[Windows-image]: https://img.shields.io/badge/-Windows-blue?logo=windows

[Windows-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/windows/Readme_Install.txt

[MacOS-image]: https://img.shields.io/badge/-MacOS-black?logo=apple

[MacOS-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/darwin/Readme_Install.txt

[Linux-image]: https://img.shields.io/badge/-Linux-black?logo=linux

[Linux-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/linux/Readme_Install.txt

</div>

#### デフォルトの設定はカスタム CUDA カーネルアクセラレーションを有効にしています。互換性の問題が発生する可能性がある場合は、コンフィグページに移動し、`Use Custom CUDA kernel to Accelerate` をオフにしてください。

#### Windows Defender がこれをウイルスだと主張する場合は、[v1.0.8](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.8) / [v1.0.9](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.9) をダウンロードして最新版に自動更新させるか、信頼済みリストに追加してみてください。

#### 異なるタスクについては、API パラメータを調整することで、より良い結果を得ることができます。例えば、翻訳タスクの場合、Temperature を 1 に、Top_P を 0.3 に設定してみてください。

## 特徴

- RWKV モデル管理とワンクリック起動
- OpenAI API と完全に互換性があり、すべての ChatGPT クライアントを RWKV クライアントにします。モデル起動後、
  http://127.0.0.1:8000/docs を開いて詳細をご覧ください。
- 依存関係の自動インストールにより、軽量な実行プログラムのみを必要とします
- 2G から 32G の VRAM のコンフィグが含まれており、ほとんどのコンピュータで動作します
- ユーザーフレンドリーなチャットと完成インタラクションインターフェースを搭載
- 分かりやすく操作しやすいパラメータ設定
- 内蔵モデル変換ツール
- ダウンロード管理とリモートモデル検査機能内蔵
- 内蔵のLoRA微調整機能を搭載しています
- このプログラムは、OpenAI ChatGPTとGPT Playgroundのクライアントとしても使用できます
- 多言語ローカライズ
- テーマ切り替え
- 自動アップデート

## API 同時実行ストレステスト

```bash
ab -p body.json -T application/json -c 20 -n 100 -l http://127.0.0.1:8000/chat/completions
```

body.json:

```json
{
  "messages": [
    {
      "role": "user",
      "content": "Hello"
    }
  ]
}
```

## 埋め込み API の例

LangChain を使用している場合は、`OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`を使用してください

```python
import numpy as np
import requests


def cosine_similarity(a, b):
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))


values = [
    "I am a girl",
    "我是个女孩",
    "私は女の子です",
    "广东人爱吃福建人",
    "我是个人类",
    "I am a human",
    "that dog is so cute",
    "私はねこむすめです、にゃん♪",
    "宇宙级特大事件!号外号外!"
]

embeddings = []
for v in values:
    r = requests.post("http://127.0.0.1:8000/embeddings", json={"input": v})
    embedding = r.json()["data"][0]["embedding"]
    embeddings.append(embedding)

compared_embedding = embeddings[0]

embeddings_cos_sim = [cosine_similarity(compared_embedding, e) for e in embeddings]

for i in np.argsort(embeddings_cos_sim)[::-1]:
    print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")
```

## 関連リポジトリ:

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main
- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main
- ChatRWKV: https://github.com/BlinkDL/ChatRWKV
- RWKV-LM: https://github.com/BlinkDL/RWKV-LM
- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

## プレビュー

### ホームページ

### チャット

### 補完

### コンフィグ

### モデル管理

### ダウンロード管理

### LoRA Finetune

### 設定

93

README_ZH.md

@@ -12,9 +12,15 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。
[![license][license-image]][license-url]
[![release][release-image]][release-url]

[English](README.md) | 简体中文
[English](README.md) | 简体中文 | [日本語](README_JA.md)

[视频演示](https://www.bilibili.com/video/BV1hM4y1v76R) | [疑难解答](https://www.bilibili.com/read/cv23921171) | [预览](#Preview) | [下载][download-url] | [懒人包](https://pan.baidu.com/s/1wchIUHgne3gncIiLIeKBEQ?pwd=1111)
### 安装

[![Windows][Windows-image]][Windows-url]
[![MacOS][MacOS-image]][MacOS-url]
[![Linux][Linux-image]][Linux-url]

[视频演示](https://www.bilibili.com/video/BV1hM4y1v76R) | [疑难解答](https://www.bilibili.com/read/cv23921171) | [预览](#Preview) | [下载][download-url] | [懒人包](https://pan.baidu.com/s/1wchIUHgne3gncIiLIeKBEQ?pwd=1111) | [服务器部署示例](https://github.com/josStorer/RWKV-Runner/tree/master/deploy-examples)

[license-image]: http://img.shields.io/badge/license-MIT-blue.svg

@@ -26,11 +32,25 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。

[download-url]: https://github.com/josStorer/RWKV-Runner/releases

[Windows-image]: https://img.shields.io/badge/-Windows-blue?logo=windows

[Windows-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/windows/Readme_Install.txt

[MacOS-image]: https://img.shields.io/badge/-MacOS-black?logo=apple

[MacOS-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/darwin/Readme_Install.txt

[Linux-image]: https://img.shields.io/badge/-Linux-black?logo=linux

[Linux-url]: https://github.com/josStorer/RWKV-Runner/blob/master/build/linux/Readme_Install.txt

</div>

#### 注意 目前RWKV中文模型质量一般,推荐使用英文模型体验实际RWKV能力
#### 注意 目前RWKV中文模型质量一般,推荐使用英文模型或World(全球语言)体验实际RWKV能力

#### 预设配置没有开启自定义CUDA算子加速,但我强烈建议你开启它并使用int8量化运行,速度非常快,且显存消耗少得多。前往配置页面,打开`使用自定义CUDA算子加速`
#### 预设配置已经开启自定义CUDA算子加速,速度更快,且显存消耗更少。如果你遇到可能的兼容性问题,前往配置页面,关闭`使用自定义CUDA算子加速`

#### 如果Windows Defender说这是一个病毒,你可以尝试下载[v1.0.8](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.8)/[v1.0.9](https://github.com/josStorer/RWKV-Runner/releases/tag/v1.0.9)然后让其自动更新到最新版,或添加信任

#### 对于不同的任务,调整API参数会获得更好的效果,例如对于翻译任务,你可以尝试设置Temperature为1,Top_P为0.3

@@ -44,6 +64,8 @@ API兼容的接口,这意味着一切ChatGPT客户端都是RWKV客户端。
- 易于理解和操作的参数配置
- 内置模型转换工具
- 内置下载管理和远程模型检视
- 内置一键LoRA微调
- 也可用作 OpenAI ChatGPT 和 GPT Playground 客户端
- 多语言本地化
- 主题切换
- 自动更新
@@ -67,46 +89,83 @@ body.json:
}
```

## Todo
## Embeddings API 示例

- [ ] 模型训练功能
- [x] CUDA算子int8提速
- [ ] macOS支持
- [ ] linux支持
- [ ] 本地状态缓存数据库
如果你在用langchain, 直接使用 `OpenAIEmbeddings(openai_api_base="http://127.0.0.1:8000", openai_api_key="sk-")`

```python
import numpy as np
import requests


def cosine_similarity(a, b):
    return np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b))


values = [
    "I am a girl",
    "我是个女孩",
    "私は女の子です",
    "广东人爱吃福建人",
    "我是个人类",
    "I am a human",
    "that dog is so cute",
    "私はねこむすめです、にゃん♪",
    "宇宙级特大事件!号外号外!"
]

embeddings = []
for v in values:
    r = requests.post("http://127.0.0.1:8000/embeddings", json={"input": v})
    embedding = r.json()["data"][0]["embedding"]
    embeddings.append(embedding)

compared_embedding = embeddings[0]

embeddings_cos_sim = [cosine_similarity(compared_embedding, e) for e in embeddings]

for i in np.argsort(embeddings_cos_sim)[::-1]:
    print(f"{embeddings_cos_sim[i]:.10f} - {values[i]}")
```

## 相关仓库:

- RWKV-4-World: https://huggingface.co/BlinkDL/rwkv-4-world/tree/main
- RWKV-4-Raven: https://huggingface.co/BlinkDL/rwkv-4-raven/tree/main
- ChatRWKV: https://github.com/BlinkDL/ChatRWKV
- RWKV-LM: https://github.com/BlinkDL/RWKV-LM
- RWKV-LM-LoRA: https://github.com/Blealtan/RWKV-LM-LoRA

## Preview

### 主页

### 聊天

### 补全

### 配置

### 模型管理

### 下载管理

### LoRA微调

### 设置

@@ -2,21 +2,25 @@ package backend_golang

import (
	"context"
	"errors"
	"net/http"
	"os"
	"os/exec"
	"path/filepath"
	"runtime"

	"github.com/fsnotify/fsnotify"
	"github.com/minio/selfupdate"
	wruntime "github.com/wailsapp/wails/v2/pkg/runtime"
)

// App struct
type App struct {
	ctx context.Context
	exDir string
	cmdPrefix string
	ctx context.Context
	HasConfigData bool
	ConfigData map[string]any
	exDir string
	cmdPrefix string
}

// NewApp creates a new App application struct
@@ -37,7 +41,36 @@ func (a *App) OnStartup(ctx context.Context) {
		a.cmdPrefix = "cd " + a.exDir + " && "
	}

	os.Mkdir(a.exDir+"models", os.ModePerm)
	os.Mkdir(a.exDir+"lora-models", os.ModePerm)
	os.Mkdir(a.exDir+"finetune/json2binidx_tool/data", os.ModePerm)
	f, err := os.Create(a.exDir + "lora-models/train_log.txt")
	if err == nil {
		f.Close()
	}

	a.downloadLoop()

	watcher, err := fsnotify.NewWatcher()
	if err == nil {
		watcher.Add("./lora-models")
		watcher.Add("./models")
		go func() {
			for {
				select {
				case event, ok := <-watcher.Events:
					if !ok {
						return
					}
					wruntime.EventsEmit(ctx, "fsnotify", event.Name)
				case _, ok := <-watcher.Errors:
					if !ok {
						return
					}
				}
			}
		}()
	}
}

func (a *App) UpdateApp(url string) (broken bool, err error) {
@@ -53,15 +86,30 @@ func (a *App) UpdateApp(url string) (broken bool, err error) {
		}
		return false, err
	}
	name, err := os.Executable()
	if err != nil {
		return false, err
	if runtime.GOOS == "windows" {
		name, err := os.Executable()
		if err != nil {
			return false, err
		}
		exec.Command(name, os.Args[1:]...).Start()
		wruntime.Quit(a.ctx)
	}
	exec.Command(name, os.Args[1:]...).Start()
	wruntime.Quit(a.ctx)
	return false, nil
}

func (a *App) RestartApp() error {
	if runtime.GOOS == "windows" {
		name, err := os.Executable()
		if err != nil {
			return err
		}
		exec.Command(name, os.Args[1:]...).Start()
		wruntime.Quit(a.ctx)
		return nil
	}
	return errors.New("unsupported OS")
}

func (a *App) GetPlatform() string {
	return runtime.GOOS
}

@@ -1,6 +1,7 @@
package backend_golang

import (
	"context"
	"path/filepath"
	"time"

@@ -18,6 +19,7 @@ func (a *App) DownloadFile(path string, url string) error {

type DownloadStatus struct {
	resp *grab.Response
	cancel context.CancelFunc
	Name string `json:"name"`
	Path string `json:"path"`
	Url string `json:"url"`

@@ -29,7 +31,7 @@ type DownloadStatus struct {
	Done bool `json:"done"`
}

var downloadList []DownloadStatus
var downloadList []*DownloadStatus

func existsInDownloadList(url string) bool {
	for _, ds := range downloadList {

@@ -41,49 +43,58 @@ func existsInDownloadList(url string) bool {
}

func (a *App) PauseDownload(url string) {
	for i, ds := range downloadList {
	for _, ds := range downloadList {
		if ds.Url == url {
			if ds.resp != nil {
				ds.resp.Cancel()
			}

			downloadList[i] = DownloadStatus{
				resp: ds.resp,
				Name: ds.Name,
				Path: ds.Path,
				Url: ds.Url,
				Downloading: false,
			if ds.cancel != nil {
				ds.cancel()
			}
			ds.resp = nil
			ds.Downloading = false
			ds.Speed = 0
			break
		}
	}
}

func (a *App) ContinueDownload(url string) {
	for i, ds := range downloadList {
	for _, ds := range downloadList {
		if ds.Url == url {
			client := grab.NewClient()
			req, _ := grab.NewRequest(ds.Path, ds.Url)
			resp := client.Do(req)
			if !ds.Downloading && ds.resp == nil && !ds.Done {
				ds.Downloading = true

			downloadList[i] = DownloadStatus{
				resp: resp,
				Name: ds.Name,
				Path: ds.Path,
				Url: ds.Url,
				Downloading: true,
				req, err := grab.NewRequest(ds.Path, ds.Url)
				if err != nil {
					ds.Downloading = false
					break
				}
				// if PauseDownload() is called before the request finished, ds.Downloading will be false
				// if the user keeps clicking pause and resume, it may result in multiple requests being successfully downloaded at the same time
				// so we have to create a context and cancel it when PauseDownload() is called
				ctx, cancel := context.WithCancel(context.Background())
				ds.cancel = cancel
				req = req.WithContext(ctx)
				resp := grab.DefaultClient.Do(req)

				if resp != nil && resp.HTTPResponse != nil &&
					resp.HTTPResponse.StatusCode >= 200 && resp.HTTPResponse.StatusCode < 300 {
					ds.resp = resp
				} else {
					ds.Downloading = false
				}
			}
			break
		}
	}
}
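
Reviewer sketch: the change above switches `downloadList` to `[]*DownloadStatus` so that a `range` loop mutates the shared entry, and it attaches a `context.CancelFunc` to each entry so that pausing aborts the in-flight request. The following stdlib-only sketch illustrates that pattern under stated assumptions: `item`, `items`, `resume`, and `pause` are hypothetical names, the `grab` request is replaced by a goroutine that simply waits on the context, and the mutex is an addition that the diff itself does not contain.

```go
package main

import (
	"context"
	"sync"
)

// item is a hypothetical stand-in for DownloadStatus.
type item struct {
	URL         string
	Downloading bool
	cancel      context.CancelFunc
}

var (
	mu    sync.Mutex // assumption: not present in the diff
	items []*item    // pointers, so range loops mutate the shared entries
)

// resume starts a simulated transfer unless one is already running.
// It returns a channel that is closed when the worker stops, or nil
// when nothing was started.
func resume(url string) <-chan struct{} {
	mu.Lock()
	defer mu.Unlock()
	for _, it := range items {
		if it.URL == url && !it.Downloading {
			ctx, cancel := context.WithCancel(context.Background())
			it.cancel = cancel
			it.Downloading = true
			done := make(chan struct{})
			go func() {
				<-ctx.Done() // a real worker would stream bytes until cancelled
				close(done)
			}()
			return done
		}
	}
	return nil
}

// pause cancels the in-flight worker and clears the flag in place.
func pause(url string) {
	mu.Lock()
	defer mu.Unlock()
	for _, it := range items {
		if it.URL == url {
			if it.cancel != nil {
				it.cancel()
				it.cancel = nil
			}
			it.Downloading = false
			return
		}
	}
}
```

Because each entry carries its own cancel function, repeated pause and resume clicks cannot leave more than one worker running for the same URL in this sketch.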

func (a *App) AddToDownloadList(path string, url string) {
	if !existsInDownloadList(url) {
		downloadList = append(downloadList, DownloadStatus{
		downloadList = append(downloadList, &DownloadStatus{
			resp: nil,
			Name: filepath.Base(path),
			Path: a.exDir + path,
			Url: url,
			Downloading: true,
			Downloading: false,
		})
		a.ContinueDownload(url)
	} else {

@@ -96,32 +107,17 @@ func (a *App) downloadLoop() {
	go func() {
		for {
			<-ticker.C
			for i, ds := range downloadList {
				transferred := int64(0)
				size := int64(0)
				speed := float64(0)
				progress := float64(0)
				downloading := ds.Downloading
				done := false
			for _, ds := range downloadList {
				if ds.resp != nil {
					transferred = ds.resp.BytesComplete()
					size = ds.resp.Size()
					speed = ds.resp.BytesPerSecond()
					progress = 100 * ds.resp.Progress()
					downloading = !ds.resp.IsComplete()
					done = ds.resp.Progress() == 1
				}
				downloadList[i] = DownloadStatus{
					resp: ds.resp,
					Name: ds.Name,
					Path: ds.Path,
					Url: ds.Url,
					Transferred: transferred,
					Size: size,
					Speed: speed,
					Progress: progress,
					Downloading: downloading,
					Done: done,
					ds.Transferred = ds.resp.BytesComplete()
					ds.Size = ds.resp.Size()
					ds.Speed = ds.resp.BytesPerSecond()
					ds.Progress = 100 * ds.resp.Progress()
					ds.Downloading = !ds.resp.IsComplete()
					ds.Done = ds.resp.Progress() == 1
					if !ds.Downloading {
						ds.resp = nil
					}
				}
			}
			runtime.EventsEmit(a.ctx, "downloadList", downloadList)

@@ -10,6 +10,8 @@ import (
	"runtime"
	"strings"
	"time"

	wruntime "github.com/wailsapp/wails/v2/pkg/runtime"
)

func (a *App) SaveJson(fileName string, jsonData any) error {

@@ -119,8 +121,34 @@ func (a *App) CopyFile(src string, dst string) error {
	return nil
}

func (a *App) OpenFileFolder(path string) error {
	absPath, err := filepath.Abs(a.exDir + path)
func (a *App) OpenSaveFileDialog(filterPattern string, defaultFileName string, savedContent string) (string, error) {
	path, err := wruntime.SaveFileDialog(a.ctx, wruntime.SaveDialogOptions{
		DefaultFilename: defaultFileName,
		Filters: []wruntime.FileFilter{{
			Pattern: filterPattern,
		}},
		CanCreateDirectories: true,
	})
	if err != nil {
		return "", err
	}
	if path == "" {
		return "", nil
	}
	if err := os.WriteFile(path, []byte(savedContent), 0644); err != nil {
		return "", err
	}
	return path, nil
}

func (a *App) OpenFileFolder(path string, relative bool) error {
	var absPath string
	var err error
	if relative {
		absPath, err = filepath.Abs(a.exDir + path)
	} else {
		absPath, err = filepath.Abs(path)
	}
	if err != nil {
		return err
	}

@@ -140,7 +168,12 @@ func (a *App) OpenFileFolder(path string) error {
		}
		return nil
	case "linux":
		println("unsupported OS")
		cmd := exec.Command("xdg-open", absPath)
		err := cmd.Run()
		if err != nil {
			return err
		}
		return nil
	}
	return errors.New("unsupported OS")
}

@@ -1,10 +1,13 @@
package backend_golang

import (
	"encoding/json"
	"errors"
	"os"
	"os/exec"
	"runtime"
	"strconv"
	"strings"
)

func (a *App) StartServer(python string, port int, host string) (string, error) {

@@ -29,6 +32,71 @@ func (a *App) ConvertModel(python string, modelPath string, strategy string, out
	return Cmd(python, "./backend-python/convert_model.py", "--in", modelPath, "--out", outPath, "--strategy", strategy)
}

func (a *App) ConvertData(python string, input string, outputPrefix string, vocab string) (string, error) {
	var err error
	if python == "" {
		python, err = GetPython()
	}
	if err != nil {
		return "", err
	}
	tokenizerType := "HFTokenizer"
	if strings.Contains(vocab, "rwkv_vocab_v20230424") {
		tokenizerType = "RWKVTokenizer"
	}

	input = strings.TrimSuffix(input, "/")
	if fi, err := os.Stat(input); err == nil && fi.IsDir() {
		files, err := os.ReadDir(input)
		if err != nil {
			return "", err
		}
		jsonlFile, err := os.Create(outputPrefix + ".jsonl")
		if err != nil {
			return "", err
		}
		defer jsonlFile.Close()
		for _, file := range files {
			if file.IsDir() || !strings.HasSuffix(file.Name(), ".txt") {
				continue
			}
			textContent, err := os.ReadFile(input + "/" + file.Name())
			if err != nil {
				return "", err
			}
			textJson, err := json.Marshal(map[string]string{"text": string(textContent)})
			if err != nil {
				return "", err
			}
			if _, err := jsonlFile.WriteString(string(textJson) + "\n"); err != nil {
				return "", err
			}
		}
		input = outputPrefix + ".jsonl"
	} else if err != nil {
		return "", err
	}

	return Cmd(python, "./finetune/json2binidx_tool/tools/preprocess_data.py", "--input", input, "--output-prefix", outputPrefix, "--vocab", vocab,
		"--tokenizer-type", tokenizerType, "--dataset-impl", "mmap", "--append-eod")
}

func (a *App) MergeLora(python string, useGpu bool, loraAlpha int, baseModel string, loraPath string, outputPath string) (string, error) {
	var err error
	if python == "" {
		python, err = GetPython()
	}
	if err != nil {
		return "", err
	}
	args := []string{python, "./finetune/lora/merge_lora.py"}
	if useGpu {
		args = append(args, "--use-gpu")
	}
	args = append(args, strconv.Itoa(loraAlpha), baseModel, loraPath, outputPath)
	return Cmd(args...)
}
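
Reviewer sketch: `MergeLora` above assembles its command line conditionally, with the optional `--use-gpu` flag ahead of the positional arguments. Pulling that assembly into a pure function, as in the hypothetical `mergeLoraArgs` below, would let the argument order be checked without spawning a process. This is only an illustration of the same ordering, not a proposed replacement.

```go
package main

import "strconv"

// mergeLoraArgs is a hypothetical helper that mirrors the argument order
// used by MergeLora: interpreter, script, optional flag, then positionals.
func mergeLoraArgs(python string, useGpu bool, loraAlpha int, baseModel, loraPath, outputPath string) []string {
	args := []string{python, "./finetune/lora/merge_lora.py"}
	if useGpu {
		args = append(args, "--use-gpu")
	}
	return append(args, strconv.Itoa(loraAlpha), baseModel, loraPath, outputPath)
}
```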

func (a *App) DepCheck(python string) error {
	var err error
	if python == "" {

@@ -48,32 +116,42 @@ func (a *App) InstallPyDep(python string, cnMirror bool) (string, error) {
	var err error
	if python == "" {
		python, err = GetPython()
		if runtime.GOOS == "windows" {
			python = `"%CD%/` + python + `"`
		}
	}
	if err != nil {
		return "", err
	}

	if runtime.GOOS == "windows" {
		ChangeFileLine("./py310/python310._pth", 3, "Lib\\site-packages")
		installScript := python + " ./backend-python/get-pip.py -i https://pypi.tuna.tsinghua.edu.cn/simple\n" +
			python + " -m pip install torch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 --index-url https://download.pytorch.org/whl/cu117\n" +
			python + " -m pip install -r ./backend-python/requirements.txt -i https://pypi.tuna.tsinghua.edu.cn/simple\n" +
			"exit"
		if !cnMirror {
			installScript = strings.Replace(installScript, " -i https://pypi.tuna.tsinghua.edu.cn/simple", "", -1)
			installScript = strings.Replace(installScript, "requirements.txt", "requirements_versions.txt", -1)
		}
		err = os.WriteFile("./install-py-dep.bat", []byte(installScript), 0644)
		if err != nil {
			return "", err
		}
		return Cmd("install-py-dep.bat")
	}

	if cnMirror {
		_, err = Cmd(python, "./backend-python/get-pip.py", "-i", "https://pypi.tuna.tsinghua.edu.cn/simple")
		return Cmd(python, "-m", "pip", "install", "-r", "./backend-python/requirements_without_cyac.txt", "-i", "https://pypi.tuna.tsinghua.edu.cn/simple")
	} else {
		_, err = Cmd(python, "./backend-python/get-pip.py")
	}
	if err != nil {
		return "", err
	}
	if runtime.GOOS == "windows" {
		_, err = Cmd(python, "-m", "pip", "install", "torch==1.13.1", "torchvision==0.14.1", "torchaudio==0.13.1", "--index-url", "https://download.pytorch.org/whl/cu117")
	} else {
		_, err = Cmd(python, "-m", "pip", "install", "torch", "torchvision", "torchaudio")
	}
	if err != nil {
		return "", err
	}
	if cnMirror {
		return Cmd(python, "-m", "pip", "install", "-r", "./backend-python/requirements.txt", "-i", "https://pypi.tuna.tsinghua.edu.cn/simple")
	} else {
		return Cmd(python, "-m", "pip", "install", "-r", "./backend-python/requirements_versions.txt")
		return Cmd(python, "-m", "pip", "install", "-r", "./backend-python/requirements_without_cyac.txt")
	}
}

func (a *App) GetPyError() string {
	content, err := os.ReadFile("./error.txt")
	if err != nil {
		return ""
	}
	return string(content)
}

@@ -17,20 +17,19 @@ import (
func Cmd(args ...string) (string, error) {
	switch platform := runtime.GOOS; platform {
	case "windows":
		_, err := os.Stat("cmd-helper.bat")
		if err != nil {
			if err := os.WriteFile("./cmd-helper.bat", []byte("start %*"), 0644); err != nil {
				return "", err
			}
		if err := os.WriteFile("./cmd-helper.bat", []byte("start %*"), 0644); err != nil {
			return "", err
		}
		cmdHelper, err := filepath.Abs("./cmd-helper")
		if err != nil {
			return "", err
		}

		for _, arg := range args {
			if strings.Contains(arg, " ") && strings.Contains(cmdHelper, " ") {
				return "", errors.New("path contains space") // golang bug https://github.com/golang/go/issues/17149#issuecomment-473976818
		if strings.Contains(cmdHelper, " ") {
			for _, arg := range args {
				if strings.Contains(arg, " ") {
					return "", errors.New("path contains space") // golang bug https://github.com/golang/go/issues/17149#issuecomment-473976818
				}
			}
		}

@@ -46,7 +45,7 @@ func Cmd(args ...string) (string, error) {
			return "", err
		}
		exDir := filepath.Dir(ex) + "/../../../"
		cmd := exec.Command("osascript", "-e", `tell application 'Terminal' to do script '`+"cd "+exDir+" && "+strings.Join(args, " ")+`'`)
		cmd := exec.Command("osascript", "-e", `tell application "Terminal" to do script "`+"cd "+exDir+" && "+strings.Join(args, " ")+`"`)
		err = cmd.Start()
		if err != nil {
			return "", err

181

backend-golang/wsl.go

Normal file

@@ -0,0 +1,181 @@
//go:build windows

package backend_golang

import (
	"bufio"
	"context"
	"errors"
	"io"
	"os"
	"os/exec"
	"path/filepath"
	"strings"
	"time"

	su "github.com/nyaosorg/go-windows-su"
	wsl "github.com/ubuntu/gowsl"
	wruntime "github.com/wailsapp/wails/v2/pkg/runtime"
)

var distro *wsl.Distro
var stdin io.WriteCloser
var cmd *exec.Cmd

func isWslRunning() (bool, error) {
	if distro == nil {
		return false, nil
	}
	state, err := distro.State()
	if err != nil {
		return false, err
	}
	if state != wsl.Running {
		distro = nil
		return false, nil
	}
	return true, nil
}

func (a *App) WslStart() error {
	running, err := isWslRunning()
	if err != nil {
		return err
	}
	if running {
		return nil
	}
	distros, err := wsl.RegisteredDistros(context.Background())
	if err != nil {
		return err
	}
	for _, d := range distros {
		if strings.Contains(d.Name(), "Ubuntu") {
			distro = &d
			break
		}
	}
	if distro == nil {
		return errors.New("ubuntu not found")
	}

	cmd = exec.Command("wsl", "-d", distro.Name(), "-u", "root")

	stdin, err = cmd.StdinPipe()
	if err != nil {
		return err
	}

	stdout, err := cmd.StdoutPipe()
	cmd.Stderr = cmd.Stdout
	if err != nil {
		// stdin.Close()
		stdin = nil
		return err
	}

	go func() {
		reader := bufio.NewReader(stdout)
		for {
			if stdin == nil {
				break
			}
			line, _, err := reader.ReadLine()
			if err != nil {
				wruntime.EventsEmit(a.ctx, "wslerr", err.Error())
				break
			}
			wruntime.EventsEmit(a.ctx, "wsl", string(line))
		}
		// stdout.Close()
	}()

	if err := cmd.Start(); err != nil {
		return err
	}
	return nil
}

func (a *App) WslCommand(command string) error {
	running, err := isWslRunning()
	if err != nil {
		return err
	}
	if !running {
		return errors.New("wsl not running")
	}
	_, err = stdin.Write([]byte(command + "\n"))
	if err != nil {
		return err
	}
	return nil
}

func (a *App) WslStop() error {
	running, err := isWslRunning()
	if err != nil {
		return err
	}
	if !running {
		return errors.New("wsl not running")
	}
	if cmd != nil {
		err = cmd.Process.Kill()
		cmd = nil
	}
	// stdin.Close()
	stdin = nil
	distro = nil
	if err != nil {
		return err
	}
	return nil
}

func (a *App) WslIsEnabled() error {
	ex, err := os.Executable()
	if err != nil {
		return err
	}
	exDir := filepath.Dir(ex)

	data, err := os.ReadFile(exDir + "/wsl.state")
	if err == nil {
		if strings.Contains(string(data), "Enabled") {
			return nil
		}
	}

	cmd := `-Command (Get-WindowsOptionalFeature -Online -FeatureName Microsoft-Windows-Subsystem-Linux).State | Out-File -Encoding utf8 -FilePath ` + exDir + "/wsl.state"
	_, err = su.ShellExecute(su.RUNAS, "powershell", cmd, exDir)
	if err != nil {
		return err
	}
	time.Sleep(2 * time.Second)
	data, err = os.ReadFile(exDir + "/wsl.state")
	if err != nil {
		return err
	}
	if strings.Contains(string(data), "Enabled") {
		return nil
	} else {
		return errors.New("wsl is not enabled")
	}
}

func (a *App) WslEnable(forceMode bool) error {
	cmd := `/online /enable-feature /featurename:Microsoft-Windows-Subsystem-Linux`
	_, err := su.ShellExecute(su.RUNAS, "dism", cmd, `C:\`)
	if err != nil {
		return err
	}
	if forceMode {
		os.WriteFile("./wsl.state", []byte("Enabled"), 0644)
	}
	return nil
}

func (a *App) WslInstallUbuntu() error {
	_, err := Cmd("ms-windows-store://pdp/?ProductId=9PN20MSR04DW")
	return err
}

31

backend-golang/wsl_not_windows.go

Normal file

@@ -0,0 +1,31 @@
//go:build darwin || linux

package backend_golang

import (
	"errors"
)

func (a *App) WslStart() error {
	return errors.New("wsl not supported")
}

func (a *App) WslCommand(command string) error {
	return errors.New("wsl not supported")
}

func (a *App) WslStop() error {
	return errors.New("wsl not supported")
}

func (a *App) WslIsEnabled() error {
	return errors.New("wsl not supported")
}

func (a *App) WslEnable(forceMode bool) error {
	return errors.New("wsl not supported")
}

func (a *App) WslInstallUbuntu() error {
	return errors.New("wsl not supported")
}

22

backend-python/convert_model.py

vendored

@@ -219,13 +219,17 @@ def get_args():
    return p.parse_args()

|

||||