clear

This commit is contained in:

3

node_modules/through2/.npmignore

generated

vendored

Normal file


@@ -0,0 +1,3 @@

|

||||

test

|

||||

.jshintrc

|

||||

.travis.yml

|

||||

336

node_modules/through2/LICENSE.html

generated

vendored

Normal file


@@ -0,0 +1,336 @@

|

||||

<!doctype html>

|

||||

<!-- Created with GFM2HTML: https://github.com/rvagg/gfm2html -->

|

||||

<html lang="en">

|

||||

<head>

|

||||

<meta charset="utf-8">

|

||||

<meta http-equiv="X-UA-Compatible" content="IE=edge">

|

||||

<title></title>

|

||||

<meta name="description" content="">

|

||||

<meta name="viewport" content="width=device-width, initial-scale=1">

|

||||

<meta name="created-with" content="https://github.com/rvagg/gfm2html">

|

||||

|

||||

<style type="text/css">

|

||||

/* most of normalize.css */

|

||||

article,aside,details,figcaption,figure,footer,header,hgroup,main,nav,section,summary{display:block;}[hidden],template{display:none;}html{font-family:sans-serif;/*1*/-ms-text-size-adjust:100%;/*2*/-webkit-text-size-adjust:100%;/*2*/}body{margin:0;}a{background:transparent;}a:focus{outline:thindotted;}a:active,a:hover{outline:0;}h1{font-size:2em;margin:0.67em0;}abbr[title]{border-bottom:1pxdotted;}b,strong{font-weight:bold;}dfn{font-style:italic;}hr{-moz-box-sizing:content-box;box-sizing:content-box;height:0;}mark{background:#ff0;color:#000;}code,kbd,pre,samp{font-family:monospace,serif;font-size:1em;}pre{white-space:pre-wrap;}q{quotes:"\201C""\201D""\2018""\2019";}small{font-size:80%;}sub,sup{font-size:75%;line-height:0;position:relative;vertical-align:baseline;}sup{top:-0.5em;}sub{bottom:-0.25em;}img{border:0;}svg:not(:root){overflow:hidden;}table{border-collapse:collapse;border-spacing:0;}

|

||||

|

||||

html {

|

||||

font: 14px 'Helvetica Neue', Helvetica, arial, freesans, clean, sans-serif;

|

||||

}

|

||||

|

||||

.container {

|

||||

line-height: 1.6;

|

||||

color: #333;

|

||||

background: #eee;

|

||||

border-radius: 3px;

|

||||

padding: 3px;

|

||||

width: 790px;

|

||||

margin: 10px auto;

|

||||

}

|

||||

|

||||

.body-content {

|

||||

background-color: #fff;

|

||||

border: 1px solid #CACACA;

|

||||

padding: 30px;

|

||||

}

|

||||

|

||||

.body-content > *:first-child {

|

||||

margin-top: 0 !important;

|

||||

}

|

||||

|

||||

a, a:visited {

|

||||

color: #4183c4;

|

||||

text-decoration: none;

|

||||

}

|

||||

|

||||

a:hover {

|

||||

text-decoration: underline;

|

||||

}

|

||||

|

||||

p, blockquote, ul, ol, dl, table, pre {

|

||||

margin: 15px 0;

|

||||

}

|

||||

|

||||

.markdown-body h1

|

||||

, .markdown-body h2

|

||||

, .markdown-body h3

|

||||

, .markdown-body h4

|

||||

, .markdown-body h5

|

||||

, .markdown-body h6 {

|

||||

margin: 20px 0 10px;

|

||||

padding: 0;

|

||||

font-weight: bold;

|

||||

}

|

||||

|

||||

h1 {

|

||||

font-size: 2.5em;

|

||||

color: #000;

|

||||

border-bottom: 1px solid #ddd;

|

||||

}

|

||||

|

||||

h2 {

|

||||

font-size: 2em;

|

||||

border-bottom: 1px solid #eee;

|

||||

color: #000;

|

||||

}

|

||||

|

||||

img {

|

||||

max-width: 100%;

|

||||

}

|

||||

|

||||

hr {

|

||||

background: transparent url("/img/hr.png") repeat-x 0 0;

|

||||

border: 0 none;

|

||||

color: #ccc;

|

||||

height: 4px;

|

||||

padding: 0;

|

||||

}

|

||||

|

||||

table {

|

||||

border-collapse: collapse;

|

||||

border-spacing: 0;

|

||||

}

|

||||

|

||||

tr:nth-child(2n) {

|

||||

background-color: #f8f8f8;

|

||||

}

|

||||

|

||||

.markdown-body tr {

|

||||

border-top: 1px solid #ccc;

|

||||

background-color: #fff;

|

||||

}

|

||||

|

||||

td, th {

|

||||

border: 1px solid #ccc;

|

||||

padding: 6px 13px;

|

||||

}

|

||||

|

||||

th {

|

||||

font-weight: bold;

|

||||

}

|

||||

|

||||

blockquote {

|

||||

border-left: 4px solid #ddd;

|

||||

padding: 0 15px;

|

||||

color: #777;

|

||||

}

|

||||

|

||||

blockquote > :last-child, blockquote > :first-child {

|

||||

margin-bottom: 0px;

|

||||

}

|

||||

|

||||

pre, code {

|

||||

font-size: 13px;

|

||||

font-family: 'UbuntuMono', monospace;

|

||||

white-space: nowrap;

|

||||

margin: 0 2px;

|

||||

padding: 0px 5px;

|

||||

border: 1px solid #eaeaea;

|

||||

background-color: #f8f8f8;

|

||||

border-radius: 3px;

|

||||

}

|

||||

|

||||

pre > code {

|

||||

white-space: pre;

|

||||

}

|

||||

|

||||

pre {

|

||||

overflow-x: auto;

|

||||

white-space: pre;

|

||||

padding: 10px;

|

||||

line-height: 150%;

|

||||

background-color: #f8f8f8;

|

||||

border-color: #ccc;

|

||||

}

|

||||

|

||||

pre code, pre tt {

|

||||

margin: 0;

|

||||

padding: 0;

|

||||

border: 0;

|

||||

background-color: transparent;

|

||||

border: none;

|

||||

}

|

||||

|

||||

.highlight .c

|

||||

, .highlight .cm

|

||||

, .highlight .cp

|

||||

, .highlight .c1 {

|

||||

color:#999988;

|

||||

font-style:italic;

|

||||

}

|

||||

|

||||

.highlight .err {

|

||||

color:#a61717;

|

||||

background-color:#e3d2d2

|

||||

}

|

||||

|

||||

.highlight .o

|

||||

, .highlight .gs

|

||||

, .highlight .kc

|

||||

, .highlight .kd

|

||||

, .highlight .kn

|

||||

, .highlight .kp

|

||||

, .highlight .kr {

|

||||

font-weight:bold

|

||||

}

|

||||

|

||||

.highlight .cs {

|

||||

color:#999999;

|

||||

font-weight:bold;

|

||||

font-style:italic

|

||||

}

|

||||

|

||||

.highlight .gd {

|

||||

color:#000000;

|

||||

background-color:#ffdddd

|

||||

}

|

||||

|

||||

.highlight .gd .x {

|

||||

color:#000000;

|

||||

background-color:#ffaaaa

|

||||

}

|

||||

|

||||

.highlight .ge {

|

||||

font-style:italic

|

||||

}

|

||||

|

||||

.highlight .gr

|

||||

, .highlight .gt {

|

||||

color:#aa0000

|

||||

}

|

||||

|

||||

.highlight .gh

|

||||

, .highlight .bp {

|

||||

color:#999999

|

||||

}

|

||||

|

||||

.highlight .gi {

|

||||

color:#000000;

|

||||

background-color:#ddffdd

|

||||

}

|

||||

|

||||

.highlight .gi .x {

|

||||

color:#000000;

|

||||

background-color:#aaffaa

|

||||

}

|

||||

|

||||

.highlight .go {

|

||||

color:#888888

|

||||

}

|

||||

|

||||

.highlight .gp

|

||||

, .highlight .nn {

|

||||

color:#555555

|

||||

}

|

||||

|

||||

|

||||

.highlight .gu {

|

||||

color:#800080;

|

||||

font-weight:bold

|

||||

}

|

||||

|

||||

|

||||

.highlight .kt {

|

||||

color:#445588;

|

||||

font-weight:bold

|

||||

}

|

||||

|

||||

.highlight .m

|

||||

, .highlight .mf

|

||||

, .highlight .mh

|

||||

, .highlight .mi

|

||||

, .highlight .mo

|

||||

, .highlight .il {

|

||||

color:#009999

|

||||

}

|

||||

|

||||

.highlight .s

|

||||

, .highlight .sb

|

||||

, .highlight .sc

|

||||

, .highlight .sd

|

||||

, .highlight .s2

|

||||

, .highlight .se

|

||||

, .highlight .sh

|

||||

, .highlight .si

|

||||

, .highlight .sx

|

||||

, .highlight .s1 {

|

||||

color:#d14

|

||||

}

|

||||

|

||||

.highlight .n {

|

||||

color:#333333

|

||||

}

|

||||

|

||||

.highlight .na

|

||||

, .highlight .no

|

||||

, .highlight .nv

|

||||

, .highlight .vc

|

||||

, .highlight .vg

|

||||

, .highlight .vi

|

||||

, .highlight .nb {

|

||||

color:#0086B3

|

||||

}

|

||||

|

||||

.highlight .nc {

|

||||

color:#445588;

|

||||

font-weight:bold

|

||||

}

|

||||

|

||||

.highlight .ni {

|

||||

color:#800080

|

||||

}

|

||||

|

||||

.highlight .ne

|

||||

, .highlight .nf {

|

||||

color:#990000;

|

||||

font-weight:bold

|

||||

}

|

||||

|

||||

.highlight .nt {

|

||||

color:#000080

|

||||

}

|

||||

|

||||

.highlight .ow {

|

||||

font-weight:bold

|

||||

}

|

||||

|

||||

.highlight .w {

|

||||

color:#bbbbbb

|

||||

}

|

||||

|

||||

.highlight .sr {

|

||||

color:#009926

|

||||

}

|

||||

|

||||

.highlight .ss {

|

||||

color:#990073

|

||||

}

|

||||

|

||||

.highlight .gc {

|

||||

color:#999;

|

||||

background-color:#EAF2F5

|

||||

}

|

||||

|

||||

@media print {

|

||||

.container {

|

||||

background: transparent;

|

||||

border-radius: 0;

|

||||

padding: 0;

|

||||

}

|

||||

|

||||

.body-content {

|

||||

border: none;

|

||||

}

|

||||

}

|

||||

</style>

|

||||

</head>

|

||||

<body>

|

||||

<div class="container">

|

||||

<div class="body-content"><h1 id="the-mit-license-mit-">The MIT License (MIT)</h1>

|

||||

<p><strong>Copyright (c) 2016 Rod Vagg (the "Original Author") and additional contributors</strong></p>

|

||||

<p>Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:</p>

|

||||

<p>The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.</p>

|

||||

<p>THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.</p>

|

||||

</div>

|

||||

</div>

|

||||

</body>

|

||||

</html>

|

||||

9

node_modules/through2/LICENSE.md

generated

vendored

Normal file


@@ -0,0 +1,9 @@

|

||||

# The MIT License (MIT)

|

||||

|

||||

**Copyright (c) 2016 Rod Vagg (the "Original Author") and additional contributors**

|

||||

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

|

||||

|

||||

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

|

||||

|

||||

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

|

||||

136

node_modules/through2/README.md

generated

vendored

Normal file


@@ -0,0 +1,136 @@

|

||||

# through2

|

||||

|

||||

[](https://nodei.co/npm/through2/)

|

||||

|

||||

**A tiny wrapper around Node streams.Transform (Streams2) to avoid explicit subclassing noise**

|

||||

|

||||

Inspired by [Dominic Tarr](https://github.com/dominictarr)'s [through](https://github.com/dominictarr/through) in that it's so much easier to make a stream out of a function than it is to set up the prototype chain properly: `through(function (chunk) { ... })`.
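For contrast, here is roughly what the explicit subclassing looks like when written against Node's core `stream.Transform` directly. This is only a sketch of the boilerplate a function-based wrapper removes; the names `swapAToZ` and `SwapAToZ` are illustrative and do not come from this module.

```js
const { Transform } = require('stream')

// Pure helper: replace every 'a' (byte 97) with 'z' (byte 122), in place.
function swapAToZ (chunk) {
  for (let i = 0; i < chunk.length; i++) {
    if (chunk[i] === 97) chunk[i] = 122
  }
  return chunk
}

// The hand-written subclass: one class per transform, plus the _transform hook.
class SwapAToZ extends Transform {
  _transform (chunk, encoding, callback) {
    // callback(err, data) both emits the data and signals completion
    callback(null, swapAToZ(chunk))
  }
}
```

An instance would be used like any other stream, for example `source.pipe(new SwapAToZ()).pipe(destination)`.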

|

||||

|

||||

Note: As of 2.x.x, this module uses **Streams3** instead of Streams2. To continue using a Streams2 version, use `npm install through2@0` to fetch the latest 0.x.x release. For more information about Streams2 vs Streams3 and recommendations, see the article **[Why I don't use Node's core 'stream' module](http://r.va.gg/2014/06/why-i-dont-use-nodes-core-stream-module.html)**.

|

||||

|

||||

```js
fs.createReadStream('ex.txt')
  .pipe(through2(function (chunk, enc, callback) {
    for (var i = 0; i < chunk.length; i++)
      if (chunk[i] == 97)
        chunk[i] = 122 // swap 'a' for 'z'

    this.push(chunk)

    callback()
  }))
  .pipe(fs.createWriteStream('out.txt'))
  .on('finish', function () {
    doSomethingSpecial()
  })
```

|

||||

|

||||

Or object streams:

|

||||

|

||||

```js
var all = []

fs.createReadStream('data.csv')
  .pipe(csv2())
  .pipe(through2.obj(function (chunk, enc, callback) {
    var data = {
        name : chunk[0]
      , address : chunk[3]
      , phone : chunk[10]
    }
    this.push(data)

    callback()
  }))
  .on('data', function (data) {
    all.push(data)
  })
  .on('end', function () {
    doSomethingSpecial(all)
  })
```

|

||||

|

||||

Note that `through2.obj(fn)` is a convenience wrapper around `through2({ objectMode: true }, fn)`.
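The relationship between the two call forms can be sketched with Node's core `stream.Transform`. The helpers `throughSketch` and `throughObjSketch` below are hypothetical stand-ins written for illustration; they are not this module's source and may differ from it in details such as default options.

```js
const { Transform } = require('stream')

// Hypothetical helper: build a Transform from a plain function.
// options is optional; without a transform function the stream passes data through.
function throughSketch (options, transform) {
  if (typeof options === 'function') {
    transform = options
    options = {}
  }
  const stream = new Transform(options)
  stream._transform = transform || function (chunk, enc, callback) {
    callback(null, chunk)
  }
  return stream
}

// The object-mode variant only pre-fills the options argument.
function throughObjSketch (transform) {
  return throughSketch({ objectMode: true }, transform)
}
```

With this shape, `throughObjSketch(fn)` and `throughSketch({ objectMode: true }, fn)` produce streams configured the same way, which is the convenience the note above describes.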

|

||||

|

||||

## API

|

||||

|

||||

<b><code>through2([ options, ] [ transformFunction ] [, flushFunction ])</code></b>

|

||||

|

||||

Consult the **[stream.Transform](http://nodejs.org/docs/latest/api/stream.html#stream_class_stream_transform)** documentation for the exact rules of the `transformFunction` (i.e. `this._transform`) and the optional `flushFunction` (i.e. `this._flush`).

|

||||

|

||||

### options

|

||||

|

||||

The options argument is optional and is passed straight through to `stream.Transform`. So you can use `objectMode:true` if you are processing non-binary streams (or just use `through2.obj()`).

|

||||

|

||||

The `options` argument is first, unlike standard convention, because if I'm passing in an anonymous function then I'd prefer for the options argument to not get lost at the end of the call:

|

||||

|

||||

```js
fs.createReadStream('/tmp/important.dat')
  .pipe(through2({ objectMode: true, allowHalfOpen: false },
    function (chunk, enc, cb) {
      cb(null, 'wut?') // note we can use the second argument on the callback
                       // to provide data as an alternative to this.push('wut?')
    }
  ))
  .pipe(fs.createWriteStream('/tmp/wut.txt'))
```

|

||||

|

||||

### transformFunction

|

||||

|

||||

The `transformFunction` must have the following signature: `function (chunk, encoding, callback) {}`. A minimal implementation should call the `callback` function to indicate that the transformation is done, even if that transformation means discarding the chunk.

|

||||

|

||||

To queue a new chunk, call `this.push(chunk)`. This can be called as many times as required before the `callback()` if you have multiple pieces to send on.

|

||||

|

||||

Alternatively, you may use `callback(err, chunk)` as shorthand for emitting a single chunk or an error.

|

||||

|

||||

If you **do not provide a `transformFunction`** then you will get a simple pass-through stream.
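The rules above can be illustrated with Node's core `stream.Transform`, whose `transform` option follows the same `(chunk, encoding, callback)` signature. This is a minimal sketch; the factory names `createWordSplitter` and `createUpperCaser` are made up for the example.

```js
const { Transform } = require('stream')

// Emits one output chunk per whitespace-separated word.
// Input that contains no words is discarded, but callback() is still called.
function createWordSplitter () {
  return new Transform({
    readableObjectMode: true, // each pushed word stays a separate chunk
    transform (chunk, encoding, callback) {
      const words = chunk.toString().split(/\s+/).filter(Boolean)
      for (const word of words) this.push(word) // push() as often as needed
      callback() // signal that this input chunk is fully handled
    }
  })
}

// Shorthand form: callback(err, chunk) emits exactly one chunk (or an error).
function createUpperCaser () {
  return new Transform({
    transform (chunk, encoding, callback) {
      callback(null, chunk.toString().toUpperCase())
    }
  })
}
```

The splitter shows the one-to-many case with `this.push()`, while the upper-caser shows the one-to-one case where the callback shorthand is enough.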

|

||||

|

||||

### flushFunction

|

||||

|

||||

The optional `flushFunction`, provided as the last argument (2nd or 3rd, depending on whether you've supplied options), is called just prior to the stream ending. It can be used to finish up any processing that may be in progress.

|

||||

|

||||

```js
fs.createReadStream('/tmp/important.dat')
  .pipe(through2(
    function (chunk, enc, cb) { cb(null, chunk) }, // transform is a noop
    function (cb) { // flush function
      this.push('tacking on an extra buffer to the end');
      cb();
    }
  ))
  .pipe(fs.createWriteStream('/tmp/wut.txt'));
```
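A flush function is most useful when the transform accumulates state that can only be emitted once the input is complete. The following sketch uses the `flush` option of Node's core `stream.Transform`; the factory name `createLineCounter` and the summary text are assumptions made for the example.

```js
const { Transform } = require('stream')

// Passes data through unchanged and appends a newline count when the input ends.
function createLineCounter () {
  let lines = 0
  return new Transform({
    transform (chunk, encoding, callback) {
      // chunk is a Buffer here; byte value 10 is '\n'
      for (const byte of chunk) if (byte === 10) lines++
      callback(null, chunk)
    },
    flush (callback) {
      // Called once, after the last chunk has been transformed
      this.push('\n-- ' + lines + ' lines --\n')
      callback()
    }
  })
}
```

Piping a file through `createLineCounter()` would leave the content untouched and add the summary line as the final chunk.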

|

||||

|

||||

<b><code>through2.ctor([ options, ] transformFunction[, flushFunction ])</code></b>

|

||||

|

||||

Instead of returning a `stream.Transform` instance, `through2.ctor()` returns a **constructor** for a custom Transform. This is useful when you want to use the same transform logic in multiple instances.

|

||||

|

||||

```js
var FToC = through2.ctor({objectMode: true}, function (record, encoding, callback) {
  if (record.temp != null && record.unit == "F") {
    record.temp = ( ( record.temp - 32 ) * 5 ) / 9
    record.unit = "C"
  }
  this.push(record)
  callback()
})

// Create instances of FToC like so:
var converter = new FToC()
// Or:
var converter = FToC()
// Or specify/override options when you instantiate, if you prefer:
var converter = FToC({objectMode: true})
```
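One way such a reusable constructor could be produced is sketched below with Node's core `stream.Transform`. The function `ctorSketch` is hypothetical and is not this module's implementation; it only shows how a constructor can work with or without `new` and merge per-instance options over shared defaults.

```js
const { Transform } = require('stream')

// Hypothetical factory: returns a constructor for Transform streams that
// all share the same transform function and default options.
function ctorSketch (defaults, transform) {
  function Through (override) {
    // Allow calling without `new`
    if (!(this instanceof Through)) return new Through(override)
    // Per-instance options win over the shared defaults
    Transform.call(this, Object.assign({}, defaults, override))
  }
  Object.setPrototypeOf(Through.prototype, Transform.prototype)
  Through.prototype._transform = transform
  return Through
}

const FToCSketch = ctorSketch({ objectMode: true }, function (record, encoding, callback) {
  if (record.temp != null && record.unit === 'F') {
    record.temp = ((record.temp - 32) * 5) / 9
    record.unit = 'C'
  }
  callback(null, record)
})
```

Each call to `FToCSketch()` or `new FToCSketch()` yields an independent stream, so the same conversion logic can sit in several pipelines at once.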

|

||||

|

||||

## See Also

|

||||

|

||||

- [through2-map](https://github.com/brycebaril/through2-map) - Array.prototype.map analog for streams.

|

||||

- [through2-filter](https://github.com/brycebaril/through2-filter) - Array.prototype.filter analog for streams.

|

||||

- [through2-reduce](https://github.com/brycebaril/through2-reduce) - Array.prototype.reduce analog for streams.

|

||||

- [through2-spy](https://github.com/brycebaril/through2-spy) - Wrapper for simple stream.PassThrough spies.

|

||||

- the [mississippi stream utility collection](https://github.com/maxogden/mississippi) includes `through2` as well as many more useful stream modules similar to this one

|

||||

|

||||

## License

|

||||

|

||||

**through2** is Copyright (c) 2013 Rod Vagg [@rvagg](https://twitter.com/rvagg) and licensed under the MIT license. All rights not explicitly granted in the MIT license are reserved. See the included LICENSE file for more details.

|

||||

1

node_modules/through2/node_modules/isarray/.npmignore

generated

vendored

Normal file


@@ -0,0 +1 @@

|

||||

node_modules

|

||||

4

node_modules/through2/node_modules/isarray/.travis.yml

generated

vendored

Normal file


@@ -0,0 +1,4 @@

|

||||

language: node_js

|

||||

node_js:

|

||||

- "0.8"

|

||||

- "0.10"

|

||||

6

node_modules/through2/node_modules/isarray/Makefile

generated

vendored

Normal file


@@ -0,0 +1,6 @@

|

||||

|

||||

test:

|

||||

@node_modules/.bin/tape test.js

|

||||

|

||||

.PHONY: test

|

||||

|

||||

60

node_modules/through2/node_modules/isarray/README.md

generated

vendored

Normal file


@@ -0,0 +1,60 @@

|

||||

|

||||

# isarray

|

||||

|

||||

`Array#isArray` for older browsers.

|

||||

|

||||

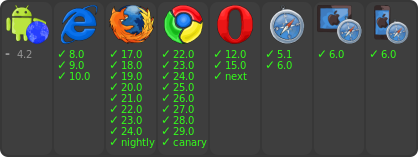

[](http://travis-ci.org/juliangruber/isarray)

|

||||

[](https://www.npmjs.org/package/isarray)

|

||||

|

||||

[](https://ci.testling.com/juliangruber/isarray)

|

||||

|

||||

## Usage

|

||||

|

||||

```js
var isArray = require('isarray');

console.log(isArray([])); // => true
console.log(isArray({})); // => false
```
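On engines that lack a native `Array.isArray`, the check can fall back on `Object.prototype.toString`, which reports `[object Array]` for real arrays only. The sketch below isolates that fallback so its behaviour can be seen on its own; the name `isArrayFallback` is illustrative.

```js
const toString = Object.prototype.toString

// Fallback check: true only for genuine arrays, not for array-like objects.
function isArrayFallback (value) {
  return toString.call(value) === '[object Array]'
}

// Array-like values that are not arrays
const arrayLike = { 0: 'a', length: 1 }
const argumentsObject = (function () { return arguments })(1, 2)

console.log(isArrayFallback([1, 2]))          // true
console.log(isArrayFallback(arrayLike))       // false
console.log(isArrayFallback(argumentsObject)) // false
```

Checking the internal class tag rather than using `instanceof Array` also keeps the result correct for arrays created in another frame or realm.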

|

||||

|

||||

## Installation

|

||||

|

||||

With [npm](http://npmjs.org) do

|

||||

|

||||

```bash

|

||||

$ npm install isarray

|

||||

```

|

||||

|

||||

Then bundle for the browser with

|

||||

[browserify](https://github.com/substack/browserify).

|

||||

|

||||

With [component](http://component.io) do

|

||||

|

||||

```bash

|

||||

$ component install juliangruber/isarray

|

||||

```

|

||||

|

||||

## License

|

||||

|

||||

(MIT)

|

||||

|

||||

Copyright (c) 2013 Julian Gruber <julian@juliangruber.com>

|

||||

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy of

|

||||

this software and associated documentation files (the "Software"), to deal in

|

||||

the Software without restriction, including without limitation the rights to

|

||||

use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies

|

||||

of the Software, and to permit persons to whom the Software is furnished to do

|

||||

so, subject to the following conditions:

|

||||

|

||||

The above copyright notice and this permission notice shall be included in all

|

||||

copies or substantial portions of the Software.

|

||||

|

||||

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

||||

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

||||

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

||||

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

||||

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

||||

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

||||

SOFTWARE.

|

||||

19

node_modules/through2/node_modules/isarray/component.json

generated

vendored

Normal file


@@ -0,0 +1,19 @@

|

||||

{

|

||||

"name" : "isarray",

|

||||

"description" : "Array#isArray for older browsers",

|

||||

"version" : "0.0.1",

|

||||

"repository" : "juliangruber/isarray",

|

||||

"homepage": "https://github.com/juliangruber/isarray",

|

||||

"main" : "index.js",

|

||||

"scripts" : [

|

||||

"index.js"

|

||||

],

|

||||

"dependencies" : {},

|

||||

"keywords": ["browser","isarray","array"],

|

||||

"author": {

|

||||

"name": "Julian Gruber",

|

||||

"email": "mail@juliangruber.com",

|

||||

"url": "http://juliangruber.com"

|

||||

},

|

||||

"license": "MIT"

|

||||

}

|

||||

5

node_modules/through2/node_modules/isarray/index.js

generated

vendored

Normal file


@@ -0,0 +1,5 @@

|

||||

var toString = {}.toString;

|

||||

|

||||

module.exports = Array.isArray || function (arr) {

|

||||

return toString.call(arr) == '[object Array]';

|

||||

};

|

||||

104

node_modules/through2/node_modules/isarray/package.json

generated

vendored

Normal file


@@ -0,0 +1,104 @@

|

||||

{

|

||||

"_args": [

|

||||

[

|

||||

{

|

||||

"raw": "isarray@~1.0.0",

|

||||

"scope": null,

|

||||

"escapedName": "isarray",

|

||||

"name": "isarray",

|

||||

"rawSpec": "~1.0.0",

|

||||

"spec": ">=1.0.0 <1.1.0",

|

||||

"type": "range"

|

||||

},

|

||||

"D:\\web\\layui\\res\\layui\\node_modules\\through2\\node_modules\\readable-stream"

|

||||

]

|

||||

],

|

||||

"_from": "isarray@>=1.0.0 <1.1.0",

|

||||

"_id": "isarray@1.0.0",

|

||||

"_inCache": true,

|

||||

"_location": "/through2/isarray",

|

||||

"_nodeVersion": "5.1.0",

|

||||

"_npmUser": {

|

||||

"name": "juliangruber",

|

||||

"email": "julian@juliangruber.com"

|

||||

},

|

||||

"_npmVersion": "3.3.12",

|

||||

"_phantomChildren": {},

|

||||

"_requested": {

|

||||

"raw": "isarray@~1.0.0",

|

||||

"scope": null,

|

||||

"escapedName": "isarray",

|

||||

"name": "isarray",

|

||||

"rawSpec": "~1.0.0",

|

||||

"spec": ">=1.0.0 <1.1.0",

|

||||

"type": "range"

|

||||

},

|

||||

"_requiredBy": [

|

||||

"/through2/readable-stream"

|

||||

],

|

||||

"_resolved": "https://registry.npmjs.org/isarray/-/isarray-1.0.0.tgz",

|

||||

"_shasum": "bb935d48582cba168c06834957a54a3e07124f11",

|

||||

"_shrinkwrap": null,

|

||||

"_spec": "isarray@~1.0.0",

|

||||

"_where": "D:\\web\\layui\\res\\layui\\node_modules\\through2\\node_modules\\readable-stream",

|

||||

"author": {

|

||||

"name": "Julian Gruber",

|

||||

"email": "mail@juliangruber.com",

|

||||

"url": "http://juliangruber.com"

|

||||

},

|

||||

"bugs": {

|

||||

"url": "https://github.com/juliangruber/isarray/issues"

|

||||

},

|

||||

"dependencies": {},

|

||||

"description": "Array#isArray for older browsers",

|

||||

"devDependencies": {

|

||||

"tape": "~2.13.4"

|

||||

},

|

||||

"directories": {},

|

||||

"dist": {

|

||||

"shasum": "bb935d48582cba168c06834957a54a3e07124f11",

|

||||

"tarball": "https://registry.npmjs.org/isarray/-/isarray-1.0.0.tgz"

|

||||

},

|

||||

"gitHead": "2a23a281f369e9ae06394c0fb4d2381355a6ba33",

|

||||

"homepage": "https://github.com/juliangruber/isarray",

|

||||

"keywords": [

|

||||

"browser",

|

||||

"isarray",

|

||||

"array"

|

||||

],

|

||||

"license": "MIT",

|

||||

"main": "index.js",

|

||||

"maintainers": [

|

||||

{

|

||||

"name": "juliangruber",

|

||||

"email": "julian@juliangruber.com"

|

||||

}

|

||||

],

|

||||

"name": "isarray",

|

||||

"optionalDependencies": {},

|

||||

"readme": "ERROR: No README data found!",

|

||||

"repository": {

|

||||

"type": "git",

|

||||

"url": "git://github.com/juliangruber/isarray.git"

|

||||

},

|

||||

"scripts": {

|

||||

"test": "tape test.js"

|

||||

},

|

||||

"testling": {

|

||||

"files": "test.js",

|

||||

"browsers": [

|

||||

"ie/8..latest",

|

||||

"firefox/17..latest",

|

||||

"firefox/nightly",

|

||||

"chrome/22..latest",

|

||||

"chrome/canary",

|

||||

"opera/12..latest",

|

||||

"opera/next",

|

||||

"safari/5.1..latest",

|

||||

"ipad/6.0..latest",

|

||||

"iphone/6.0..latest",

|

||||

"android-browser/4.2..latest"

|

||||

]

|

||||

},

|

||||

"version": "1.0.0"

|

||||

}

|

||||

20

node_modules/through2/node_modules/isarray/test.js

generated

vendored

Normal file


@@ -0,0 +1,20 @@

|

||||

var isArray = require('./');

|

||||

var test = require('tape');

|

||||

|

||||

test('is array', function(t){

|

||||

t.ok(isArray([]));

|

||||

t.notOk(isArray({}));

|

||||

t.notOk(isArray(null));

|

||||

t.notOk(isArray(false));

|

||||

|

||||

var obj = {};

|

||||

obj[0] = true;

|

||||

t.notOk(isArray(obj));

|

||||

|

||||

var arr = [];

|

||||

arr.foo = 'bar';

|

||||

t.ok(isArray(arr));

|

||||

|

||||

t.end();

|

||||

});

|

||||

|

||||

9

node_modules/through2/node_modules/readable-stream/.npmignore

generated

vendored

Normal file


@@ -0,0 +1,9 @@

|

||||

build/

|

||||

test/

|

||||

examples/

|

||||

fs.js

|

||||

zlib.js

|

||||

.zuul.yml

|

||||

.nyc_output

|

||||

coverage

|

||||

docs/

|

||||

49

node_modules/through2/node_modules/readable-stream/.travis.yml

generated

vendored

Normal file


@@ -0,0 +1,49 @@

|

||||

sudo: false

|

||||

language: node_js

|

||||

before_install:

|

||||

- npm install -g npm@2

|

||||

- npm install -g npm

|

||||

notifications:

|

||||

email: false

|

||||

matrix:

|

||||

fast_finish: true

|

||||

include:

|

||||

- node_js: '0.8'

|

||||

env: TASK=test

|

||||

- node_js: '0.10'

|

||||

env: TASK=test

|

||||

- node_js: '0.11'

|

||||

env: TASK=test

|

||||

- node_js: '0.12'

|

||||

env: TASK=test

|

||||

- node_js: 1

|

||||

env: TASK=test

|

||||

- node_js: 2

|

||||

env: TASK=test

|

||||

- node_js: 3

|

||||

env: TASK=test

|

||||

- node_js: 4

|

||||

env: TASK=test

|

||||

- node_js: 5

|

||||

env: TASK=test

|

||||

- node_js: 6

|

||||

env: TASK=test

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=android BROWSER_VERSION="4.0..latest"

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=ie BROWSER_VERSION="9..latest"

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=opera BROWSER_VERSION="11..latest"

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=chrome BROWSER_VERSION="-3..latest"

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=firefox BROWSER_VERSION="-3..latest"

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=safari BROWSER_VERSION="5..latest"

|

||||

- node_js: 5

|

||||

env: TASK=browser BROWSER_NAME=microsoftedge BROWSER_VERSION=latest

|

||||

script: "npm run $TASK"

|

||||

env:

|

||||

global:

|

||||

- secure: rE2Vvo7vnjabYNULNyLFxOyt98BoJexDqsiOnfiD6kLYYsiQGfr/sbZkPMOFm9qfQG7pjqx+zZWZjGSswhTt+626C0t/njXqug7Yps4c3dFblzGfreQHp7wNX5TFsvrxd6dAowVasMp61sJcRnB2w8cUzoe3RAYUDHyiHktwqMc=

|

||||

- secure: g9YINaKAdMatsJ28G9jCGbSaguXCyxSTy+pBO6Ch0Cf57ZLOTka3HqDj8p3nV28LUIHZ3ut5WO43CeYKwt4AUtLpBS3a0dndHdY6D83uY6b2qh5hXlrcbeQTq2cvw2y95F7hm4D1kwrgZ7ViqaKggRcEupAL69YbJnxeUDKWEdI=

|

||||

18

node_modules/through2/node_modules/readable-stream/LICENSE

generated

vendored

Normal file


@@ -0,0 +1,18 @@

|

||||

Copyright Joyent, Inc. and other Node contributors. All rights reserved.

|

||||

Permission is hereby granted, free of charge, to any person obtaining a copy

|

||||

of this software and associated documentation files (the "Software"), to

|

||||

deal in the Software without restriction, including without limitation the

|

||||

rights to use, copy, modify, merge, publish, distribute, sublicense, and/or

|

||||

sell copies of the Software, and to permit persons to whom the Software is

|

||||

furnished to do so, subject to the following conditions:

|

||||

|

||||

The above copyright notice and this permission notice shall be included in

|

||||

all copies or substantial portions of the Software.

|

||||

|

||||

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

||||

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

||||

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

||||

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

||||

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING

|

||||

FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS

|

||||

IN THE SOFTWARE.

|

||||

40

node_modules/through2/node_modules/readable-stream/README.md

generated

vendored

Normal file


@@ -0,0 +1,40 @@

# readable-stream

***Node-core v7.0.0 streams for userland*** [](https://travis-ci.org/nodejs/readable-stream)

[](https://nodei.co/npm/readable-stream/)

[](https://saucelabs.com/u/readable-stream)

```bash
npm install --save readable-stream
```

***Node-core streams for userland***

This package is a mirror of the Streams2 and Streams3 implementations in
Node-core.

Full documentation may be found on the [Node.js website](https://nodejs.org/dist/v7.1.0/docs/api/).

If you want to guarantee a stable streams base, regardless of what version of
Node you, or the users of your libraries are using, use **readable-stream** *only* and avoid the *"stream"* module in Node-core, for background see [this blogpost](http://r.va.gg/2014/06/why-i-dont-use-nodes-core-stream-module.html).

As of version 2.0.0 **readable-stream** uses semantic versioning.

# Streams WG Team Members

* **Chris Dickinson** ([@chrisdickinson](https://github.com/chrisdickinson)) <christopher.s.dickinson@gmail.com>
  - Release GPG key: 9554F04D7259F04124DE6B476D5A82AC7E37093B
* **Calvin Metcalf** ([@calvinmetcalf](https://github.com/calvinmetcalf)) <calvin.metcalf@gmail.com>
  - Release GPG key: F3EF5F62A87FC27A22E643F714CE4FF5015AA242
* **Rod Vagg** ([@rvagg](https://github.com/rvagg)) <rod@vagg.org>
  - Release GPG key: DD8F2338BAE7501E3DD5AC78C273792F7D83545D
* **Sam Newman** ([@sonewman](https://github.com/sonewman)) <newmansam@outlook.com>
* **Mathias Buus** ([@mafintosh](https://github.com/mafintosh)) <mathiasbuus@gmail.com>
* **Domenic Denicola** ([@domenic](https://github.com/domenic)) <d@domenic.me>
* **Matteo Collina** ([@mcollina](https://github.com/mcollina)) <matteo.collina@gmail.com>
  - Release GPG key: 3ABC01543F22DD2239285CDD818674489FBC127E

60

node_modules/through2/node_modules/readable-stream/doc/wg-meetings/2015-01-30.md

generated

vendored

Normal file

@@ -0,0 +1,60 @@

# streams WG Meeting 2015-01-30

## Links

* **Google Hangouts Video**: http://www.youtube.com/watch?v=I9nDOSGfwZg
* **GitHub Issue**: https://github.com/iojs/readable-stream/issues/106
* **Original Minutes Google Doc**: https://docs.google.com/document/d/17aTgLnjMXIrfjgNaTUnHQO7m3xgzHR2VXBTmi03Qii4/

## Agenda

Extracted from https://github.com/iojs/readable-stream/labels/wg-agenda prior to meeting.

* adopt a charter [#105](https://github.com/iojs/readable-stream/issues/105)
* release and versioning strategy [#101](https://github.com/iojs/readable-stream/issues/101)
* simpler stream creation [#102](https://github.com/iojs/readable-stream/issues/102)
* proposal: deprecate implicit flowing of streams [#99](https://github.com/iojs/readable-stream/issues/99)

## Minutes

### adopt a charter

* group: +1's all around

### What versioning scheme should be adopted?
* group: +1’s 3.0.0
* domenic+group: pulling in patches from other sources where appropriate
* mikeal: version independently, suggesting versions for io.js
* mikeal+domenic: work with TC to notify in advance of changes
simpler stream creation

### streamline creation of streams
* sam: streamline creation of streams
* domenic: nice simple solution posted
  but, we lose the opportunity to change the model
  may not be backwards incompatible (double check keys)

**action item:** domenic will check

### remove implicit flowing of streams on(‘data’)
* add isFlowing / isPaused
* mikeal: worrying that we’re documenting polyfill methods – confuses users
* domenic: more reflective API is probably good, with warning labels for users
* new section for mad scientists (reflective stream access)
* calvin: name the “third state”
* mikeal: maybe borrow the name from whatwg?
* domenic: we’re missing the “third state”
* consensus: kind of difficult to name the third state
* mikeal: figure out differences in states / compat
* mathias: always flow on data – eliminates third state
* explore what it breaks

**action items:**
* ask isaac for ability to list packages by what public io.js APIs they use (esp. Stream)
* ask rod/build for infrastructure
* **chris**: explore the “flow on data” approach
* add isPaused/isFlowing
* add new docs section
* move isPaused to that section

1

node_modules/through2/node_modules/readable-stream/duplex.js

generated

vendored

Normal file

@@ -0,0 +1 @@

module.exports = require("./lib/_stream_duplex.js")

75

node_modules/through2/node_modules/readable-stream/lib/_stream_duplex.js

generated

vendored

Normal file

@@ -0,0 +1,75 @@

// a duplex stream is just a stream that is both readable and writable.
// Since JS doesn't have multiple prototypal inheritance, this class
// prototypally inherits from Readable, and then parasitically from
// Writable.

'use strict';

/*<replacement>*/

var objectKeys = Object.keys || function (obj) {
  var keys = [];
  for (var key in obj) {
    keys.push(key);
  }return keys;
};
/*</replacement>*/

module.exports = Duplex;

/*<replacement>*/
var processNextTick = require('process-nextick-args');
/*</replacement>*/

/*<replacement>*/
var util = require('core-util-is');
util.inherits = require('inherits');
/*</replacement>*/

var Readable = require('./_stream_readable');
var Writable = require('./_stream_writable');

util.inherits(Duplex, Readable);

var keys = objectKeys(Writable.prototype);
for (var v = 0; v < keys.length; v++) {
  var method = keys[v];
  if (!Duplex.prototype[method]) Duplex.prototype[method] = Writable.prototype[method];
}

function Duplex(options) {
  if (!(this instanceof Duplex)) return new Duplex(options);

  Readable.call(this, options);
  Writable.call(this, options);

  if (options && options.readable === false) this.readable = false;

  if (options && options.writable === false) this.writable = false;

  this.allowHalfOpen = true;
  if (options && options.allowHalfOpen === false) this.allowHalfOpen = false;

  this.once('end', onend);
}

// the no-half-open enforcer
function onend() {
  // if we allow half-open state, or if the writable side ended,
  // then we're ok.
  if (this.allowHalfOpen || this._writableState.ended) return;

  // no more data can be written.
  // But allow more writes to happen in this tick.
  processNextTick(onEndNT, this);
}

function onEndNT(self) {
  self.end();
}

function forEach(xs, f) {
  for (var i = 0, l = xs.length; i < l; i++) {
    f(xs[i], i);
  }
}

26

node_modules/through2/node_modules/readable-stream/lib/_stream_passthrough.js

generated

vendored

Normal file

@@ -0,0 +1,26 @@

// a passthrough stream.
// basically just the most minimal sort of Transform stream.
// Every written chunk gets output as-is.

'use strict';

module.exports = PassThrough;

var Transform = require('./_stream_transform');

/*<replacement>*/
var util = require('core-util-is');
util.inherits = require('inherits');
/*</replacement>*/

util.inherits(PassThrough, Transform);

function PassThrough(options) {
  if (!(this instanceof PassThrough)) return new PassThrough(options);

  Transform.call(this, options);
}

PassThrough.prototype._transform = function (chunk, encoding, cb) {
  cb(null, chunk);
};

941

node_modules/through2/node_modules/readable-stream/lib/_stream_readable.js

generated

vendored

Normal file

@@ -0,0 +1,941 @@

'use strict';

module.exports = Readable;

/*<replacement>*/
var processNextTick = require('process-nextick-args');
/*</replacement>*/

/*<replacement>*/
var isArray = require('isarray');
/*</replacement>*/

/*<replacement>*/
var Duplex;
/*</replacement>*/

Readable.ReadableState = ReadableState;

/*<replacement>*/
var EE = require('events').EventEmitter;

var EElistenerCount = function (emitter, type) {
  return emitter.listeners(type).length;
};
/*</replacement>*/

/*<replacement>*/
var Stream;
(function () {
  try {
    Stream = require('st' + 'ream');
  } catch (_) {} finally {
    if (!Stream) Stream = require('events').EventEmitter;
  }
})();
/*</replacement>*/

var Buffer = require('buffer').Buffer;
/*<replacement>*/
var bufferShim = require('buffer-shims');
/*</replacement>*/

/*<replacement>*/
var util = require('core-util-is');
util.inherits = require('inherits');
/*</replacement>*/

/*<replacement>*/
var debugUtil = require('util');
var debug = void 0;
if (debugUtil && debugUtil.debuglog) {
  debug = debugUtil.debuglog('stream');
} else {
  debug = function () {};
}
/*</replacement>*/

var BufferList = require('./internal/streams/BufferList');
var StringDecoder;

util.inherits(Readable, Stream);

function prependListener(emitter, event, fn) {
  // Sadly this is not cacheable as some libraries bundle their own
  // event emitter implementation with them.
  if (typeof emitter.prependListener === 'function') {
    return emitter.prependListener(event, fn);
  } else {
    // This is a hack to make sure that our error handler is attached before any
    // userland ones. NEVER DO THIS. This is here only because this code needs
    // to continue to work with older versions of Node.js that do not include
    // the prependListener() method. The goal is to eventually remove this hack.
    if (!emitter._events || !emitter._events[event]) emitter.on(event, fn);else if (isArray(emitter._events[event])) emitter._events[event].unshift(fn);else emitter._events[event] = [fn, emitter._events[event]];
  }
}

function ReadableState(options, stream) {
  Duplex = Duplex || require('./_stream_duplex');

  options = options || {};

  // object stream flag. Used to make read(n) ignore n and to
  // make all the buffer merging and length checks go away
  this.objectMode = !!options.objectMode;

  if (stream instanceof Duplex) this.objectMode = this.objectMode || !!options.readableObjectMode;

  // the point at which it stops calling _read() to fill the buffer
  // Note: 0 is a valid value, means "don't call _read preemptively ever"
  var hwm = options.highWaterMark;
  var defaultHwm = this.objectMode ? 16 : 16 * 1024;
  this.highWaterMark = hwm || hwm === 0 ? hwm : defaultHwm;

  // cast to ints.
  this.highWaterMark = ~ ~this.highWaterMark;

  // A linked list is used to store data chunks instead of an array because the
  // linked list can remove elements from the beginning faster than
  // array.shift()
  this.buffer = new BufferList();
  this.length = 0;
  this.pipes = null;
  this.pipesCount = 0;
  this.flowing = null;
  this.ended = false;
  this.endEmitted = false;
  this.reading = false;

  // a flag to be able to tell if the onwrite cb is called immediately,
  // or on a later tick. We set this to true at first, because any
  // actions that shouldn't happen until "later" should generally also
  // not happen before the first write call.
  this.sync = true;

  // whenever we return null, then we set a flag to say
  // that we're awaiting a 'readable' event emission.
  this.needReadable = false;
  this.emittedReadable = false;
  this.readableListening = false;
  this.resumeScheduled = false;

  // Crypto is kind of old and crusty. Historically, its default string
  // encoding is 'binary' so we have to make this configurable.
  // Everything else in the universe uses 'utf8', though.
  this.defaultEncoding = options.defaultEncoding || 'utf8';

  // when piping, we only care about 'readable' events that happen
  // after read()ing all the bytes and not getting any pushback.
  this.ranOut = false;

  // the number of writers that are awaiting a drain event in .pipe()s
  this.awaitDrain = 0;

  // if true, a maybeReadMore has been scheduled
  this.readingMore = false;

  this.decoder = null;
  this.encoding = null;
  if (options.encoding) {
    if (!StringDecoder) StringDecoder = require('string_decoder/').StringDecoder;
    this.decoder = new StringDecoder(options.encoding);
    this.encoding = options.encoding;
  }
}

function Readable(options) {
  Duplex = Duplex || require('./_stream_duplex');

  if (!(this instanceof Readable)) return new Readable(options);

  this._readableState = new ReadableState(options, this);

  // legacy
  this.readable = true;

  if (options && typeof options.read === 'function') this._read = options.read;

  Stream.call(this);
}

// Manually shove something into the read() buffer.
// This returns true if the highWaterMark has not been hit yet,
// similar to how Writable.write() returns true if you should
// write() some more.
Readable.prototype.push = function (chunk, encoding) {
  var state = this._readableState;

  if (!state.objectMode && typeof chunk === 'string') {
    encoding = encoding || state.defaultEncoding;
    if (encoding !== state.encoding) {
      chunk = bufferShim.from(chunk, encoding);
      encoding = '';
    }
  }

  return readableAddChunk(this, state, chunk, encoding, false);
};

// Unshift should *always* be something directly out of read()
Readable.prototype.unshift = function (chunk) {
  var state = this._readableState;
  return readableAddChunk(this, state, chunk, '', true);
};

Readable.prototype.isPaused = function () {
  return this._readableState.flowing === false;
};

function readableAddChunk(stream, state, chunk, encoding, addToFront) {
  var er = chunkInvalid(state, chunk);
  if (er) {
    stream.emit('error', er);
  } else if (chunk === null) {
    state.reading = false;
    onEofChunk(stream, state);
  } else if (state.objectMode || chunk && chunk.length > 0) {
    if (state.ended && !addToFront) {
      var e = new Error('stream.push() after EOF');
      stream.emit('error', e);
    } else if (state.endEmitted && addToFront) {
      var _e = new Error('stream.unshift() after end event');
      stream.emit('error', _e);
    } else {
      var skipAdd;
      if (state.decoder && !addToFront && !encoding) {
        chunk = state.decoder.write(chunk);
        skipAdd = !state.objectMode && chunk.length === 0;
      }

      if (!addToFront) state.reading = false;

      // Don't add to the buffer if we've decoded to an empty string chunk and
      // we're not in object mode
      if (!skipAdd) {
        // if we want the data now, just emit it.
        if (state.flowing && state.length === 0 && !state.sync) {
          stream.emit('data', chunk);
          stream.read(0);
        } else {
          // update the buffer info.
          state.length += state.objectMode ? 1 : chunk.length;
          if (addToFront) state.buffer.unshift(chunk);else state.buffer.push(chunk);

          if (state.needReadable) emitReadable(stream);
        }
      }

      maybeReadMore(stream, state);
    }
  } else if (!addToFront) {
    state.reading = false;
  }

  return needMoreData(state);
}

// if it's past the high water mark, we can push in some more.
// Also, if we have no data yet, we can stand some
// more bytes. This is to work around cases where hwm=0,
// such as the repl. Also, if the push() triggered a
// readable event, and the user called read(largeNumber) such that
// needReadable was set, then we ought to push more, so that another
// 'readable' event will be triggered.
function needMoreData(state) {
  return !state.ended && (state.needReadable || state.length < state.highWaterMark || state.length === 0);
}

// backwards compatibility.
Readable.prototype.setEncoding = function (enc) {
  if (!StringDecoder) StringDecoder = require('string_decoder/').StringDecoder;
  this._readableState.decoder = new StringDecoder(enc);
  this._readableState.encoding = enc;
  return this;
};

// Don't raise the hwm > 8MB
var MAX_HWM = 0x800000;
function computeNewHighWaterMark(n) {
  if (n >= MAX_HWM) {
    n = MAX_HWM;
  } else {
    // Get the next highest power of 2 to prevent increasing hwm excessively in
    // tiny amounts
    n--;
    n |= n >>> 1;
    n |= n >>> 2;
    n |= n >>> 4;
    n |= n >>> 8;
    n |= n >>> 16;
    n++;
  }
  return n;
}

// This function is designed to be inlinable, so please take care when making
// changes to the function body.
function howMuchToRead(n, state) {
  if (n <= 0 || state.length === 0 && state.ended) return 0;
  if (state.objectMode) return 1;
  if (n !== n) {
    // Only flow one buffer at a time
    if (state.flowing && state.length) return state.buffer.head.data.length;else return state.length;
  }
  // If we're asking for more than the current hwm, then raise the hwm.
  if (n > state.highWaterMark) state.highWaterMark = computeNewHighWaterMark(n);
  if (n <= state.length) return n;
  // Don't have enough
  if (!state.ended) {
    state.needReadable = true;
    return 0;
  }
  return state.length;
}

// you can override either this method, or the async _read(n) below.
Readable.prototype.read = function (n) {
  debug('read', n);
  n = parseInt(n, 10);
  var state = this._readableState;
  var nOrig = n;

  if (n !== 0) state.emittedReadable = false;

  // if we're doing read(0) to trigger a readable event, but we
  // already have a bunch of data in the buffer, then just trigger
  // the 'readable' event and move on.
  if (n === 0 && state.needReadable && (state.length >= state.highWaterMark || state.ended)) {
    debug('read: emitReadable', state.length, state.ended);
    if (state.length === 0 && state.ended) endReadable(this);else emitReadable(this);
    return null;
  }

  n = howMuchToRead(n, state);

  // if we've ended, and we're now clear, then finish it up.
  if (n === 0 && state.ended) {
    if (state.length === 0) endReadable(this);
    return null;
  }

  // All the actual chunk generation logic needs to be
  // *below* the call to _read. The reason is that in certain
  // synthetic stream cases, such as passthrough streams, _read
  // may be a completely synchronous operation which may change
  // the state of the read buffer, providing enough data when
  // before there was *not* enough.
  //
  // So, the steps are:
  // 1. Figure out what the state of things will be after we do
  // a read from the buffer.
  //
  // 2. If that resulting state will trigger a _read, then call _read.
  // Note that this may be asynchronous, or synchronous. Yes, it is
  // deeply ugly to write APIs this way, but that still doesn't mean
  // that the Readable class should behave improperly, as streams are
  // designed to be sync/async agnostic.
  // Take note if the _read call is sync or async (ie, if the read call
  // has returned yet), so that we know whether or not it's safe to emit
  // 'readable' etc.
  //
  // 3. Actually pull the requested chunks out of the buffer and return.

  // if we need a readable event, then we need to do some reading.
  var doRead = state.needReadable;
  debug('need readable', doRead);

  // if we currently have less than the highWaterMark, then also read some
  if (state.length === 0 || state.length - n < state.highWaterMark) {
    doRead = true;
    debug('length less than watermark', doRead);
  }

  // however, if we've ended, then there's no point, and if we're already
  // reading, then it's unnecessary.
  if (state.ended || state.reading) {
    doRead = false;
    debug('reading or ended', doRead);
  } else if (doRead) {
    debug('do read');
    state.reading = true;
    state.sync = true;
    // if the length is currently zero, then we *need* a readable event.
    if (state.length === 0) state.needReadable = true;
    // call internal read method
    this._read(state.highWaterMark);
    state.sync = false;
    // If _read pushed data synchronously, then `reading` will be false,
    // and we need to re-evaluate how much data we can return to the user.
    if (!state.reading) n = howMuchToRead(nOrig, state);
  }

  var ret;
  if (n > 0) ret = fromList(n, state);else ret = null;

  if (ret === null) {
    state.needReadable = true;
    n = 0;
  } else {
    state.length -= n;
  }

if (state.length === 0) {

|

||||

// If we have nothing in the buffer, then we want to know

|

||||